ARM Challenging Intel in the Server Market: An Overview

by Johan De Gelas on December 16, 2014 10:00 AM EST

The ARM Based Challengers

Calxeda, AppliedMicro and ARM – in that order – have been talking about ARM based servers for years now. There were rumors about Facebook adopting ARM servers back in 2010.

Calxeda was the first to release a real server, the Boston Viridis, launched back in the beginning of 2013. The Calxeda ECX-1000 was based on a quad-core Cortex-A9 with 4MB L2. It was pretty slow in most workloads, but it was incredibly energy efficient. We found it to be a decent CPU for low-end web workloads. Intel's alternative, the S1260, was in theory faster, but it was outperformed in real server workloads by 20-40% and needed twice as much power (15W versus 8.3W).
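To put the two figures above together, here is a minimal sketch of the implied performance/watt gap. It assumes that "outperformed by 20-40%" means the ECX-1000 delivered 1.2x to 1.4x the throughput of the S1260, and that the quoted 8.3W and 15W are comparable power measurements; the function name and constants are illustrative only.

```python
# Figures quoted in the text. Reading "outperformed by 20-40%" as a
# 1.2x-1.4x throughput ratio is an ASSUMPTION made for this sketch.
ECX1000_WATTS = 8.3
S1260_WATTS = 15.0


def perf_per_watt_advantage(speedup: float,
                            fast_watts: float = ECX1000_WATTS,
                            slow_watts: float = S1260_WATTS) -> float:
    """Return how many times better the faster chip's performance/watt is.

    speedup is the throughput of the faster chip divided by the slower one.
    """
    return speedup * (slow_watts / fast_watts)


if __name__ == "__main__":
    for speedup in (1.2, 1.4):
        ratio = perf_per_watt_advantage(speedup)
        print(f"{speedup:.1f}x throughput -> {ratio:.2f}x performance/watt")
```

Under those assumptions the ECX-1000 comes out at a bit more than twice the performance/watt of the S1260, which is in line with the "incredibly energy efficient" verdict.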

Unfortunately, the single-threaded performance of the Cortex-A9 was too low. As a result, you needed quite a bit of expensive hardware to compete with a simple dual socket low power Xeon running VMs. About 20 micro server nodes (5 daughter cards), or 80 cores, were necessary to compete with two octal-core Xeons. The fact that we could use 24 nodes, or 96 cores, made the Calxeda based server faster, but the BOM (Bill of Materials) attached to so much hardware was high.
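The node and core counts follow from simple multiplication, sketched below. The four cores per node come from the quad-core ECX-1000; the four nodes per daughter card is inferred from "20 nodes (5 daughter cards)" and should be treated as an assumption, as should the helper name.

```python
CORES_PER_NODE = 4   # one quad-core ECX-1000 SoC per node
NODES_PER_CARD = 4   # ASSUMPTION: inferred from "20 nodes (5 daughter cards)"
XEON_CORES = 2 * 8   # two octal-core Xeons


def cluster_size(nodes: int) -> dict:
    """Return the daughter card and core count for a number of nodes."""
    cards = -(-nodes // NODES_PER_CARD)  # ceiling division
    return {"nodes": nodes, "cards": cards, "cores": nodes * CORES_PER_NODE}


if __name__ == "__main__":
    for nodes in (20, 24):
        size = cluster_size(nodes)
        per_xeon_core = size["cores"] / XEON_CORES
        print(f"{size['nodes']} nodes = {size['cards']} cards = "
              f"{size['cores']} cores "
              f"({per_xeon_core:.1f} Cortex-A9 cores per Xeon core)")
```

In other words, roughly five Cortex-A9 cores were needed per Xeon core just to break even, which is where the high BOM comes from.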

While the Calxeda ECX-1000 could compete on performance/watt, it could not compete on performance per dollar. Also, the 4GB RAM limit per node made it unattractive for several markets such as web caching. As a result, Calxeda was relegated to a few niche markets such as the low end storage market where it had some success, but it was not enough. Calxeda ran out of venture capital, and a promising story ended too soon, unfortunately.

AppliedMicro X-Gene

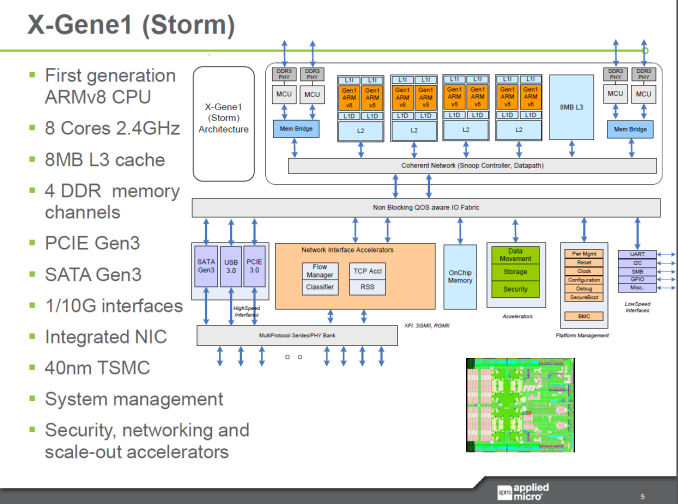

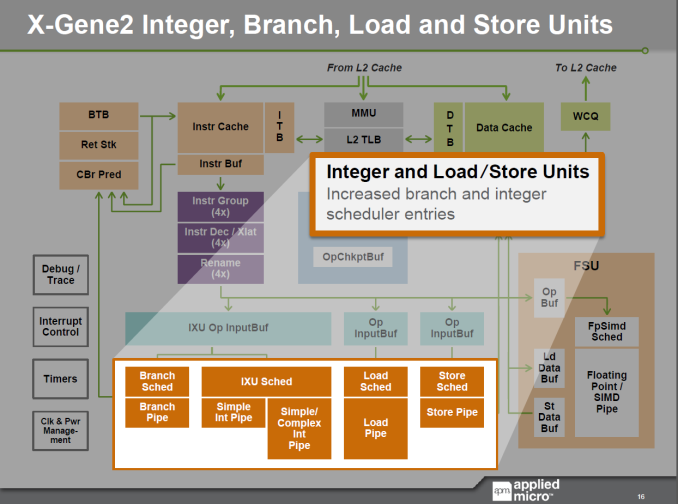

Just recently, AppliedMicro showed off their X-Gene ARM SoCs, but those are 40nm SoCs. The 28nm "ShadowCat" X-Gene 2 is due in the first half of 2015. Just like the Atom C2000, the AppliedMicro X-Gene ARM SoC has four pairs of cores that share an L2 cache. However, the similarity ends there. The core is a lot beefier and it features 4-wide issue with an execution backend with four integer pipelines and three FP pipelines (one 128-bit FP, one Load, one Store). The 2.4GHz octal-core X-Gene also has a respectable 8MB L3 cache and can access up to four memory channels, with an integrated dual 10Gb Ethernet interface. In other words, the X-Gene is made to go after the Xeon E3, not the Atom C2000.

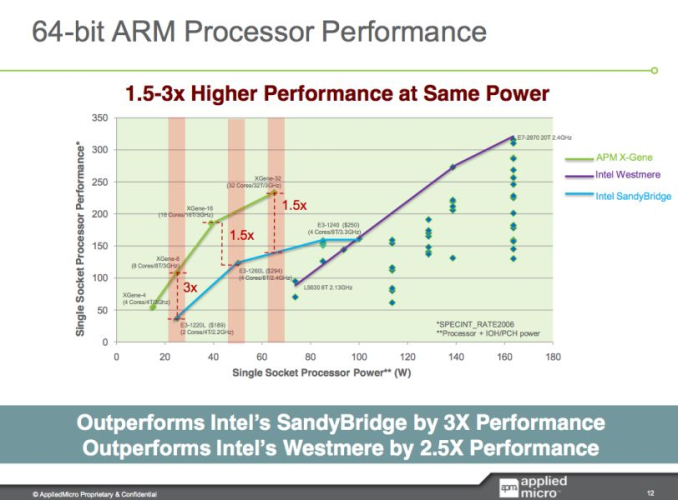

Of course, the AppliedMicro chip has been delayed many times. There were already performance announcements in 2011. The X-Gene1 8-core at 3GHz was supposed to be slightly slower than a quad-core Xeon E3-1260L "Sandy Bridge" at 2.4GHz in SPECint_rate2006.

Considering that the Haswell E3 is about 15-17% faster clock for clock, performance should be around that of a Xeon E3-1240L v3 at 2GHz. But the X-Gene1 only reached 2.4GHz and not 3GHz, so it looks like an E3-1240L v3 will probably outperform the new challenger by a considerable margin. The E3-1260L (v1) was a 45W chip and the E3-1240L v3 is a 25W TDP chip, and as a result we also expect the performance/watt of an E3-1240L v3 to be considerably better. Back in 2011, the SoC was expected to ship in late 2012 and have a two-year lead on the competition. It turned out to be two months.
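The back-of-the-envelope estimate above can be written out as a short sketch. It rests on two simplifications that are not from the original announcements: that the 15-17% clock-for-clock gain applies uniformly, and that X-Gene1 performance scales linearly with its own clock speed. The function names are illustrative.

```python
def equivalent_haswell_ghz(sandy_bridge_ghz: float,
                           clock_for_clock_gain: float) -> float:
    """Haswell clock that matches a Sandy Bridge part at the given clock."""
    return sandy_bridge_ghz / (1.0 + clock_for_clock_gain)


def rescale_for_actual_clock(reference_ghz: float,
                             planned_ghz: float,
                             actual_ghz: float) -> float:
    """Shrink the matching Xeon clock in proportion to the clock shortfall.

    ASSUMPTION: the challenger's performance scales linearly with its clock.
    """
    return reference_ghz * (actual_ghz / planned_ghz)


if __name__ == "__main__":
    for gain in (0.15, 0.17):
        # The 3GHz X-Gene1 was projected near a 2.4GHz Sandy Bridge E3.
        at_planned = equivalent_haswell_ghz(2.4, gain)
        # The shipping part only reached 2.4GHz instead of 3GHz.
        at_actual = rescale_for_actual_clock(at_planned, 3.0, 2.4)
        print(f"gain {gain:.0%}: 3GHz part ~ Haswell at {at_planned:.2f}GHz, "
              f"2.4GHz part ~ Haswell at {at_actual:.2f}GHz")
```

Since the original projection was "slightly slower than" the Sandy Bridge part, these numbers are best read as an upper bound: a 2.4GHz X-Gene1 would land near a Haswell E3 at well under 2GHz, below the E3-1240L v3's clock.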

Only a thorough test like our Calxeda review will really show what the X-Gene can do, but it is clear that AppliedMicro needs the X-Gene2 to be competitive. If AppliedMicro executes well with X-Gene2, it could get ahead once again... this time hopefully with a lead of more than two months.

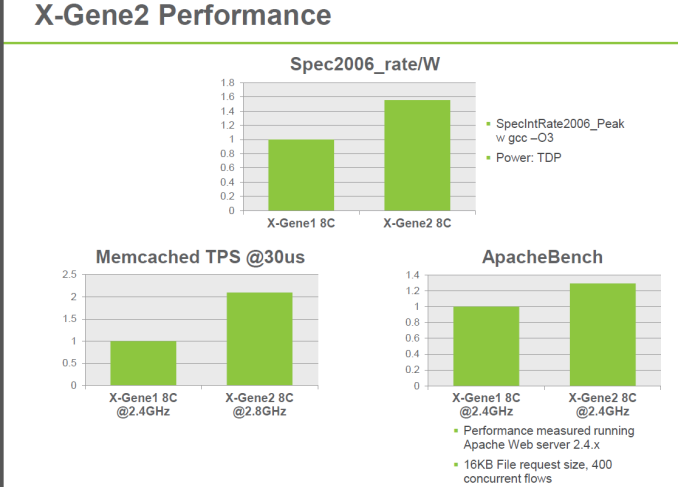

Indeed, early next year, things could get really interesting: the X-Gene2 will double the number of cores to 16 (at 2.4GHz) or up the clock speed to 2.8GHz (8 cores) courtesy of TSMC's 28nm process technology. The X-Gene2 is supposed to offer 50% more performance/watt with the same number of cores.

AppliedMicro also announced the Skylark architecture inside X-Gene3. Courtesy of TSMC's 16nm node, the chip should run at up to 3GHz or have up to 64 cores. The chip should appear in 2016, but you'll forgive us for saying that we first want to see and review the X-Gene2 before we can be impressed with the X-Gene3 specs. We have seen too many vendors with high numbers on PowerPoint presentations that don't pan out in the real world. Nevertheless, the X-Gene2 looks very promising and is already running software. It just has to find a place in a real server in a timely fashion.

78 Comments


beginner99 - Tuesday, December 16, 2014 - link

Agree. I just don't see it. What wasn't mentioned, or I might have missed, is Intel's turbo technology. Does ARM have anything similar? Single-threaded performance matters. If a website takes double the time to be built by the server, the user can notice this. And given the complexity of modern web sites this is IMHO a real issue. Latency or "service time" is greatly affected by single-threaded performance. That's why virtualization is great. Put tons of low-usage stuff on the same physical server and yet each request profits from the single-threaded performance.

Now these ARM guys are targeting this high single-threaded performance, but why would any company change? The whole software stack would have to change as well, and don't forget the software usually costs way, way more than the hardware it runs on. So if you save 10% on the SoC you maybe save less than 1% on the total BOM including software. They can't win on price, and on performance/watt Intel still has the best process. So no, I don't see it except for niche markets like these MIPS SoCs from Cavium.

Ratman6161 - Wednesday, December 17, 2014 - link

"Xeon performance at ridiculous prices" I just don't get the "ridiculous prices" comment. To me, it seems like hardware these days is so cheap they are practically giving it away. I remember in the days of NT 4.0 Servers we paid $40K each for dual socket Dell systems with 16 GB Ram.A few years later we were doing Windows 2000 Server on Dell 2850's that were less than half the price.

Then in 2007 we went the VMWare route on Dell 2950's where the price actually went up to $23K but we were getting dual sockets/8 cores and 32GB of RAM so they made the $40K servers we bought years before look like toys.

Four years later we got R-710's that were dual socket/12 cores and 64GB of RAM and made the $23K 2950's look like clunkers but the price was once again almost half at about $12K.

Today we are looking at replacing the R-710's with the latest generation which will be even more cores and more RAM for about the same price.

So to me, the prices don't seem ridiculous at all. The servers themselves now make up only a fraction of our hardware costs with the expensive items being SAN storage. But that too is a lot cheaper. We are looking at going from our two SANs with 4Gb Fibre Channel connections to a single SAN with 10Gb Ethernet and more storage than the two old units combined...but still costing less than the old SANs did for just one. So prices there are expensive but less than half of what we paid in 2007 for more storage.

The real costs in the environment are in software licensing, and no, I'm not talking about Microsoft or even VMware. Licensing those products is chump change compared to the Enterprise Software crooks...that's where the real costs are. The infrastructure of servers, storage and "plumbing" sorts of software like Windows Server and VMware are cheap in comparison.

mrdude - Tuesday, December 16, 2014 - link

Great article, Johan

I think the last page really describes why so many people, myself included, feel that ARM servers/vendors have a very good chance of entrenching themselves in the market. Server workloads are more complex and varied today than they ever have been in the past and it isn't high volume either: the Facebook example is a good one. These companies buy hardware by the truckload and can benefit immensely from customization that Intel may not have on offer.

To add to that, what wasn't mentioned is that ARM, due to its 'license everything' business model, provides these same companies the opportunity to buy ready-made bits of uArch and, with a significantly smaller investment, build their own as-close-to-ideal SoC/CPU/co-processor that they need.

Competition is a great thing for everyone.

JohanAnandtech - Tuesday, December 16, 2014 - link

True. Although it seems that only AMD really went for the "license almost everything" model of ARM.

mrdude - Tuesday, December 16, 2014 - link

Yep. And that's likely due to the budget/timing constraints. I think they were gunning for the 'first to market' branding but they couldn't meet their own timelines. Something of a trend with that company. I'm curious as to why we haven't heard a peep from AMD or partners regarding performance or perf-per-watt. IIRC, we were supposed to see Seattle boards in Q3 of 2014.

I also feel like ARM isn't going to stop at the interconnect. There's still quite a bit of opportunity for them to expand in this market.

cjs150 - Tuesday, December 16, 2014 - link

Ultimately, my interest in servers is limited but I would like a simple home server that would tie all my computers, NAS, tablets and the other bits and bobs that a geek household has.

witeken - Tuesday, December 16, 2014 - link

Anyone interested in Intel's data center strategy can watch Diane Bryant's recent presentation (including PDF): http://intelstudios.edgesuite.net/im/2014/live_im.... The Q&A from 2013 also has some comments about ARM servers: http://intelstudios.edgesuite.net/im/2013/live_im....

Kevin G - Tuesday, December 16, 2014 - link

"Now combine this with the fact that Windows on Alpha was available." - Except that Windows NT was available for Alpha. There was a beta for Windows 2000 in both 32 bit and 64 bit flavors for the curious.I disagree with the reason why Intel beat the RISC players. Two of the big players were defeated by corporate politics: Alpha and PA-RISC were under the control of HP who was planning to migrate to Itanium. That leaves POWER, SPARC, MIPs and Intel's own Itanium architecture at the turn of the millennium. Of those, POWER and SPARC are still around as they continue to execute. So the only two victims that can be claimed by better execution is MIPs and Intel's own Itanium.

While IBM and Oracle are still executing on hardware, the Unix market as a whole has decreased in size. The software side isn't as strong as it used to be. Linux has risen and proven itself to be a strong competitor to the traditional Unix distribution. Open source software has emerged to fill many of the roles Unix platforms were used for. Furthermore, many of these applications like Hadoop and Cassandra are designed to be clustered and tolerate node failures. No need to spend extra money on big iron hardware if the software doesn't need that level of RAS for uptime. The general lower cost of Linux and open source software (though they're not free due to the need for support) combined with further tightening of budgets during the great recession has made many businesses reconsider their Unix platforms.

JohanAnandtech - Tuesday, December 16, 2014 - link

My main argument was that the RISC market was fragmented, and not comparable to what the x86 market is now (Intel dominating with a very large software base).

While I agree with many of your points, you cannot say that SPARC is not a victim. In the '90s, Sun had a very broad product range from entry-level workstation to high-end server. The same is true for the Power CPUs.

Kevin G - Wednesday, December 17, 2014 - link

The RISC market was fragmented on both hardware and software. The greatest example of this would be HP, which had HP-UX, Tru64, OpenVMS, and NonStop as operating systems and tried to get them all migrated to a common hardware platform: Itanium. How each platform handled backwards compatibility with their RISC roots was different (and Tru64 was killed in favor of HP-UX).

The midrange RISC workstation suffered the same fate as the dual socket x86 workstation market: good enough hardware and software existed for less. The race to 1GHz between Intel and AMD cut out the performance advantage RISC platforms carried. Not to say that the RISC chips didn't improve performance, but vendors never took steps to improve their price. Windows 2000 and the rise of Linux early in the 2000's gave x86 a software price advantage too while having good enough reliability.

Sun's hardware business did suffer some horrible delays which helped lead the company into Oracle's acquisition. Notable was the Rock chip, which featured out-of-order execution but also out-of-order instruction retirement. Sun was never able to validate any prototype silicon and ship it to customers.