The Google Nexus 9 Review

by Joshua Ho & Ryan Smith on February 4, 2015 8:00 AM EST- Posted in

- Tablets

- HTC

- Project Denver

- Android

- Mobile

- NVIDIA

- Nexus 9

- Lollipop

- Android 5.0

SPECing Denver's Performance

Finally, before diving into our look at Denver in the real world on the Nexus 9, let’s take a look at a few performance considerations.

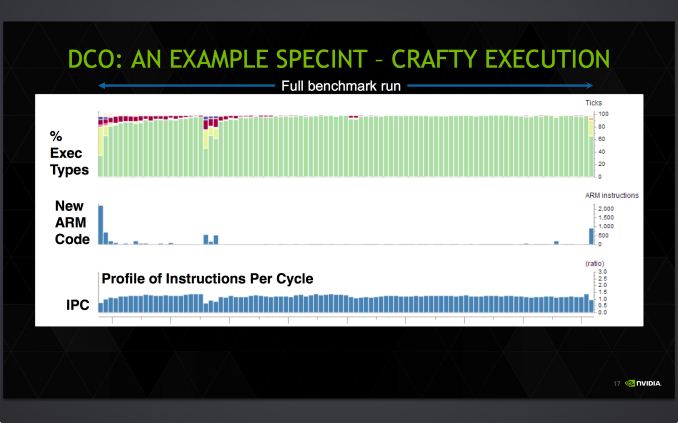

With so much of Denver’s performance riding on the DCO, starting with the DCO we have a slide from NVIDIA profiling the execution of SPECInt2000 on Denver. In it NVIDIA showcases how much time Denver spends on each type of code execution – native ARM code, the optimizer, and finally optimized code – along with an idea of the IPC they achieve on this benchmark.

What we find is that as expected, it takes a bit of time for Denver’s DCO to kick in and produce optimized native code. At the start of the benchmark execution with little optimized code to work with, Denver initially executes ARM code via its ARM decoder, taking a bit of time to find recurring code. Once it finds that recurring code Denver’s DCO kicks in – taking up CPU time itself – as the DCO begins replacing recurring code segments with optimized, native code.

In this case the amount of CPU time spent on the DCO is never too great of a percentage of time, however NVIDIA’s example has the DCO noticeably running for quite some time before it finally settles down to an imperceptible fraction of time. Initially a much larger fraction of the time is spent executing ARM code on Denver due to the time it takes for the optimizer to find recurring code and optimize it. Similarly, another spike in ARM code is found roughly mid-run, when Denver encounters new code segments that it needs to execute as ARM code before optimizing it and replacing it with native code.

Meanwhile there’s a clear hit to IPC whenever Denver is executing ARM code, with Denver’s IPC dropping below 1.0 whenever it’s executing large amounts of such code. This in a nutshell is why Denver’s DCO is so important and why Denver needs recurring code, as it’s going to achieve its best results with code it can optimize and then frequently re-use those results.

Also of note though, Denver’s IPC per slice of time never gets above 2.0, even with full optimization and significant code recurrence in effect. The specific IPC of any program is going to depend on the nature of the code, but this serves as a good example of the fact that even with a bag full of tricks in the DCO, Denver is not going to sustain anything near its theoretical maximum IPC of 7. Individual VLIW instructions may hit 7, but over any period of time if a lack of ILP in the code itself doesn’t become the bottleneck, then other issues such as VLIW density limits, cache flushes, and unavoidable memory stalls will. The important question is ultimately whether Denver’s IPC is enough of an improvement over Cortex A15/A57 to justify both the power consumption costs and the die space costs of its very wide design.

NVIDIA's example also neatly highlights the fact that due to Denver’s favoritism for code reuse, it is in a position to do very well in certain types of benchmarks. CPU benchmarks in particular are known for their extended runs of similar code to let the CPU settle and get a better sustained measurement of CPU performance, all of which plays into Denver’s hands. Which is not to say that it can’t also do well in real-world code, but in these specific situations Denver is well set to be a benchmark behemoth.

To that end, we have also run our standard copy of SPECInt2000 to profile Denver’s performance.

| SPECint2000 - Estimated Scores | ||||||

| K1-32 (A15) | K1-64 (Denver) | % Advantage | ||||

| 164.gzip |

869

|

1269

|

46%

|

|||

| 175.vpr |

909

|

1312

|

44%

|

|||

| 176.gcc |

1617

|

1884

|

17%

|

|||

| 181.mcf |

1304

|

1746

|

34%

|

|||

| 186.crafty |

1030

|

1470

|

43%

|

|||

| 197.parser |

909

|

1192

|

31%

|

|||

| 252.eon |

1940

|

2342

|

20%

|

|||

| 253.perlbmk |

1395

|

1818

|

30%

|

|||

| 254.gap |

1486

|

1844

|

24%

|

|||

| 255.vortex |

1535

|

2567

|

67%

|

|||

| 256.bzip2 |

1119

|

1468

|

31%

|

|||

| 300.twolf |

1339

|

1785

|

33%

|

|||

Given Denver’s obvious affinity for benchmarks such as SPEC we won’t dwell on the results too much here. But the results do show that Denver is a very strong CPU under SPEC, and by extension under conditions where it can take advantage of significant code reuse. Similarly, because these benchmarks aren’t heavily threaded, they’re all the happier with any improvements in single-threaded performance that Denver can offer.

Coming from the K1-32 and its Cortex-A15 CPU to K1-64 and its Denver CPU, the actual gains are unsurprisingly dependent on the benchmark. The worst case scenario of 176.gcc still has Denver ahead by 17%, meanwhile the best case scenario of 255.vortex finds that Denver bests A15 by 67%, coming closer than one would expect towards doubling A15's performance entirely. The best case scenario is of course unlikely to occur in real code, though I’m not sure the same can be said for the worst case scenario. At the same time we find that there aren’t any performance regressions, which is a good start for Denver.

If nothing else it's clear that Denver is a benchmark monster. Now let's see what it can do in the real world.

169 Comments

View All Comments

MonkeyPaw - Wednesday, February 4, 2015 - link

Maybe I missed it, but can you comment on browser performance in terms of tabs staying in memory? I had a Note 10.1 2014 for a brief time, and I found that tabs had to reload/refresh constantly, despite the 3GB of RAM. Has this gotten any better with the Nexus and Lollipop? Through research, I got the impression it was a design choice in Chrome, but I wondered if you could figure out any better. Say what you want about Windows RT, but my old Surface 2 did a good job of holding more tabs in RAM on IE.blzd - Friday, February 6, 2015 - link

Lollipop has memory management issues right now, as was mentioned in this very article. Apps are cleared from memory frequently after certain amount of up time and reboots are required.mukiex - Wednesday, February 4, 2015 - link

Hey Josh,Awesome review. As I'm sure others have noted, the Denver part alone was awesome to read about! =D

gixxer - Thursday, February 5, 2015 - link

There have been reports of a hardware refresh to address the buttons, light bleed, and flexing of the back cover. There was no mention of this in this article. What was the build date on the model that was used for this review? Is there any truth to the hardware refresh?Also, Lollipop is supposed to be getting a big update to 5.1 very soon. Will this article be updated with the new Lollipop build results? Will FDE have the option to be turned off in 5.1?

blzd - Friday, February 6, 2015 - link

Rumors. Unfounded rumors with zero evidence besides a Reddit post comparing an RMA device. All that proved was RMA worked as intended.The likelyhood of a hardware revision after 1 month on the market is basically 0%. The same goes for the N5 "revision" after 1 month which was widely reported and 100% proven to be false.

konondrum - Thursday, February 5, 2015 - link

My take from this article is that the Shield Tablet is probably the best value in the tablet market at the moment. I was really shocked to see the battery life go down significantly with Lollipop in your benchmarks, because in my experience battery life has been noticeably better than it was at launch.OrphanageExplosion - Thursday, February 5, 2015 - link

I seem to post this on every major mobile review you do, but can you please get it right with regards to 3DMark Physics? It's a pure CPU test (so maybe it should be in the CPU benches) and these custom dual-core efforts, whether it's Denver or Cyclone, always seem to perform poorly.There is a reason for that, and it's not about core count. Futuremark has even gone into depth in explaining it. In short, there's a particular type of CPU workload test where these architectures *don't* perform well - and it's worth exploring it because it could affect gaming applications.

http://www.futuremark.com/pressreleases/understand...

When I couldn't understand the results I was getting from my iPad Air, I mailed Futuremark for an explanation and I got one. Maybe you could do the same rather than just write off a poor result?

hlovatt - Thursday, February 5, 2015 - link

Really liked the Denver deep dive and we got a bonus in-depth tablet review. Thanks for a great article.behrangsa - Thursday, February 5, 2015 - link

Wow! Even iPad 4 is faster than K1? I remember nVidia displaying some benchmarks putting Tegra K1 far ahead of Apple's A8X.behrangsa - Thursday, February 5, 2015 - link

Anyway to edit comments? Looks like the K1 benchmark was against the predecessor to A8X, the A7.