The Google Nexus 9 Review

by Joshua Ho & Ryan Smith on February 4, 2015 8:00 AM EST

Posted in: Tablets, HTC, Project Denver, Android, Mobile, NVIDIA, Nexus 9, Lollipop, Android 5.0

SoC Architecture: NVIDIA's Denver CPU

It admittedly does a bit of a disservice to the rest of the Nexus 9 both in terms of hardware and as a complete product, but there’s really no getting around the fact that the highlight of the tablet is its NVIDIA-developed SoC. Or to be more specific, the NVIDIA-developed Denver CPUs within the SoC, and the fact that the Nexus 9 is the first product to ship with a Denver CPU.

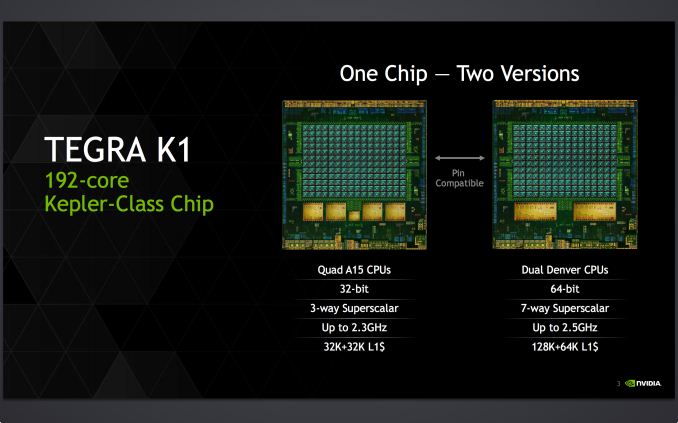

NVIDIA for their part is no stranger to the SoC game, now having shipped 5 generations of Tegra SoCs (with more on their way). Since the beginning NVIDIA has been developing their own GPUs and then integrating those into their Tegra SoCs, using 3rd party ARM cores and other 1st party and 3rd party designs to fully flesh out Tegra. However even though NVIDIA is already designing some of their own IP, there’s still a big leap to be made from using licensed ARM cores to using your own ARM cores, and with Denver NVIDIA has become just the second company to release their own ARMv8 design for consumer SoCs.

For longtime readers Denver may feel like a long time coming, and that perception is not wrong. NVIDIA announced Denver almost 4 years ago, back at CES 2011, where at the time they made a broad announcement about developing their own 64-bit ARM core for use in a wide range of devices, ranging from mobile to servers. A lot has happened in the SoC space since 2011, and given NVIDIA’s current situation Denver likely won’t be quite as broad a product as they first pitched it. But as an A15 replacement for the same tablet and high performance embedded markets that the TK1-32 has found a home in, the Denver-based TK1-64 should fit right in.

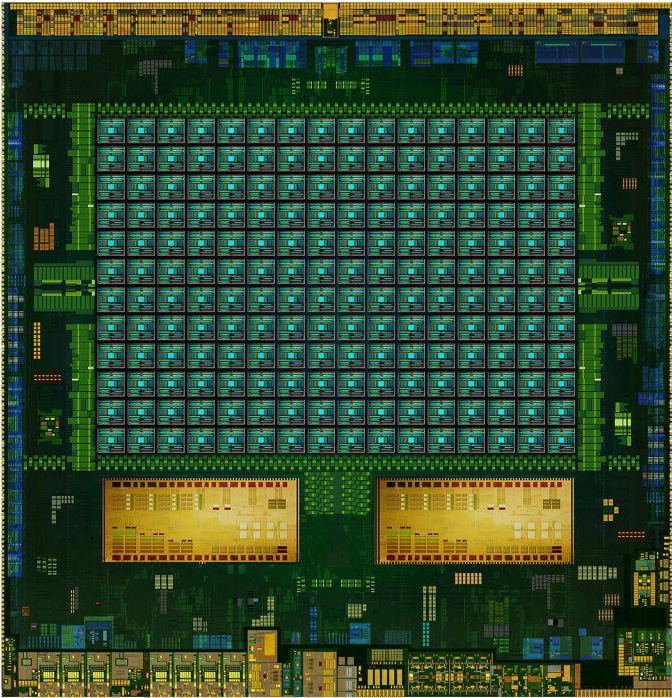

K1-64 Die Shot Mock-up (NVIDIA)

Denver comes at an interesting time for NVIDIA and for the ARM SoC industry as a whole. Apple’s unexpected launch of the ARMv8 capable Cyclone core in 2013 beat competing high-performance ARMv8 designs by nearly a year. Overall, Apple set a very high bar for performance and power efficiency that is not easily matched and that has greatly impacted the development and deployment schedules of other ARMv8 SoCs. At the same time, because Cyclone and its derivatives are limited to iOS devices, the high-performance Android market is currently served by a mix of ARMv7 designs (A15, Krait, etc.) and the just recently arrived A57 and Denver CPUs.

Showcasing the full scope of the ARM architecture license and how many different designs can execute the same instruction set, none of these ARMv8 CPUs are all that much alike. Thanks to its wide and conservatively clocked design, Apple’s Cyclone ends up looking a lot like what a recent Intel Core processor would look like if it were executing ARM instead of x86. Meanwhile ARM’s A57 design is (for lack of a better term) very ARMy, following ARM’s own power efficient design traditions and further iterating on ARM’s big.LITTLE philosophy to pair up high performance A57 and moderate performance A53 cores to allow a SoC to cover a wide power/performance curve. And finally we have Denver, perhaps the most interesting and certainly least conventional design, forgoing the established norms of Out of Order Execution (OoOE) in favor of a very wide in-order design backed by an ambitious binary translation and optimization scheme.

Counting Cores: Why Denver?

To understand Denver it’s best to start with the state of the ARM device market, and NVIDIA’s goals in designing their own CPU core. In the ARM SoC space, much has been made of core counts, both as a marketing vehicle and for their value to overall performance. Much like the PC space a decade prior, when multi-core processors became viable they were of almost immediate benefit. Even if individual applications couldn’t yet make use of multiple cores, having a second core meant that applications and OSes were no longer time-sharing a single core, which came with its own performance benefits. The OS could do its work in the background without interrupting applications as much, and greedy applications didn’t need to fight with the OS or other applications for basic resources.

However, also like the PC space, the benefits of additional cores began to taper off with each additional core. One could still benefit from 4 cores over 2 cores, but unless software was capable of putting 3-4 cores to very good use, generally one would find that performance didn’t scale well with the cores. Compounding matters in the mobile ecosystem, the vast majority of devices run apps in a “monolithic” fashion with only one app active and interacting with the user at any given point in time. This meant that in the absence of apps that could use 3-4 cores, there weren’t nearly as many situations in which multitasking could be employed to find work for the additional cores. The end result is that it has been difficult for mobile devices to consistently saturate an SoC with more than a couple of cores.
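The tapering described above is commonly modeled with Amdahl's law. The article does not name that model, so treat the following as an illustrative sketch under that assumption and not as a measurement of any SoC. The function name `amdahl_speedup` and the parallel fractions used are hypothetical, chosen only to show the shape of the curve.

```python
def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Ideal speedup over a single core under Amdahl's law.

    parallel_fraction is the share of the workload that can be spread
    across cores (an assumed input, not a measured value).
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    # The serial part runs at the same speed; only the parallel part is divided.
    return 1.0 / (serial_fraction + parallel_fraction / cores)


if __name__ == "__main__":
    # Hypothetical workloads: mostly serial, mixed, and mostly parallel.
    for fraction in (0.3, 0.6, 0.9):
        row = ", ".join(
            f"{n} cores: {amdahl_speedup(fraction, n):.2f}x" for n in (1, 2, 4, 8)
        )
        print(f"parallel fraction {fraction:.0%} -> {row}")
```

Under this model, a workload that is only half parallel gains less from going from 2 to 4 cores than it did from going from 1 to 2, which matches the diminishing returns the text describes.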

Meanwhile the Cortex family of designs coming from ARM has generally allowed high core counts. Cortex-A7 is absolutely tiny, and even the more comparable Cortex-A15 isn’t all that big on the 28nm process. Quad core A15 designs quickly came along, setting the stage for the high core count situations we previously discussed.

This brings us to NVIDIA’s goals with Denver. In part due to the issues feeding 4 cores, NVIDIA has opted for a greater focus on single-threaded performance than the ARM Cortex designs they used previously. Believing that fewer, faster cores will deliver better real-world performance and better power consumption, NVIDIA set out to build a bigger, wider CPU that would do just that. The result of this project was what NVIDIA awkwardly calls their first “super core,” Denver.

Though NVIDIA couldn’t have known it when Denver was announced in 2011, Denver in 2015 is in good company, which helps to prove that NVIDIA was right to focus on single-threaded performance over additional cores. Apple’s Cyclone designs have followed a very similar philosophy and the SoCs utilizing them remain the SoCs to beat, delivering chart-topping performance even with only 2 or 3 CPU cores. Deliver something similar in performance to Cyclone in the Android market and prove the performance and power benefits of 2 larger cores over 4 weaker cores, and NVIDIA would be well set in the high-end SoC marketplace.

Performance considerations aside, for NVIDIA there are additional benefits to rolling their own CPU core. First and foremost is that it reduces their royalty rate to ARM; ARM still gets a cut as part of their ISA license, but that cut is less than if you are also using ARM licensed cores. The catch of course is that NVIDIA needs to sell enough SoCs in the long run to pay for the substantial costs of developing a CPU, which means that along with the usual technical risks, there are some financial risks as well for developing your own CPU.

The second benefit to NVIDIA then is differentiation in a crowded SoC market. The SoC market has continued to shed players over the years, with players such as Texas Instruments and ST-Ericsson getting squeezed out of the market. With so many vendors using the same Cortex CPU designs, from a performance perspective their SoCs are similarly replaceable, making the risk of being the next TI all the greater. Developing your own CPU is not without risks as well – especially if it ends up underperforming the competition – but played right it means being able to offer a product with a unique feature that helps the SoC stand out from the crowd.

Finally, at the time NVIDIA announced Denver, NVIDIA also had plans to use Denver to break into the server space. With their Tesla HPC products traditionally paired with x86 CPUs, NVIDIA could never have complete control over the platform, or the greater share of revenue that would entail. Denver in turn would allow NVIDIA to offer their own CPU, capturing that market and being able to play off of the synergy of providing both the CPU and GPU. Since then, however, the OpenPOWER consortium happened, opening up IBM’s POWER CPU lineup to companies such as NVIDIA and allowing them to add features such as NVLink to POWER CPUs. In light of that, while NVIDIA has never officially written off Denver’s server ambitions, it seems likely that POWER has supplanted Denver as NVIDIA’s server CPU of choice.

169 Comments


dtgoodwin - Wednesday, February 4, 2015

I really appreciate the depth that this article has, however, I wonder if it would have been better to separate the in depth CPU analysis for a separate article. I will probably never remember to come back to the Nexus 9 review if I want to remember a specific detail about that CPU.

nevertell - Wednesday, February 4, 2015

Has nVidia exposed that they would provide a static version of the DCO so that app developers would be able to optimize their binaries at compile time? Or do these optimizations rely on the program state when they are being executed? From a pure academic point of view, it would be interesting to see the overhead introduced by the DCO when comparing previously optimized code without the DCO running and running the SoC as was intended.

Impulses - Wednesday, February 4, 2015

Nice in depth review as always, came a little late for me (I purchased one to gift it, which I ironically haven't done since the birthday is this month) but didn't really change much as far as my decision so it's all good... I think the last remark nails it: had the price point been just a little lower, most of the minor QC issues wouldn't have been blown up...

I don't know if $300 for 16GB was feasible (pretty much the price point of the smaller Shield), but $350 certainly was and Amazon was selling it for that much all thru Nov-Dec which is bizarre since Google never discounted it themselves.

I think they should've just done a single $350-400 32GB SKU, saved themselves a lot of trouble and people would've applauded the move (and probably whined for a 64GB but you can't please everyone). Or a combo deal with the keyboard, which HTC was selling at 50% at one point anyway.

Impulses - Wednesday, February 4, 2015

No keyboard review btw?

JoshHo - Thursday, February 5, 2015

We did not receive the keyboard folio for review.

treecats - Wednesday, February 4, 2015

Where is the comparison to NEXUS 10????

Maybe because Nexus 10's battery life is crap after 1 year of use!!!

Please come back and review it again when you have used it for a year.

treecats - Wednesday, February 4, 2015

My previous comment holds true for all the Nexus devices I own.

I had a Nexus 4, and currently have a Nexus 5 and a Nexus 10. All the Nexus devices I own have bad battery life after 1 year of use.

Google, fix the battery problem.

blzd - Friday, February 6, 2015

That tells me you are mistreating your batteries. You think it's a coincidence that it's happening to all your devices? Do you know how easy it is for batteries to degrade when overheating? Do you know every battery is rated for a certain number of charges only?

Mostly you want to avoid heat, especially while charging. Gaming while charging? That's killing the battery. GPS navigation while charging? Again, degrading the battery.

Each time you discharge and charge the battery you are using one of its charge cycles. So if you use the device a lot and charge it multiple times a day you will notice degradation after a year. This is not unique to Google devices.

grave00 - Sunday, February 8, 2015

I don't think you have the latest info on how battery charging vs battery life works.

hstewartanand - Wednesday, February 4, 2015

Even though I personally have 6 tablets (2 iPads, 2 Windows 8.1 and 2 Android), as a developer I find them technically inferior to an actual PC, except for the Windows 8.1 Surface Pro.

I recently purchased a Lenovo Y50 with an i7 4700 because I desired AVX2 video processing. To me ARM-based platforms will never replace PC devices for certain applications, like video processing and 3D graphics work.

I am a big fan of NVIDIA GPUs but don't care much for ARM CPUs. I do like the competition that it has given Intel to produce low-power CPUs for this market.

What I'd really like to see is a true technical benchmark that compares the true power of CPUs from ARM and Intel and ranks them. This includes using extended instructions like AVX2 on Intel CPUs.

Compare this with an equivalently configured NVIDIA GPU on an Intel CPU, and I would say ARM has a very long way to go.

But a lot depends on what you're doing with the device. I am currently typing this on a 4+ year old MacBook Air because it is easy and convenient. My other Windows 8.1 device (Lenovo Miix 2 8, Intel Atom Bay Trail) has roughly the same speed, but the MacBook Air is more convenient. My primary tablet is the iPad mini with Retina display; it is also convenient for email, Amazon and small stuff.

The problem with some benchmarks is that they may be optimized for one platform more than another and depend on OS components which may vary between OS environments. So ideally the tests need to be natively compiled for each CPU/GPU combination and take advantage of the hardware. I don't believe such a benchmark exists. Probably the best way to do this is to get developers interested in each platform to compete for the best score, with the code open source so there is no cheating. It would be interesting to see a ranking of machines from tablets, phones and laptops to even high performance Xeon machines. I also have an 8+ year old dual Xeon 5160 with an NVIDIA GTX 640 (the best I can get on this old machine) and I would bet it will blow away any of these ARM-based tablets. Performance-wise it is a little less than but close to my Lenovo Y50, if not doing video processing, because AVX2 is such a significant improvement.

In summary it is really hard to compare the performance of ARM vs Intel machines. But this review had some technical information that brought me back to my older days of writing assembly code on an OS, PC-MOS/386.