The NVIDIA GeForce GTX 980 Review: Maxwell Mark 2

by Ryan Smith on September 18, 2014 10:30 PM EST

Power, Temperature, & Noise

As always, last but not least is our look at power, temperature, and noise. Next to price and performance of course, these are some of the most important aspects of a GPU, due in large part to the impact of noise. All things considered, a loud card is undesirable unless there’s a sufficiently good reason – or sufficiently good performance – to ignore the noise.

Having already seen the Maxwell architecture in action with the GTX 750 series, the GTX 980 and its GM204 Maxwell 2 GPU have a very well regarded reputation to live up to. GTX 750 Ti shattered old energy efficiency marks, and we expect much the same of GTX 980. After all, NVIDIA tells us that they can deliver more performance than the GTX 780 Ti for less power than the GTX 680, and that will be no easy feat.

| GeForce GTX 980 Voltages | | |
|---|---|---|
| GTX 980 Boost Voltage | GTX 980 Base Voltage | GTX 980 Idle Voltage |
| 1.225v | 1.075v | 0.856v |

We’ll start as always with voltages, which in this case I think makes for one of the more interesting aspects of GTX 980. Despite the fact that GM204 is a pretty large GPU at 398mm² and is clocked at over 1.2GHz, NVIDIA is still promoting a TDP of just 165W. One way to curb power consumption is to build a processor wide-and-slow, and these voltage numbers are solid proof that NVIDIA has not done that.

With a load voltage of 1.225v, NVIDIA is driving GM204 as hard as (if not harder than) any of the Kepler GPUs. This means that all of NVIDIA’s power optimizations – the key to driving 5.2 billion transistors at under 165W – lie with other architectural optimizations the company has made. After all, at over 1.2v they certainly aren’t deriving any advantages from operating at low voltages.
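To make the wide-and-slow trade-off concrete, here is a minimal sketch using the common first-order approximation that dynamic power scales with unit count × voltage² × frequency. Only the 1.225v boost voltage and the roughly 1.2GHz clock come from this review; the "wide" configuration (twice the units, half the clock, 1.0v) is a hypothetical chosen purely for illustration, and the model ignores leakage and imperfect scaling.

```python
# Relative dynamic power under the first-order approximation
# P ~ units * V^2 * f. The "wide" figures are hypothetical, not GM204 specs.

def relative_dynamic_power(units: float, volts: float, ghz: float) -> float:
    """Dynamic power in arbitrary units; switched capacitance scales with unit count."""
    return units * volts ** 2 * ghz


def relative_throughput(units: float, ghz: float) -> float:
    """Idealized throughput, assuming perfectly parallel work: units * clock."""
    return units * ghz


# Narrow-and-fast: the measured boost voltage at roughly 1.2GHz.
fast = {"units": 1.0, "volts": 1.225, "ghz": 1.2}
# Wide-and-slow (assumed): twice the units, half the clock, lower voltage.
wide = {"units": 2.0, "volts": 1.0, "ghz": 0.6}

power_ratio = relative_dynamic_power(**wide) / relative_dynamic_power(**fast)
throughput_ratio = (
    relative_throughput(wide["units"], wide["ghz"])
    / relative_throughput(fast["units"], fast["ghz"])
)

print(f"throughput ratio (wide / fast): {throughput_ratio:.2f}")
print(f"dynamic power ratio (wide / fast): {power_ratio:.2f}")
```

Under these assumptions the wide design matches the fast one on throughput at roughly two-thirds of the dynamic power, which is the advantage NVIDIA is giving up by running GM204 at over 1.2v.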

Next up, let’s take a look at average clockspeeds. As we alluded to earlier, NVIDIA has maintained the familiar 80C default temperature limit for GTX 980 that we saw on all other high-end GPU Boost 2.0 enabled cards. Furthermore as a result of reinvesting most of their efficiency gains into acoustics, what we are going to see is that GTX 980 still throttles. The question then is by how much.

| GeForce GTX 980 Average Clockspeeds | |
|---|---|
| Max Boost Clock | 1252MHz |
| Metro: LL | 1192MHz |
| CoH2 | 1177MHz |
| Bioshock | 1201MHz |
| Battlefield 4 | 1227MHz |
| Crysis 3 | 1227MHz |
| TW: Rome 2 | 1161MHz |
| Thief | 1190MHz |
| GRID 2 | 1151MHz |
| Furmark | 923MHz |

What we find is that while our GTX 980 has an official boost clock of 1216MHz, our sustained benchmarks are often not able to maintain clockspeeds at or above that level. Of our games only Bioshock Infinite, Crysis 3, and Battlefield 4 maintain an average clockspeed over 1200MHz, with everything else falling to between 1151MHz and 1192MHz. This still ends up being above NVIDIA’s base clockspeed of 1126MHz – by nearly 100MHz at times – but it’s clear that unlike our 700 series cards NVIDIA is much more aggressively rating their boost clock. The GTX 980’s performance is still spectacular even if it doesn’t get to run over 1.2GHz all of the time, but I would argue that the boost clock metric is less useful this time around if it’s going to overestimate clockspeeds rather than underestimate. (ed: always underpromise and overdeliver)
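For readers who want to check the comparison themselves, the following sketch tabulates the average clockspeeds from the table above against the 1126MHz base clock and the 1216MHz official boost clock. The variable names are illustrative; the numbers are taken directly from this page.

```python
# Average clockspeeds (MHz) from the table above, compared against
# the base and official boost clocks quoted in the text.
BASE_CLOCK = 1126
OFFICIAL_BOOST = 1216

game_clocks = {
    "Metro: LL": 1192,
    "CoH2": 1177,
    "Bioshock": 1201,
    "Battlefield 4": 1227,
    "Crysis 3": 1227,
    "TW: Rome 2": 1161,
    "Thief": 1190,
    "GRID 2": 1151,
}
furmark_clock = 923  # pathological load, kept separate from the games

# Games whose average clock meets or exceeds the official boost clock.
at_or_above_boost = [g for g, mhz in game_clocks.items() if mhz >= OFFICIAL_BOOST]
# Headroom over the base clock for each game.
margin_over_base = {g: mhz - BASE_CLOCK for g, mhz in game_clocks.items()}
mean_game_clock = sum(game_clocks.values()) / len(game_clocks)

for game, mhz in game_clocks.items():
    print(f"{game:14s} {mhz}MHz  (+{margin_over_base[game]}MHz over base)")
print(f"Mean across games: {mean_game_clock:.2f}MHz")
print(f"At or above official boost: {', '.join(at_or_above_boost)}")
print(f"FurMark vs. base: {furmark_clock - BASE_CLOCK}MHz")
```

Only two of the eight games average at or above the official boost clock, while FurMark is the one workload that falls below the base clock.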

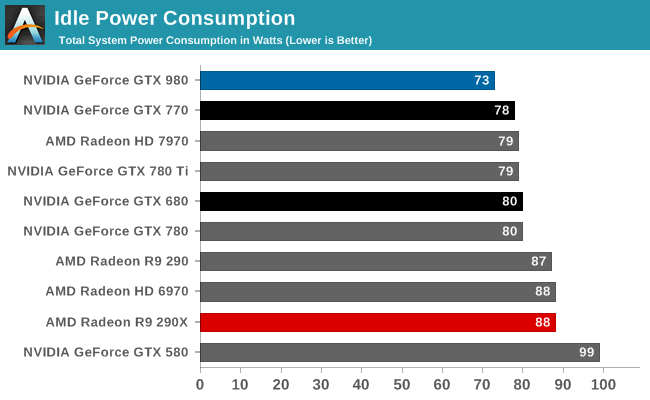

Starting as always with idle power consumption, while NVIDIA is not quoting specific power numbers it’s clear that the company’s energy efficiency efforts have been invested in idle power consumption as well as load power consumption. At 73W idle at the wall, our testbed equipped with the GTX 980 draws several watts less than any other high-end card, including the GK104 based GTX 770 and even AMD’s cards. In desktops this isn’t going to make much of a difference, but in laptops with always-on dGPUs this would be helpful in freeing up battery life.

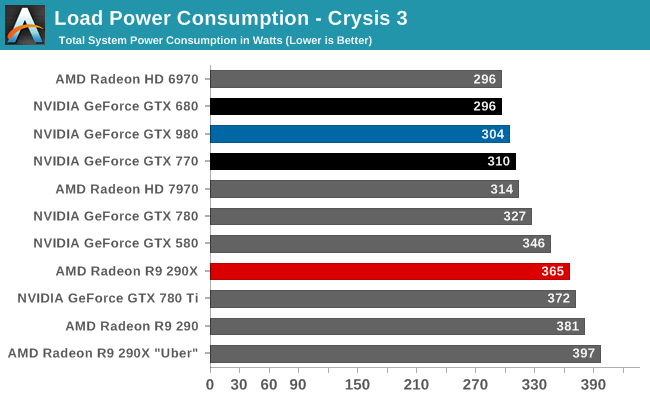

Our first load power test is our gaming test, with Crysis 3. Because we measure from the wall, this test means we’re seeing GPU power consumption as well as CPU power consumption, which means high performance cards will drive up the system power consumption numbers merely by giving the CPU more work to do. This is exactly what happens in the case of the GTX 980; at 304W it’s between the GK104 based GTX 680 and GTX 770, however it’s also delivering 30% better framerates. Accordingly the power consumption of the GTX 980 itself should be lower than either card, but we would not see it in a system power measurement.

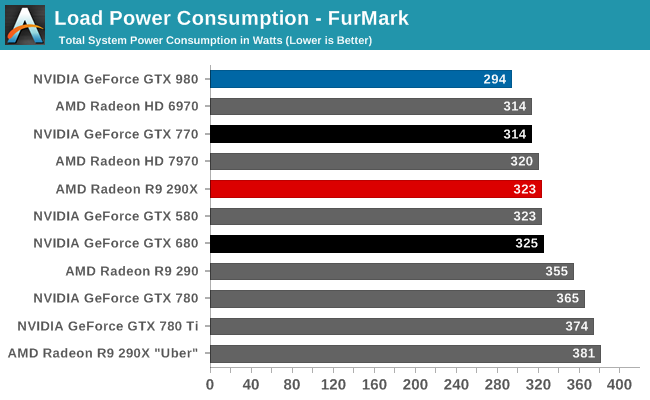

For that reason, when looking at recent generation cards implementing GPU Boost 2.0 or PowerTune 3, we prefer to turn to FurMark as it essentially nullifies the power consumption impact of the CPU. In this case we can clearly see what NVIDIA is promising: GTX 980’s power consumption is lower than everything else on the board, and noticeably so. With 294W at the wall, it’s 20W less than GTX 770, 29W less than 290X, and some 80W less than the previous NVIDIA flagship, GTX 780 Ti. At these power levels NVIDIA is essentially drawing the power of a midrange class card, but with chart-topping performance.
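The deltas quoted above imply approximate wall readings for the other cards. The sketch below works them out, treating the "some 80W" figure for GTX 780 Ti as an approximation rather than an exact measurement; the charts remain the authoritative source for the actual values.

```python
# Implied FurMark system power (watts at the wall), derived from the
# GTX 980 reading and the deltas quoted in the text.
GTX_980_FURMARK_W = 294

# Watts by which each card exceeds the GTX 980 system, per the text.
# The GTX 780 Ti delta is quoted as "some 80W", so treat it as approximate.
deltas_w = {"GTX 770": 20, "290X": 29, "GTX 780 Ti": 80}

implied_w = {card: GTX_980_FURMARK_W + delta for card, delta in deltas_w.items()}
# Fraction of each card's system power that the GTX 980 system saves.
saving_pct = {card: 100 * deltas_w[card] / implied_w[card] for card in deltas_w}

for card, watts in implied_w.items():
    print(f"{card:11s} ~{watts}W at the wall; GTX 980 system draws {saving_pct[card]:.1f}% less")
```

Because these are whole-system numbers, the percentage savings understate the difference between the cards themselves: the rest of the testbed contributes a fixed share of every reading.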

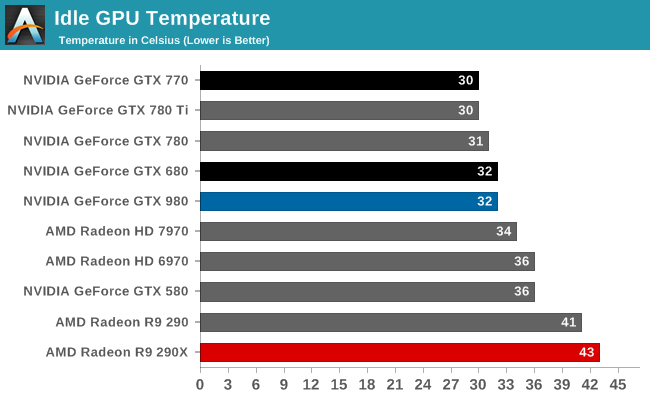

Moving on to temperatures, at idle we see nothing remarkable. All of these well-designed, low idle power designs are going to idle in the low 30s, especially since they’re not more than a few degrees over room temperature.

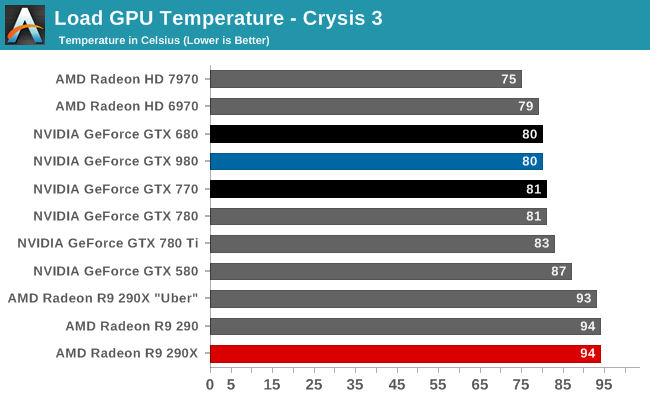

With an 80C throttle point in place for the GTX 980, it’s here that we see the card top out. The fact that we’re hitting 80C is the reason why the card is exhibiting clockspeed throttling as we saw earlier. NVIDIA’s chosen fan curve is tuned for noise over temperature, so it’s letting the GPU reach its temperature throttle point rather than ramp up the fan (and the noise) too much.

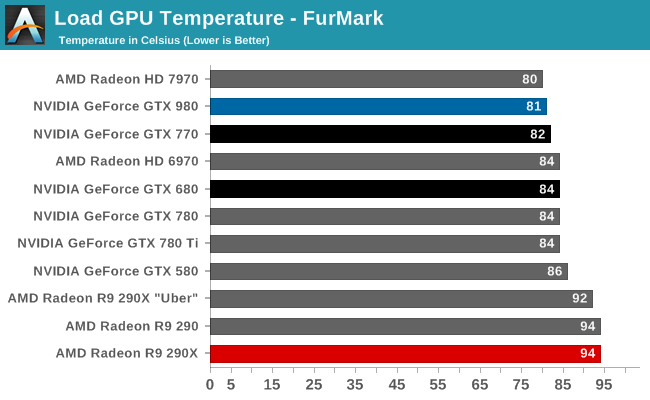

Once again we see the 80C throttle in action. As with all GPU Boost 2.0 cards, NVIDIA makes sure their products aren’t going to get well over 80C no matter the workload.

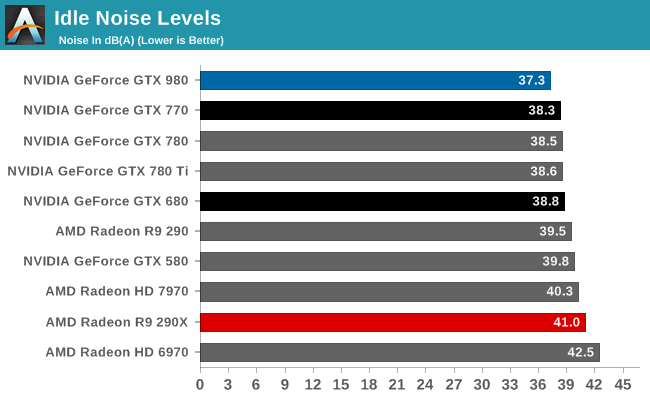

Last but not least we have our noise results. Right off the bat the GTX 980 is looking strong; even with the shared heritage of the cooler with the GTX 780 series, the GTX 980 is slightly but measurably quieter at idle than any other high-end NVIDIA or AMD card. At 37.3dB, the GTX 980 comes very close to being silent compared to the rest of the system.

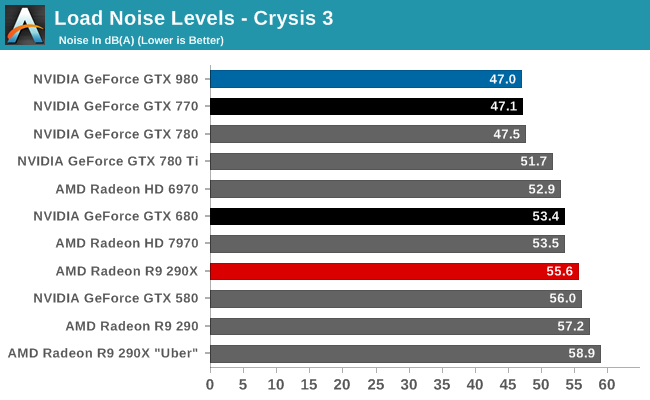

Our Crysis 3 load noise testing showcases the full benefits of the GTX 980’s well-built blower in action. GTX 980 doesn’t perform appreciably better than the GTX Titan cooler equipped GTX 770 and GTX 780, but then again GTX 980 is also not using quite as advanced of a cooler (forgoing the vapor chamber). Still, this is enough to edge ahead of the GTX 770 by 0.1dB, technically making it the quietest video card in this roundup. Though for all practical purposes, it’s better to consider it tied with the GTX 770.

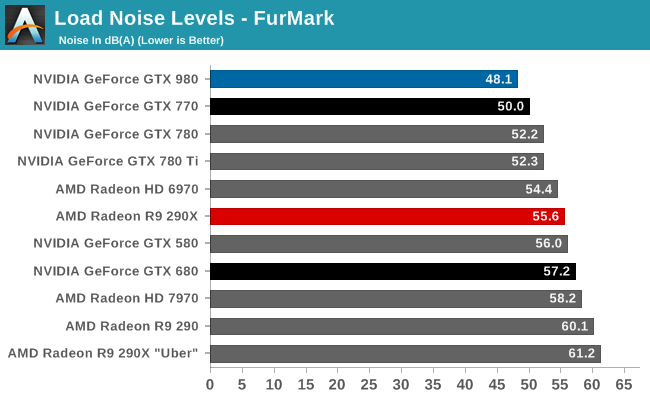

FurMark noise testing on the other hand drives a wedge between the GTX 980 and all other cards, and it’s in the GTX 980’s favor. Despite the similar noise performance between various NVIDIA cards under Crysis 3, under our maximum, pathological workload of FurMark the GTX 980 pulls ahead thanks to its 165W TDP. At the end of the day its lower TDP limit means that the GTX 980 never has too much heat to dissipate, and as a result it never gets too loud. In fact it can’t. 48.1dB is as loud as the GTX 980 can get, which is why the GTX 980’s cooler and overall build are so impressive. There are open air cooled cards that now underperform the GTX 980 that can’t hit noise levels this low, never mind the other cards with blowers.

Between the GTX Titan and its derivatives and now GTX 980, NVIDIA has spent quite a bit of time and effort on building a better blower, and with their latest effort it really shows. All things considered we prefer blower type coolers for their heat exhaustion benefits – just install it and go, there’s almost no need to worry about what the chassis cooling can do – and with NVIDIA’s efforts to build such a solid cooler for a moderately powered card, the end result is a card with a cooler that offers all the benefits of a blower with acoustics that can rival an open air cooler. It’s a really good design and one of our favorite aspects of GTX Titan, its derivatives, and now GTX 980.

274 Comments


squngy - Wednesday, November 19, 2014 - link

It is explained in the article.

Because GTX980 makes so many more frames the CPU is worked a lot harder. The W in those charts are for the whole system so when the CPU uses more power it makes it harder to directly compare GPUs

galta - Friday, September 19, 2014 - link

The simple fact is that a GPU more powerful than a GTX 980 does not make sense right now, no matter how much we would love to see it.

See, most folks are still gaming @ 1080, some of us are moving up to 1440. Under these scenarios, a GTX 980 is more than enough, even if quality settings are maxed out. Early reviews show that it can even handle 4K with moderate settings, and we should expect further performance gains as drivers improve.

Maybe in a year or two, when 4K monitors become more relevant, a more powerful GPU would make sense. Now they simply don't.

For the moment, nVidia's movement is smart and commendable: power efficiency!

I mean, such a powerful card at only 165W! If you are crazy/wealthy enough to have two of them in SLI, you can cut your power demand by 170W, with following gains in temps and/or noise, and a less expensive PSU, if you're building from scratch.

In the end, are these new cards great? Of course they are!

Does it make sense to up-grade right now? Only if you are running a 5xx or 6xx series card, or if your demands have increased dramatically (multi-monitor set-up, higher res. etc.).

Margalus - Friday, September 19, 2014 - link

A more powerful gpu does make sense. Some people like to play their games with triple monitors, or more. A single gpu that could play at 7680x1440 with all settings maxed out would be nice.

galta - Saturday, September 20, 2014 - link

How many of us demand such power? The ones who really do can go SLI and OC the cards.

nVidia would be spending billions for a card that would sell thousands. As I said: we would love the card, but still no sense

Again, I would love to see it, but in the foreseeable future, I won't need it. Happier with noise, power and heat efficiency.

Da W - Monday, September 22, 2014 - link

Here's one that demands such power. I play 3600*1920 using 3 screens, almost 4k, 1/3 the budget, and still useful for, you know, working.

Don't want sli/crossfire. Don't want a space heater either.

bebimbap - Saturday, September 20, 2014 - link

gaming at 1080@144 or 1080 with min fps of 120 for ulmb is no joke when it comes to gpu requirement. Most modern games max at 80-90fps on a OC'd gtx670 you need at least an OC'd gtx770-780. I'd recommend 780ti. and though a 24" 1080 might seem "small" you only have so much focus. You can't focus on periphery vision you'd have to move your eyes to focus on another piece of the screen. the 24"-27" size seems perfect so you don't have to move your eyes/head much or at all.

the next step is 1440@144 or min fps of 120 which requires more gpu than @ 4k60. as 1440 is about 2x 1080 you'd need a gpu 2x as powerful. so you can see why nvidia must put out a powerful card at a moderate price point. They need it for their 144hz gsync tech and 3dvision

imo the ppi race isn't as beneficial as higher refresh rate. For TVs manufacturers are playing this game of misinformation so consumers get the short end of the stick, but having a monitor running at 144hz is a world of difference compared to 60hz for me. you can tell just from the mouse cursor moving across the screen. As I age I realize every day that my eyes will never be as good as yesterday, and knowing that I'd take a 27" 1440p @ 144hz any day over a 28" 5k @ 60hz.

Laststop311 - Sunday, September 21, 2014 - link

Well it all depends on viewing distance. I use a 30" 2560x1600 dell u3014 to game on currently since it's larger i can sit further away and still have just as good of an experience as a 24 or 27 thats closer. So you can't just say larger monitors mean you can't focus on it all cause you can just at a further distance.

theuglyman0war - Monday, September 22, 2014 - link

The power of the newest technology is and has always been an illusion because the creation of games will always be an exercise in "compromise". Even a game like WOW that isn't crippled by console consideration is created by the lowest common denominator demographic in the PC hardware population. In other words... ( if u buy it they will make it vs. if they make it I will upgrade ). Besides the unlimited reach of an openworld's "possible" textures and vtx counts.

"Some" artists are of the opinion that more hardware power would result in a less aggressive graphic budget! ( when the time spent wrangling a synced normal mapped representation of a high resolution sculpt or tracking seam problems in lightmapped approximations of complex illumination with long bake times can take longer than simply using that original complexity ). The compromise can take more time then if we had hardware that could keep up with an artists imagination.

In which case I gotta wonder about the imagination of the end user that really believes his hardware is the end to any graphics progress?

ppi - Friday, September 19, 2014 - link

On desktop, all AMD needs to do is to lower price and perhaps release OC'd 290X to match 980 performance. It will reduce their margins, but they won't be irrelevant on the market, like in CPUs vs Intel (where AMD's most powerful beasts barely touch Intel's low-end, apart from some specific multi-threaded cases)

Why so simple? On desktop:

- Performance is still #1 factor - if you offer more per your $, you win

- Noise can be easily resolved via open air coolers

- Power consumption is not such a big deal

So ... if AMD card at a given price is as fast as Maxwell, then they are clearly worse choice. But if they are faster?

In mobile, however, they are screwed big way, unless they have something REAL good in their sleeve (looking at Tonga, I do not think they do, as I am convinced AMD intends to pull off another HD5870 (i.e. be on the new process node first), but it apparently did not work this time around.)

Friendly0Fire - Friday, September 19, 2014 - link

The 290X already is effectively an overclocked 290 though. I'm not sure they'd be able to crank up power consumption reliably without running into heat dissipation or power draw limits.

Also, they'd have to invest in making a good reference cooler.