The NVIDIA GeForce GTX 980 Review: Maxwell Mark 2

by Ryan Smith on September 18, 2014 10:30 PM EST

Overclocking GTX 980

One of GTX 750 Ti’s more remarkable features was its overclocking headroom. GM107 could overclock so well that upon initial release, NVIDIA did not program in enough overclocking headroom in their drivers to allow for many GTX 750 Ti cards to be overclocked to their true limits. This is a legacy we would be glad to see repeated for GTX 980, and is a legacy we are going to put to the test.

As with NVIDIA’s Kepler cards, NVIDIA’s Maxwell cards are subject to NVIDIA’s stringent power and voltage limitations. Overvolting is limited to NVIDIA’s built-in overvoltage function, which isn’t so much a voltage control as it is the ability to unlock 1-2 more boost bins and their associated voltages. Meanwhile TDP controls are limited to whatever value NVIDIA believes is safe for that model card, which can vary depending on its GPU and its power delivery design.

For GTX 980 we have a 125% TDP limit. Meanwhile we are able to overvolt by 1 boost bin to 1265MHz, which uses a voltage of 1.25v.

| GeForce GTX 980 Overclocking | Stock | Overclocked |
|---|---|---|
| Core Clock | 1126MHz | 1377MHz |
| Boost Clock | 1216MHz | 1466MHz |
| Max Boost Clock | 1265MHz | 1515MHz |
| Memory Clock | 7GHz | 7.8GHz |
| Max Voltage | 1.25v | 1.25v |

GTX 980 does not let us down, and like its lower end Maxwell 1 based counterpart the GTX 980 turns in an overclocking performance just short of absurd. Even without real voltage controls we were able to push another 250MHz (22%) out of our GM204 GPU, resulting in an overclocked base clock of 1377MHz and more amazingly an overclocked maximum boost clock of 1515MHz. That makes this the first NVIDIA card we have tested to surpass both 1.4GHz and 1.5GHz, all in one fell swoop.

This also leaves us wondering just how much farther GM204 could overclock if we were able to truly overvolt it. At 1.25v I’m not sure too much more voltage is good for the GPU in the long term – that’s already quite a bit of voltage for a TSMC 28nm process – but I suspect there is some untapped headroom left in the GPU at higher voltages.

Memory overclocking on the other hand doesn’t end up being quite as extreme, but we’ve known from the start that at 7GHz for the stock memory clock, we were already pushing the limits for GDDR5 and NVIDIA’s memory controllers. Still, we were able to work another 800MHz (11%) out of the memory subsystem, for a final memory clock of 7.8GHz.
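The percentages quoted above are easy to double check. The following is a minimal Python sketch that recomputes the gains from the stock and overclocked clocks in the table. The function name `overclock_gain` and the dictionary layout are illustrative choices of ours, and the memory clock is entered as its effective data rate in MHz (7GHz as 7000).

```python
def overclock_gain(stock, overclocked):
    """Return (absolute increase, percentage increase) from stock to overclocked."""
    delta = overclocked - stock
    return delta, 100.0 * delta / stock


# Stock and overclocked values from the overclocking table, in MHz.
# The memory entries are the effective data rate (7GHz -> 7000MHz).
clocks_mhz = {
    "core": (1126, 1377),
    "boost": (1216, 1466),
    "max_boost": (1265, 1515),
    "memory": (7000, 7800),
}

gains = {name: overclock_gain(s, o) for name, (s, o) in clocks_mhz.items()}

for name, (delta, pct) in gains.items():
    print(f"{name}: +{delta}MHz ({pct:.1f}%)")
```

The core clock works out to roughly 22% over stock and the memory clock to roughly 11%, matching the figures in the text.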

Before we go to our full results, in light of GTX 980’s relatively narrow memory bus and NVIDIA’s color compression improvements, we quickly broke apart our core and memory overclock testing in order to test each separately. This is to see which overclock has more effect: the core overclock or the memory overclock. One would presume that the memory overclock is the more important given the narrow memory bus, but as it turns out that is not necessarily the case.

| GeForce GTX 980 Overclocking Performance | Core (+22%) | Memory (+11%) | Combined |
|---|---|---|---|
| Metro: LL | +15% | +4% | +20% |
| CoH2 | +19% | +5% | +20% |
| Bioshock | +9% | +4% | +15% |
| Battlefield 4 | +10% | +6% | +17% |
| Crysis 3 | +12% | +5% | +15% |
| TW: Rome 2 | +16% | +7% | +20% |
| Thief | +12% | +6% | +16% |

While the core overclock is greater overall to begin with, what we’re also seeing is that the performance gains relative to the size of the overclock generally favor the core overclock over the memory overclock. In all but one game our 11% memory overclock is netting us a 6% increase in performance or less. Meanwhile our 22% core overclock is netting us a 12% increase or more in most games. This is despite the fact that when it comes to core overclocking, the GTX 980 is TDP limited; in many of these games it could clock higher if the TDP budget were large enough to accommodate higher sustained clockspeeds.
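One way to make "gains relative to the size of the overclock" concrete is to divide each game's performance gain by the overclock that produced it. The sketch below does that with the rounded percentages from the table. The `scaling` helper and the variable names are our own, and because the inputs are rounded the ratios are only approximate.

```python
CORE_OC_PCT = 22  # size of the core overclock
MEM_OC_PCT = 11   # size of the memory overclock

# (core-only gain %, memory-only gain %, combined gain %) per game, from the table.
results = {
    "Metro: LL": (15, 4, 20),
    "CoH2": (19, 5, 20),
    "Bioshock": (9, 4, 15),
    "Battlefield 4": (10, 6, 17),
    "Crysis 3": (12, 5, 15),
    "TW: Rome 2": (16, 7, 20),
    "Thief": (12, 6, 16),
}


def scaling(gain_pct, oc_pct):
    """Performance gained per unit of overclock (1.0 would be perfect scaling)."""
    return gain_pct / oc_pct


core_scaling = {g: scaling(c, CORE_OC_PCT) for g, (c, _, _) in results.items()}
mem_scaling = {g: scaling(m, MEM_OC_PCT) for g, (_, m, _) in results.items()}

avg_core = sum(core_scaling.values()) / len(core_scaling)
avg_mem = sum(mem_scaling.values()) / len(mem_scaling)
core_wins = [g for g in results if core_scaling[g] > mem_scaling[g]]

for game in results:
    print(f"{game}: core {core_scaling[game]:.2f}, memory {mem_scaling[game]:.2f}")
print(f"average: core {avg_core:.2f}, memory {avg_mem:.2f}")
```

On these rounded inputs the core overclock averages about 0.60 of a percent of performance per percent of clockspeed, against about 0.48 for memory. Core scaling comes out ahead in five of the seven games; Battlefield 4 and Thief are the exceptions.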

Memory overclocking is still effective, and it’s clear that GTX 980 spends some of its time memory bandwidth bottlenecked (otherwise we wouldn’t be seeing even these performance gains), but it’s simply not as effective as core overclocking. And since we have more core headroom than memory headroom in the first place, it’s a double win for core overclocking.

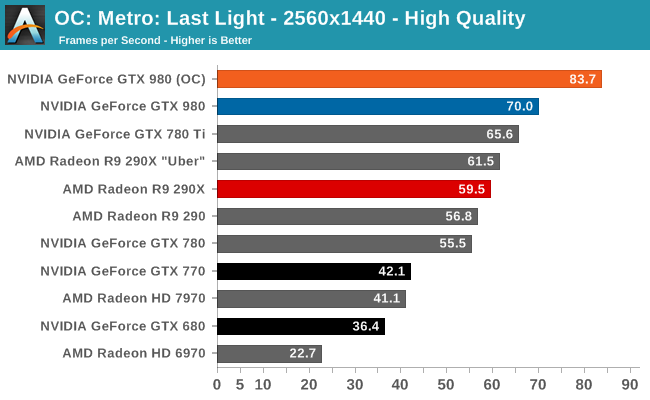

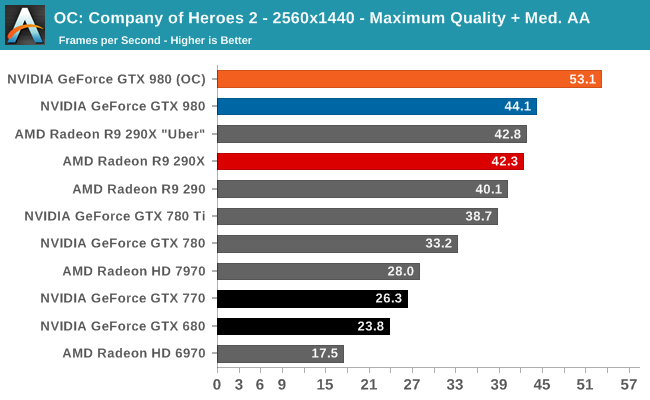

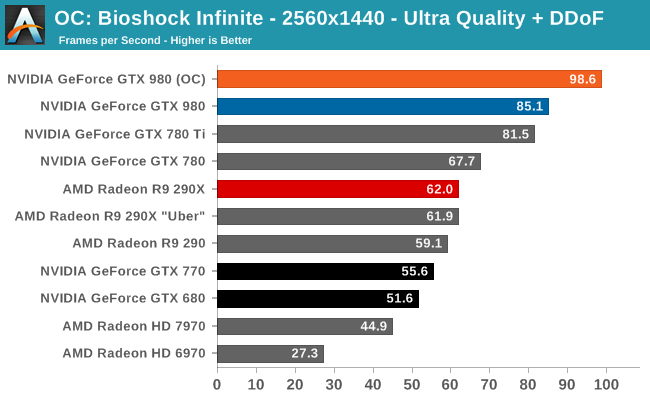

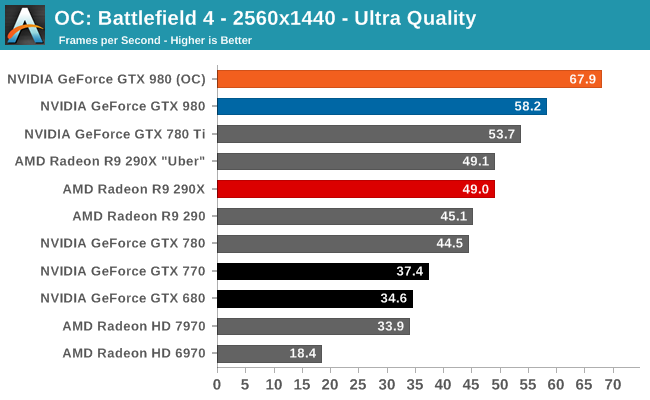

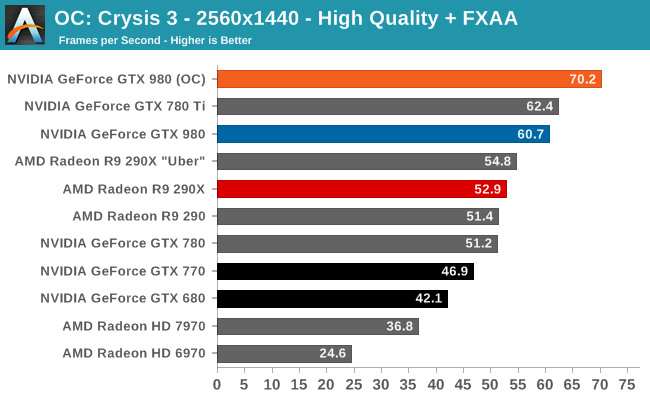

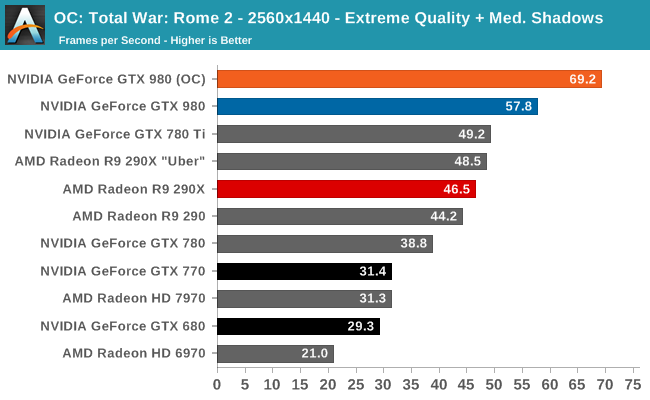

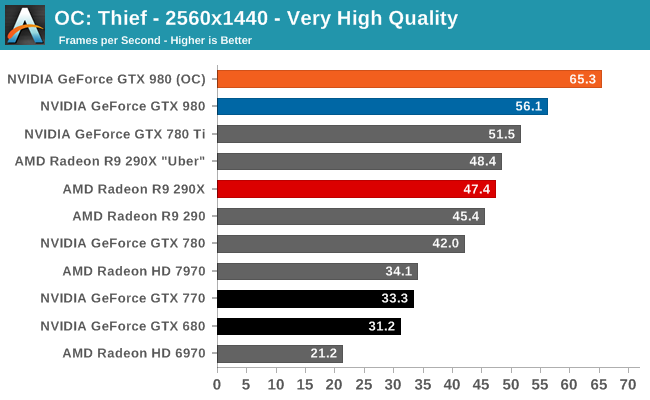

To put it simply, the GTX 980 was already topping the charts. Now with overclocking it’s another 15-20% faster yet. With this overclock factored in the GTX 980 is routinely 2x faster than the GTX 680, if not slightly more.

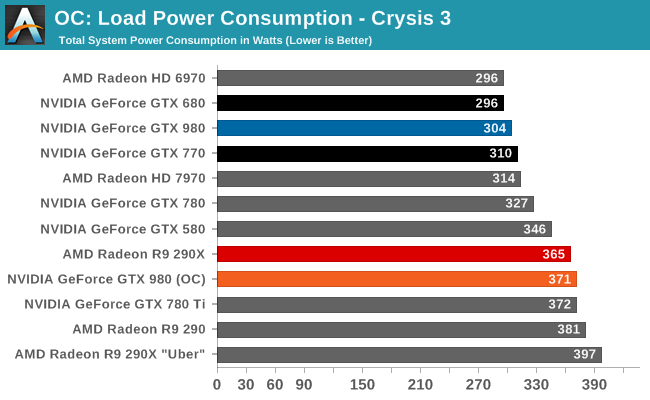

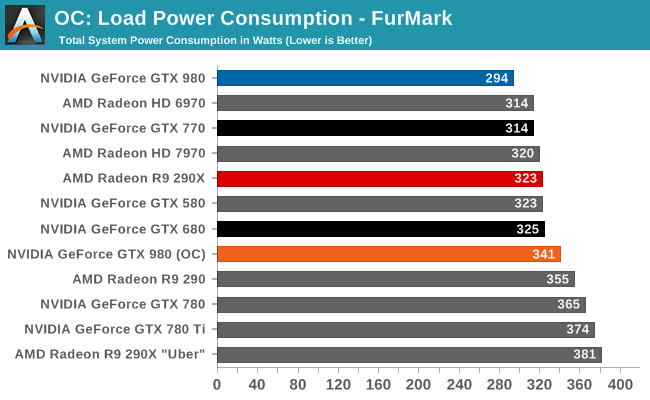

But you do pay for the overclock when it comes to power consumption. NVIDIA allows you to increase the TDP by 25%, and to hit these performance numbers you are going to need every bit of that. So what was once a 165W card is now a 205W card.
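The power figure follows directly from the TDP slider. As a quick sketch, assuming the limit simply scales the 165W stock TDP linearly (the helper name `raised_power_limit` is ours, not anything from NVIDIA's tools):

```python
def raised_power_limit(stock_tdp_watts, limit_pct):
    """Power budget in watts after setting the TDP limit to limit_pct percent."""
    return stock_tdp_watts * limit_pct / 100.0


STOCK_TDP_W = 165    # GTX 980 stock TDP
MAX_LIMIT_PCT = 125  # maximum TDP limit allowed on this card

oc_tdp = raised_power_limit(STOCK_TDP_W, MAX_LIMIT_PCT)
extra = oc_tdp - STOCK_TDP_W

print(f"overclocked budget: {oc_tdp}W (+{extra}W over stock)")
```

The exact product is 206.25W, in line with the roughly 205W figure above, or about 40W more than stock.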

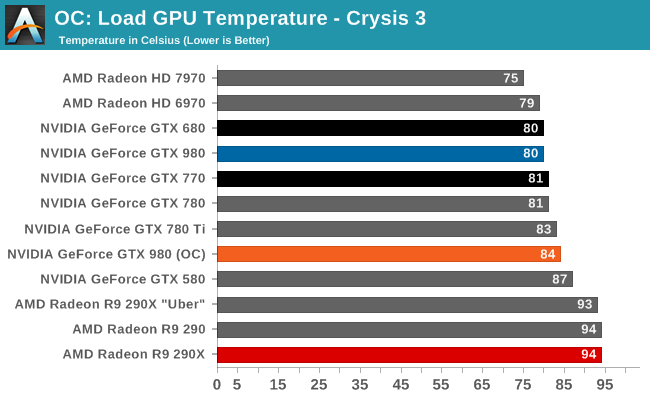

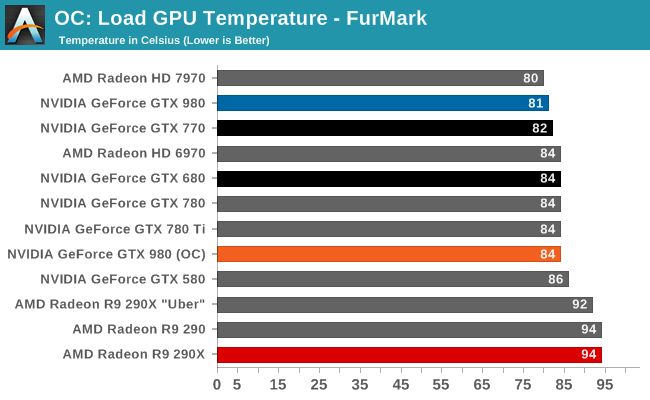

Even though overclocking involves raising the temperature limit to 91C, NVIDIA's fan curve naturally tops out at 84C. So even in the case of overclocking the GTX 980 isn't going to reach temperatures higher than the mid-80s.

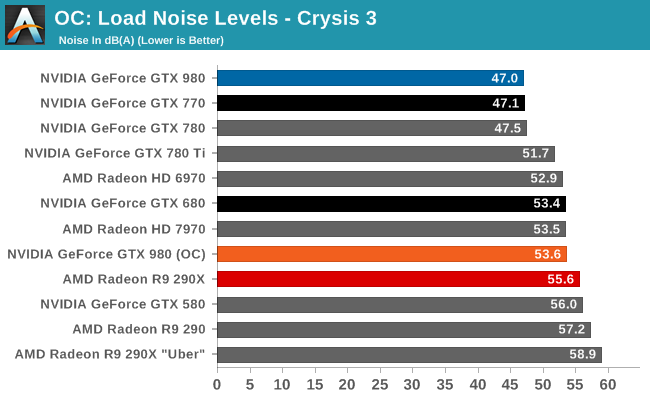

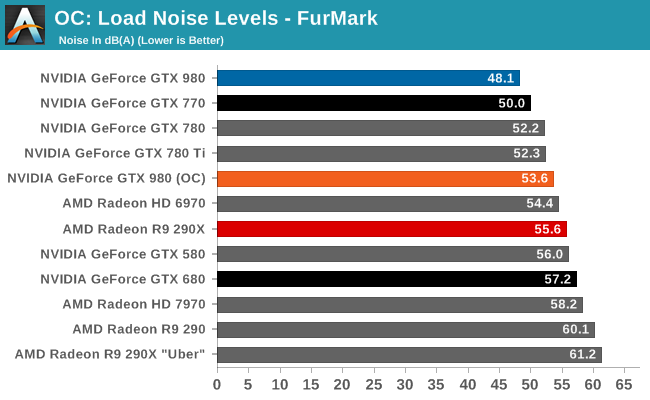

The noise penalty for overclocking is also pretty stiff. Since we're otherwise TDP limited, all of our workloads top out at 53.6dB, some 6.6dB higher than stock. In the big picture this means the overclocked GTX 980 is still in the middle of the pack, but it is noticeably louder than before and louder than a few of NVIDIA's other cards. Interestingly enough, however, it's no worse than the original stock GTX 680 at Crysis 3, and still better than said GTX 680 under FurMark. It's also still quieter than the stock Radeon R9 290X, not to mention the louder yet uber mode.

274 Comments

Sttm - Thursday, September 18, 2014 - link

"How will AMD and NVIDIA solve the problem they face and bring newer, better products to the market?"

My suggestion is they send their CEOs over to Intel to beg on their knees for access to their 14nm process. This is getting silly, GPUs shouldn't be 4 years behind CPUs on process node. Someone cut Intel a big fat check and get this done already.

joepaxxx - Thursday, September 18, 2014 - link

It's not just about having access to the process technology and fab. The cost of actually designing and verifying an SoC at nodes past 28nm is approaching the breaking point for most markets, that's why companies aren't jumping on to them. I saw one estimate of 500 million for development of a 16/14nm device. You better have a pretty good lock on the market to spend that kind of money.

extide - Friday, September 19, 2014 - link

Yeah, but the GPU market is not one of those markets where the verification cost will break the bank, dude.

Samus - Friday, September 19, 2014 - link

Seriously, nVidia's market cap is $10 billion dollars, they can spend a tiny fortune moving to 20nm and beyond...if they want too.

I just don't think they want to saturate their previous products with such leaps and bounds in performance while also absolutely destroying their competition.

Moving to a smaller process isn't out of nVidia's reach, I just don't think they have a competitive incentive to spend the money on it. They've already been accused of becoming a monopoly after purchasing 3Dfx, and it'd be painful if AMD/ATI exited the PC graphics market because nVidia's Maxwell's, being twice as efficient as GCN, were priced identically.

bernstein - Friday, September 19, 2014 - link

atm. it is out of reach to them. at least from a financial perspective.

while it would be awesome to have maxwell designed for & produced on intel's 14nm process, intel doesn't even have the capacity to produce all of their own cpus... until fall 2015 (broadwell xeon-ep release)...

kron123456789 - Friday, September 19, 2014 - link

"it also marks the end of support for NVIDIA’s D3D10 GPUs: the 8, 9, 100, 200, and 300 series. Beginning with R343 these products are no longer supported in new driver branches and have been moved to legacy status." - This is it. The time has come to buy a new card to replace my GeForce 9800GT :)

bobwya - Friday, September 19, 2014 - link

Such a modern card - why bother :-) The 980 will finally replace my 8800 GTX. Now that's a genuinely old card!!

Actually I mainly need to do the upgrade because the power bills are so ridiculous for the 8800 GTX! For pities sake the card only has one power profile (high power usage).

djscrew - Friday, September 19, 2014 - link

Like +1

kron123456789 - Saturday, September 20, 2014 - link

Oh yeah, modern :) It's only 6 years old) But it can handle even Tomb Raider at 1080p with 30-40fps at medium settings :)

SkyBill40 - Saturday, September 20, 2014 - link

I've got an 8800 GTS 640MB still running in my mom's rig that's far more than what she'd ever need. Despite getting great performance from my MSI 660Ti OC 2GB Power Edition, it might be time to consider moving up the ladder since finding another identical card at a decent price for SLI likely wouldn't be worth the effort.

So, either I sell off this 660Ti, give it to her, or hold onto it for a HTPC build at some point down the line. Decision, decisions. :)