The NVIDIA GeForce GTX 980 Review: Maxwell Mark 2

by Ryan Smith on September 18, 2014 10:30 PM EST

Synthetics

As always we’ll also take a quick look at synthetic performance. These tests mainly serve as a canary for finding important architectural changes, and with the exception of pixel throughput we are not expecting any major changes for GTX 980 and GM204.

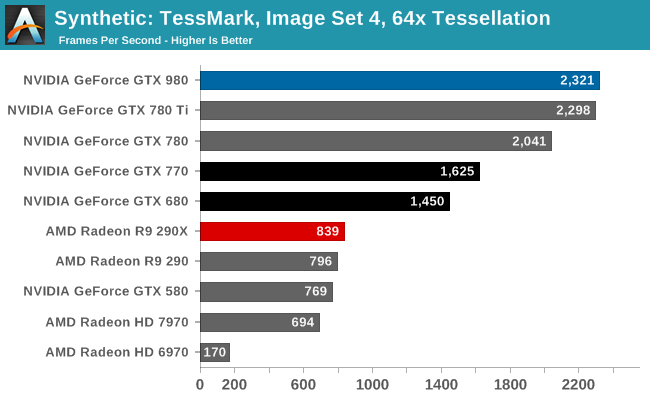

GM204 is designed to have an ever-so-slightly higher triangle throughput rate than GK110 – 16 tris/clock versus 15 tris/clock – and sure enough the GTX 980 comes out on top in TessMark, slightly edging out the GTX 780 Ti. The difference here is very slight, and though GM204 should be a bit more powerful than GK110, in practice it’s a dead heat.

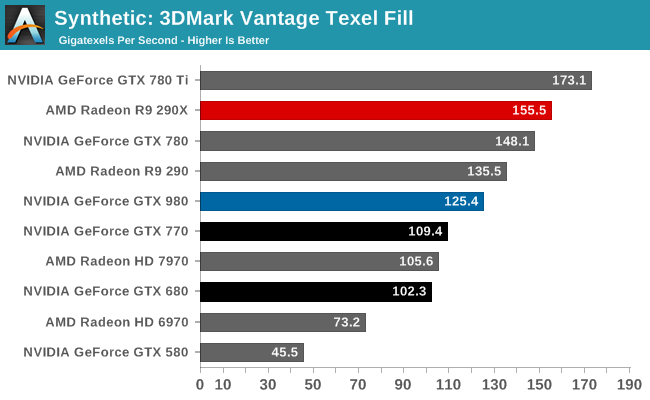

Moving on, we have our 3DMark Vantage texture and pixel fillrate tests, which present our cards with massive amounts of texturing and color blending work. These aren’t results we suggest comparing across different vendors, but they’re good for tracking improvements and changes within a single product family.

Beginning with Maxwell, NVIDIA changed the ratio of CUDA cores to texture units from 12:1 to 16:1, so each texture unit now serves more cores. As a result of this change Maxwell GPUs have fewer texture units than comparable Kepler GPUs. Compounding this effect is the fact that Maxwell CUDA cores are more efficient than Kepler CUDA cores, leading to NVIDIA placing fewer cores overall and further reducing the texture fill rate.

As a result the GTX 980 is not texture fillrate competitive with any of the GK110 cards. It is competitive with the GK104 cards, but only because these cards had the same number of texture units at 128. NVIDIA has told us that they believe this new ratio is a better fit for modern workloads, and judging from the performance we’re seeing elsewhere it would appear that NVIDIA is right.
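To make the texture unit arithmetic concrete, here is a minimal sketch that derives texture unit counts from CUDA core counts and the 12:1 and 16:1 ratios discussed above. The core counts are assumptions taken from NVIDIA's public spec sheets rather than from this page, and the function name is purely illustrative.

```python
# CUDA cores per texture unit, using the ratios quoted in the text.
CORES_PER_TEXTURE_UNIT = {"Kepler": 12, "Maxwell": 16}

# CUDA core counts are assumptions from public spec sheets, not from this page.
GPUS = {
    "GTX 680 (GK104)": ("Kepler", 1536),
    "GTX 780 Ti (GK110)": ("Kepler", 2880),
    "GTX 980 (GM204)": ("Maxwell", 2048),
}


def texture_units(arch, cuda_cores):
    """Texture units implied by a core count and the per-architecture ratio."""
    return cuda_cores // CORES_PER_TEXTURE_UNIT[arch]


for name, (arch, cores) in GPUS.items():
    print(f"{name}: {texture_units(arch, cores)} texture units")
```

Under these assumptions GM204 and GK104 both land on 128 texture units, while GK110 ends up with considerably more, which lines up with the fillrate ordering described above.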

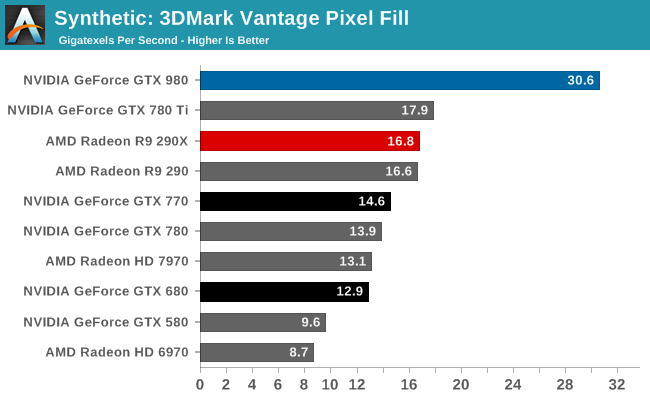

On the other hand, thanks to NVIDIA’s newer 3rd generation delta color compression technology, our 3DMark pixel fillrate performance is through the roof. GTX 980 comes very close to doubling the throughput of our GK110 cards and more than doubles the throughput of the GK104 cards, reflecting the fact that it has 64 ROPs and more importantly has the available bandwidth to put them to good use.

This benchmark in a nutshell is why NVIDIA can deliver chart-topping performance despite having only 2/3rds the memory bandwidth of GTX 780 Ti. By improving their color compression to this point, NVIDIA can significantly reduce their memory bandwidth requirements on Maxwell 2, allowing them to do more with less. In real games the result won’t be anywhere near this remarkable since this is a pure pixel fillrate test, but it goes to show that NVIDIA has been able to expand their effective memory bandwidth in concert with their ROP and shader performance improvements.
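As a rough check on the 2/3rds figure, the sketch below computes peak memory bandwidth from bus width and per-pin data rate. The bus widths and the 7Gbps data rate are assumptions drawn from the cards' public specifications, not from this page, and the helper name is hypothetical.

```python
def memory_bandwidth_gbs(bus_width_bits, data_rate_gbps):
    """Peak bandwidth in GB/s: bytes moved per transfer times per-pin data rate."""
    return bus_width_bits / 8 * data_rate_gbps


# Assumed specs: GTX 980 uses a 256-bit bus, GTX 780 Ti a 384-bit bus,
# both with 7Gbps GDDR5.
gtx_980 = memory_bandwidth_gbs(256, 7.0)
gtx_780_ti = memory_bandwidth_gbs(384, 7.0)
ratio = gtx_980 / gtx_780_ti

print(f"GTX 980:    {gtx_980:.0f} GB/s")
print(f"GTX 780 Ti: {gtx_780_ti:.0f} GB/s")
print(f"Ratio:      {ratio:.2f}")
```

With the same memory speed on both cards, the ratio reduces to the ratio of the bus widths, 256 to 384. This is raw bandwidth only; it does not model the effect of color compression.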

274 Comments


kron123456789 - Friday, September 19, 2014 - link

Look at the "Load Power Consumption - Furmark" test. It's 80W lower with 980 than with 780Ti.

Carrier - Friday, September 19, 2014 - link

Yes, but the 980's clock is significantly lowered for the FurMark test, down to 923MHz. The TDP should be fairly measured at speeds at which games actually run, 1150-1225MHz, because that is the amount of heat that we need to account for when cooling the system.

Ryan Smith - Friday, September 19, 2014 - link

It doesn't really matter what the clockspeed is. The card is gated by both power and temperature. It can never draw more than its TDP. FurMark is a pure TDP test. All NVIDIA cards will reach 100% TDP, making it a good way to compare their various TDPs.

Carrier - Friday, September 19, 2014 - link

If that is the case, then the charts are misleading. GTX 680 has a 195W TDP vs. GTX 770's 230W (going by Wikipedia), but the 680 uses 10W more in the FurMark test. I eagerly await your GTX 970 report. Other sites say that it barely saves 5W compared to the GTX 980, even after they correct for factory overclock. Or maybe power measurements at the wall aren't meant to be scrutinized so closely :)

Carrier - Friday, September 19, 2014 - link

To follow up: in your GTX 770 review from May 2013, you measured the 680 at 332W in FurMark, and the 770 at 383W in FurMark. Those numbers seem more plausible.

Ryan Smith - Saturday, September 20, 2014 - link

680 is a bit different because it's a GPU Boost 1.0 card. 2.0 included the hard TDP and did away with separate power targets. Actually what you'll see is that GTX 680 wants to draw 115% TDP with NVIDIA's current driver set under FurMark.

Carrier - Saturday, September 20, 2014 - link

Thank you for the clarification.

wanderer27 - Friday, September 19, 2014 - link

Power at the wall (AC) is going to be different than power at the GPU, which is coming from the DC PSU. There are losses and efficiency differences in converting from AC to DC (PSU), plus a little wiggle from MB and so forth.

solarscreen - Friday, September 19, 2014 - link

Here you go: http://books.google.com/books?id=v3-1hVwHnHwC&...

PhilJ - Saturday, September 20, 2014 - link

As stated in the article, the power figures are total system power draw. The GTX980 is throwing out nearly double the FPS of the GTX680, so this is causing the rest of the system (mostly the CPU) to work harder to feed the card. This in turn drives the total system power consumption up, despite the fact the GTX980 itself is drawing less power than the GTX680.