The NVIDIA GeForce GTX 980 Review: Maxwell Mark 2

by Ryan Smith on September 18, 2014 10:30 PM EST

GRID 2

The final game in our benchmark suite is also our racing entry, Codemasters’ GRID 2. Codemasters continues to set the bar for graphical fidelity in racing games, and with GRID 2 they’ve gone back to racing on the pavement, bringing to life cities and highways alike. Based on their in-house EGO engine, GRID 2 includes a DirectCompute based advanced lighting system in its highest quality settings, which incurs a significant performance penalty but does a good job of emulating more realistic lighting within the game world.

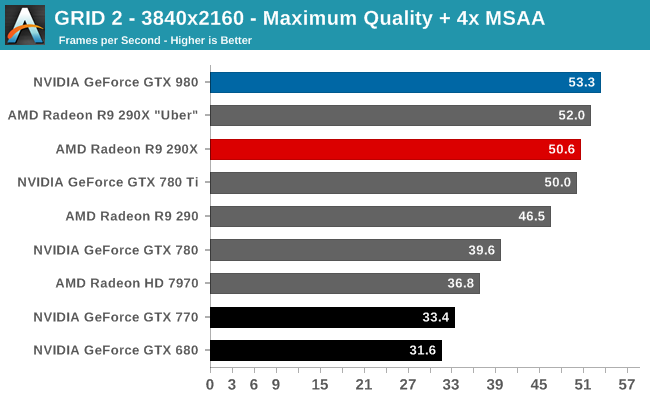

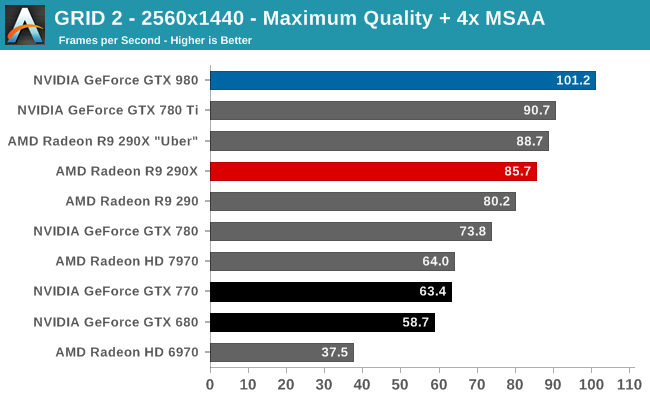

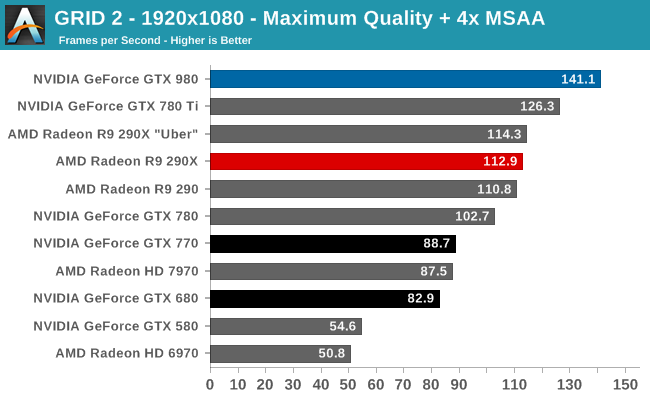

Our final game is another solid victory for the GTX 980. The GTX 980’s lead does shrink at 4K, but otherwise we’re looking at a 12% advantage over the GTX 780 Ti and 14-23% over the R9 290XU.

144Hz gamers will find 1080p quite useful, with the GTX 980 coming just short of averaging a matching framerate. Otherwise, at 2560x1440 one would need to settle for 101fps. For 4K gamers, though, even a single GTX 980 is more or less enough here; 53fps at 4K with Maximum quality and 4x MSAA means that at most a drop to 2x MSAA would get it above 60fps without involving a second card. Maybe this is a good case for NVIDIA’s new Multi-Frame sampled Anti-Aliasing?
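To put the gaps quoted above in numbers, here is a minimal sketch of the arithmetic. The helper name `uplift_needed` is hypothetical and only the framerates quoted in the text are used; it says nothing about how much performance a lower MSAA setting would actually recover.

```python
def uplift_needed(current_fps: float, target_fps: float) -> float:
    """Return the fractional framerate increase needed to reach target_fps."""
    if current_fps <= 0:
        raise ValueError("current_fps must be positive")
    # Clamp at zero: no uplift is needed if the target is already met.
    return max(0.0, target_fps / current_fps - 1.0)


# Figures quoted in the text: 53fps at 4K, and the 101fps result.
gap_4k = uplift_needed(53, 60)
gap_144hz = uplift_needed(101, 144)

print(f"4K, 53fps to 60fps: {gap_4k:.1%} more performance needed")
print(f"101fps to 144fps: {gap_144hz:.1%} more performance needed")
```

The 4K gap is small enough that a modest settings change is a plausible way to close it, while the gap between 101fps and 144fps is several times larger.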

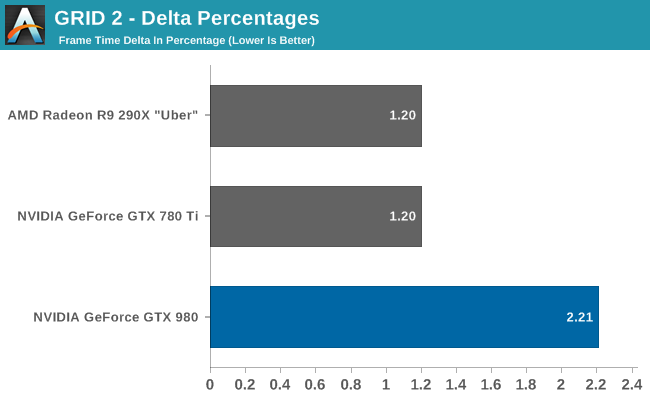

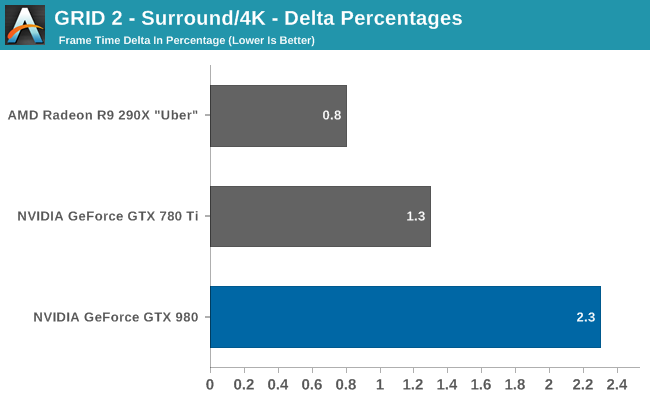

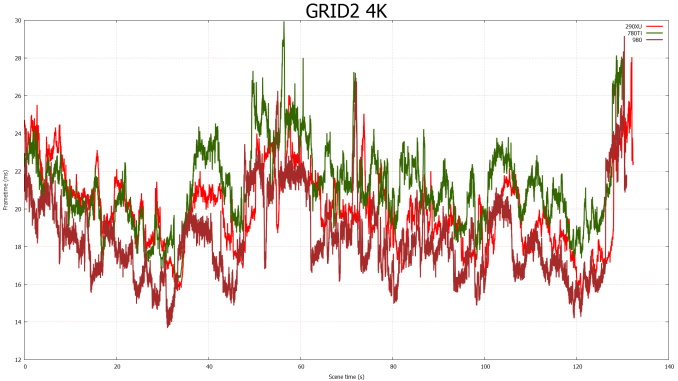

Our last set of delta percentages once again finds the GTX 980 easily below 3%, though the variance is higher than with the other two cards, and by more than just what we would expect as a result of higher average framerates.
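This page does not define the delta percentage metric, so the following is only a sketch under an assumption: that it is the average absolute difference between consecutive frame times, divided by the average frame time. The function name and the sample frame times are made up for illustration and are not measured data.

```python
from statistics import mean


def delta_percentage(frame_times_ms):
    """Mean absolute frame-to-frame delta as a percentage of mean frame time.

    Assumed definition, not confirmed by the text of this page.
    """
    if len(frame_times_ms) < 2:
        raise ValueError("need at least two frame times")
    # Absolute change in frame time between each pair of consecutive frames.
    deltas = [abs(b - a) for a, b in zip(frame_times_ms, frame_times_ms[1:])]
    return mean(deltas) / mean(frame_times_ms) * 100.0


# Hypothetical frame times in milliseconds (roughly 100fps).
sample = [10.0, 10.2, 9.9, 10.1, 10.0]
result = delta_percentage(sample)
print(f"delta percentage: {result:.2f}%")
```

Under this definition a faster card has a smaller average frame time in the denominator, so the same absolute frame-to-frame variation yields a higher percentage. That is why some increase is expected from higher average framerates alone.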

274 Comments

Sttm - Thursday, September 18, 2014 - link

"How will AMD and NVIDIA solve the problem they face and bring newer, better products to the market?"

My suggestion is they send their CEOs over to Intel to beg on their knees for access to their 14nm process. This is getting silly, GPUs shouldn't be 4 years behind CPUs on process node. Someone cut Intel a big fat check and get this done already.

joepaxxx - Thursday, September 18, 2014 - link

It's not just about having access to the process technology and fab. The cost of actually designing and verifying an SoC at nodes past 28nm is approaching the breaking point for most markets, that's why companies aren't jumping on to them. I saw one estimate of 500 million for development of a 16/14nm device. You better have a pretty good lock on the market to spend that kind of money.

extide - Friday, September 19, 2014 - link

Yeah, but the GPU market is not one of those markets where the verification cost will break the bank, dude.

Samus - Friday, September 19, 2014 - link

Seriously, nVidia's market cap is $10 billion, they can spend a tiny fortune moving to 20nm and beyond...if they want to. I just don't think they want to saturate their previous products with such leaps and bounds in performance while also absolutely destroying their competition.

Moving to a smaller process isn't out of nVidia's reach, I just don't think they have a competitive incentive to spend the money on it. They've already been accused of becoming a monopoly after purchasing 3Dfx, and it'd be painful if AMD/ATI exited the PC graphics market because nVidia's Maxwell parts, being twice as efficient as GCN, were priced identically.

bernstein - Friday, September 19, 2014 - link

atm. it is out of reach to them. at least from a financial perspective. while it would be awesome to have maxwell designed for & produced on intel's 14nm process, intel doesn't even have the capacity to produce all of their own cpus... until fall 2015 (broadwell xeon-ep release)...

kron123456789 - Friday, September 19, 2014 - link

"it also marks the end of support for NVIDIA’s D3D10 GPUs: the 8, 9, 100, 200, and 300 series. Beginning with R343 these products are no longer supported in new driver branches and have been moved to legacy status." - This is it. The time has come to buy a new card to replace my GeForce 9800GT :)

bobwya - Friday, September 19, 2014 - link

Such a modern card - why bother :-) The 980 will finally replace my 8800 GTX. Now that's a genuinely old card!! Actually I mainly need to do the upgrade because the power bills are so ridiculous for the 8800 GTX! For pity's sake, the card only has one power profile (high power usage).

djscrew - Friday, September 19, 2014 - link

Like +1

kron123456789 - Saturday, September 20, 2014 - link

Oh yeah, modern :) It's only 6 years old) But it can handle even Tomb Raider at 1080p with 30-40fps at medium settings :)

SkyBill40 - Saturday, September 20, 2014 - link

I've got an 8800 GTS 640MB still running in my mom's rig that's far more than what she'd ever need. Despite getting great performance from my MSI 660Ti OC 2GB Power Edition, it might be time to consider moving up the ladder since finding another identical card at a decent price for SLI likely wouldn't be worth the effort. So, either I sell off this 660Ti, give it to her, or hold onto it for a HTPC build at some point down the line. Decisions, decisions. :)