The NVIDIA GeForce GTX 750 Ti and GTX 750 Review: Maxwell Makes Its Move

by Ryan Smith & Ganesh T S on February 18, 2014 9:00 AM EST

Final Words

As we bring this review to a close, it’s clear that NVIDIA’s latest product launch has given us quite a bit to digest. Not only are we looking at NVIDIA’s latest products for the high volume mainstream desktop video card market, but we’re doing so through the lens of a new generation of GPUs. With the GeForce GTX 750 series we are getting our first look at what the next generation of GPUs will hold for NVIDIA, and if these cards are an accurate indication of what’s to follow, then we’re being set up for quite an interesting time.

From an architectural point of view, it’s clear from the start that Maxwell is both a refresh of the Kepler architecture and at the same time oh so much more. From a feature perspective, I think it’s going to be difficult not to be a bit disappointed that NVIDIA hasn’t pushed the envelope in some manner, leaving us with a part that, as far as features go, is distinctly Kepler. Complete support for Direct3D 11.1 and 11.2, though not essential, would have been nice to have so that 11.2 could become the standard for new video cards in 2014. Otherwise I’ll fully admit I don’t know what else to expect of Maxwell – the lack of a new Direct3D standard leaves this as something of a wildcard – but it means that there isn’t a real marquee feature in the architecture for us to evaluate and marvel at.

On the other hand, the lack of significant feature changes means that it’s much easier to evaluate Maxwell next to Kepler in the area where NVIDIA did focus: efficiency. This goes for power efficiency, resource/compute efficiency, and space efficiency. Utilizing a number of techniques, NVIDIA set out to double their performance per watt versus Kepler – a design that was already power efficient by desktop GPU standards – and it’s safe to say that they have accomplished this. With higher resource efficiency giving NVIDIA additional performance from less hardware, and power optimizations bringing power consumption down by dozens of watts, NVIDIA has done what in previous generations would have taken a die shrink. The tradeoff is that without that die shrink, die sizes grow in the process, but even so, the fact that NVIDIA packed so much more hardware into GM107 for only a moderate increase in die size is remarkable from an engineering perspective.
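A performance-per-watt gain can be decomposed into a performance ratio and a power ratio. As a rough sketch, the snippet below combines the "nearly 2x" GTX 750 Ti versus GTX 650 figure from this review with rated board TDPs of 64W for the GTX 650 and 60W for the GTX 750 Ti. Those TDPs are our assumption rather than measured power draw, so treat the result as an approximation only.

```python
def perf_per_watt_gain(perf_ratio: float, old_watts: float, new_watts: float) -> float:
    """Return (new perf/W) / (old perf/W).

    perf_ratio: new card's performance divided by the old card's performance.
    old_watts, new_watts: power draw of each card (here: assumed rated TDPs).
    """
    if old_watts <= 0 or new_watts <= 0:
        raise ValueError("power draws must be positive")
    # perf/W ratio = (perf_new / perf_old) / (watts_new / watts_old)
    return perf_ratio * old_watts / new_watts


# Assumed inputs: ~2x performance (approximate, from the review) and
# rated TDPs of 64 W (GTX 650) and 60 W (GTX 750 Ti), not measured power.
gain = perf_per_watt_gain(perf_ratio=2.0, old_watts=64.0, new_watts=60.0)
print(f"approximate perf/W gain: {gain:.2f}x")
```

With those inputs the ratio comes out to roughly 2.1x, in line with NVIDIA's stated goal of doubling performance per watt; measured load power would give a more trustworthy figure than rated TDP.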

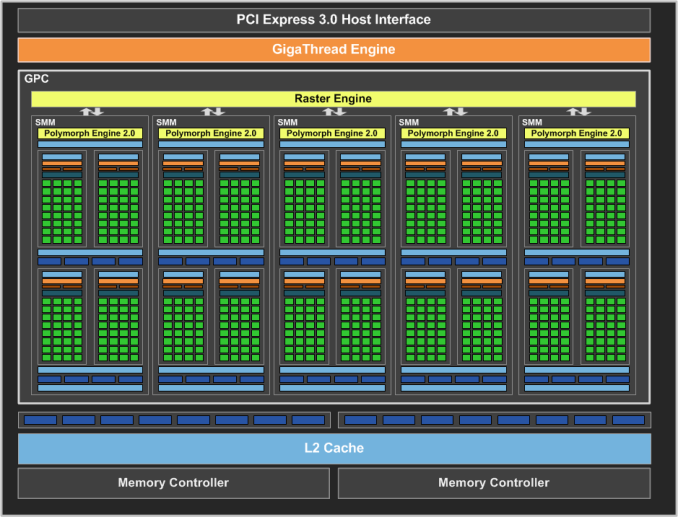

Efficiency aside, Maxwell’s architecture seems something of an oddity at first, but given NVIDIA’s efficiency gains it’s difficult to argue with the outcome. Partitioning the SMM gives us partitions that feel a lot like GF100 SMs, which in a sense has NVIDIA going backwards, since significant resource sharing first became prominent with Kepler. But perhaps that was the right move all along, as evidenced by what NVIDIA has achieved. Meanwhile, the upgrade of the compute feature set to GK110 levels is good news all around. The increased efficiency it affords improves performance alongside the other IPC improvements NVIDIA has worked in, and it means that some of GK110’s more exotic features, such as dynamic parallelism and HyperQ, are now baseline features. Furthermore, the reduction in register pressure and memory pressure across the board should be a welcome development; compared to GK107 there are now more registers per thread, more registers per CUDA core, more shared memory per CUDA core, and a lot more L2 cache per GPU. All of this should help to alleviate memory-related stalls, especially as NVIDIA is staying on a 128-bit bus.
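To put the per-core claims into rough numbers, the sketch below divides each multiprocessor's register file and shared memory by its CUDA core count. The figures are commonly published specifications that we state here as assumptions rather than values taken from this page: 192 CUDA cores, 64K 32-bit registers, and up to 48KB of shared memory per GK107 SMX; 128 CUDA cores, 64K registers, and 64KB of shared memory per GM107 SMM; and 256KB of L2 on GK107 versus 2MB on GM107. The per-thread register limit is not modeled.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Multiprocessor:
    """One SM-style block; figures are assumed published specs, not measurements."""
    name: str
    cuda_cores: int
    registers: int       # 32-bit registers per multiprocessor
    shared_mem_kb: int   # shared memory available per multiprocessor, in KB

    def registers_per_core(self) -> float:
        return self.registers / self.cuda_cores

    def shared_bytes_per_core(self) -> float:
        return self.shared_mem_kb * 1024 / self.cuda_cores


# Assumed figures: Kepler SMX in GK107 (up to 48 KB of its 64 KB L1/shared
# pool usable as shared memory) and Maxwell SMM in GM107 (64 KB dedicated).
smx = Multiprocessor("GK107 SMX", cuda_cores=192, registers=65536, shared_mem_kb=48)
smm = Multiprocessor("GM107 SMM", cuda_cores=128, registers=65536, shared_mem_kb=64)

# Assumed whole-GPU L2 cache sizes, in KB.
L2_KB = {"GK107": 256, "GM107": 2048}

for mp in (smx, smm):
    print(f"{mp.name}: {mp.registers_per_core():.0f} registers/core, "
          f"{mp.shared_bytes_per_core():.0f} B shared memory/core")
print(f"L2 cache: {L2_KB['GM107'] // L2_KB['GK107']}x larger on GM107")
```

Under these assumptions the SMM works out to 512 registers and 512 bytes of shared memory per CUDA core, versus roughly 341 registers and 256 bytes on the SMX, alongside an 8x larger L2.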

With that in mind, this brings us to the cards themselves. By doubling their performance-per-watt, NVIDIA has significantly shifted their standing both within their own product lineup and against AMD’s lineup. The fact that the GTX 750 Ti is nearly 2x as fast as the GTX 650 is a significant victory for NVIDIA, and the fact that it’s nearly 3x as fast as the GT 640 – officially NVIDIA’s fastest 600 series card without a PCIe power plug requirement – completely changes the sub-75W market. NVIDIA wants to leverage GM107 and the GTX 750 series to capture this market for HTPC use and OEM system upgrades alike, and they’re in a very good position to do so. Plus it goes without saying that compared to last-generation cards such as the GeForce GTX 550 Ti, NVIDIA has finally doubled their performance (and halved their power consumption!), making the GTX 750 series a significant upgrade for existing NVIDIA customers still on older GF106/GF116 cards.

But on a competitive basis things are not so solidly in NVIDIA’s favor. NVIDIA does not always attempt to compete with AMD on a price/performance basis in the mainstream market, as their brand and retail presence give them something they can bank on even when they don’t have the performance advantage. In this case NVIDIA has purposely chosen to forgo chasing AMD for the price/performance lead, and as such, for the price, the GeForce GTX 750 cards are the weaker products. The Radeon R7 265 holds a particularly large 19% lead over the GTX 750 Ti, and in fact wins every single benchmark. Similarly, the Radeon R7 260X averages a 10% lead over the GTX 750, and it does so while having 2GB of VRAM to the GTX 750’s 1GB.
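This section doesn't spell out how the average leads are computed. One common approach, sketched here as an assumption rather than our documented method, is the geometric mean of per-benchmark frame-rate ratios, which keeps a single outlier title from dominating the result. The frame rates below are hypothetical placeholders, not results from our testing.

```python
import math


def average_lead(fps_a: list[float], fps_b: list[float]) -> float:
    """Geometric mean of per-benchmark ratios a/b, returned as a fractional lead.

    A return value of 0.19 would mean card A averages a 19% lead over card B.
    """
    if len(fps_a) != len(fps_b) or not fps_a:
        raise ValueError("need matching, non-empty result lists")
    # Averaging logs and exponentiating gives the geometric mean of the ratios.
    log_sum = sum(math.log(a / b) for a, b in zip(fps_a, fps_b))
    return math.exp(log_sum / len(fps_a)) - 1.0


# Hypothetical frame rates for three benchmarks (not review data).
card_a = [60.0, 45.0, 33.0]
card_b = [50.0, 40.0, 30.0]

lead = average_lead(card_a, card_b)
wins = sum(a > b for a, b in zip(card_a, card_b))
print(f"average lead: {lead:.1%}, wins {wins}/{len(card_a)} benchmarks")
```

Reporting the win count alongside the average, as the sketch does, is what distinguishes a card that "wins at every single benchmark" from one whose average is propped up by a few large victories.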

On a pure price/performance basis, the GTX 750 series is not competitive. If you’re shopping in the sub-$150 market and looking solely at performance, the Radeon R7 260 series will be the way to go. But this requires forgoing NVIDIA’s ecosystem and their power efficiency advantage; if either of those matters to you, then the lower performance of the NVIDIA cards will be justified by their other advantages. With that said, however, we will throw in an escape clause: NVIDIA has hard availability today, while AMD’s Radeon R7 265 cards are not due for about another two weeks. Furthermore, it’s not at all clear whether retailers will hold to the R7 265’s $149 MSRP given insane demand from cryptocoin miners; if they don’t, then NVIDIA’s competition is diminished or removed entirely, and NVIDIA wins on price/performance by default.
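The price/performance argument can be made concrete with a break-even calculation: if one card averages a fractional lead L at the same price as its rival, it keeps the performance-per-dollar edge only while its street price stays below the rival's price times (1 + L). This sketch plugs in the 19% R7 265 lead and the $149 MSRP quoted above, and assumes (this section doesn't state it) that the GTX 750 Ti also sells at $149. The higher street prices are hypothetical.

```python
def perf_per_dollar(relative_perf: float, price: float) -> float:
    """Relative performance divided by price."""
    return relative_perf / price


def break_even_price(lead: float, rival_price: float) -> float:
    """Street price at which a card with `lead` over its rival loses its perf/$ edge."""
    return rival_price * (1.0 + lead)


GTX_750_TI_PRICE = 149.0  # assumed GTX 750 Ti price (not stated in this section)
R7_265_MSRP = 149.0       # MSRP quoted in the review
R7_265_LEAD = 0.19        # 19% average lead quoted in the review

limit = break_even_price(R7_265_LEAD, GTX_750_TI_PRICE)
print(f"R7 265 keeps its perf/$ lead only below ${limit:.2f}")

winners = {}
for street in (R7_265_MSRP, 179.0, 199.0):  # 179 and 199 are hypothetical markups
    amd = perf_per_dollar(1.0 + R7_265_LEAD, street)
    nvidia = perf_per_dollar(1.0, GTX_750_TI_PRICE)
    winners[street] = "R7 265" if amd > nvidia else "GTX 750 Ti"
    print(f"R7 265 at ${street:.0f}: {winners[street]} wins on perf/$")
```

Under those assumptions the break-even point is about $177.31, so a miner-driven markup of a little under $30 would be enough to hand the GTX 750 Ti the price/performance lead.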

Wrapping things up, as excited as we get and as focused as we are on desktop cards, it’s hard not to view this launch as a preview of things to come. With laptop sales already exceeding desktop sales, it’s a foregone conclusion that NVIDIA will move more GM107 based video cards in mobile products than they will in desktops. With GK107 already being very successful in that space and GM107 doubling NVIDIA’s performance-per-watt – and thereby doubling their performance in those power-constrained devices – it means that GM107 is going to be an even greater asset in the mobile arena. To that end it will be very interesting to see what happens once NVIDIA starts releasing the obligatory mobile variants of the GTX 750 series, as what we’ve seen today tells us that we could be in for a very welcome jump in mobile performance.
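The link between performance-per-watt and mobile performance is simple arithmetic once the power budget is fixed: performance is efficiency multiplied by the watts available. This sketch uses a hypothetical 40W laptop GPU budget and an arbitrary baseline efficiency for Kepler, and assumes the 2x efficiency gain holds at that power level. It also shows the alternative trade an OEM could make: matching Kepler's performance at half the power.

```python
def performance_at_budget(perf_per_watt: float, budget_watts: float) -> float:
    """Performance achievable when the GPU is held to a fixed power budget."""
    return perf_per_watt * budget_watts


def watts_for_performance(target_perf: float, perf_per_watt: float) -> float:
    """Power needed to reach a given performance level at a given efficiency."""
    return target_perf / perf_per_watt


BUDGET_W = 40.0                  # hypothetical mobile power envelope
kepler_eff = 1.0                 # arbitrary baseline "performance units" per watt
maxwell_eff = 2.0 * kepler_eff   # the 2x perf/W claim, assumed to hold at 40 W

kepler_perf = performance_at_budget(kepler_eff, BUDGET_W)
maxwell_perf = performance_at_budget(maxwell_eff, BUDGET_W)
print(f"same {BUDGET_W:.0f} W budget: {maxwell_perf / kepler_perf:.1f}x the performance")

# Alternative trade: keep Kepler-level performance and bank the power savings.
print(f"Kepler-level performance needs {watts_for_performance(kepler_perf, maxwell_eff):.0f} W")
```

Real mobile parts won't scale this cleanly, since clocks, voltages, and memory configurations differ between SKUs, but the sketch shows why a perf/W gain translates so directly into performance in power-constrained designs.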

177 Comments


Mondozai - Wednesday, February 19, 2014

Anywhere outside of NA gives normal prices. Get out of your bubble.

ddriver - Wednesday, February 19, 2014

Yes, prices here are pretty much normal; no one rushes to waste electricity on something as stupid as bitcoin mining. Anyway, I got most of the cards even before that craze began.

R3MF - Tuesday, February 18, 2014

At ~1Bn transistors for 512 Maxwell shaders, I think a 20nm enthusiast card could afford the 10bn transistors necessary for 4096 shaders...

Krysto - Tuesday, February 18, 2014

If Maxwell has 2x the P/W, and Tegra K2 arrives at 16nm with 2 SMX (which is a very reasonable expectation), then Tegra K2 will have at least 1 Teraflop of performance, if not more than 1.2 Teraflops, which would already surpass the Xbox One. Now THAT's exciting.

chizow - Tuesday, February 18, 2014

It probably won't be Tegra K2; it will most likely be Tegra M1 and could very well have 3xSMM at 20nm (192x2 vs. 128x3), which according to the article might be a 2.7x speed-up vs. just 2x using Kepler's SMX arch. But yes, certainly all very exciting possibilities.

grahaman27 - Wednesday, February 19, 2014

The Tegra M1 will be on 16nm FinFET if they stick to their roadmap. But since they are bringing the 64-bit version sooner than expected, I don't know what to expect. BTW, it has yet to be announced what manufacturing process the 64-bit version will use... we can only hope TSMC 20nm will arrive in time.

Mondozai - Wednesday, February 19, 2014

Exciting or f%#king embarrassing for M$? Or for the console industry overall.

RealiBrad - Tuesday, February 18, 2014

Looks to be an OK card when you consider that mining has caused AMD cards to sell out and pushed up prices. It looks like the R7 265 is fairly close on power, temp, and noise. If AMD supply could meet demand, then the 750 Ti would need to be much cheaper and would not look nearly as good.

Homeles - Tuesday, February 18, 2014

Load power consumption is clearly in Nvidia's favor.

DryAir - Tuesday, February 18, 2014

Power consumption is way higher... take a look at TPU's review. But price/perf is a lot better, yeah. Personally I'm a sucker for low power, and I will gladly pay for it.