The NVIDIA GeForce GTX 780 Ti Review

by Ryan Smith on November 7, 2013 9:01 AM EST

Overclocking

Finally, let’s spend a bit of time looking at the overclocking prospects for the GTX 780 Ti. Although GTX 780 Ti is now the fastest GK110 part, based on what we've seen with GTX 780 and GTX Titan there should still be some headroom to play with. Meanwhile there will also be the matter of memory overclocking, as 7GHz GDDR5 on a 384-bit bus presents us with a new baseline that we haven't seen before.

| GeForce GTX 780 Ti Overclocking | Stock | Overclocked |
|---|---|---|
| Core Clock | 876MHz | 1026MHz |
| Boost Clock | 928MHz | 1078MHz |
| Max Boost Clock | 1020MHz | 1169MHz |
| Memory Clock | 7GHz | 7.6GHz |
| Max Voltage | 1.187V | 1.187V |

Overall our overclock for the GTX 780 Ti is a bit on the low side compared to the other GTX 780 cards we’ve seen in the past, but not immensely so. With a GPU overclock of 150MHz, we’re able to push the base clock and maximum boost clocks ahead by 17% and 14% respectively, which should further extend NVIDIA’s performance lead by a similar amount.
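The percentages quoted here follow directly from the clocks in the table. As a minimal sketch (the dictionary and function names are our own, purely for illustration), the relative gains can be recomputed like so:

```python
# Clock speeds in MHz as (stock, overclocked), copied from the overclocking table.
clocks = {
    "Core Clock": (876, 1026),
    "Boost Clock": (928, 1078),
    "Max Boost Clock": (1020, 1169),
}


def percent_gain(stock, overclocked):
    """Relative increase of the overclocked value over stock, in percent."""
    return (overclocked - stock) / stock * 100.0


# Hypothetical helper structure: one gain figure per clock domain.
gains = {name: percent_gain(stock, oc) for name, (stock, oc) in clocks.items()}

for name, gain in gains.items():
    print(f"{name}: +{gain:.1f}%")
```

Because the same offset is applied to every clock, the relative gain shrinks as the starting clock rises, which is why the maximum boost clock gains less in percentage terms than the base clock.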

Meanwhile the inability to unlock a higher boost bin through overvolting is somewhat disappointing, as this is the first time we’ve seen this happen. To be clear, GTX 780 Ti does support overvolting – our card offers up to another 75mV of voltage – however on closer examination our card doesn’t have a higher bin within reach; 75mV isn’t enough to reach the next validated bin. Apparently this is something that can happen with the way NVIDIA bins their chips and implements overvolting, though this is the first time we’ve seen a card actually suffer from it. The end result is that it limits our ability to boost at the highest bins, as we’d normally have a bin or two unlocked to further increase the maximum boost clock.

As for memory overclocking, we were able to squeeze a bit more out of our 7GHz GDDR5, pushing our memory clock 600MHz (9%) higher to 7.6GHz. Memory overclocking is always something of a roll of the dice, so it’s not clear here whether this is average or not for a GK110 setup with 7GHz GDDR5. Given the general drawbacks of a wider memory bus we wouldn’t be surprised if this was average, but at the same time in practice GK110 cards haven’t shown themselves to be as memory bandwidth limited as GK104 cards. So 9%, though a smaller gain than what we’ve seen on other cards, should still provide GTX 780 Ti with enough to keep the overclocked GPU well fed.
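To put the memory overclock in bandwidth terms, here is a rough sketch of the usual peak-bandwidth arithmetic. It assumes "7GHz" refers to the effective per-pin data rate (7Gbps) and uses the 384-bit bus mentioned earlier; the function name is our own, and the result is a theoretical peak rather than a measured figure.

```python
def gddr5_bandwidth_gbs(effective_rate_gbps, bus_width_bits):
    """Theoretical peak bandwidth in GB/s.

    Assumes every pin of the bus transfers `effective_rate_gbps` gigabits
    per second, then divides by 8 to convert bits to bytes.
    """
    return effective_rate_gbps * bus_width_bits / 8


BUS_WIDTH_BITS = 384  # GTX 780 Ti memory bus width, per the article

stock_bw = gddr5_bandwidth_gbs(7.0, BUS_WIDTH_BITS)
oc_bw = gddr5_bandwidth_gbs(7.6, BUS_WIDTH_BITS)
gain_pct = (oc_bw - stock_bw) / stock_bw * 100

print(f"Stock:       {stock_bw:.1f} GB/s")
print(f"Overclocked: {oc_bw:.1f} GB/s")
print(f"Gain:        {gain_pct:.1f}%")
```

Since the bus width is fixed, the bandwidth gain is the same in percentage terms as the memory clock gain.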

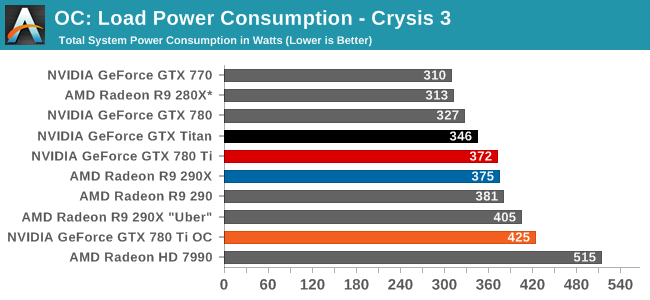

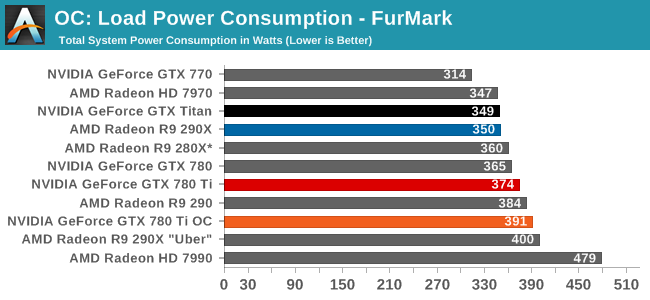

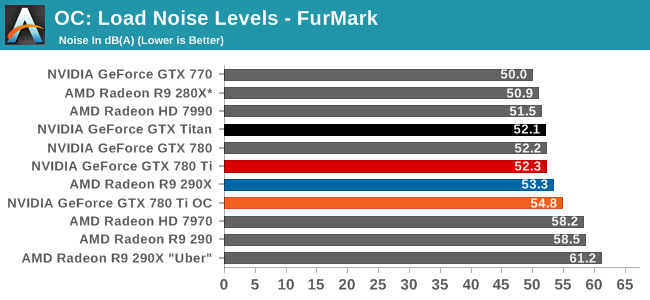

Starting as always with power, temperatures, and noise, we can see that overclocking GTX 780 Ti further increases its power consumption, and to roughly the same degree as what we’ve seen with GTX 780 and GTX Titan in the past. With a maximum TDP of just 106% (265W) the change isn’t so much that the card’s power limit has been significantly lifted – as indicated by FurMark – but rather raising the temperature limit virtually eliminates temperature throttling and as such allows the card to more frequently stay at its highest, most power hungry boost bins.

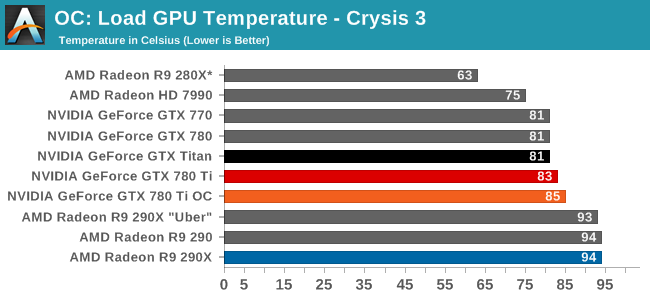

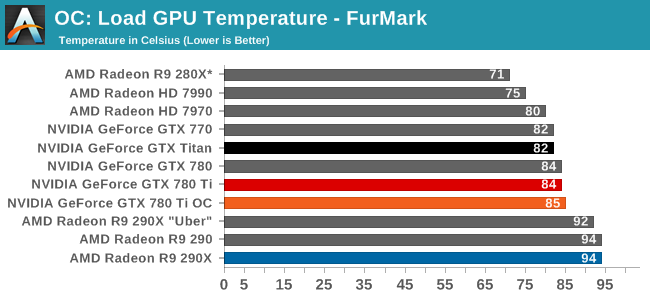

Despite the 95C temperature target we use for overclocking, the GTX 780 Ti finds its new equilibrium point at 85C. The fan will ramp up long before it allows us to get into the 90s.

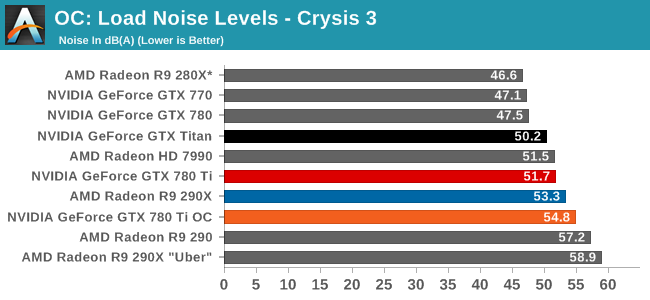

Given the power jump we saw with Crysis 3, the noise ramp-up is surprisingly decent. A 3dB rise in noise is going to be noticeable, but even in these overclocked conditions it will avoid being an ear-splitting change. That said, overclocking means we’re getting off of GK110’s standard noise efficiency curve, just as we are for power, so the cost will almost always outpace the payoff on a relative basis.
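For a rough sense of what a 3dB rise means physically, keep in mind that decibels are logarithmic. The sketch below converts a decibel difference into sound power and sound pressure ratios; it assumes the readings are ordinary sound pressure levels, the function names are our own, and it says nothing about perceived loudness, which depends on the listener and the character of the noise.

```python
def power_ratio(delta_db):
    """Ratio of sound power (or intensity) for a given decibel difference."""
    return 10 ** (delta_db / 10)


def pressure_ratio(delta_db):
    """Ratio of sound pressure amplitude for a given decibel difference."""
    return 10 ** (delta_db / 20)


rise_db = 3.0  # noise increase reported for the overclocked card

print(f"Sound power ratio:    {power_ratio(rise_db):.2f}x")
print(f"Sound pressure ratio: {pressure_ratio(rise_db):.2f}x")
```

In other words, a 3dB rise corresponds to roughly a doubling of acoustic power, even though it does not sound anywhere near twice as loud.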

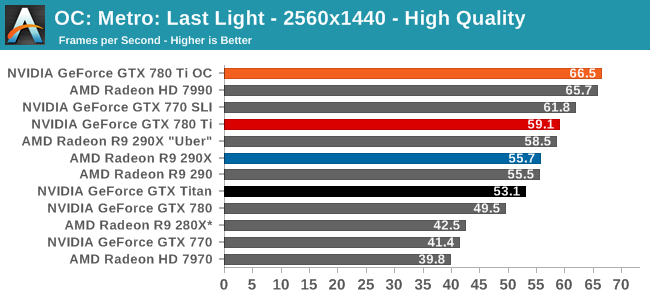

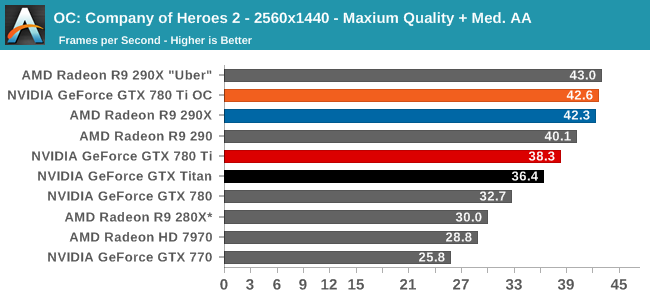

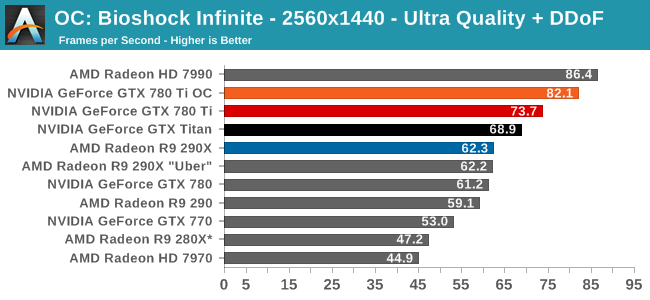

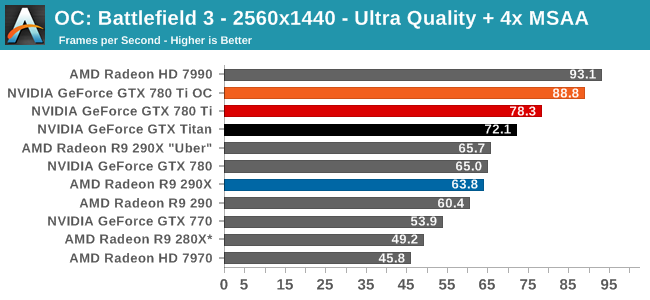

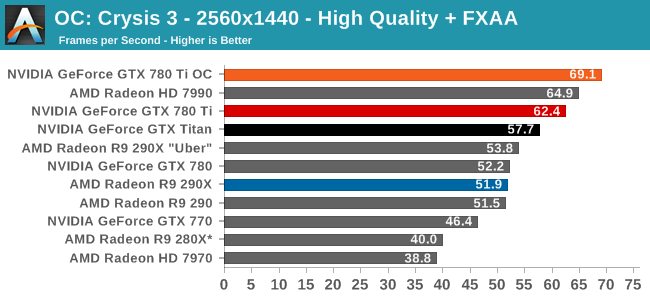

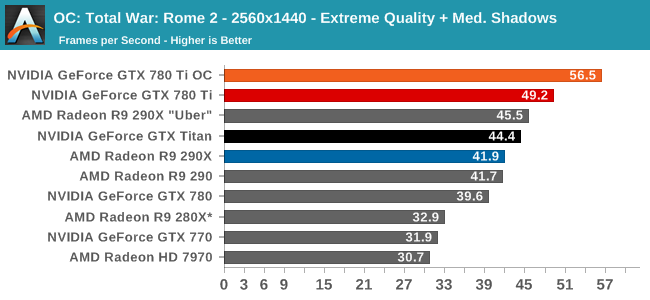

Finally, looking at gaming performance, the overall performance gains for overclocking are generally consistent. Between our 6 games we see a 10-14% performance increase, all in excess of the memory overclock and closely tracking the GPU overclock. GTX 780 Ti is already the fastest single-GPU card, so this only further improves its performance lead. But it does so while cutting into whatever is above it, be it the games where the stock 290X has a lead, or multi-GPU setups such as the 7990.

302 Comments

yuko - Monday, November 11, 2013 - link

for me neither of them is gamechanger ... gsync, shield ... nice stuff i don't need

mantle: another nice approach to create an semi-closed-standard .. it's not that directX or opengl is allready existing and working quite good, no , we need another low level standard where amd creates the api (and to be honest, they would be quite stupid not optimizing it for their hardware).

I cannot believe and hope that mantle will flop, it does no favor to customers and the industry. It's just good for the marketing but has no real world use.

Kamus - Thursday, November 7, 2013 - link

Nope, it's confirmed for every frostbite 3 game coming out, that's at least a dozen so far, not to mention it's also officially coming to starcitizen, which runs on cryengine 3 I believe.

But yes, even with those titles it's still a huge difference, obviously.

That said, you can expect that any engine optimized for GCN on consoles could wind up with mantle support, since the hard work is already done. And in the case of star citizen... Well, that's a PC exclusive, and it's still getting mantle.

StevoLincolnite - Thursday, November 7, 2013 - link

Mantle is confirmed for all Frostbite powered games.

That is, Battlefield 4, Dragon Age 3, Mirrors Edge 2, Need for Speed, Mass Effect, StarWars Battlefront, Plant's vs Zombies: Garden Warfare and probably others that haven't been announced yet by EA.

Star Citizen and Thief will also support Mantle.

So that's EA, Cloud Imperium Games, Square Enix that will support the API and it hasn't even released yet.

ahlan - Thursday, November 7, 2013 - link

And for Gsync you will need a new monitor with Gsync support. I won't buy a new monitor only for that.

jnad32 - Thursday, November 7, 2013 - link

http://ir.amd.com/phoenix.zhtml?c=74093&p=irol...

BOOM!

Creig - Friday, November 8, 2013 - link

Gsync will only work on Kepler and above video cards.

So if you have an older card, not only do you have to buy an expensive gsync capable monitor, you also need a new Kepler based video card as well. Even if you already own a Kepler video card, you still have to purchase a new gsync monitor which will cost you $100 more than an identical non-gsync monitor.

Whereas Mantle is a free performance boost for all GCN video cards.

Summary:

Gsync cost - Purchase new computer monitor +$100 for gsync module.

Mantle cost - Free performance increase for all GCN equipped video cards.

Pretty easy to see which one offers the better value.

neils58 - Sunday, November 10, 2013 - link

As you say Mantle is very exciting, but we don't know how much performance we are talking about yet. My thinking on saying that crossfire was AMD's only answer is that in order to avoid the stuttering effect of dropping below the Vsync rate, you have to ensure that the minimum framerate is much higher, which means adding more cards or turning down quality settings. If Mantle turns out to be a huge performance increase things might work out, but we just don't know.

Sure, TN isn't ideal, but people with gaming priorities will already be looking for monitors with low input lag, fast refresh rates and features like backlight strobing for motion blur reduction, G-Sync will basically become a standard feature on a brands lineup of gaming oriented monitors. I think it'll come down in price a fair bit too once there are a few competing brands.

It's all made things tricky for me, I'm currently on a 1920x1200 'VA monitor on a 5850 and was considering going up to a 1440p 27" screen (which would have required a new GPU purchase anyway) G-Sync adds enough value to Gaming TN's to push me over to them.

jcollett - Monday, November 11, 2013 - link

I've got a large 27" IPS panel so I understand the concern. However, a good high refresh panel need not cost very much and still look great. Check out the ASUS VG248QE; been hearing good things about the panel and it is relatively cheap at about $270. I assume it would work with the G-Sync but I haven't confirmed that myself. I'll be looking for reviews of Battlefield 4 using Mantle this December as that could makeup a big part of the decision on my next card coming from Team Green or Red.

misfit410 - Thursday, November 7, 2013 - link

I don't buy that it's a game changer, I have no intention of replacing my three Dell Ultrasharp monitors anytime soon, and even if I did I have no intention of dealing with buggy displayport as my only option to hook up a synced monitor.

Mr Majestyk - Thursday, November 7, 2013 - link

+1

I've got two high end Dell 27" monitors and it's a joke to think I'd swap them out for garbage TN monitors just to get G Sync.

I don't see the 780 Ti as being any skin off AMD's nose. It's much dearer for very small gains and we haven't seen the custom AMD boards yet. For now I'd probably get the R9 290, assuming custom boards can greatly improve on cooling and heat.