The AMD Radeon R9 290X Review

by Ryan Smith on October 24, 2013 12:01 AM EST

Posted in: GPUs, AMD, Radeon, Hawaii, Radeon 200

Synthetics

As always we’ll also take a quick look at synthetic performance. The 290X shouldn’t pack any great surprises here since it’s still GCN, and as such bound to the same general rules for efficiency, but we do have the additional geometry processors and additional ROPs to occupy our attention.

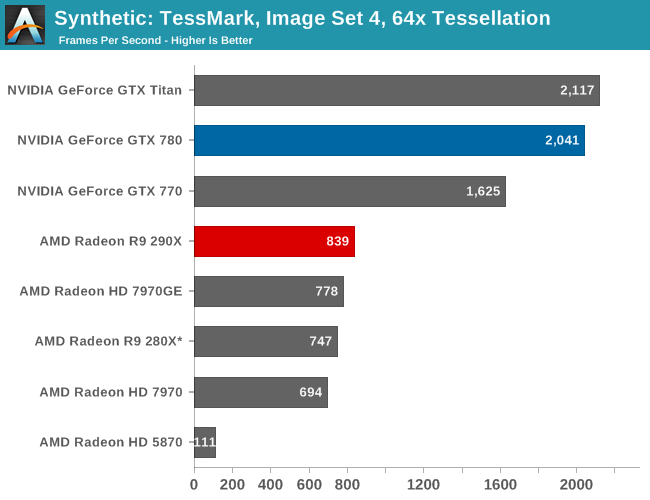

Right off the bat then, the TessMark results are something of a head scratcher. Whereas NVIDIA’s performance here has consistently scaled well with the number of SMXes, AMD is seeing minimal scaling from the additional geometry processors on Hawaii/290X. Clearly TessMark is striking another bottleneck on 290X beyond simple geometry throughput, though it’s not absolutely clear what that bottleneck is.

This is a tessellation-heavy benchmark as opposed to a simple massive geometry benchmark, so we may be seeing a tessellation bottleneck rather than a geometry bottleneck, as tessellation requires its own set of heavy lifting to generate the necessary control points. The 12% performance gain is much closer to the 11% memory bandwidth gain than anything else, so it may be that the 280X and 290X are having to go off-chip to store tessellation data (we are after all using a rather extreme factor), in which case it’s a memory bandwidth bottleneck. Real world geometry performance will undoubtedly be better than this, since thankfully for AMD this is the pathological tessellation case, but it does serve as a reminder of how much more tessellation performance NVIDIA is able to wring out of Kepler. That said, the nearly 8x increase in tessellation performance since the 5870 shows that AMD has at least come a long way in 4 years, and judging by the performance in our tessellation-enabled games, AMD doesn’t seem to be hurting for tessellation performance in the real world right now.

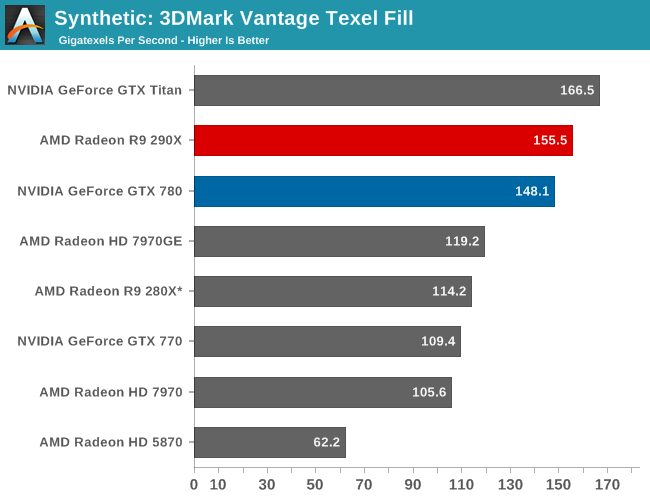

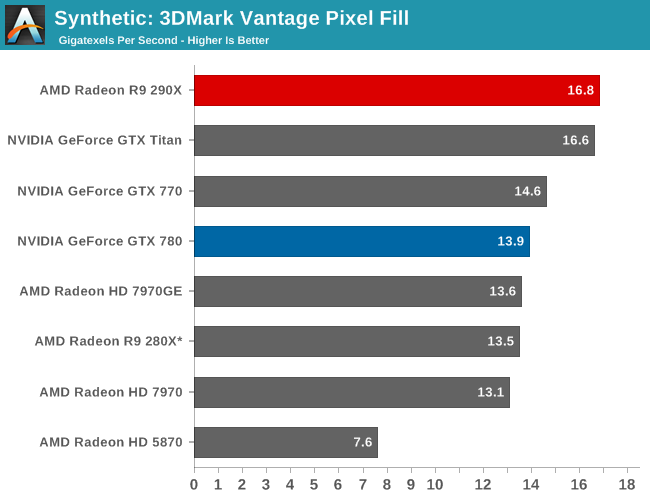

Moving on, we have our 3DMark Vantage texture and pixel fillrate tests, which present our cards with massive amounts of texturing and color blending work. These aren’t results we suggest comparing across different vendors, but they’re good for tracking improvements and changes within a single product family.

Looking first at texturing, performance is scaling essentially 1:1 with what the theoretical numbers say it should. The 36% gain in texturing performance over 280X is in line with the increased number of texture units, at the very least proving that 290X isn’t having any trouble feeding those additional texture units in this scenario.
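To make the "theoretical numbers" concrete, here is a minimal sketch of the peak texel rate calculation. The texture unit counts and boost clocks below are assumptions taken from the cards' public launch specifications, not figures stated on this page, and the function names are purely illustrative.

```python
# Assumed public launch specs (not taken from this page):
# texture unit count and boost clock in MHz.
SPECS = {
    "R9 280X": {"texture_units": 128, "clock_mhz": 1000},
    "R9 290X": {"texture_units": 176, "clock_mhz": 1000},
}


def texel_rate_gtexels(card):
    """Peak texel rate in Gtexels/s, assuming one texel per unit per clock."""
    spec = SPECS[card]
    return spec["texture_units"] * spec["clock_mhz"] / 1000.0


def theoretical_gain_percent(new, old):
    """Percentage increase in peak texel rate from `old` to `new`."""
    return (texel_rate_gtexels(new) / texel_rate_gtexels(old) - 1.0) * 100.0


gain = theoretical_gain_percent("R9 290X", "R9 280X")
print(f"Theoretical texturing gain: {gain:.1f}%")
```

Under these assumed specs the theoretical ceiling works out to 37.5%, so a measured 36% gain means the test is extracting nearly all of the added texturing throughput.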

Meanwhile for our pixel fill rates the results are a bit more in the middle, reflecting the fact that this test is a mix of ROP bottlenecking and memory bandwidth bottlenecking. Remember, AMD doubled the ROPs versus 280X, but only gave 290X 11% more memory bandwidth. As a result the ROPs’ ability to perform is going to depend in part on how well color compression works and what can be recycled in the L2 cache, as anything else means a trip to the VRAM and running into those lesser memory bandwidth gains. The 290X does get something of a secondary benefit here, though: unlike the 280X it doesn’t have to go through a memory crossbar and any inefficiencies or overhead that crossbar may add, since the number of ROPs and memory controllers is perfectly aligned on Hawaii.
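The interplay between ROP count and memory bandwidth can be illustrated with a deliberately simple model: the achievable fill rate is the lower of the ROP limit and the bandwidth limit. This is a rough sketch, not a description of how the benchmark or the hardware actually behaves. The ROP counts, clocks, and bandwidth figures are assumed from public launch specifications, the 8 bytes per pixel (a 4-byte read plus a 4-byte write for blending) is an illustrative assumption, and color compression and L2 cache hits are ignored entirely.

```python
def fill_rate_gpixels(rops, clock_ghz, bandwidth_gbs, bytes_per_pixel):
    """Simplified fill rate in Gpixels/s: the lower of two ceilings.

    rop_limit:       one pixel per ROP per clock.
    bandwidth_limit: pixels per second the memory bus can move if every
                     pixel costs `bytes_per_pixel` bytes of VRAM traffic.
    """
    rop_limit = rops * clock_ghz
    bandwidth_limit = bandwidth_gbs / bytes_per_pixel
    return min(rop_limit, bandwidth_limit)


# Assumed specs: (ROPs, clock in GHz, memory bandwidth in GB/s).
BYTES_PER_PIXEL = 8  # hypothetical: 4-byte read + 4-byte write per blended pixel
r9_280x = fill_rate_gpixels(32, 1.0, 288.0, BYTES_PER_PIXEL)
r9_290x = fill_rate_gpixels(64, 1.0, 320.0, BYTES_PER_PIXEL)

bandwidth_gain = (320.0 / 288.0 - 1.0) * 100.0
fill_gain = (r9_290x / r9_280x - 1.0) * 100.0
print(f"Memory bandwidth gain: {bandwidth_gain:.1f}%")
print(f"Modelled fill rate gain: {fill_gain:.1f}%")
```

In this toy model the 280X is limited by its ROPs while the 290X is limited by memory bandwidth, so the modelled gain falls between the 11% bandwidth increase and the 100% ROP increase. Real results will shift depending on how much traffic compression and caching keep off the memory bus.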

396 Comments


Spunjji - Friday, October 25, 2013 - link

Word.

extide - Thursday, October 24, 2013 - link

That doesn't mean that AMD can't come up with a solution that might even be compatible with G-Sync... Time will tell..

piroroadkill - Friday, October 25, 2013 - link

That would not be in NVIDIA's best interests. If a lot of machines (AMD, Intel) won't support it, why would you buy a screen for a specific graphics card? Later down the line, maybe something like the R9 290X comes out, and you can save a TON of money on a high performing graphics card from another team.

It doesn't make sense.

For NVIDIA, their best bet at getting this out there and making the most money from it, is licencing it.

Mstngs351 - Sunday, November 3, 2013 - link

Well it depends on the buyer. I've bounced between AMD and Nvidia (to be upfront I've had more Nvidia cards) and I've been wanting to step up to a larger 1440 monitor. I will be sure that it supports Gsync as it looks to be one of the more exciting recent developments.

So although you are correct that not a lot of folks will buy an extra monitor just for Gsync, there are a lot of us who have been waiting for an excuse. :P

nutingut - Saturday, October 26, 2013 - link

Haha, that would be something for the cartel office then, I figure.

elajt_1 - Sunday, October 27, 2013 - link

This doesn't prevent AMD from making something similiar, if Nvidia decides to not make it open.

hoboville - Thursday, October 24, 2013 - link

Gsync will require you to buy a new monitor. Dropping more money on graphics and smoothness will apply at the high end and for those with big wallets, but for the rest of us there's little point to jumping into Gsync.

In 3-4 years when IPS 2560x1440 has matured to the point where it's both mainstream (cheap) and capable of delivering low-latency ghosting-free images, then Gsync will be a big deal, but right now only a small percentage of the population have invested in 1440p.

The fact is, most people have been sitting on their 1080p screens for 3+ years and probably will for another 3 unless those same screens fail--$500+ for a desktop monitor is a lot to justify. Once the monitor upgrades start en mass, then Gsync will be a market changer because AMD will not have anything to compete with.

misfit410 - Thursday, October 24, 2013 - link

G-String didn't kill anything, I'm not about to give up my Dell Ultrasharp for another Proprietary Nvidia tool.

anubis44 - Tuesday, October 29, 2013 - link

Agreed. G-sync is a stupid solution to non-existent problem. If you have a fast enough frame rate, there's nothing to fix.

MADDER1 - Thursday, October 24, 2013 - link

Mantle could be for either higher frame rate or more detail. Gsync sounds like just frame rate.