AMD Announces TrueAudio Technology For Upcoming GPUs

by Ryan Smith on September 25, 2013 7:30 PM EST

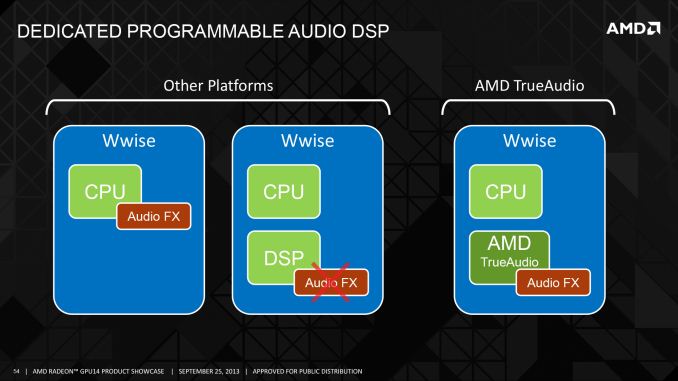

As part of today’s public session for AMD’s 2014 GPU product showcase, AMD has announced a new audio technology, dubbed TrueAudio, for some of their upcoming GPUs. Although technical details are light at this time – more is certainly to come under NDA – what AMD is describing would be consistent with them having integrated some form of audio DSP into their relevant GPUs.

The inclusion of an audio DSP comes at an interesting time for the industry. The launch of the next generation consoles has afforded everyone the chance to make significant technology changes, as the consoles and the realities of multi-platform game publishing meant that many developers stuck with a least common denominator on input, graphics, and audio. For PC game audio this meant that most audio was implemented entirely in software, just as it was with the consoles.

This also coincides with significant changes to the Windows audio stack that came with Windows Vista. Vista overhauled the stack: after years of bad experiences with audio hardware and dodgy drivers for low-end audio chips that implemented most of their functionality in software, Microsoft outright moved the bulk of the audio stack into user space, i.e. into software. This vastly improved the audio stack’s stability and baseline features; however, in doing so it cut off hardware audio acceleration of the principal 3D audio API of the time, DirectSound 3D.

But with the new consoles and Windows 8, the opportunity has arisen for changes to how audio is handled, and this is what AMD is seeking to capitalize on.

Audio DSPs are nothing new, with pioneers such as Creative Labs and Aureal jump-starting the market for those back in the late 90s. But due to the aforementioned issues they haven’t been a serious market since the launch of Creative Labs’ X-Fi back in 2005. Consequently what AMD is going to be doing here – offloading audio processing to their DSP to take advantage of the greater capabilities of task-dedicated hardware – isn’t itself new. But this is the first serious effort on the subject since 2005.

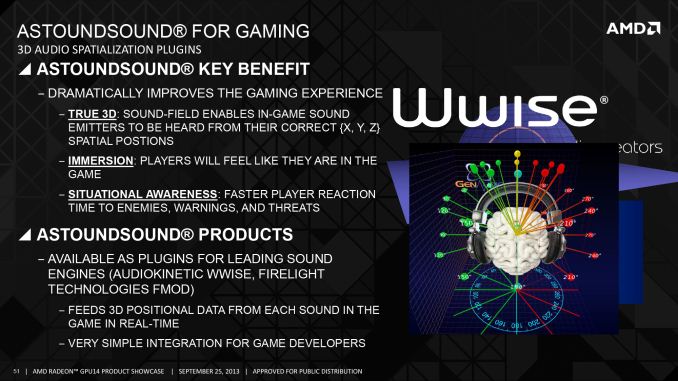

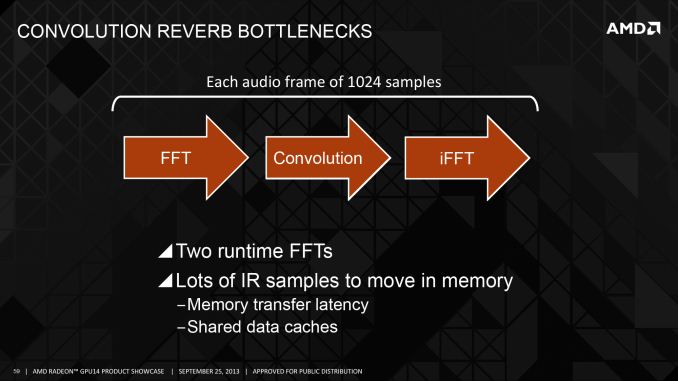

The advantages of utilizing the DSP are fairly straightforward. Simple audio calculations are cheap, and even simple 3D effects such as panning and precomputed reverb can be done similarly cheaply, but real-time reflections, reverb, and 3D transformations are expensive. Running the calculations to provide 3D audio over headphones and 2.1 speakers, or phantom speakers and above/below audio positioning in 5.1 setups is all very expensive. And for these reasons these effects aren’t used in current generation games. These are the kinds of effects AMD wants to bring (back) to PC gaming.
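To put rough numbers on that cost gap, here is a minimal sketch of our own; it is not anything AMD has described. It compares a constant-power stereo pan with a naive direct convolution reverb. The 48 kHz sample rate, the one-second impulse response, and all function names are assumptions chosen for illustration, and real engines typically use FFT-based convolution, which is far cheaper than the direct form counted here.

```python
import math

SAMPLE_RATE = 48_000   # assumed sample rate in Hz
IR_SECONDS = 1.0       # assumed reverb impulse response length


def constant_power_pan(sample, azimuth_deg):
    """Pan a mono sample to (left, right) with a constant-power law.

    azimuth_deg runs from -90 (hard left) to +90 (hard right).
    Cost: two multiplies per sample.
    """
    theta = (azimuth_deg + 90.0) / 180.0 * (math.pi / 2.0)
    return sample * math.cos(theta), sample * math.sin(theta)


def convolve(signal, impulse_response):
    """Naive direct convolution: one multiply-add per (sample, tap) pair."""
    out = [0.0] * (len(signal) + len(impulse_response) - 1)
    for i, s in enumerate(signal):
        for j, h in enumerate(impulse_response):
            out[i + j] += s * h
    return out


def direct_reverb_macs_per_second(sample_rate, ir_seconds):
    """Multiply-adds per second for one channel of direct convolution."""
    taps = int(sample_rate * ir_seconds)
    return sample_rate * taps


pan_cost = 2 * SAMPLE_RATE
reverb_cost = direct_reverb_macs_per_second(SAMPLE_RATE, IR_SECONDS)

print(f"pan:    {pan_cost:,} multiplies per second")
print(f"reverb: {reverb_cost:,} multiply-adds per second, per channel")
```

Under these assumptions the pan needs 96,000 multiplies per second, while the direct reverb needs roughly 2.3 billion multiply-adds per second for a single channel and a single source, before any per-listener 3D transformation is applied.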

The challenge for AMD is that they’re going to need to get developers on board to utilize the technology, something that was a continual problem for Aureal and Creative. We don’t know just how the consoles will compare – we know the XB1 has its own audio DSPs, we know less about the PS4 – but in the PC space this would be an AMD-exclusive feature, which means the majority of enthusiast gamers (who historically have been NVIDIA equipped) will not be able to access this technology.

To jump ahead of that, AMD is already forging relationships with the most important firms in the PC gaming audio space: the audio middleware providers. AMD is working very closely with audio firm GenAudio of AstoundSound fame, who in turn has developed audio engines utilizing the TrueAudio DSP. To jumpstart the process, GenAudio will be releasing plugins for the common PC audio middleware: Firelight Technologies’ FMOD and Audiokinetic’s Wwise. AMD is also working with Audiokinetic directly towards the same goal.

AMD is also approaching game developers directly on this matter. Eidos has pledged support in their upcoming Thief game, and newcomer Xaviant has pledged support in their in-development magical loot game, Lichdom. All of this will of course be available to anyone using the Wwise or FMOD audio engines.

It bears mentioning that AMD’s audio DSP is not part of a stand-alone audio card; rather, it’s a dedicated processor created so that developers can take advantage of the hardware to process their audio, and then pass the result back to the sound card for presentation. This means that the audio DSP can be utilized regardless of the audio output method used – speakers, headphones, TVs via HDMI, etc – but it also means that developers need to actively include support for TrueAudio to use it. This won’t allow 5.1 audio to headphone downmixing for existing software, for example. Developers will at a minimum need to patch in support or design it into future games.

Wrapping things up, I had a chance to briefly try Xaviant’s Lichdom audio demo, which is already TrueAudio enabled. As someone who’s already a headphones-only gamer, this ended up being more impressive than any game/demo I’ve tried in the past. Xaviant has positional audio down very well – at least as good as Creative’s CMSS3D tech – and elevation effects were clearly better than anything I’ve heard previously. They’re also making heavy use of reverb, to the point where it’s being overdone for effect, but what’s there works very well.

To be clear, nothing here is really groundbreaking; it’s merely a better implementation of existing ideas on positioning and reverb. But after a span of several years in which PC audio failed to advance (if not regressed), it is a welcome change to once again see positional audio and advanced audio processing taken seriously.

We’ll have more information on TrueAudio later on as AMD releases more details on the technology and what software will be using it.

62 Comments


IanCutress - Thursday, September 26, 2013 - link

Not everyone has an IGP :P

whyso - Thursday, September 26, 2013 - link

Virtually every desktop chip intel sells has an igp. Every mobile chip amd or intel sells does. Many of AMD's desktop chips do. An igp in a system is much more prevalent than a special piece of hardware in the GPU. Plus that igp tends to sit idle.

nezuko - Thursday, September 26, 2013 - link

Oh, believe me when I'm saying AMD will put that TrueAudio on their Next-gen APU to attract Intel user and nvidia user.

Wolfpup - Wednesday, October 2, 2013 - link

Ooooh there's an interesting idea. Yeah, I'm always pissed off that CPUs are wasting gigantic amount of die area on terrible video I don't want. We could get at least one extra core if I'm remembering right, on Sandy Bridge? Probably worse now. Use that for SOMETHING lol

moozoo - Thursday, September 26, 2013 - link

I actually think the audio dsp would have made sense if the dsp chip had gone into the xbox one, the ps4 and on APU's (i.e. for pc's and steamboxes). At this point they could have included near hardware level audio in Mantle and have a uniform api across all the platforms. However as I understand it the dsp chip isn't in any of these.

i'm not here - Thursday, September 26, 2013 - link

If AMD gets developers to write for it then it has a chance. Echo/reverb are poor examples even if they take processing power. Positioning and staging would be a better focus to make things more realistic. [I remember 'SoundStorm.' Still a better sound than most of today's onboard sound chips.]

Daniel Egger - Thursday, September 26, 2013 - link

There are other very good reasons for doing it in hardware and next to the GPU: latency (for calculating the sound and effects), jitter and A/V sync offset. Interestingly all gamers seem to care for lowest display response times but no one seems to care that the audio actually matches the video...

risa2000 - Thursday, September 26, 2013 - link

I wanted to write exactly this. Also, if the graphics card misses a frame (or two) because of heavy scene, few people will notice. However if the audio misses the frame everyone will immediately hear it.

boomie - Thursday, September 26, 2013 - link

Maybe I will be able to get a positional sound that isn't a load of crap. Every single game with camera controls right now is doing the thing where if the sound source is exactly 90° to the left of the camera, the sound only comes from left channel with nothing on the right. How the hell this passes for acceptable and why I'm the only one noticing this I'm not sure.

c4keislie - Thursday, September 26, 2013 - link

As someone who is 75% deaf in one ear (and a whole lot of distortion in what I do hear) I definitely notice the 90 degree sound source issue. Scripted sequences where someone is talking and you have no control over where you are looking are the worst, as I sometimes have to turn my headphones around (L -> R, R -> L) just to hear the conversation.