Memory Scaling on Haswell CPU, IGP and dGPU: DDR3-1333 to DDR3-3000 Tested with G.Skill

by Ian Cutress on September 26, 2013 4:00 PM EST

The activity cited most often as benefiting from faster memory is IGP gaming. As shown in both of our tests of Crystalwell (4950HQ in CRB, 4750HQ in Clevo W740SU), Intel’s version of Haswell with the 128MB of L4 cache, having big and fast memory seems to help in almost all scenarios, especially when there is access to more and more compute units. In order to pinpoint exactly where the memory helps, we are reporting both average and minimum frame rates from the benchmarks, using the latest Intel drivers available. All benchmarks are run at 1360x768 due to monitor limitations, which also gives more relevant frame rate numbers.
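As a rough illustration of the two metrics reported on this page, here is a minimal Python sketch that derives an average and a minimum frame rate from a list of per-frame render times. The function name `fps_summary` and the sample frame times are hypothetical, and the benchmarks used here may compute their figures differently (for example, from per-second samples rather than single frames).

```python
def fps_summary(frame_times_ms):
    """Return (average_fps, minimum_fps) from per-frame render times in milliseconds."""
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    # Average FPS is total frames divided by total elapsed time,
    # not the mean of the per-frame instantaneous rates.
    total_seconds = sum(frame_times_ms) / 1000.0
    average_fps = len(frame_times_ms) / total_seconds
    # The slowest single frame sets the minimum instantaneous rate.
    minimum_fps = 1000.0 / max(frame_times_ms)
    return average_fps, minimum_fps


# Hypothetical frame times, for illustration only (not measured data).
sample_frame_times_ms = [50.0, 50.0, 100.0, 50.0]
average, minimum = fps_summary(sample_frame_times_ms)
print(f"average {average:.1f} fps, minimum {minimum:.1f} fps")
```

A single slow frame pulls the minimum down sharply while barely moving the average, which is one reason minimum frame rates tend to be noisier between runs.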

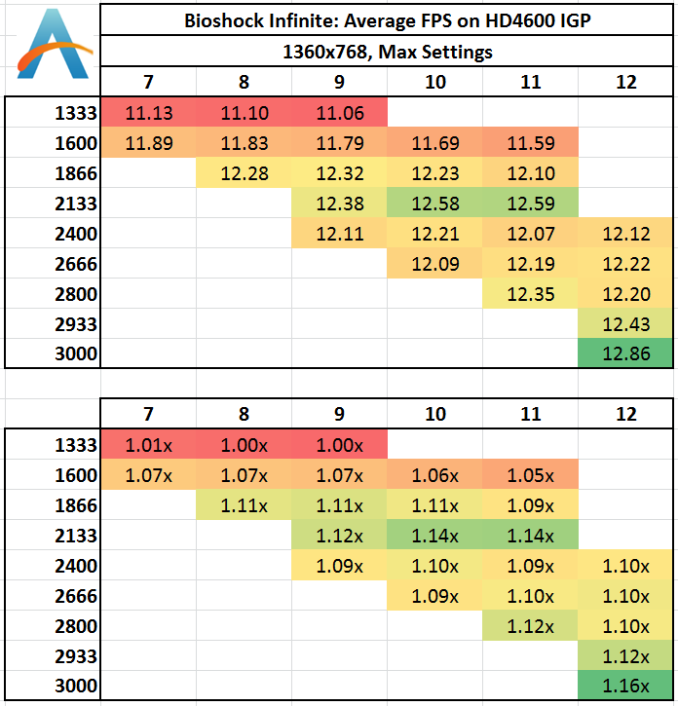

Bioshock Infinite: Average FPS

Average frame rates for Bioshock Infinite show a distinct well at 1333 MHz. Moving up to 1600 MHz gives a healthy 4-6% boost, and moving again to 1866 MHz adds a few more percent. After that point the benefits tend to flatten out. There is another bump after 2800 MHz, but it might not be cost effective, especially when using the IGP.
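To put the scaling in context, the sketch below compares the theoretical peak bandwidth of two memory speeds with a relative frame rate gain. It assumes a dual-channel configuration with a 64-bit (8-byte) bus per channel, which is standard for DDR3 but not stated in this section. The function names and the 20 to 21 fps figures are purely illustrative, not results from the charts.

```python
def peak_bandwidth_gbs(transfer_rate_mts, channels=2, bus_bytes=8):
    """Theoretical peak bandwidth in GB/s for a DDR memory configuration.

    transfer_rate_mts: data rate in MT/s (the "1333" in DDR3-1333).
    channels and bus_bytes are assumptions: dual channel, 64-bit bus per channel.
    """
    return transfer_rate_mts * 1e6 * bus_bytes * channels / 1e9


def percent_gain(baseline, new):
    """Relative improvement of `new` over `baseline`, in percent."""
    return (new - baseline) / baseline * 100.0


bw_1333 = peak_bandwidth_gbs(1333)
bw_1600 = peak_bandwidth_gbs(1600)
print(f"DDR3-1333: {bw_1333:.1f} GB/s, DDR3-1600: {bw_1600:.1f} GB/s")
print(f"bandwidth gain: {percent_gain(bw_1333, bw_1600):.1f}%")

# Hypothetical frame rates chosen to sit inside the 4-6% range quoted above.
print(f"frame rate gain: {percent_gain(20.0, 21.0):.1f}%")
```

Under these assumptions the step from 1333 to 1600 raises peak bandwidth by roughly 20%, so a 4-6% frame rate gain suggests the workload is only partly bandwidth bound.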

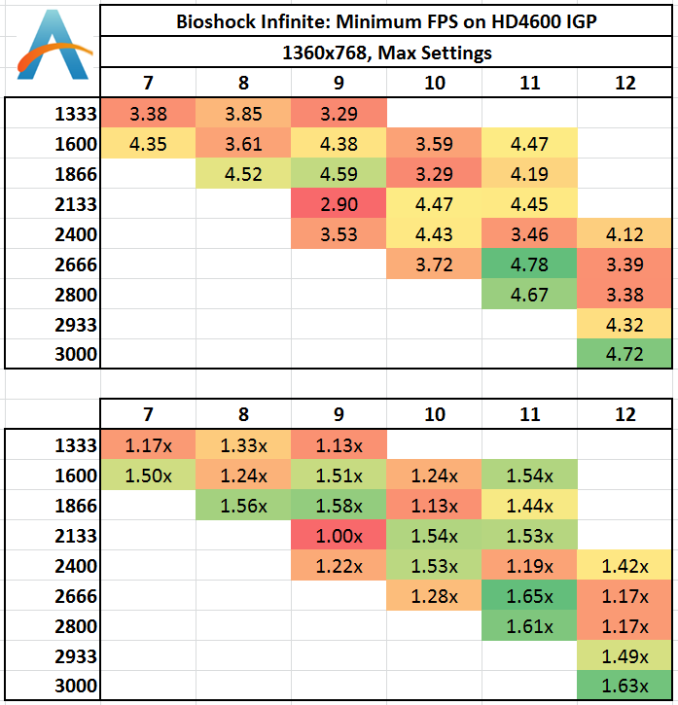

Bioshock Infinite: Minimum FPS

Unfortunately, minimum frame rates for Bioshock Infinite are a little all over the place – we see this in both of our dGPU tests as well, which suggests the issue lies more with the title itself than with the hardware.

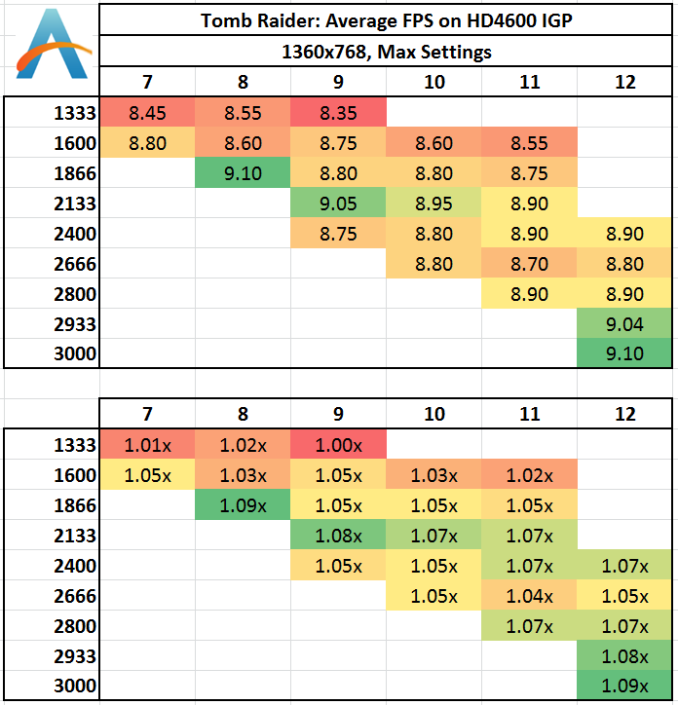

Tomb Raider: Average FPS

Similar to Bioshock Infinite, there is a distinct well at 1333 MHz memory. Moving to 1866 MHz makes the problem go away, and as the memory speed rises we get another noticeable bump over 2800 MHz.

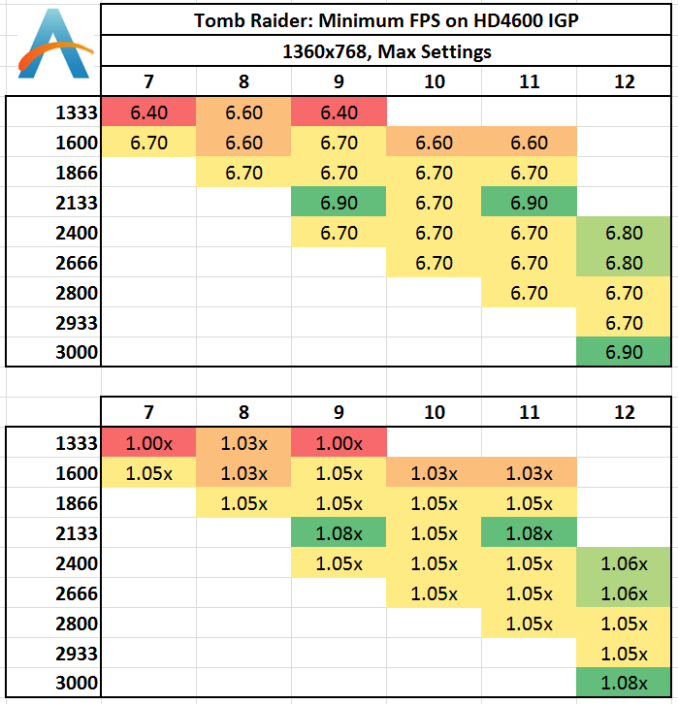

Tomb Raider: Minimum FPS

The minimum frame rates still show that hole at 1333 MHz, but everything over 1866 MHz gets away from it.

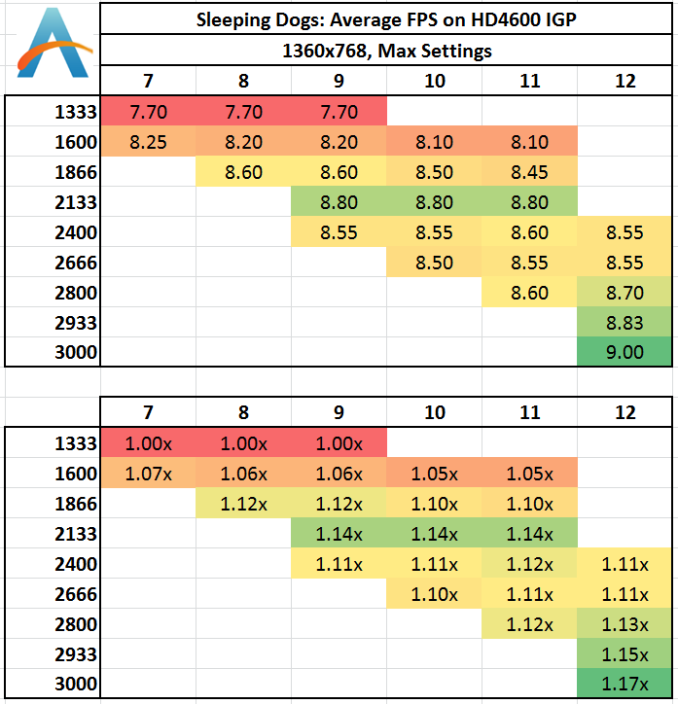

Sleeping Dogs: Average FPS

Sleeping Dogs seems to love memory – 1333 MHz is a dud, and while 1866 MHz still does well, 2133 MHz is the real sweet spot. CL seems to make no difference. After 2133 MHz the numbers take a small dive, but they are back up again by 2933 MHz.

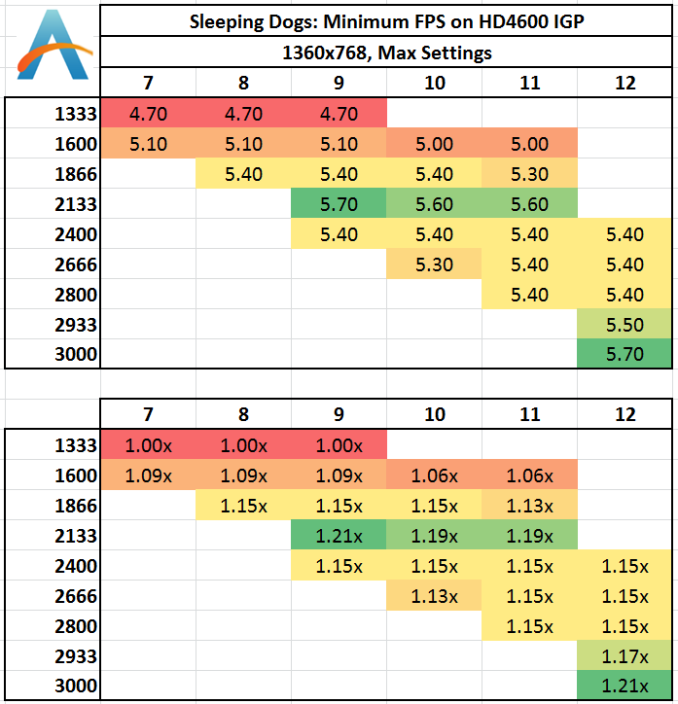

Sleeping Dogs: Minimum FPS

Like the average frame rates, it seems that 1333 MHz is a bust, 1866 MHz+ does the business, and 2133 MHz is the sweet spot.

89 Comments

MrSpadge - Thursday, September 26, 2013 - link

Is your HDD scratching because you're running out of RAM? Then an upgrade is worth it, otherwise not.

nevertell - Thursday, September 26, 2013 - link

Why does going from 2933 to 3000, with the same latencies, automatically make the system run slower on almost all of the benchmarks? Is it because of the ratio between cpu, base and memory clock frequencies?

IanCutress - Thursday, September 26, 2013 - link

Moving to the 3000 MHz setting doesn't actually move to the 3000 MHz strap - it puts it on 2933 and adds a drop of BCLK, meaning we had to drop the CPU multiplier to keep the final CPU speed (BCLK * multi) constant. At 3000 MHz though, all the subtimings in the XMP profile are set by the SPD. For the other MHz settings, we set the primaries, but we left the motherboard system on auto for secondary/tertiary timings, and it may have resulted in tighter timings under 2933. There are a few instances where the 3000 kit has a 2-3% advantage, a couple where it's at a disadvantage, but the rest are around about the same (within some statistical variance).

Ian
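The arithmetic behind that reply can be sketched as follows. The numbers are assumptions for illustration: the 2933 strap is modeled as a memory ratio of 29.33 at a 100 MHz BCLK, and the 4000 MHz CPU target is hypothetical, since the thread does not state the clock that was used. The function names are likewise made up for this sketch.

```python
def bclk_for_memory_target(target_memory_mhz, memory_ratio):
    """BCLK (MHz) needed to reach a memory speed on a fixed memory ratio."""
    return target_memory_mhz / memory_ratio


def nearest_multiplier(target_cpu_mhz, bclk_mhz):
    """Closest whole CPU multiplier for a target CPU speed at a given BCLK."""
    return round(target_cpu_mhz / bclk_mhz)


MEMORY_RATIO_2933 = 29.33   # assumed: 2933 strap at a 100 MHz BCLK
TARGET_CPU_MHZ = 4000.0     # hypothetical CPU target, not from the article

# Reaching 3000 MHz on the 2933 strap needs a slightly raised BCLK.
bclk = bclk_for_memory_target(3000.0, MEMORY_RATIO_2933)

# With a higher BCLK, the multiplier must come down to hold the CPU speed.
multiplier = nearest_multiplier(TARGET_CPU_MHZ, bclk)
cpu_mhz = bclk * multiplier

print(f"BCLK {bclk:.2f} MHz, multiplier x{multiplier}, CPU {cpu_mhz:.0f} MHz")
```

Because multipliers are whole numbers, the CPU speed can only be held approximately constant, which may account for part of the small differences seen at the 3000 MHz setting.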

mikk - Thursday, September 26, 2013 - link

What a stupid nonsense these iGPU Benchmarks. Under 10 fps, are you serious? Do it with some usable fps and not in a slide show.

MrSpadge - Thursday, September 26, 2013 - link

Well, that's the reality of gaming on these iGPUs in low "HD" resolution. But I actually agree with you: running at 10 fps is just not realistic and hence not worth much.

The problem I see with these benchmarks is that at maximum detail settings you're putting an emphasis on shaders. By turning details down you'd push more pixels and shift the balance towards needing more bandwidth to achieve just that. And since in any real world situation you'd see >30 fps, you ARE pushing more pixels in these cases.

RYF - Saturday, September 28, 2013 - link

The purpose was to put the iGPU under strain and explore the impact of faster memory on performance.

You seriously have no idea...

MrSpadge - Thursday, September 26, 2013 - link

Your benchmark choices are nice, but I've seen quite a few "real world" applications which benefit far more from high-performance memory:

- matrix inversion in Matlab (Intel MKL), probably in other languages / libs too

- crunching Einstein@Home (BOINC) on all 8 threads

- crunching Einstein@Home on 7 threads and 2 Einstein@Home tasks on the iGPU

- crunching 5+ POEM@Home (BOINC) tasks on a high end GPU

It obviously depends on the user how real the "real world" applications are. For me they are far more relevant than my occasional game, which is usually fast enough anyway.

MrSpadge - Thursday, September 26, 2013 - link

Edit: in fact, I have set a maximum of 31 fps in PrecisionX for my nVidia, so that the games don't eat up too much crunching time ;)

Oscarcharliezulu - Thursday, September 26, 2013 - link

Yep it'd be interesting to understand where extra speed does help, e.g. database, j2ee servers, cad, transactional systems of any kind, etc. Otherwise great read and a great story idea, thanks.

willis936 - Thursday, September 26, 2013 - link

SystemCompute - 2D Ex CPU 1600CL10. Nice.