Memory Scaling on Haswell CPU, IGP and dGPU: DDR3-1333 to DDR3-3000 Tested with G.Skill

by Ian Cutress on September 26, 2013 4:00 PM EST

One of the touted benefits of Haswell is the compute capability afforded by the IGP. For anyone using DirectCompute or C++ AMP, the compute units of the HD 4600 can be exploited as easily as any discrete GPU, although efficiency might come into question. As shown in some of the benchmarks below, some of our computational software runs faster on the IGP than on the CPU (particularly in the highly multithreaded scenarios).

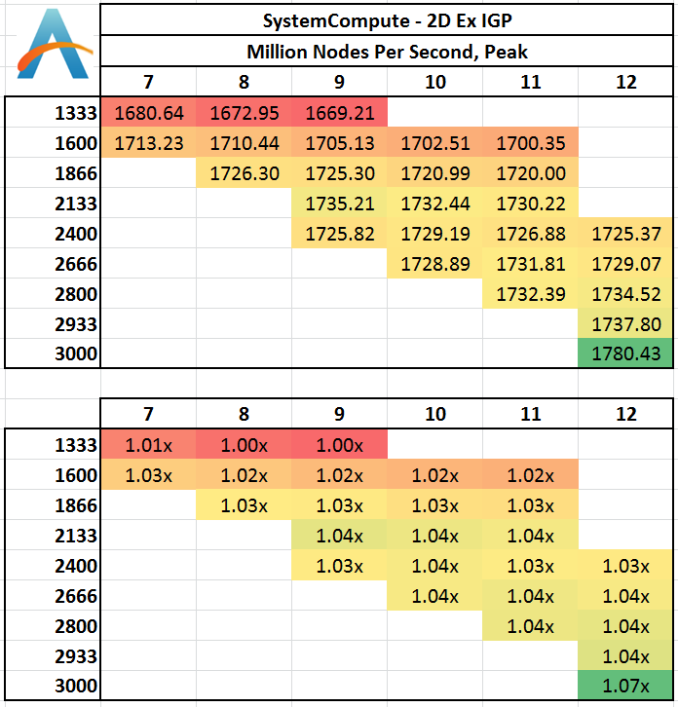

Grid Solvers - Explicit Finite Difference on IGP

As before, we test both 2D and 3D explicit finite difference simulations with 2^n nodes in each dimension, using OpenMP as the threading operator in single precision. The grid is isotropic and the boundary conditions are sinks. We iterate through a series of grid sizes, and results are shown in terms of ‘million nodes per second’, where the peak value is given in the results; higher is better.
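To make the workload concrete, here is a minimal sketch of a 2D explicit finite difference step with sink boundaries and a nodes-per-second measurement. It is not the benchmark code: the function names, the coefficient `alpha`, the point-source starting condition and the step count are all assumptions chosen for illustration, and Python floats are double precision rather than the single precision used in the test.

```python
import time


def step_2d(grid, alpha=0.1):
    """Advance a square grid by one explicit finite-difference step.

    Interior nodes use the 5-point stencil with the same coefficient in
    both directions (isotropic). Edge nodes are held at zero, which is one
    way to model sink boundaries. alpha must stay at or below 0.25 for
    stability in 2D.
    """
    n = len(grid)
    new = [[0.0] * n for _ in range(n)]  # edges stay at zero (sinks)
    for i in range(1, n - 1):
        for j in range(1, n - 1):
            laplacian = (grid[i - 1][j] + grid[i + 1][j]
                         + grid[i][j - 1] + grid[i][j + 1]
                         - 4.0 * grid[i][j])
            new[i][j] = grid[i][j] + alpha * laplacian
    return new


def million_nodes_per_second(exponent, steps=10):
    """Time `steps` updates on a grid with 2**exponent nodes per side."""
    n = 2 ** exponent
    grid = [[0.0] * n for _ in range(n)]
    grid[n // 2][n // 2] = 1.0  # hypothetical start: one point source
    start = time.perf_counter()
    for _ in range(steps):
        grid = step_2d(grid)
    elapsed = time.perf_counter() - start
    return n * n * steps / elapsed / 1e6


# Sweep a few grid sizes and keep the peak, mirroring how results are reported.
peak = max(million_nodes_per_second(e) for e in range(3, 7))
print(f"peak: {peak:.2f} million nodes per second")
```

Each node update reads four neighbours and writes one value while doing very little arithmetic, which is one plausible reason a stencil like this would respond to memory speed more than the other tests on this page.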

Two Dimensional:

The results on the IGP are 50% higher than those on the CPU, and it would seem that memory can make a difference as well. As long as 1333 MHz is not chosen, there is at least a 2% gain to be had. Otherwise, the next jump up is at 2666 MHz for another 2%, which might not be cost effective.

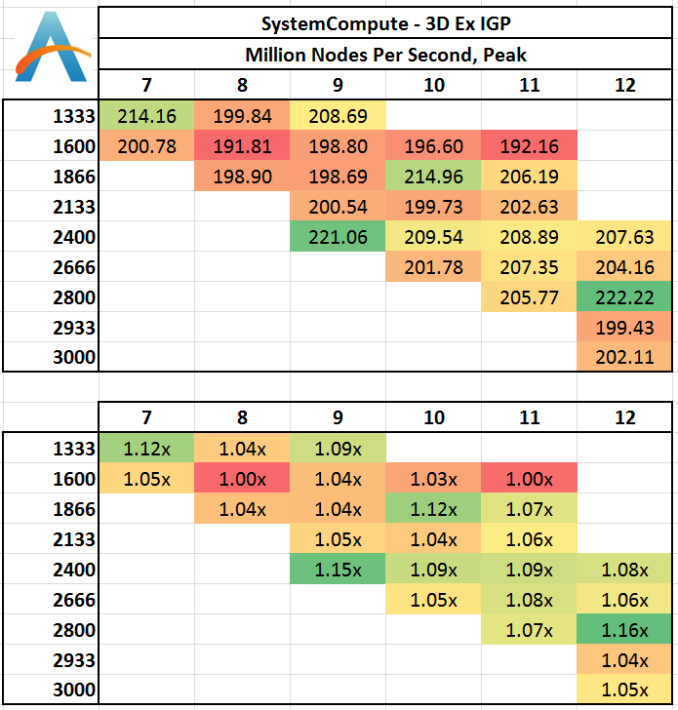

Three Dimensional:

The 3D results seem to be a little haphazard, with 1333 C7 and 2400 C9 both performing well. 1600 C11 is definitely out of the running, while anything at 2400 MHz or above affords a benefit of around 10%.

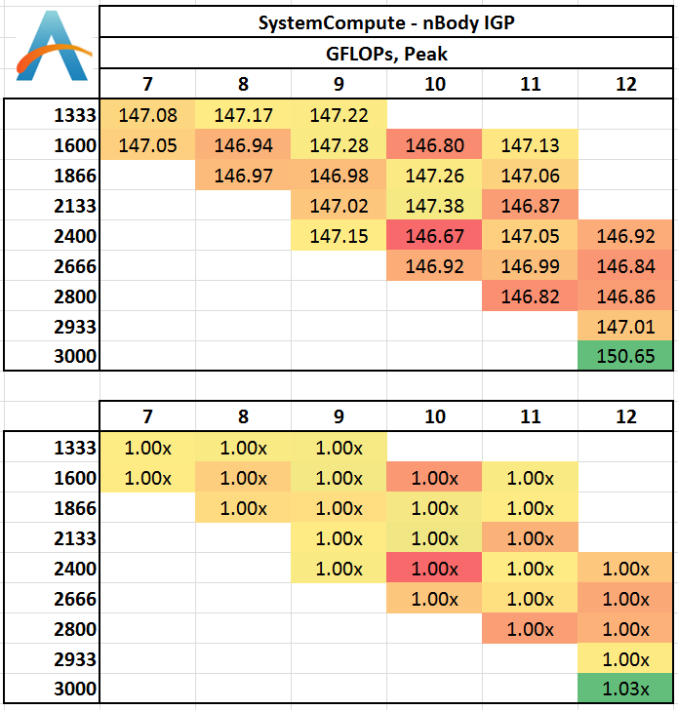

N-Body Simulation on IGP

As with the CPU compute, we run a simulation of 10240 particles of equal mass - the output for this code is in terms of GFLOPs, and the result recorded was the peak GFLOPs value.
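For readers unfamiliar with how a GFLOPs figure falls out of an n-body run, the following is a rough sketch under stated assumptions. The all-pairs kernel, the softening term, the unit masses with G = 1, and the nominal 20 floating point operations per interaction are illustrative choices, not details taken from the benchmark, and the particle count is kept far below 10240 so that plain Python finishes quickly.

```python
import math
import random
import time

FLOPS_PER_INTERACTION = 20  # nominal count, an assumption for this sketch


def accelerations(positions, softening=1e-3):
    """All-pairs gravitational acceleration for equal unit masses (G = 1)."""
    n = len(positions)
    acc = []
    for i in range(n):
        xi, yi, zi = positions[i]
        ax = ay = az = 0.0
        for j in range(n):
            if i == j:
                continue
            dx = positions[j][0] - xi
            dy = positions[j][1] - yi
            dz = positions[j][2] - zi
            # softening avoids division by zero for near-coincident particles
            r2 = dx * dx + dy * dy + dz * dz + softening
            inv_r3 = 1.0 / (r2 * math.sqrt(r2))
            ax += dx * inv_r3
            ay += dy * inv_r3
            az += dz * inv_r3
        acc.append((ax, ay, az))
    return acc


def measure_gflops(n_particles, seed=0):
    """Time one force evaluation and convert it to a nominal GFLOPs figure."""
    rng = random.Random(seed)
    positions = [(rng.random(), rng.random(), rng.random())
                 for _ in range(n_particles)]
    start = time.perf_counter()
    accelerations(positions)
    elapsed = time.perf_counter() - start
    interactions = n_particles * (n_particles - 1)
    return FLOPS_PER_INTERACTION * interactions / elapsed / 1e9


print(f"{measure_gflops(256):.4f} GFLOPs (nominal)")
```

The work grows with the square of the particle count while the data grows only linearly, so a kernel of this shape does many operations per byte fetched.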

As a workload measured in FLOPs, this test does not seem to be affected by memory speed.

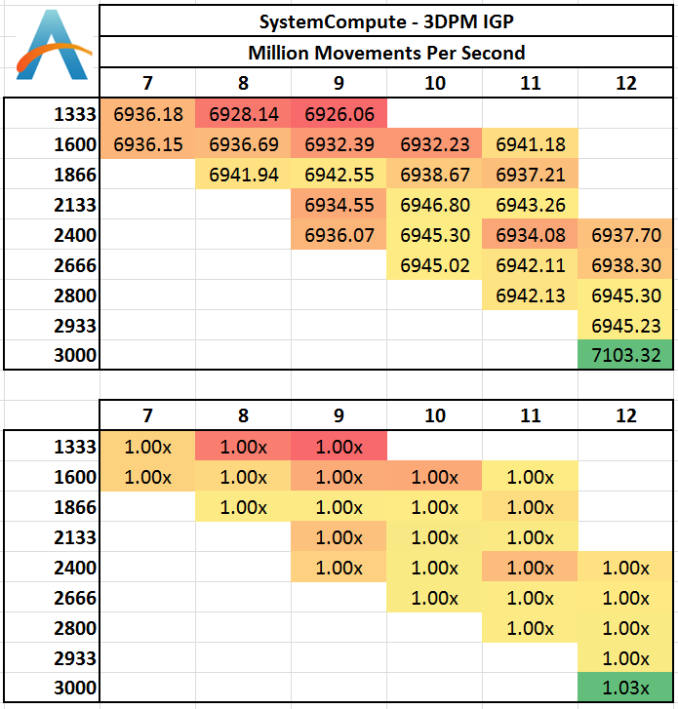

3D Particle Movement on IGP

Similar to our CPU Compute algorithm, we calculate the random motion in 3D of free particles involving random number generation and trigonometric functions. For this application we take the fastest true-3D motion algorithm and test a variety of particle densities to find the peak movement speed. Results are given in ‘million particle movements calculated per second’, and a higher number is better.
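As an illustration of what a random 3D movement step involving random numbers and trigonometric functions can look like, here is a small sketch. The sampling scheme (uniform height plus uniform azimuth), the unit step length and the function names are assumptions for this example; the benchmark's own movement algorithms are not described here.

```python
import math
import random
import time


def random_unit_step(rng):
    """Pick a direction uniformly on the unit sphere using trig functions."""
    z = rng.uniform(-1.0, 1.0)              # uniform height on the sphere
    phi = rng.uniform(0.0, 2.0 * math.pi)   # uniform azimuth angle
    r = math.sqrt(max(0.0, 1.0 - z * z))    # radius of the circle at height z
    return r * math.cos(phi), r * math.sin(phi), z


def move_particles(n_particles, n_steps, seed=0):
    """Move each free particle from the origin for a fixed number of steps."""
    rng = random.Random(seed)
    positions = [[0.0, 0.0, 0.0] for _ in range(n_particles)]
    for p in positions:
        for _ in range(n_steps):
            dx, dy, dz = random_unit_step(rng)
            p[0] += dx
            p[1] += dy
            p[2] += dz
    return positions


def million_movements_per_second(n_particles, n_steps):
    """Time the run and report it in the unit used for the results."""
    start = time.perf_counter()
    move_particles(n_particles, n_steps)
    elapsed = time.perf_counter() - start
    return n_particles * n_steps / elapsed / 1e6


print(f"{million_movements_per_second(1000, 100):.3f} million movements per second")
```

Each particle only touches its own three coordinates, so the cost is dominated by the random number generation and the trig calls rather than by memory traffic.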

Despite this result being over 35x the equivalent calculation on a fully multithreaded 4770K CPU (200 on the CPU vs. 7000 on the IGP), there again seems to be little difference between memory speeds. 3000 C12 gets a small peak over the rest, similar to the n-Body test.

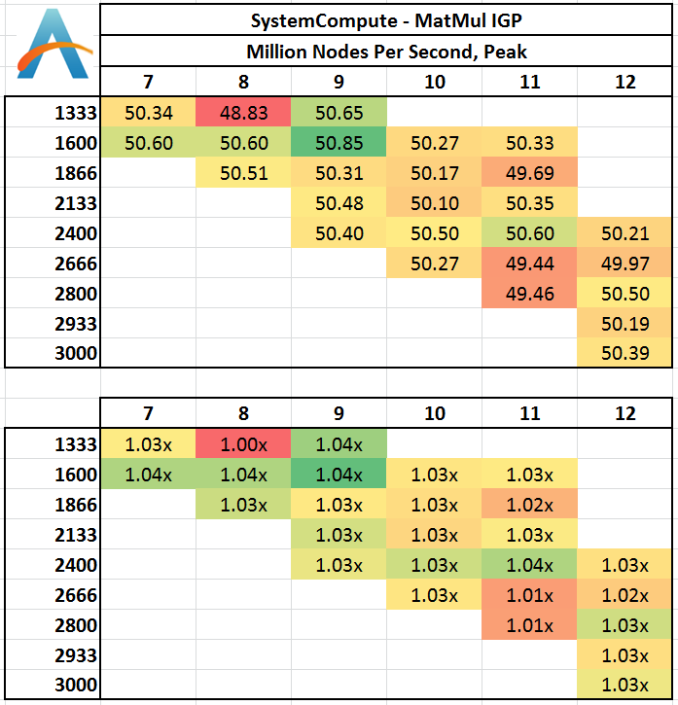

Matrix Multiplication on IGP

Matrix Multiplication occurs in a number of mathematical models, and is typically designed to avoid memory accesses where possible and optimize for a number of reads and writes depending on the registers available to each thread or batch of dispatched threads. Here we have a crude MatMul implementation, and iterate through a variety of matrix sizes to find the peak speed. Results are given in terms of ‘million nodes per second’ and a higher number is better.
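A crude matrix multiplication in this sense might look like the sketch below: a plain triple loop with no tiling or register blocking. The function names, the test matrices, the sizes swept, and the choice to count each output element as one ‘node’ are assumptions made for illustration and may not match how the benchmark counts.

```python
import time


def matmul_naive(a, b):
    """Triple-loop matrix multiply with no tiling or blocking."""
    rows, inner, cols = len(a), len(b), len(b[0])
    c = [[0.0] * cols for _ in range(rows)]
    for i in range(rows):
        for j in range(cols):
            total = 0.0
            for k in range(inner):
                # b is walked down a column here, which is unfriendly to caches
                total += a[i][k] * b[k][j]
            c[i][j] = total
    return c


def million_nodes_per_second(n):
    """Time one n x n multiply, counting output elements as nodes (assumed)."""
    a = [[float(i + j) for j in range(n)] for i in range(n)]
    b = [[float(i - j) for j in range(n)] for i in range(n)]
    start = time.perf_counter()
    matmul_naive(a, b)
    elapsed = time.perf_counter() - start
    return n * n / elapsed / 1e6


# Sweep a few matrix sizes and keep the peak speed.
peak = max(million_nodes_per_second(n) for n in (16, 32, 64))
print(f"peak: {peak:.3f} million nodes per second")
```

An optimized version would reuse blocks of `a` and `b` held in registers or local memory, which is the kind of design the paragraph above alludes to.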

Matrix Multiplication on this scale seems to vary little between memory settings, although a shift towards the lower CL timings gives a marginally better result, though the difference is statistically minor.

3D Particle Movement on IGP

Similar to our 3DPM Multithreaded test, except we run the fastest of our six movement algorithms with several million threads, each moving a particle in a random direction for a fixed number of steps. Final results are given in million movements per second, and a higher number is better.

While there is a slight dip using 1333 C9, in general almost all of our memory timing settings perform roughly the same. The peak shown using our memory kit at its XMP rated timings is presumably due more to the adjustments in BCLK which need to be made in order to hit this memory frequency.

89 Comments


DanNeely - Thursday, September 26, 2013 - link

A suggestion for future articles of this type. If the results mostly show that really slow memory is bad but above that it doesn't really matter, normalizing data with a reasonably priced option that performs well as 1.0 might make that clearer. For example, with the current results, put 1866-C9 as 1.0, with 1333 as .9x and 3000 as 1.02. I think this would help drive home that you're hitting diminishing returns on the cheap stuff.

superjim - Thursday, September 26, 2013 - link

It looks like the days of 1600 C9 being the standard are over; however, the Hynix fire isn't helping faster memory prices any. 4-5 months ago you could get 2x 4GB of 1600 C9 for $30-35.

Belial88 - Tuesday, October 1, 2013 - link

That's because just like when HDD prices skyrocketed due to the 2011 Thailand Flood, RAM prices have skyrocketed due to the 2013 Hynix Factory Fire. Prices had started to rise around early 2013 due to market consolidation and some other electronics (tablet, console, etc.) market needs, nothing huge, and they were actually starting to drop until the factory fire.

As for 1600 C9 being some sort of standard, well, what Intel/AMD specifies as their rated RAM speed is no more useful than what they specify as their CPU speed, as we know the chip can go way above that. People who are savvy and know how to buy RAM can buy RAM easily capable of 2400mhz CL8 by researching the RAM IC.

PSC/BBSE is easily capable of 2400mhz CL8 and generally costs ~$60 per 8gb (ie similar to the cheapest ddr3 ram). You can find some Hynix CFRs (double sided, unlike MFR, meaning they don't hit the high mhz numbers, but way better 24/7 performance clock for clock, kinda like dual channel vs single channel) for around $65, like the Gskill Ripjaws X 2400CL11 (currently like $75 on newegg), which will easily do ~2800mhzCL13.

RAM speed has always made an impact; the problem with reviews like the above is assuming you can't overclock RAM, and have to pay for it. In reality there are only ~5 different types of RAM (and a few subtypes). If you are smart and purchase Hynix or Samsung instead of Spektek or Qimonda, you can get RAM that easily does 2400mhz+ for the same or similar price as the cheapest Spekteks. If you assume that going from 1600 to 2400 will cost you $100+, of course it's a ripoff...

But if you buy, say, some Gskill Pi's 1600mhz for bargain bin, and overclock them to 2400CL8, you gain a good 10+ fps for almost nothing, and that's an awesome value. All RAM is merely rebranded Spektek/Qimonda/PSC/BBSE/Hynix/Samsung, ie the same RAM is sold as 1600 CL9, 1600 CL8 1.65v, 1866 CL10, 2000CL11, 2133 CL12, etc ad nauseam.

vol7ron - Monday, September 30, 2013 - link

Relational results are helpful (I think they've been added since your comment), but I also like to see the empirical data, as is also being listed. I know these things are currently being done in this article; I just want to make the point that the decision should not be to use one or the other, but both, again in the future.

vol7ron - Monday, September 30, 2013 - link

As an amendment, I want to add that the thing I would change in the future is the colors used. The spectrum should be green:good red:hazardous/bad. If you have something at 1.00x, perhaps that should be yellow, since it's the neutral starting position.

alfredska - Monday, September 30, 2013 - link

Yes, this is some pretty basic stuff. It seems there's bouncing back and forth between green = good/bad right now. The author needs to stick to a convention throughout the article. I'm not really of the opinion that green and red are the best choices, but at least if a convention is used I can train my eyes.

xTRICKYxx - Thursday, September 26, 2013 - link

It would be cool to see other IGPs including Iris Pro or HD 5000. Also, Richland may see slightly more than the 5% Haswell's HD 4600 has.

Khenglish - Thursday, September 26, 2013 - link

I would expect Richland/Trinity to have larger gains since the IGP has access to only 4MB cache instead of the 6MB or 8MB found on Intel processors.

yoki - Thursday, September 26, 2013 - link

hi, you said that in the order of importance, the amount of memory and its placement matter most, but gave no clue regarding how this scales in the real world... for example I have 6GB of 1600mhz CL7 RAM in an X58 system. Should I upgrade it to 12GB? How much will I gain from that?

IanCutress - Thursday, September 26, 2013 - link

That's ultimately up to the user to determine based on workload, gaming style, etc. I'd always suggest playing it safe, so if you plan on doing anything that would tax a system, 12gb might be a safe bet. That's X58 though; this is talking about Haswell, whose memory controller can take these high end kits ;)