NVIDIA GeForce GTX 770 Review: The $400 Fight

by Ryan Smith on May 30, 2013 9:00 AM EST

Power, Temperature, & Noise

As always, last but not least is our look at power, temperature, and noise. Next to price and performance of course, these are some of the most important aspects of a GPU, due in large part to the impact of noise. All things considered, a loud card is undesirable unless there’s a sufficiently good reason – or sufficiently good performance – to ignore the noise.

GTX 770 ends up being an interesting case study in all three factors because NVIDIA is pushing the GK104 GPU so hard. Though the old version of GPU Boost muddles things some, there’s no denying that higher clockspeeds coupled with the higher voltages needed to reach those clockspeeds have a notable impact on power consumption. This makes it very hard for NVIDIA to stick to their efficiency curve, since adding voltages and clockspeeds offers diminishing returns for the increase in power consumption.

GeForce GTX 770 Voltages

| GTX 770 Max Boost | GTX 680 Max Boost | GTX 770 Idle |
|---|---|---|
| 1.2v | 1.175v | 0.862v |

As we can see, NVIDIA has pushed up their voltage from 1.175v on GTX 680 to 1.2v on GTX 770. This buys them the increased clockspeeds they need, but it will drive up power consumption. At the same time GPU Boost 2.0 helps to counter this some, as it will keep leakage from being overwhelming by keeping GPU temperatures at or below 80C.
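To put the voltage bump in perspective, here is a rough sketch using the textbook first-order approximation that dynamic CMOS power scales with V² × f. This is not NVIDIA's power model: it ignores leakage, and the function name `dynamic_power_ratio` is ours, purely for illustration. The clock terms are left equal so that only the effect of the voltage change from the table is shown.

```python
# First-order approximation: dynamic power ~ C * V^2 * f.
# Leakage and GPU Boost behaviour are ignored; this is an illustration only.

def dynamic_power_ratio(v_new, v_old, f_new=1.0, f_old=1.0):
    """Return P_new / P_old under the P ~ V^2 * f approximation."""
    return (v_new / v_old) ** 2 * (f_new / f_old)

# Max boost voltages from the table: 1.2v (GTX 770) vs. 1.175v (GTX 680).
# Clocks are held equal here to isolate the voltage term.
voltage_only = dynamic_power_ratio(1.2, 1.175)
print(f"Voltage term alone: {voltage_only:.3f}x")
```

Under that approximation the voltage increase alone accounts for roughly a 4% rise in dynamic power, before any clockspeed increase is factored in.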

GeForce GTX 770 Average Clockspeeds

| Max Boost Clock | 1136MHz |
|---|---|
| DiRT:S | 1136MHz |
| Shogun 2 | 1136MHz |
| Hitman | 1136MHz |
| Sleeping Dogs | 1102MHz |
| Crysis | 1136MHz |
| Far Cry 3 | 1136MHz |
| Battlefield 3 | 1136MHz |
| Civilization V | 1136MHz |
| Bioshock Infinite | 1128MHz |
| Crysis 3 | 1136MHz |

Speaking of clockspeeds, we also took the average clockspeeds for GTX 770 in our games. In short, GTX 770 is almost always at its maximum boost bin of 1136MHz; the oversized Titan cooler keeps temperatures just below the thermal throttle, and there’s enough TDP headroom left that the card doesn’t need to pull back to stay within its power limit. This is one of the reasons why GTX 770’s performance advantage over GTX 680 is greater than the clockspeed increases alone.
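For readers who want to check the "almost always" claim against the table, the following short sketch summarizes the per-game averages. The numbers are copied from the clockspeed table; the variable names are ours.

```python
from statistics import mean

MAX_BOOST_MHZ = 1136

# Average clockspeeds per game, taken from the table above.
avg_clocks_mhz = {
    "DiRT:S": 1136,
    "Shogun 2": 1136,
    "Hitman": 1136,
    "Sleeping Dogs": 1102,
    "Crysis": 1136,
    "Far Cry 3": 1136,
    "Battlefield 3": 1136,
    "Civilization V": 1136,
    "Bioshock Infinite": 1128,
    "Crysis 3": 1136,
}

overall = mean(avg_clocks_mhz.values())
at_max = [game for game, mhz in avg_clocks_mhz.items() if mhz == MAX_BOOST_MHZ]
shortfall = {game: MAX_BOOST_MHZ - mhz
             for game, mhz in avg_clocks_mhz.items() if mhz < MAX_BOOST_MHZ}

print(f"Mean of per-game averages: {overall:.1f}MHz")
print(f"Games averaging max boost: {len(at_max)} of {len(avg_clocks_mhz)}")
print(f"Shortfall from max boost (MHz): {shortfall}")
```

Eight of the ten games average the full 1136MHz, and the two exceptions fall short by 34MHz (Sleeping Dogs) and 8MHz (Bioshock Infinite).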

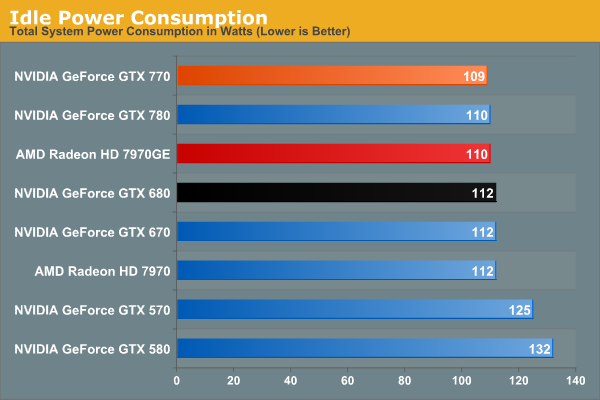

We don’t normally publish this data, but GTX 770 has one particularly interesting attribute: its idle clockspeed is lower than other Kepler parts. GTX 680 and GTX 780 both idle at 324MHz, but GTX 770 idles at 135MHz. Even 324MHz has proven low enough to keep Kepler’s idle power in check in the past, so it’s not entirely clear what NVIDIA expects to gain here. We’re seeing 1W less at the wall, but by this point the rest of our testbed is drowning out the video card.

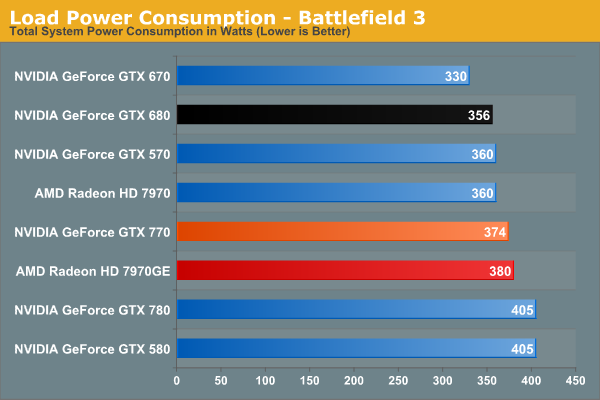

Moving on to BF3 power consumption, we can see the power cost of GTX 770’s performance. 374W at the wall is only 18W more than GTX 680, thanks in part to the fact that GTX 770 isn’t hitting its TDP limit here. At the same time compared to the outgoing GTX 670, this is a 44W difference. This makes it very clear that GTX 770 is not a drop-in replacement for GTX 670 as far as power and cooling go. On the other hand GTX 770 and GTX 570 are very close, even if GTX 770’s TDP is technically a bit higher than GTX 570’s.

Despite this run-up, GTX 770 still stays a hair under 7970GE, even with the slightly higher CPU power consumption that comes from GTX 770’s higher performance in this benchmark. It’s only 6W at the wall, but it showcases that NVIDIA didn’t have to completely blow their efficiency curve to get a GK104 card back up to 7970GE performance levels.

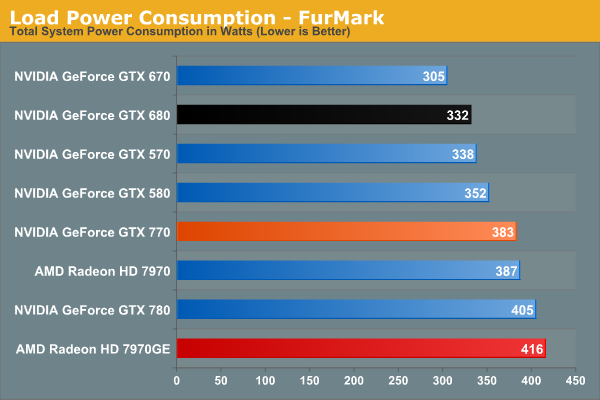

In our TDP constrained scenario we can see the gaps between our cards grow. 78W separates the GTX 770 from GTX 670, and even GTX 680 draws 41W less, almost exactly what we’d expect from their published TDPs. On the flip side of the coin 383W is still less than both 7970 cards, reflecting the fact that GTX 770 is geared for 230W while AMD’s best is geared for 250W.
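As a sanity check on the "almost exactly what we’d expect" remark, the sketch below derives the other cards' wall power from the deltas quoted above and compares the GTX 770/GTX 680 gap against their TDPs. Two inputs are assumptions that do not come from this section: the 195W figure is NVIDIA's published GTX 680 TDP, and `PSU_EFFICIENCY` is a hypothetical value, since wall measurements include power supply losses.

```python
# FurMark power at the wall; GTX 770 measured, others derived from quoted deltas.
GTX770_WALL_W = 383
wall_w = {
    "GTX 770": GTX770_WALL_W,
    "GTX 680": GTX770_WALL_W - 41,  # "GTX 680 draws 41W less"
    "GTX 670": GTX770_WALL_W - 78,  # "78W separates the GTX 770 from GTX 670"
}

GTX770_TDP_W = 230     # stated in the article
GTX680_TDP_W = 195     # assumption: NVIDIA's published spec, not stated in this section
PSU_EFFICIENCY = 0.87  # hypothetical value for illustration

tdp_gap_w = GTX770_TDP_W - GTX680_TDP_W
expected_wall_gap_w = tdp_gap_w / PSU_EFFICIENCY  # card-side watts -> wall-side watts
measured_wall_gap_w = wall_w["GTX 770"] - wall_w["GTX 680"]

print(wall_w)
print(f"TDP gap: {tdp_gap_w}W, expected at the wall: {expected_wall_gap_w:.1f}W, "
      f"measured: {measured_wall_gap_w}W")
```

With those assumptions, the 35W TDP gap works out to roughly 40W at the wall, which lines up with the 41W we measured.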

This is also a reminder, however, that with a mid-generation product extra performance does not come for free. With the same process and the same architecture, performance increases require power increases. This won’t significantly change until we see 20nm cards next year.

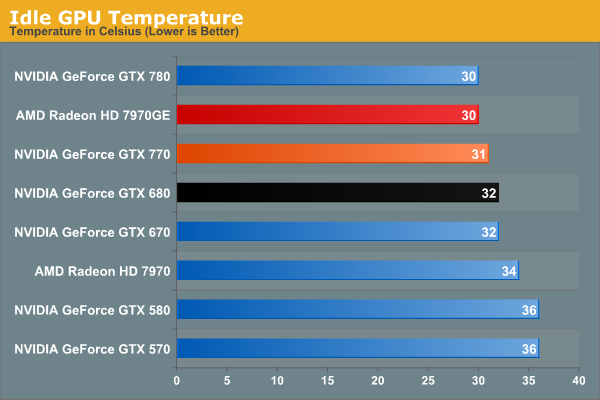

Moving on to temperatures, these are going to be a walk in the park for the reference GTX 770 due to the Titan cooler. At idle we see it hit 31C, which is actually 1C warmer than GTX 780, but this really just comes down to uncontrollable variations in our tests.

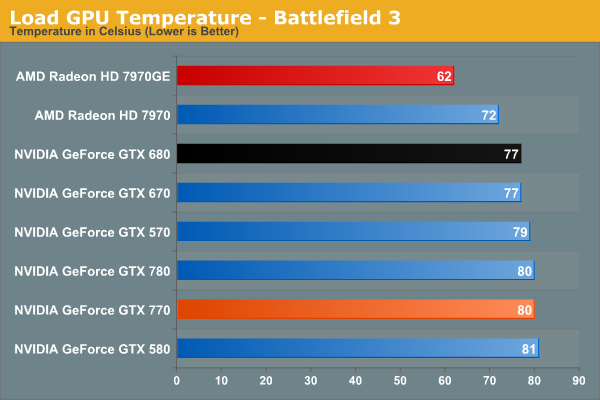

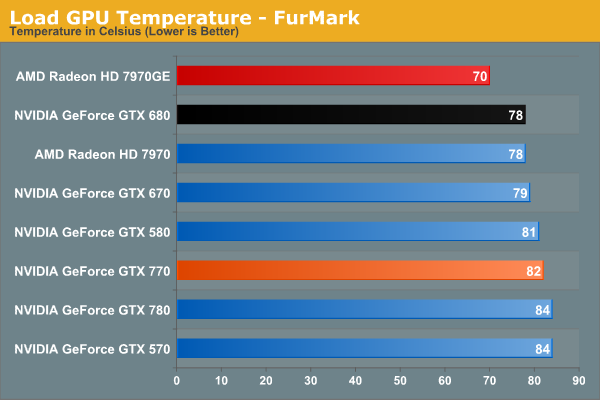

As this is a GPU Boost 2.0 card, temperatures will top out at 80C in games, and that’s exactly what happens here. Interestingly, GTX 770 is just hitting 80C, as evidenced by our clockspeeds earlier. If it were running hotter, it would have needed to drop to lower clockspeeds.

Of course it doesn’t hold a candle here to 7970GE, but that’s the difference between a blower and an open air cooler in action. The blower based 7970 is much closer, as we’d expect.

Under FurMark the temperature situation is largely the same. The GTX 770 comes up to 82C here (favoring TDP throttling over temperature throttling), but the relative rankings are consistent.
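The throttling behavior described in this section can be illustrated with a toy model. To be clear, this is not NVIDIA's GPU Boost 2.0 algorithm, whose internals are not documented here; it is a minimal sketch in which the card steps down one clock bin whenever it exceeds a temperature target or power limit, and steps back up otherwise. The function `next_clock`, the 13MHz bin size, and the 1046MHz base clock are our assumptions for illustration; only the 1136MHz max boost, the 80C target, and the 230W TDP come from the article.

```python
def next_clock(clock_mhz, temp_c, power_w, *,
               max_boost_mhz=1136,   # from the article
               base_mhz=1046,        # assumption: not stated in this section
               temp_target_c=80,     # from the article
               power_limit_w=230,    # from the article
               bin_mhz=13):          # assumption: illustrative bin size
    """Toy governor: drop one bin when over a limit, otherwise climb one bin."""
    over_limit = temp_c > temp_target_c or power_w > power_limit_w
    if over_limit:
        return max(clock_mhz - bin_mhz, base_mhz)
    return min(clock_mhz + bin_mhz, max_boost_mhz)

# Sitting right at the 80C target with power headroom: stay at max boost.
print(next_clock(1136, temp_c=80, power_w=200))  # 1136
# Running hotter than the target: give up one bin.
print(next_clock(1136, temp_c=83, power_w=200))  # 1123
# Cool, but over the power limit: also give up one bin.
print(next_clock(1136, temp_c=78, power_w=240))  # 1123
```

In this simplified view, a card that sits exactly at its temperature target with power to spare never leaves its top bin, which matches what we saw in most of our games.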

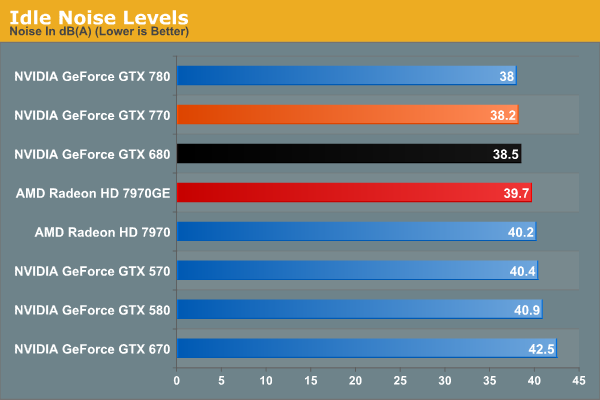

With Titan’s cooler in tow, idle noise looks very good on GTX 770.

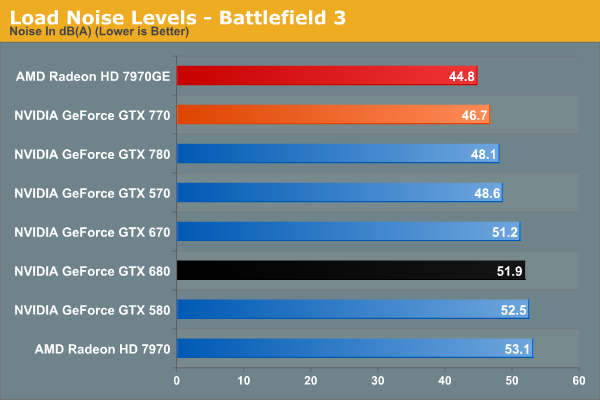

Our noise results under Battlefield 3 are a big part of the reason we’ve been calling the Titan cooler oversized for GTX 770. When was the last time we saw a blower on a 230W card that only hit 46.7dB? The short answer is never. GTX 770’s fan simply doesn’t have to rev up very much to handle the lesser heat output. In fact it’s damn near competitive with the open air cooled 7970GE; there’s still a difference, but it’s under 2dB. More importantly however, despite being a more powerful and more power-hungry card than the GTX 680, the GTX 770 is over 5dB quieter, and this is despite the fact that the GTX 680 is already a solid card on its own. Titan’s cooler is certainly expensive, but it gets results.

Of course this is why it’s all the more a shame that none of NVIDIA’s partners are releasing retail cards with this cooler. There are some blowers in the pipeline, so it will be interesting to see if they can maintain Titan’s performance while giving up the metal.

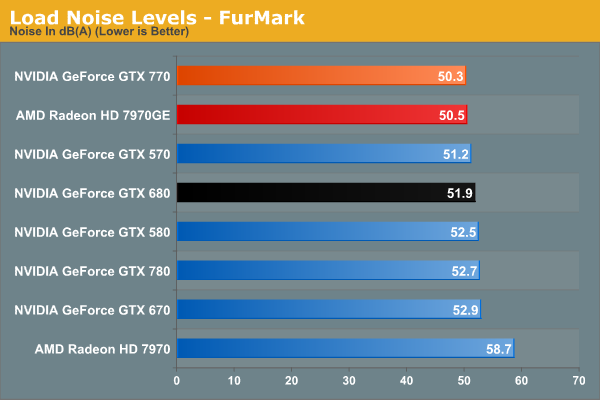

With FurMark pushing our GTX 770 at full TDP, our noise results are still good, but not as impressive as they were under BF3. 50.3dB is still over a dB quieter than GTX 680, though obviously much closer than before. On the other hand the GTX 770 ever so slightly edges out the 7970GE and its open air cooler. Part of this comes down to the TDP difference of course, but beating an open air cooler like that is still quite the feat.
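Because decibels are logarithmic, the gaps quoted in our noise results are larger than they might look. The sketch below converts a dB difference into a sound power ratio (10^(Δ/10)) and a sound pressure ratio (10^(Δ/20)). These are the standard conversions; they say nothing about perceived loudness, and the helper names are ours.

```python
def power_ratio(delta_db):
    """Sound power ratio corresponding to a level difference in dB."""
    return 10 ** (delta_db / 10)

def pressure_ratio(delta_db):
    """Sound pressure ratio corresponding to a level difference in dB."""
    return 10 ** (delta_db / 20)

# GTX 770 under BF3 vs. FurMark, using the figures quoted above.
bf3_db, furmark_db = 46.7, 50.3
delta = round(furmark_db - bf3_db, 1)

print(f"FurMark vs. BF3: +{delta}dB, {power_ratio(delta):.2f}x sound power")
# The "over 5dB quieter than GTX 680" gap under BF3, taken at exactly 5dB.
print(f"5dB gap: {power_ratio(5):.2f}x sound power, "
      f"{pressure_ratio(5):.2f}x sound pressure")
```

A 5dB gap works out to roughly a 3x difference in sound power, which is why the GTX 770's advantage over GTX 680 under BF3 is more substantial than the raw numbers suggest.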

Wrapping things up here, it will be interesting to see where NVIDIA’s partners go with their custom designs. GTX 770, despite being a higher TDP part than both GTX 670 and GTX 680, ends up looking very impressive when it comes to noise, and it would be great to see NVIDIA’s partners match that. At the same time, the increased power consumption and heat generation relative to the GeForce 600 series is unfortunate, but not unexpected. For buyers coming from the GeForce 400 and GeForce 500 series, however, GTX 770 is in line with what those previous generation cards were already pulling.

117 Comments


chizow - Thursday, May 30, 2013

They are both overpriced relative to their historical cost/pricing, as a result you see Nvidia has posted record margins last quarter, and will probably do similarly well again.

Razorbak86 - Thursday, May 30, 2013

Cool! I'm both a customer and a shareholder, but my shares are worth a hell of a lot more than my SLi cards. :)

antef - Thursday, May 30, 2013

I'm not happy that NVIDIA threw power efficiency to the wind this generation. What is with these GPU manufacturers that they can't seem to CONSISTENTLY focus on power efficiency? It's always...."Oh don't worry, next gen will be better we promise," then it finally does get better, then next gen sucks, then again it's "don't worry, next gen we'll get power consumption down, we mean it this time." How about CONTINUING to focus on it? Imagine any other product segment where a 35%! power increase would be considered acceptable, there is none. That makes a 10 or whatever FPS jump not impressive in the slightest. I have a 660 Ti which I feel has an amazing speed to power efficiency ratio, looks like this generation definitely needs to be sat out.

jwcalla - Thursday, May 30, 2013

It's going to be hard to get a performance increase without sacrificing some power while using the same architecture. You pretty much need a new architecture to get both.

jasonelmore - Thursday, May 30, 2013

or a die shrink

Blibbax - Thursday, May 30, 2013

As these cards have configurable TDP, you get to choose your own priorities.

coldpower27 - Thursday, May 30, 2013

There isn't much you can really do when your working with the same process node and same architecture, the best you can hope for is a slight bump in efficiency at the same performance level but if you increase performance past the sweet spot, you sacrifice efficiency.

In past generation you had half node shrinks. GTX 280 -> GTX 285 65nm to 55nm and hence reduced power consumption.

Now we don't, we have jumped straight from 55nm -> 40nm -> 28nm, with the next 20nm node still aways out. There just isn't very much you can do right now for performance.

JDG1980 - Thursday, May 30, 2013

Yes, this is really TSMC's fault. They've been sitting on their ass for too long.

tynopik - Thursday, May 30, 2013

maybe a shade of NVIDIA green for the 770 in the charts instead of AMD red?

joel4565 - Thursday, May 30, 2013

Looks like an interesting part. If for no other reason that to put pressure on AMD's 7950 Ghz card. I imagine that card will be dropping to 400ish very soon.

I am not sure what card to pick up this summer. I want to buy my first 2560x1440 monitor (leaning towards Dell 2713hm) this summer, but that means I need a new video card too as my AMD 6950 is not going to have the muscle for 1440p. It looks like both the Nvida 770 and AMD 7950 Ghz are borderline for 1440p depending on the game, but there is a big price jump to go to the Nvidia 780.

I am also not a huge fan of crossfire/sli although I do have a compatible motherboard. Also to preempt the 2560/1440 vs 2560/1600 debate, yes i would of course prefer more pixels, but most of the 2560x1600 monitors I have seen are wide gamut which I don't need and cost 300-400 more. 160 vertical pixels are not worth 300-400 bucks and dealing with the Wide gamut issues for programs that aren't compatible.