Intel Iris Pro 5200 Graphics Review: Core i7-4950HQ Tested

by Anand Lal Shimpi on June 1, 2013 10:01 AM EST

Crysis Warhead

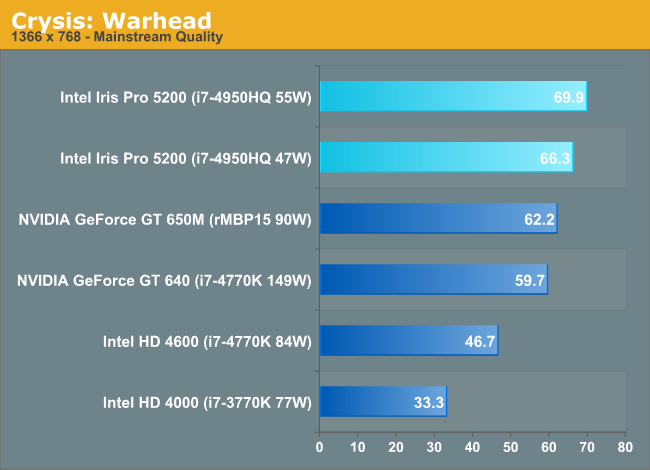

Up next is our legacy title for 2013, Crysis: Warhead. The stand-alone expansion to 2007’s Crysis, Crysis: Warhead is over 4 years old and can still beat most systems down. Crysis was intended to be future-looking as far as performance and visual quality go, and it has clearly achieved that. We’ve only finally reached the point where high-end single-GPU cards have come out that can hit 60fps at 1920 with 4xAA, while low-end GPUs are just now hitting 60fps at lower quality settings and resolutions.

I can't believe it. An Intel integrated solution actually beats out an NVIDIA discrete GPU in a Crysis title. The 5200 does well here, outperforming the 650M by 12% in its highest TDP configuration. I couldn't run any of the AMD parts here as Bulldozer-based parts seem to have a problem with our Crysis benchmark for some reason.

Crysis: Warhead is likely one of the simpler tests we have in our suite here, which helps explain Intel's performance a bit. It's also possible that older titles have been Intel optimization targets for longer.

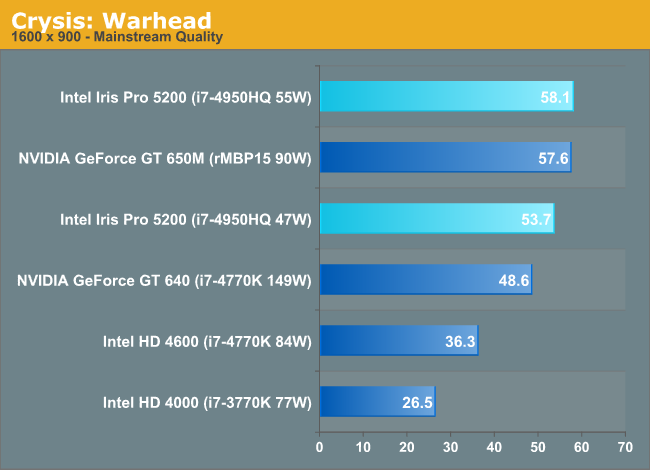

Ramping up the res kills the gap between the highest end Iris Pro and the GT 650M.

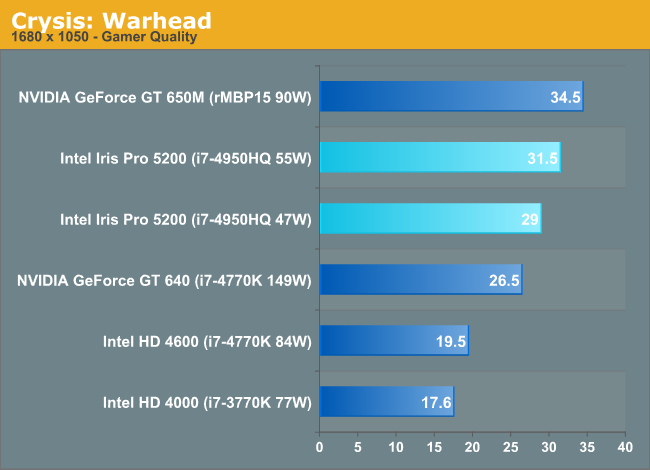

Moving to higher settings and at a higher resolution gives NVIDIA the win once more. The margin of victory isn't huge, but the added special effects definitely stress whatever Intel is lacking within its GPU architecture.

177 Comments

boe - Monday, June 3, 2013 - link

As soon as Intel CPUs have video performance that exceeds NVidia and AMD flagship video cards I'll get excited. Until then I think of them as something to be disabled on workstations and to be tolerated on laptops that don't have better GPUs on board.

MySchizoBuddy - Monday, June 3, 2013 - link

So Intel just took the OpenCL crown. Never thought this day would come.

prophet001 - Monday, June 3, 2013 - link

I have no idea whether or not any of this article is factually accurate.

However, the first page was a treat to read. Very well written.

:)

Teemo2013 - Monday, June 3, 2013 - link

Great success by Intel.

4600 is near GT630 and HD4650 (much better than 6450 which sells for $15 at newegg)

5200 is better than GT640 and HD 6670 (currently sells like $50 at newegg)

Intel's integrated used to be worthless compared with discrete cards. It has slowly caught up during the past 3 years, and now 5200 is beating a $50 card. Can't wait for next year!

Hopefully this will finally push AMD and Nvidia to come up with meaningful upgrade to their low level product lines.

Cloakstar - Monday, June 3, 2013 - link

A quick check for my own sanity:

Did you configure the A10-5800K with 4 sticks of RAM in bank+channel interleave mode, or did you leave it memory bandwidth starved with 2 sticks or locked in bank interleave mode?

The numbers look about right for 2 sticks, and if that is the case, it would leave Trinity at about 60% of its actual graphics performance.

I find it hard to believe that the 5800K is about a quarter the performance per watt of the 4950HQ in graphics, even with the massive, server-crushing cache.

andrerocha - Monday, June 3, 2013 - link

is this new cpu faster than the 4770k? it sure cost more?

zodiacfml - Monday, June 3, 2013 - link

impressive but one has to take advantage of the compute/quick sync performance to justify the increase in price over the HD 4600

ickibar1234 - Tuesday, June 4, 2013 - link

Well, my Asus G50VT laptop is officially obsolete! A Nvidia 512MB GDDR3 9800gs is completely pwned by this integrated GPU, and, the CPU is about 50-65% faster clock for clock to the last generation Core 2 Duo Penryn chips. Sure, my X9100 can overclock stably to 3.5GHZ but this one can get close even if all cores are fully taxed.

Can't wait to see what the Broadwell die shrink brings, maybe a 6-core with Iris or a higher clocked 4-core?

I too see that dual core versions of mobile Haswell with this integrated GPU would be beneficial. Could go into small 4.5 pounds laptops.

AMD.....WTH are you going to do.

zodiacfml - Tuesday, June 4, 2013 - link

AMD has to create a Crystalwell of their own. I never thought Intel could beat them to it, since their integrated GPUs have always needed bandwidth.

Spunjji - Tuesday, June 4, 2013 - link

They also need to find a way past their manufacturing process disadvantage, which may not be possible at all. We're comparing 22nm Apples to 32/28nm Pears here; it's a relevant comparison because those are the realities of the marketplace, but it's worth bearing in mind when comparing architecture efficiencies.