FCAT: The Evolution of Frame Interval Benchmarking, Part 1

by Ryan Smith on March 27, 2013 9:00 AM EST

In the last year, stuttering, micro-stuttering, and frame interval benchmarking have become a very big deal in the world of GPUs, and for good reason. Through the hard work of the Tech Report’s Scott Wasson and others, significant stuttering issues were uncovered involving AMD’s video cards, breaking long-standing perceptions on stuttering, where the issues lie, and which GPU manufacturer (if anyone) does a better job of handling the problem. The end result of these investigations has seen AMD embarrassed and rightfully so, as it turned out they were stuttering far worse than they thought, and more importantly far worse than NVIDIA.

The story does not stop there however. As AMD has worked on fixing their stuttering issues, the methodologies pioneered by Scott have gone on to gain wide acceptance across the reviewing landscape. This has the benefit of putting more eyes on the problem and helping AMD find more of their stuttering issues, but as it turns out it has also created some problems. As we laid out in detail yesterday in a conversation with AMD, the current methodologies rely on coarse tools that don’t have a holistic view of the entire rendering pipeline. And as such while these tools can see the big problems that started this wave of interest, their ability to see small problems and to tell apart stuttering from other issues is very limited. Too limited.

In their conversation AMD laid out their argument for a change in benchmarking. A rationale for why benchmarking should move from using tools like FRAPS that can see the start of the rendering pipeline, and towards other tools and methods that can see the end of the rendering pipeline. And AMD was not alone in this; NVIDIA too has shown concern about tools like FRAPS, and has wanted to see testing methodologies evolve.

That brings us to this week. Often evolution is best left to occur naturally. But other times evolution needs a swift kick in the pants. This week NVIDIA has decided to give evolution that swift kick in the pants. This week NVIDIA is introducing FCAT.

FCAT, the Frame Capture Analysis Tool, is NVIDIA’s take on what the evolution of frame interval benchmarking should look like. By moving the measurements of frame intervals from the start of the rendering pipeline to the end of the pipeline, FCAT evolves the state of benchmarking by giving reviewers and consumers alike a new way to measure frame intervals. A year and a half ago the use of FRAPS brought a revolution to the 3D game benchmarking scene, and today NVIDIA seeks to bring about that revolution all over again.

FCAT is a powerful, insightful, and perhaps above all else labor intensive tool. For these reasons we are going to be splitting up our coverage on FCAT into two parts. Between trade shows and product launches we simply have not had enough time to put together a complete and proper dataset for FCAT, so rather than do this poorly, we’re going to hold back our results until we’ve had a chance to run all of the FCAT tests and scenarios that we want to run.

In part one of our series on FCAT, today we will be taking a high-level overview of FCAT. How it works, why it’s different from FRAPS, and why we are so excited about this tool. Meanwhile next week will see the release of part two of our series, in which we’ll dive into our FCAT results, utilizing FCAT to its full extent to look at where FCAT sees stuttering and under what conditions. So with that in mind, let’s dive into FCAT.

Reprise: When FRAPS Isn’t Enough

Since we covered the subject of FRAPS in great detail yesterday, we’re not going to completely rehash it. But for those of you who have not had the time to read yesterday’s article, here’s a quick rundown of how FRAPS measures frame intervals, and why at times this can be insufficient.

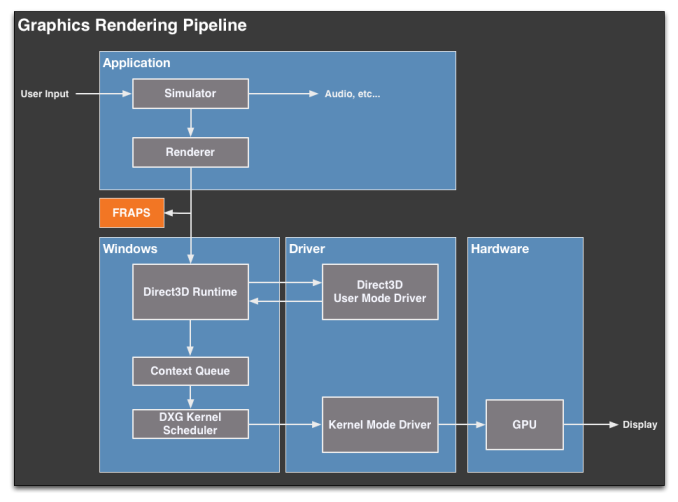

Direct3D (and OpenGL) uses a complex rendering pipeline that spans several different mechanisms and stages. When a frame is generated by an application, it must travel through the pipeline to Direct3D, the video drivers, a frame queue (the context queue), a GPU scheduler, the video drivers again, the GPU, and finally after that a frame can be displayed. The pipeline analogy is used here because that’s exactly what it is, with the added complexity of the context queue sitting in the middle of that pipeline.

FRAPS for its part exists at almost the very beginning of this pipeline. It interfaces with individual applications and intercepts the Present calls made to Direct3D that mark the end of each frame. By counting Present calls FRAPS can easily tell how many frames have gone into the pipeline, making it a simple and effective tool for measuring average framerates.

The problem with FRAPS, as it were, is that while it can also be used to measure the intervals between frames, it can only do so at the start of the rendering pipeline, by measuring the time between Present calls. This, while better than nothing, is far removed from the end of the pipeline where the actual buffer swaps take place, and ultimately is equally removed from the end-user experience. Furthermore because FRAPS is so far up the rendering pipeline, it’s insulated from what’s going on elsewhere; the context queue in particular can hold up to 3 frames, which means the rate of flow into the context queue can at times be very different from the rate of flow out of the context queue.
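To make the difference between an average framerate and frame intervals concrete, here is a minimal Python sketch. The timestamps are made up for illustration and are not real FRAPS output, and the function names are our own; the only thing taken from the description above is the idea of working from the times at which Present is called.

```python
def frame_intervals_ms(present_times_ms):
    """Time between consecutive Present calls, in milliseconds."""
    return [b - a for a, b in zip(present_times_ms, present_times_ms[1:])]


def average_fps(present_times_ms):
    """Average framerate: frames elapsed divided by time elapsed."""
    elapsed_ms = present_times_ms[-1] - present_times_ms[0]
    return 1000.0 * (len(present_times_ms) - 1) / elapsed_ms


# Hypothetical Present timestamps (ms), alternating short and long gaps.
present_times_ms = [0, 10, 40, 50, 80, 90, 120]

intervals = frame_intervals_ms(present_times_ms)
fps = average_fps(present_times_ms)

print(intervals)  # [10, 30, 10, 30, 10, 30]
print(fps)        # 50.0
```

The average works out to a healthy-looking 50fps, while the intervals swing between 10ms and 30ms. Keep in mind that both numbers describe what went into the pipeline, not what came out of it.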

As a result FRAPS is best described as a coarse tool. It can see particularly egregious stuttering situations – like what AMD has been experiencing as of late – but it cannot see everything. It cannot see stuttering issues the context queue hides, and it’s particularly blind to what’s going on in multi-GPU scenarios.
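How a queue can hide stuttering from a tool sitting at the Present call can be sketched with a toy model. This is an illustration under loud assumptions rather than a model of any real driver: per-frame CPU and GPU costs are fixed inputs, the queue holds at most 3 frames (the figure mentioned above), a frame leaves the queue when the GPU starts on it, and a frame counts as "shown" the moment the GPU finishes it, with no v-sync. All names here are hypothetical.

```python
def simulate(cpu_ms, gpu_ms, max_queued=3):
    """Toy pipeline: returns (Present times, shown times) in ms."""
    present, start, done = [], [], []
    t = 0
    for i, (cpu, gpu) in enumerate(zip(cpu_ms, gpu_ms)):
        t += cpu  # the application finishes preparing frame i
        if i >= max_queued:
            # Assumption: Present blocks until frame i - max_queued
            # has left the queue (i.e. the GPU has started on it).
            t = max(t, start[i - max_queued])
        present.append(t)
        gpu_free = done[-1] if done else 0
        s = max(t, gpu_free)  # GPU starts once it is free and the frame exists
        start.append(s)
        done.append(s + gpu)
    return present, done


def intervals(times):
    return [b - a for a, b in zip(times, times[1:])]


# Steady 20ms of CPU work per frame, GPU cost alternating 10ms and 30ms.
present, shown = simulate([20] * 8, [10, 30] * 4)

print(intervals(present))  # [20, 20, 20, 20, 20, 20, 20]
print(intervals(shown))    # [40, 10, 30, 10, 30, 10, 30]
```

In this made-up scenario the Present-side intervals are a perfectly even 20ms, while the frames come out of the far end at alternating 10ms and 30ms gaps. Anything measuring at the start of the pipeline would report smooth delivery.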

88 Comments

Dribble - Wednesday, March 27, 2013

No, Tech Report has something very similar to what is described above with the colour bars - it's what detected the runt frames that afflict xfire. The nvidia tool looks like a more professional complete solution, but Tech Report did it first.

Klimax - Thursday, March 28, 2013

You will still need FRAPS-like tools, because FCAT won't see some problems and changes in the pipeline.

Hrel - Wednesday, March 27, 2013

making it a simple an effective tool - and*

it can only do at the start of the rendering pipeline - it can only do so* at the...

Taracta - Wednesday, March 27, 2013

A color bar can hold a whole lot of information. From my reasoning, it might be possible to timestamp each line in a frame, but let's not get that detailed. How about just timestamping each frame and coding the information in the overlay? Each 24-bit pixel of the overlay is 3 bytes of information. An overlay about 10 pixels wide would give 30 bytes of information on each line. This should be enough to timestamp the frame from where FRAPS would get its information, to be compared with the time of what comes out at the end of the pipeline. Why wouldn't this be possible?

Rythan - Wednesday, March 27, 2013

Agreed. Ideally, you'd write a frame number and timestamp on each line of the overlay (as FRAPS/FCAT does), and separately transmit the frame number and timestamp to the video capture system (a simple 32-bit GPIO interface would do). This would tell you everything about latency, stutter, and dropped frames from the PRESENT call to the monitor.

Ryan Smith - Wednesday, March 27, 2013

Unfortunately that won't work. The timestamp needs to be generated at the moment the simulation state is finalized, before the draw calls are sent off. Present is too late, particularly because a pipeline stall means that Present may come well after the simulation state has been finalized.

Taracta - Wednesday, March 27, 2013

The whole issue is not the simulation state but what happens between Present and your display. The accuracy of what is being simulated is not what the benchmark is about (it would be nice to have a benchmark for that); the point is to measure the frame latency introduced by the graphics pipeline.

shtldr - Thursday, March 28, 2013

At least the game/benchmark could encode the simulation time when it calls "present" and the capture analysis tool could then discover fluctuations in the time difference between simulation time and shown-on-the-screen time. Ideally, such a difference should be constant all the time, leading to a perfectly smooth rendering of the simulation. This is what someone has already pointed out earlier in the comments.

arbiter9605 - Wednesday, April 17, 2013

I might be off, but like how FRAPS puts in its overlay of fps, the dxframe overlay puts the color on each frame in the same way, so the colors are on each frame that goes through the drivers, GPU, DirectX, etc.

toyotabedzrock - Wednesday, March 27, 2013

Why can't they just send back a signal when the buffer swap happens? It seems that drawing more stuff on the screen adds a delay to rendering.