NVIDIA's GeForce GTX Titan, Part 1: Titan For Gaming, Titan For Compute

by Ryan Smith on February 19, 2013 9:01 AM EST

Meet The GeForce GTX Titan

As we briefly mentioned at the beginning of this article, the GeForce GTX Titan takes a large number of cues from the GTX 690. Chief among these is that it’s a luxury card, and as such is built to similar standards as the GTX 690. Consequently, like the GTX 690, Titan is essentially in a league of its own when it comes to build quality.

Much as the GTX 690 was an evolution of the GTX 590, Titan’s cooler is an evolution of the one found on the GTX 580. This means we’re looking at a card roughly 10.5” in length using a double-wide cooler. The basis of Titan’s cooler is a radial fan (blower) sitting towards the back of the card, with the GPU, RAM, and most of the power regulation circuitry in front of the fan. As a result, the bulk of the hot air generated by Titan is blown forwards and out of the card. However, it’s worth noting that unlike most other blowers, the back side technically isn’t sealed, and while there is relatively little circuitry behind the fan, it would be incorrect to state that the card is fully exhausting. With that said, leaving the back side of the card open seems to be more about noise and aesthetics than about heat management.

Like the GTX 580 but unlike the GTX 680, heat transfer is provided by a nickel-tipped aluminum heatsink attached to the GPU via a vapor chamber. We typically see vapor chambers only on premium cards due to their greater cost, or where space is at a premium. NVIDIA seems to be pushing the limits of heatsink size here, with the fins on Titan’s heatsink actually running beyond the base of the vapor chamber. Meanwhile, the thermal interface between the GPU itself and the vapor chamber is a silk-screened application of a high-end Shin-Etsu thermal compound; NVIDIA claims this compound offers over twice the performance of GTX 680’s grease, although of all of NVIDIA’s claims this is the hardest to validate.

Moving on, catching what the vapor chamber doesn’t cover is an aluminum baseplate that runs along the card, not only providing structural rigidity but also providing cooling for the VRMs and for the RAM on the front side of the card. Baseplates aren’t anything new for NVIDIA, but again this is something that we don’t see a lot of except on their more premium cards.

Capping off Titan we have its most visible luxury aspects. Like the GTX 690 before it, NVIDIA has replaced virtually every bit of plastic with metal for aesthetic/perceptual purposes. This time the entire shroud and fan housing is made of cast aluminum, which NVIDIA tells us is easier to cast than the previous mix of aluminum and magnesium that the GTX 690 used. Meanwhile the polycarbonate window makes its return, allowing you to see Titan’s heatsink solely for the sake of it.

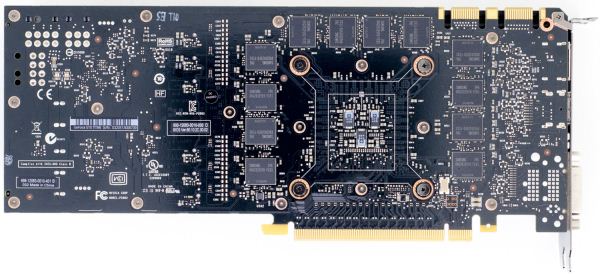

As for the back side of the card, in keeping with most of NVIDIA’s cards, Titan runs with a bare back. The GDDR5 RAM chips don’t require any kind of additional cooling, and a metal backplate, while making for a great-feeling card, occupies precious space and would impede cooling in tight spaces.

Moving on, let’s talk about the electrical details of Titan’s design. Whereas GTX 680 was a 4+2 power phase design – 4 power phases for the GPU and 2 for the VRAM – Titan improves on this by moving to a 6+2 power phase design. I suspect the most hardcore of overclockers will be disappointed with Titan only having 6 phases for the GPU, but for most overclocking purposes this would seem to be enough.

Meanwhile for RAM it should come as no particular surprise that NVIDIA is once more using 6GHz GDDR5. Specifically, NVIDIA is using 24 6GHz Samsung 2Gb modules, totaling up to the 6GB of RAM we see on the card. 12 modules are on the front with the other 12 on the rear. The overclocking headroom on 6GHz RAM seems to vary from chip to chip, so while Titan should have some memory overclocking headroom, it’s hard to say just what the combination of luck and the wider 384-bit memory bus will do.
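As a quick sanity check on those memory figures, the sketch below works out the total capacity and the theoretical peak bandwidth. It assumes that "6GHz" refers to an effective data rate of 6 Gbps per pin, which is how GDDR5 speeds are commonly quoted; the function names are purely illustrative.

```python
# Figures from the text: 24 modules of 2Gb each on a 384-bit bus.
MODULES = 24
MODULE_DENSITY_GBIT = 2
BUS_WIDTH_BITS = 384
# Assumption: "6GHz" is read as an effective 6 Gbps per pin.
DATA_RATE_GBPS_PER_PIN = 6.0


def capacity_gbytes(modules, density_gbit):
    """Total capacity in gigabytes (8 bits per byte)."""
    return modules * density_gbit / 8


def peak_bandwidth_gbytes_per_s(bus_width_bits, rate_gbps_per_pin):
    """Theoretical peak bandwidth in GB/s: one pin per bus bit."""
    return bus_width_bits * rate_gbps_per_pin / 8


capacity = capacity_gbytes(MODULES, MODULE_DENSITY_GBIT)
bandwidth = peak_bandwidth_gbytes_per_s(BUS_WIDTH_BITS, DATA_RATE_GBPS_PER_PIN)
print(f"Capacity: {capacity:.0f} GB, peak bandwidth: {bandwidth:.0f} GB/s")
```

Under that assumption the capacity comes out to the 6GB quoted above, and the peak bandwidth to 288 GB/s. Any memory overclock would scale the bandwidth figure linearly with the data rate.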

Providing power for all of this is a pair of PCIe power sockets, a 6-pin and an 8-pin, which together with the PCIe slot itself give a combined total of 300W of capacity. With Titan only having a TDP of 250W in the first place, this leaves quite a bit of headroom before ever needing to run outside of the PCIe specification.
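The 300W figure can be reproduced from the per-source limits in the PCIe specification: 75W from the x16 slot, 75W from a 6-pin connector, and 150W from an 8-pin connector. The following is only an illustrative tally of that budget against the 250W TDP; the dictionary and function names are made up for the example.

```python
# In-spec power limits per source, in watts.
PCIE_LIMITS_W = {"slot": 75, "6pin": 75, "8pin": 150}
TITAN_TDP_W = 250  # TDP as stated in the text


def board_power_budget(sources):
    """Sum the in-spec power available from the given sources."""
    return sum(PCIE_LIMITS_W[s] for s in sources)


budget = board_power_budget(["slot", "6pin", "8pin"])
headroom = budget - TITAN_TDP_W
print(f"Budget: {budget}W, headroom over TDP: {headroom}W")
```

That leaves 50W, or 20% over TDP, before the card would have to draw more than the specification allows.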

At the other end of Titan we can see that NVIDIA has once again gone back to their “standard” port configuration for the GeForce 600 series: two DL-DVI ports, one HDMI port, and one full-size DisplayPort. Like the rest of the 600 family, Titan can drive up to four displays, so this configuration is a good match. I would still like to see two mini-DisplayPorts in place of the full-size DisplayPort, though, in order to tap the greater functionality DisplayPort offers through its port conversion mechanisms.

157 Comments

bigboxes - Tuesday, February 19, 2013 - link

This is Wreckage we're talking about. He's trolling. Nothing to see here. Move along.

chizow - Tuesday, February 19, 2013 - link

I agree with his title, that AMD is at fault at the start of all of this, but not necessarily with the rest of his reasonings. Judging from your last paragraph, you probably agree to some degree as well.

This all started with AMD's pricing of the 7970, plain and simple. $550 for a card that didn't come anywhere close to justifying the price against the last-gen GTX 580, a good card but completely underwhelming in that flagship slot.

The 7970 pricing allowed Nvidia to:

1) price their mid-range ASIC, GK104, at the flagship SKU position

2) undercut AMD to boot, making them look like saints at the time

3) delay the launch of their true flagship SKU, GK100/110, by nearly a full year and

4) jack up the price of the GK110 as an ultra-premium part.

I saw #4 occurring well over a year ago, which was my biggest concern over the whole 7970 pricing and GK104 product placement fiasco, but I had no idea Nvidia would be so usurious as to charge $1k for it. I was expecting $750-800....$1k....Nvidia can go whistle.

But yes, long story short, Nvidia's greed got us here, but AMD definitely started it all with the 7970 pricing. None of this happens if AMD prices the 7970 in-line with their previous high-end in the $380-$420 range.

TheJian - Wednesday, February 20, 2013 - link

You realize you're dogging amd for pricing when they lost 1.18B for the year, correct? Seriously you guys, how are you all not understanding they don't charge ENOUGH for anything they sell? They had to lay off 30% of the workforce, because they don't make any money on your ridiculous pricing. Your idea of pricing is KILLING AMD. It wasn't enough they laid off 30%, lost their fabs, etc...You want AMD to keep losing money by pricing this crap below what they need to survive? This is the same reason they lost the cpu war. They charged less for their chips for the whole 3yrs they were beating Intel's P4/presHOT etc to death in benchmarks...NV isn't charging too much, AMD is charging too LITTLE.

AMD has lost 3-4B over the last 10yrs. This means ONE thing. They are not charging you enough to stay in business.

This is not complicated. I'm not asking you guys to do calculus here or something. If I run up X bills to make product Y, and after selling Y can't pay X I need to charge more than I am now or go bankrupt.

Nvidia is greedy because they aren't going to go out of business? Without Intel's money they are making 1/2 what they did 5yrs ago. I think they should charge more, but this is NOT gouging or they'd be making some GOUGING like profits correct? I guess none of you will be happy until they are both out of business...LOL

chizow - Wednesday, February 20, 2013 - link

1st of all, AMD as a whole lost money, AMD's GPU division (formerly ATI) has consistently operated at a small level of profit. So comparing GPU pricing/profits impact on their overall business is obviously going to be lost in the sea of red ink on AMD's P&L statement.

Secondly, the massive losses and devaluation of AMD has nothing to do with their GPU pricing, as stated, the GPU division has consistently turned a small profit. The problem is the fact AMD paid $6B for ATI 7 years ago. They paid way too much, most sane observers realized that 7 years ago and over the past 5-6 years it's become more obvious. The former ATI's revenue and profits did not justify the $6B price tag and as a result, AMD was *FORCED* to write down their assets as there were some obvious valuation issues related to the ATI acquisition.

Thirdly, AMD has said this very month that sales of their 7970/GHz GPUs in January 2013 alone exceeded sales of those cards in the previous *TWELVE MONTHS* prior. What does that tell you? It means their previous price points that steadily dropped from $550>500>$450 were more than the market was willing to bear given the product's price:performance relative to previous products and the competition. Only after they settled in on that $380/$420 range for the 7970/GHz edition along with a very nice game bundle did they start moving cards in large volume.

Now you do the math, if you sell 12x as many cards in 1 month at $100 profit instead of 1/12x as many cards at $250 profit over the course of 1 year, would you have made more money if you just sold the higher volume at a lower price point from the beginning? The answer is yes. This is a real business case that any Bschool grad will be familiar with when performing a cost-volume-profit analysis.

CeriseCogburn - Sunday, February 24, 2013 - link

Wow, first of all, basic common sense is all it takes, not some stupid idiot class for losers who haven't a clue and can't do 6th grade math.

Unfortunately, in your raging fanboy fever pitch, you got the facts WRONG.

AMD said it sold more in January than any other SINGLE MONTH of 2012 including "Holiday Season" months.

Nice try there spanky, the brain farts just keep a coming.

frankgom23 - Tuesday, February 19, 2013 - link

Who wants to pay more for less?

No new features..., this is a paper launch of a useless board for the consumer, I don't even need to see official benchmarks, I'm completely disappointed.

Maybe it's time to go back to ATI/AMD.

imaheadcase - Tuesday, February 19, 2013 - link

If you would actually READ the article you would know why.

I love how people cry a river without actually knowing how the card will perform yet.

CeriseCogburn - Sunday, February 24, 2013 - link

Yes, go back, your true home is with losers and fools and crashers and bankrupt idiots who cannot pay for their own stuff.

The last guy I talked to who installed a new AMD card for his awesome Eyefinity monitors gaming setup struggled for several days encompassing dozens of hours to get the damned thing stable, exclaimed several times he had finally achieved, and yet, the next day at it again, and finally took the thing, walked outside and threw it up against the brick wall "shattering it into 150 pieces" and "he's not going dumpster diving" he tells me, to try to retrieve a piece or part of it which might help him repair one of the two other DEAD upper range amd cards ( of 4 dead amd cards in the house ) he recently bought for mega gaming system.

ROFL

Yeah man, not kidding. He doesn't like nVidia by the way. He still is an amd fanboy.

He is a huge gamer with multiple systems all running all day and night - and his "main" is "down"... needless to say it was quite stressful for him and has done nothing good for the very long friendship.

LOL - Took it and in a seeing red rage and smashed that puppy to smithereens against the brick wall.

So please, head back home, lots of lonely amd gamers need support.

iMacmatician - Tuesday, February 19, 2013 - link

"For our sample card this manifests itself as GPU Boost being disabled, forcing our card to run at 837MHz (or lower) at all times. This is why NVIDIA’s official compute performance figures are 4.5 TFLOPS for FP32, but only 1.3 TFLOPS for FP64. The former assumes that boost is enabled, while the latter is calculated around GPU Boost being disabled. The actual execution rate is still 1/3."

But the 837 MHz base and 876 MHz boost clocks give 2·(876 MHz)·(2688 CCs) = 4.71 SP TFLOPS and 2·(837 MHz)·(2688 CCs)·(1/3) = 1.50 DP TFLOPS. What's the reason for the discrepancies?

Ryan Smith - Tuesday, February 19, 2013 - link

Apparently in FP64 mode Titan can drop down to as low as 725MHz in TDP-constrained situations. Hence 1.3TFLOPS, since that's all NVIDIA can guarantee.
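For anyone who wants to check the arithmetic in this exchange, here is a rough sketch of the peak-throughput formula used above: 2 floating-point operations per core per clock (one fused multiply-add), times the clock, times the core count, times the FP64 rate where applicable. The clocks, core count and 1/3 rate are the ones quoted in the thread; the function name is just for illustration.

```python
CUDA_CORES = 2688   # core count quoted in the thread
FP64_RATE = 1 / 3   # FP64 execution rate relative to FP32, per the article


def peak_tflops(clock_mhz, cores=CUDA_CORES, rate=1.0):
    """Theoretical peak in TFLOPS, counting one FMA as 2 operations."""
    return 2 * clock_mhz * 1e6 * cores * rate / 1e12


fp32_boost = peak_tflops(876)                  # FP32 at the 876MHz boost clock
fp64_base = peak_tflops(837, rate=FP64_RATE)   # FP64 at the 837MHz base clock
fp64_floor = peak_tflops(725, rate=FP64_RATE)  # FP64 at the 725MHz floor

print(f"FP32 @ 876MHz: {fp32_boost:.2f} TFLOPS")
print(f"FP64 @ 837MHz: {fp64_base:.2f} TFLOPS")
print(f"FP64 @ 725MHz: {fp64_floor:.2f} TFLOPS")
```

This gives roughly 4.71, 1.50 and 1.30 TFLOPS respectively, so the 725MHz floor is what lines up with the official 1.3 TFLOPS FP64 figure.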