The ARM vs x86 Wars Have Begun: In-Depth Power Analysis of Atom, Krait & Cortex A15

by Anand Lal Shimpi on January 4, 2013 7:32 AM EST - Posted in

- Tablets

- Intel

- Samsung

- Arm

- Cortex A15

- Smartphones

- Mobile

- SoCs

Cortex A15: SunSpider 0.9.1

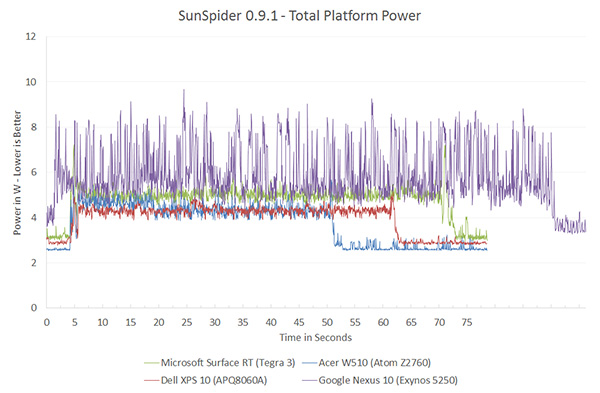

SunSpider performance in Chrome on the Nexus 10 isn't all that great to begin with, so the Exynos 5250's curve is longer than the competition's. I wouldn't pay too much attention to overall performance, as that's more of a Chrome optimization issue, but the test does begin to shed some light on Cortex A15's power consumption.

Although these line graphs are neat to look at, it's tough to quantify exactly what's going on here. From here on, every line graph will be followed by a bar chart that integrates power over the benchmark time period (excluding idle) to give the total energy used during the task, in Joules.
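The exact integration method isn't described here. As a rough sketch, assuming the power log is a list of sample timestamps in seconds and power readings in watts, and using the trapezoidal rule, a per-task energy figure could be computed like this. `energy_joules` and its window arguments are hypothetical names for illustration, not the tool behind these charts.

```python
from typing import Sequence


def energy_joules(times_s: Sequence[float], power_w: Sequence[float],
                  start_s: float, end_s: float) -> float:
    """Integrate power (W) over [start_s, end_s] with the trapezoidal rule.

    Samples outside the window (the idle lead-in and lead-out) are ignored,
    so the window is effectively clipped to the nearest sample boundaries.
    """
    if len(times_s) != len(power_w):
        raise ValueError("times_s and power_w must have the same length")
    # Keep only the samples that fall inside the benchmark window.
    window = [(t, p) for t, p in zip(times_s, power_w) if start_s <= t <= end_s]
    total = 0.0
    for (t0, p0), (t1, p1) in zip(window, window[1:]):
        # Area of one trapezoid: mean power over the interval times its width.
        total += 0.5 * (p0 + p1) * (t1 - t0)
    return total
```

For example, `energy_joules([0, 1, 2, 3, 4, 5], [0.2, 0.2, 1.0, 1.0, 0.2, 0.2], 1, 4)` integrates only the samples from t = 1 s to t = 4 s and returns roughly 2.2 J. The trapezoidal rule is chosen here because it handles unevenly spaced samples without any resampling.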

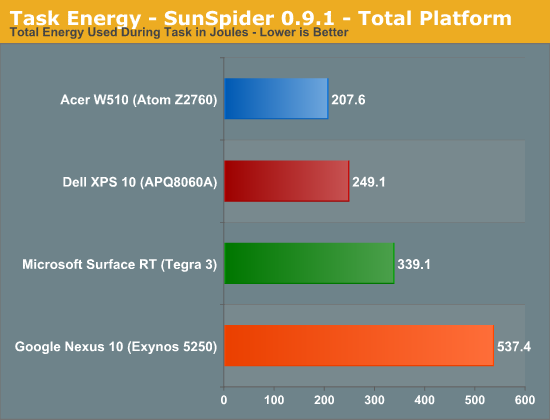

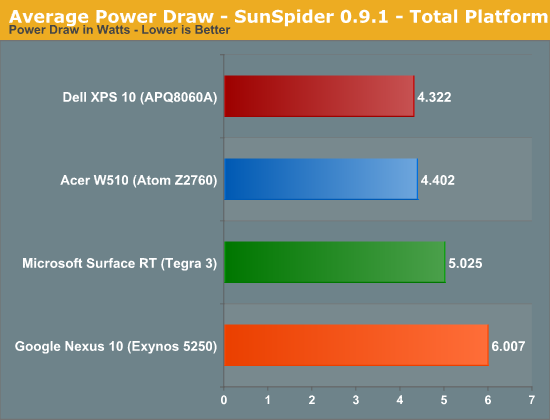

The data here reflects what you see in the chart above fairly well. Acer/Intel edge out Dell/Qualcomm in total energy consumed during the test. The Nexus 10 doesn't do so well here, but that's likely more of a software issue than anything else.
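It helps to keep in mind why a slow run hurts in an energy chart. Energy is power integrated over time, which is the same as the average power $\bar{P}$ over the window multiplied by the window's length:

$$
E = \int_{t_{\text{start}}}^{t_{\text{end}}} P(t)\,dt = \bar{P}\,\bigl(t_{\text{end}} - t_{\text{start}}\bigr)
$$

So a device that takes longer to finish the benchmark accumulates more Joules even at the same average power draw, which is one way a Chrome-side performance issue can show up as a worse energy result for the Nexus 10.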

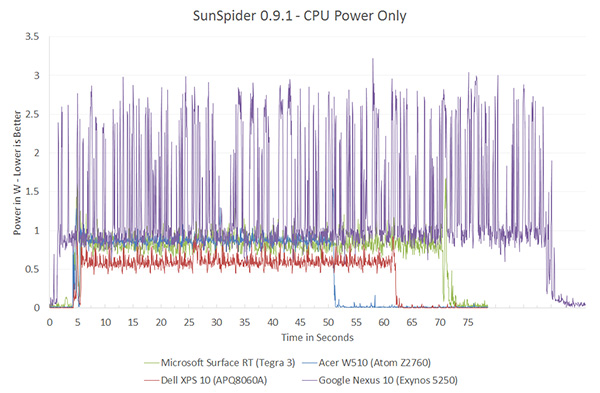

CPU power is just insane. Peak power consumption is around 3W, compared to around 1W for the competition.

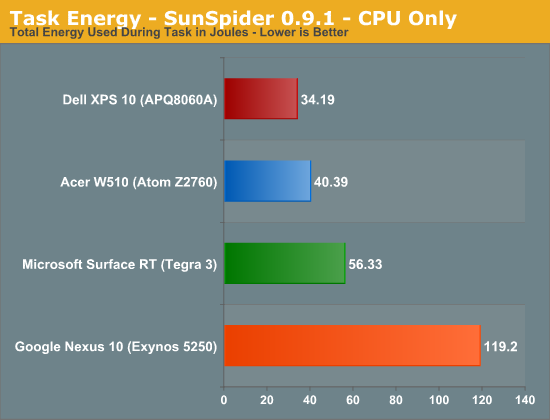

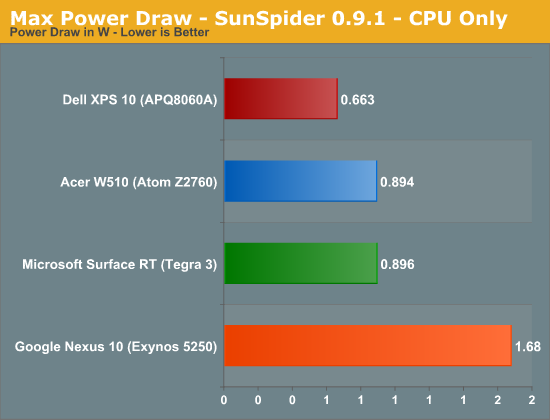

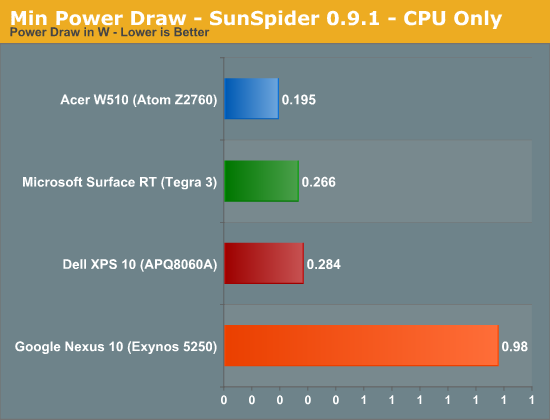

Looking at the CPU core itself, Qualcomm appears to have the advantage here, but keep in mind that we aren't yet tracking L2 cache power on Krait (we are on Atom). Regardless, Atom and Krait are very close.

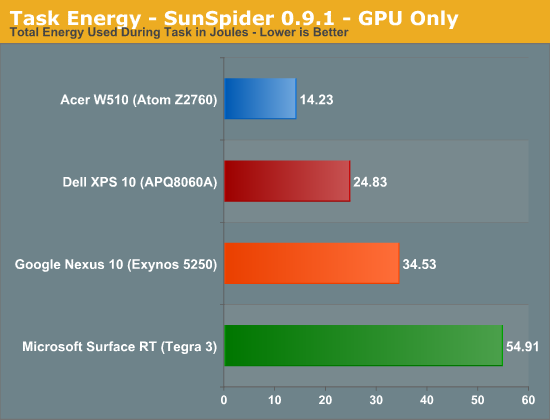

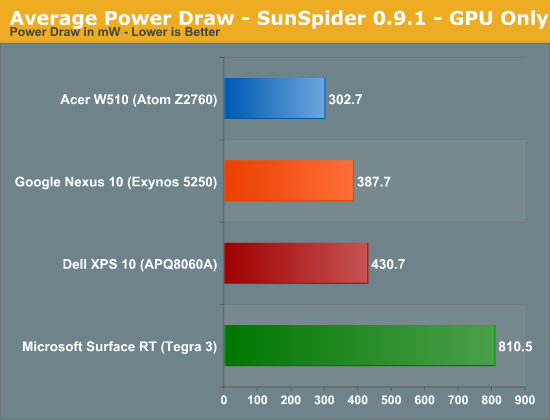

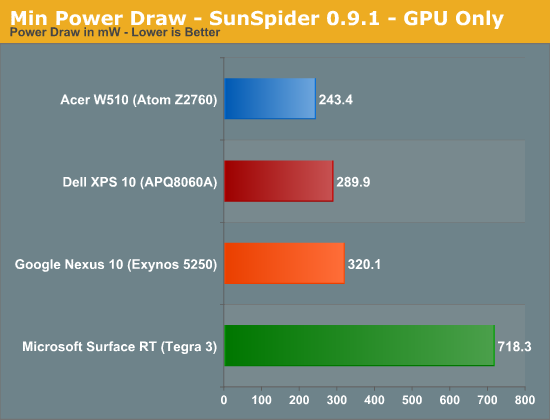

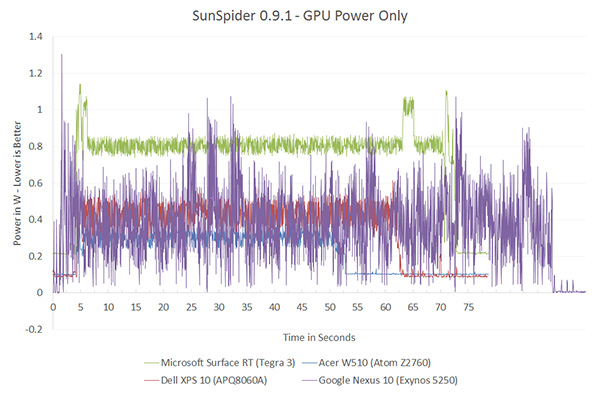

Even GPU power consumption is pretty high compared to everything else (minus Tegra 3).

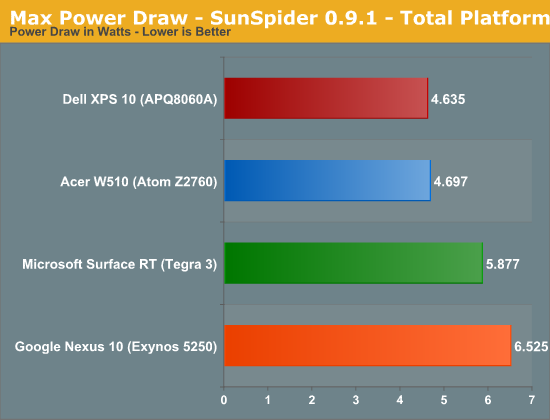

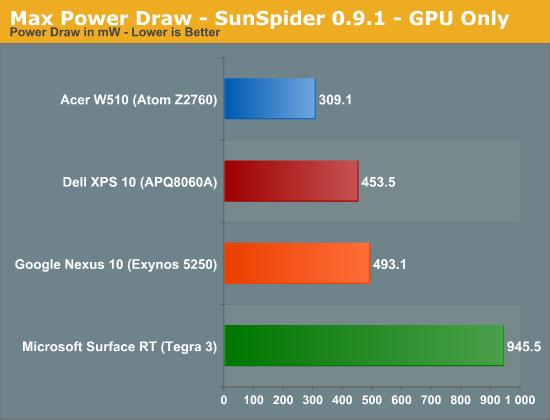

SunSpider - Max, Avg, Min Power

For your reference, the remaining graphs present max, average and min power draw throughout the course of the benchmark (excluding beginning/end idle times).
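A similar sketch for the max, average and min figures, assuming the same hypothetical lists of timestamps (seconds) and power readings (watts) plus a known benchmark window. `summarize_power` and `PowerSummary` are illustrative names; note that the plain mean used here only equals a time-weighted average when the samples are evenly spaced.

```python
from statistics import mean
from typing import NamedTuple, Sequence


class PowerSummary(NamedTuple):
    max_w: float
    avg_w: float
    min_w: float


def summarize_power(times_s: Sequence[float], power_w: Sequence[float],
                    start_s: float, end_s: float) -> PowerSummary:
    """Max, average and min power over [start_s, end_s], ignoring idle samples outside it."""
    if len(times_s) != len(power_w):
        raise ValueError("times_s and power_w must have the same length")
    # Drop the idle samples before start_s and after end_s.
    active = [p for t, p in zip(times_s, power_w) if start_s <= t <= end_s]
    if not active:
        raise ValueError("no samples inside the benchmark window")
    # Plain sample mean: matches the time-weighted average only for uniform sampling.
    return PowerSummary(max(active), mean(active), min(active))
```

With `summarize_power([0, 1, 2, 3, 4], [0.1, 2.0, 1.0, 3.0, 0.1], 1, 3)`, the 0.1 W idle samples at each end are excluded, leaving a maximum of 3.0 W, an average of 2.0 W and a minimum of 1.0 W.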

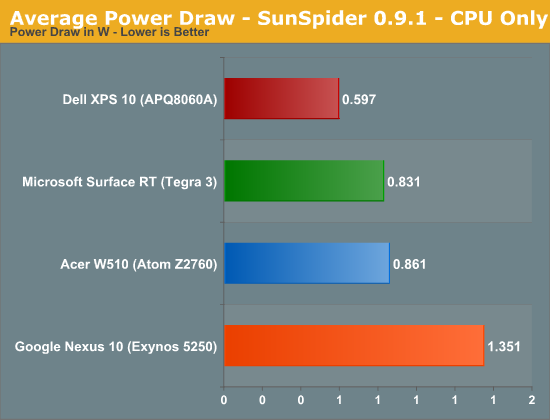

Average Power Draw

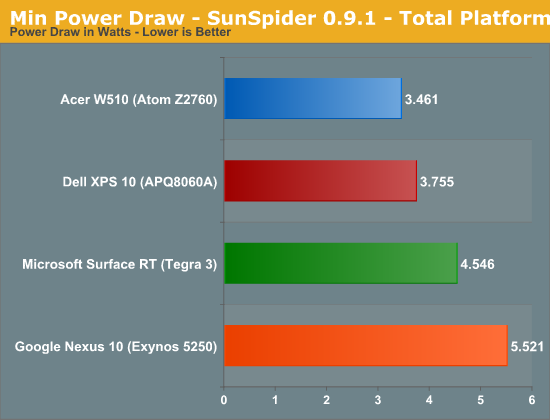

Minimum Power Draw

140 Comments


metafor - Friday, January 4, 2013

It matters to a degree. Look at the CPU power chart: the CPU is constantly being ramped from low to high frequencies and back. Tegra automatically switches the CPU to a low-leakage core at some frequency threshold. This helps in almost all situations except workloads that constantly keep the CPU above that threshold, which, if you look at the graph, isn't the case here.

That being said, that doesn't mean it'll be anywhere near enough to catch up to its Atom and Krait competitors.
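The argument about frequency ramping can be made concrete with a toy model. This Python sketch assumes a single fixed switch point, `COMPANION_MAX_MHZ`, whose value is made up for illustration, and treats each sample of a CPU frequency trace independently; NVIDIA's actual switching policy is not described in this thread and is likely more involved.

```python
from typing import Sequence

# Hypothetical switch point for illustration only; not Tegra 3's documented value.
COMPANION_MAX_MHZ = 500.0


def pick_core(freq_mhz: float, threshold_mhz: float = COMPANION_MAX_MHZ) -> str:
    """Return which core type would service a given requested frequency."""
    return "companion" if freq_mhz <= threshold_mhz else "main"


def companion_share(freqs_mhz: Sequence[float],
                    threshold_mhz: float = COMPANION_MAX_MHZ) -> float:
    """Fraction of frequency samples that would land on the low-leakage core."""
    if not freqs_mhz:
        return 0.0
    on_companion = sum(1 for f in freqs_mhz if pick_core(f, threshold_mhz) == "companion")
    return on_companion / len(freqs_mhz)


bursty = [1300, 300, 1300, 300]       # ramps up and back down repeatedly
sustained = [1300, 1300, 1300, 1300]  # pinned above the threshold
print(companion_share(bursty), companion_share(sustained))  # 0.5 0.0
```

A bursty trace spends part of its time on the companion core, while a workload pinned above the threshold never benefits, which is the distinction the comment draws.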

jeffkro - Saturday, January 5, 2013

The Tegra 3 is also not the most powerful ARM processor; Intel obviously chose it to make Atom look better.

npoe1 - Wednesday, January 9, 2013

From one of Anand's articles: "NVIDIA recently revealed it was doing something similar to this with its upcoming Tegra 3 (Kal-El) SoC. NVIDIA outfitted its next-generation SoC with five CPU cores, although only a maximum of four are visible to the OS. If you’re running light tasks (background checking for email, SMS/MMS, twitter updates while your phone is locked) then a single low power Cortex A9 core services those needs while the higher performance A9s remain power gated. Request more of the OS (e.g. unlock your phone and load a webpage) and the low power A9 goes to sleep and the 4 high performance cores wake up." http://www.anandtech.com/show/4991/arms-cortex-a7-...

jeffkro - Saturday, January 5, 2013

A15 currently pulls too much power for a smartphone, but it makes for a great tablet chip as well as providing enough horsepower for basic laptops.

djgandy - Friday, January 4, 2013

The most obvious thing here is that PowerVR graphics are far superior to Nvidia graphics.

Wolfpup - Friday, January 4, 2013

Actually no, that isn't obvious at all. Tegra 3 is a two-year-old design on a process that's two generations old. The fact that it's still competitive today is just because it was so good to begin with. It'll be necessary to look at the performance and power usage of upcoming Nvidia chips on the same process to actually say anything "obvious" about them.

Death666Angel - Friday, January 4, 2013

According to Wikipedia, the 545 is from January '10, so it's 3 years old now. The only current-gen part here is the Mali. The 225 is just a 220 with a higher clock, so it's about 1.5 to 2 years old.

djgandy - Friday, January 4, 2013

And a 4-5 year old Atom and a 2-3+ year old SGX545 aren't old designs? Look at the power usage of Nvidia. It's way beyond what is acceptable for any SoC design. Phones from 2 years ago used far less power on older processes than the 40nm T3! Just look at the GLBenchmark battery life tests for the HTC One X and you'll see how poor the T3 GPU is. In fact, just take your Nvidia goggles off and re-read this whole article.

Wolfpup - Friday, January 4, 2013

Atom's basic design is old, but the manufacturing process is newer. Tegra 3 is at the biggest disadvantage here by default. You accuse me of bias when it appears you're actually biased.

Chloiber - Tuesday, January 8, 2013

First of all, it's still 40nm. Second of all, you mentioned the battery benchmarks yourself. Go look at the Nexus 4 review and see how the international version of the One X fares. Battery life on the T3 One X is very good if you take into account that it's built on 40nm, compared to the One XL's 28nm, and uses 4 cores.