Intel SSD 335 (240GB) Review

by Kristian Vättö on October 29, 2012 11:30 AM EST

Testing Endurance

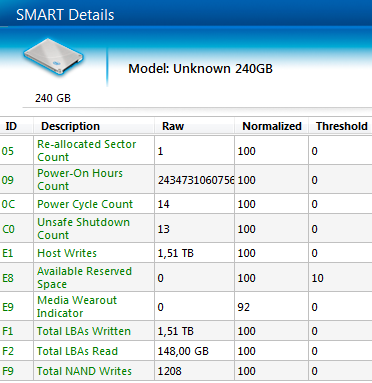

We've mentioned in the past that NAND endurance is not an issue for client workloads. While Intel's SSD 335 moves to 20nm MLC NAND, the NAND itself is still rated at the same 3,000 P/E cycles as Intel's 25nm MLC NAND. We usually can't do any long-term endurance testing for the initial review because it simply takes too long to wear out an SSD: even if you're constantly writing to a drive, it will take weeks, possibly even months, for it to wear out. Fortunately, Intel reports total NAND writes and the percentage of lifespan remaining as SMART values that can be read using the Intel SSD Toolbox. The values we want to pay attention to are the E9 and F9 SMART attributes, which represent the Media Wearout Indicator (MWI) and total NAND writes, respectively. Using those values, we can estimate the long-term endurance of an SSD without weeks of testing. Here is what the SMART data looked like before I started our endurance test:
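For readers who want to track the same two attributes outside the Intel SSD Toolbox, here is a minimal Python sketch that pulls them out of a `smartctl -A` (smartmontools) attribute table. It assumes the usual column layout of that table and that the drive exposes E9/F9 under their decimal IDs, 233 and 249. The function and constant names are our own illustrations, and since the units of the F9 raw value are drive-specific, the sketch keeps it as an unparsed string.

```python
# Hex attribute IDs mentioned in the article; smartctl prints IDs in decimal.
MWI_ID = 0xE9          # 233: Media Wearout Indicator
NAND_WRITES_ID = 0xF9  # 249: total NAND writes (raw-value units are drive-specific)


def parse_smart_attributes(text):
    """Return {attribute_id: (normalized_value, raw_value)} from a
    `smartctl -A`-style table.

    Assumes the usual column order:
    ID# ATTRIBUTE_NAME FLAG VALUE WORST THRESH TYPE UPDATED WHEN_FAILED RAW_VALUE
    """
    attrs = {}
    for line in text.splitlines():
        fields = line.split()
        # Data rows start with a numeric ID and have at least 10 columns;
        # headers and banner lines are skipped.
        if len(fields) < 10 or not fields[0].isdigit():
            continue
        attr_id = int(fields[0])
        value = int(fields[3])  # normalized VALUE column (e.g. the MWI, 100 down to 1)
        raw = fields[9]         # first token of RAW_VALUE, kept as a string
        attrs[attr_id] = (value, raw)
    return attrs
```

Feed it the text output of `smartctl -A /dev/sdX` (the device path is a placeholder) and compare the MWI and NAND-write entries between runs, the same before/after comparison we make with the Toolbox screenshots.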

This screenshot was taken after all our regular tests had been run, hence there are already some writes to the drive, although nothing substantial. What surprised me was that the MWI was already at 92, even though I had only written 1.2TB to the NAND. Remember that the MWI begins at 100 and then decreases down to 1 as the drive uses up its program/erase cycles. Even after it has hit 1, it's likely the drive can still withstand additional write/erase cycles thanks to MLC NAND typically behaving better than the worst-case estimates.

We've never received an Intel SSD sample that started with such a low MWI, indicating either a firmware bug or extensive in-house testing before the drive was sent to us.

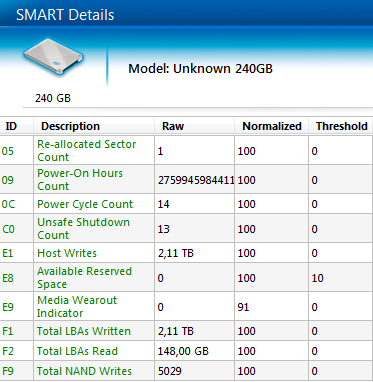

To write as much as possible to the drive before the NDA lift, I first filled the drive with incompressible data and then proceeded with incompressible 4KB random writes at a queue depth of 32. SandForce does real-time data compression and deduplication, so using incompressible random data was the best way to write a lot of data to the NAND in a short period of time. I ran the tests in roughly 10-hour blocks. Here is the SMART data after 11 hours of writing:
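Why incompressible data matters can be illustrated with a short Python sketch. zlib is only a stand-in here, not a model of SandForce's proprietary compression, but it shows the same effect: random bytes don't shrink, while a block of zeros shrinks to almost nothing, so only the former forces a compressing controller to commit every byte to NAND. The `random_aligned_offsets` helper (a hypothetical name) sketches the 4KB-aligned random access pattern; it does not issue any I/O or reproduce a queue depth of 32, which needs an asynchronous benchmark tool.

```python
import os
import random
import zlib

BLOCK = 4096  # 4KB transfer size used in the endurance test


def compression_ratio(data):
    """Compressed size / original size, using zlib as a stand-in compressor."""
    return len(zlib.compress(data, 9)) / len(data)


def random_aligned_offsets(span_bytes, count, seed=None):
    """Yield `count` random byte offsets within `span_bytes`, each aligned to 4KB."""
    rng = random.Random(seed)
    blocks = span_bytes // BLOCK
    for _ in range(count):
        yield rng.randrange(blocks) * BLOCK


incompressible = os.urandom(BLOCK)  # random bytes: zlib cannot shrink them
compressible = bytes(BLOCK)         # all zeros: shrinks to a few dozen bytes
```

`compression_ratio(incompressible)` comes out at about 1.0 (slightly above, due to zlib's framing overhead), while `compression_ratio(compressible)` is well under 0.05.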

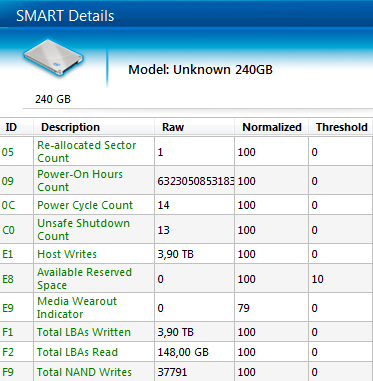

I had written another ~3.8TB to the NAND in just 11 hours, but what's shocking is that the MWI had dropped from 92 to 91. With the SSD 330, Anand wrote 7.6TB to the NAND and the MWI stayed at 100, and that was a 60GB model; our SSD 335 is 240GB and should thus be more durable, since there is more NAND to spread the writes across. It's certainly possible that the MWI was on the edge between 92 and 91 after Intel's in-house testing, but I decided to keep writing to see if that was the case. Let's fast-forward to the end of the 105 hours I spent writing to the drive in total:
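A rough way to put the two drives on the same footing is to express NAND writes as full-capacity fills. This Python sketch uses user capacity in decimal units as a simplification (the physical NAND on each drive is somewhat larger), so the figures are approximate, and the function name is illustrative.

```python
def full_drive_writes(nand_written_tb, capacity_gb):
    """How many times `nand_written_tb` of NAND writes would fill the drive's
    user capacity (decimal units: 1 TB = 1,000 GB)."""
    return nand_written_tb * 1000 / capacity_gb


ssd330 = full_drive_writes(7.6, 60)   # ~126.7 fills, MWI still at 100
ssd335 = full_drive_writes(3.8, 240)  # ~15.8 fills, MWI dropped by one point
```

By this measure the SSD 330 absorbed roughly eight times as many fills without its MWI moving, which is why a one-point drop on the SSD 335 after about 16 fills stood out.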

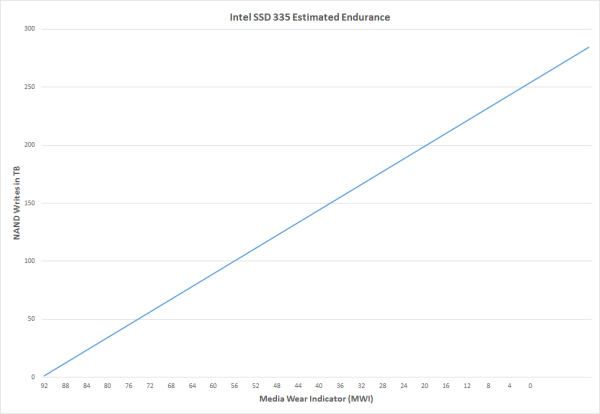

In a few days, I managed to write a total of 37.8TB to the NAND, and during that time the MWI dropped from 92 to 79. In other words, I used up 13% of the drive's available P/E cycles. This is far from good news. Based on the data I gathered, the MWI would hit 0 after around 250TB of NAND writes, which translates to fewer than 1,000 P/E cycles.
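The extrapolation can be approximated with a simple linear fit through two readings. This Python sketch assumes wear progresses linearly with NAND writes and that the 240GB SSD 335 carries 256GiB of raw NAND; both are assumptions on our part, and the function names are illustrative. Fitting through the first (1.2TB, MWI 92) and last (37.8TB, MWI 79) readings gives roughly 260TB, in the same ballpark as the ~250TB estimate, and either figure works out to fewer than 1,000 full P/E cycles of 256GiB of NAND.

```python
def writes_at_mwi(w1_tb, mwi1, w2_tb, mwi2, target_mwi=0):
    """Linearly extrapolate the total NAND writes (TB) at which the MWI
    reaches `target_mwi`, from two (writes, MWI) readings."""
    if mwi1 == mwi2:
        raise ValueError("MWI did not change between readings; cannot extrapolate")
    tb_per_point = (w2_tb - w1_tb) / (mwi1 - mwi2)
    return w2_tb + (mwi2 - target_mwi) * tb_per_point


def implied_pe_cycles(total_writes_tb, raw_nand_gib=256):
    """Convert total NAND writes into full P/E cycles of the raw NAND array.
    raw_nand_gib=256 is an assumed raw capacity for the 240GB model."""
    raw_bytes = raw_nand_gib * 2**30
    return total_writes_tb * 1e12 / raw_bytes


end_tb = writes_at_mwi(1.2, 92, 37.8, 79)  # ≈ 260 TB
cycles = implied_pe_cycles(end_tb)         # ≈ 947 cycles
```

Note that the "fewer than 1,000 cycles" conclusion depends on dividing by the raw NAND capacity; dividing by the 240GB user capacity instead would put the figure slightly above 1,000.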

I showed Intel my findings and they were as shocked as I was. The drive had gone through their validation before shipping and nothing out of the ordinary was found. Intel confirmed that the NAND in the SSD 335 should indeed be rated at 3,000 P/E cycles, so my findings contradicted that rating by a fairly significant margin. Intel hadn't seen anything like this and asked me to send the drive back for additional testing. We'll be getting a new SSD 335 sample to see if we can replicate the issue.

It's understandable that the endurance of 20nm NAND may be slightly lower than that of 25nm NAND even though both are rated at 3,000 P/E cycles (Intel also has 25nm NAND rated at 5,000 cycles), because 25nm is now a mature process whereas 20nm is very new. Remember that the P/E cycle rating is the minimum the NAND must withstand; in reality it can be much more durable, as we saw with the SSD 330 (based on our tests, its NAND was good for at least 6,000 P/E cycles). Hence both 20nm and 25nm MLC NAND can be rated at 3,000 cycles while their real-world endurance varies, although both should still last for at least 3,000 cycles.

It's too early to conclude much based on our sample size of one. There's always the chance that our drive was defective or subject to a firmware bug. We'll be updating this section once we get a new drive in house for additional testing.

69 Comments


MichaelD - Tuesday, October 30, 2012

I agree! Take Corsair for example; they've got like a hundred (sic) different SKUs. "Force", "Subforce", "Battle", "Skeedaddle" and "Primo" versions of SSDs...marketing FUD at its finest. Only the .5% of SSD buyers (like AT readers) will actually look at specs and decide. The other 99.5% will just buy whatever box has the "fastest looking" cover art.

zanon - Monday, October 29, 2012

Now they just get giggle-stomped across the board by Samsung. I hope Intel decides to be competitive again someday, but in the meantime it's hard to see any reason to bother.

MadMan007 - Monday, October 29, 2012

Read past the synthetics to the real-world tests. It is at least competitive in most cases, and all these drives are stupid fast anyway.

jeffbui - Monday, October 29, 2012

Why is there such a discrepancy between the power consumption figures given by the manufacturer vs what you're getting from your testing? (Samsung mostly) Other websites are getting completely different power usage figures as well.

DanNeely - Monday, October 29, 2012

Comments on one of AT's other recent SSD articles claimed this is because the power consumption test is being done using an external enclosure that never lets the drive drop into its lowest power states. I didn't see any official comment on it.

Kristian Vättö - Monday, October 29, 2012

Some manufacturers, such as Samsung, report their power numbers with DIPM/HIPM (Device/Host Initiated Link Power Management) enabled, which can lower power consumption significantly. DIPM/HIPM are not enabled on desktops by default, and I'm not sure if all laptops have them enabled either.

We have run tests with DIPM/HIPM enabled and gotten results similar to what manufacturers report, but so far we have kept publishing numbers with DIPM/HIPM disabled. We will probably add DIPM/HIPM numbers once we redo our SSD testing methodology.

DanNeely - Monday, October 29, 2012

If available, would the feature be called DIPM/HIPM in our BIOSes, or is it likely to be obfuscated to something else?

Also, why is it often disabled by default? Is there a penalty related to enabling it?

Kristian Vättö - Monday, October 29, 2012

Here are instructions for enabling DIPM/HIPM: http://www.sevenforums.com/tutorials/177819-ahci-l...

On desktops it's not as important because you aren't running off a battery, and the power that SSDs/HDDs use is so small anyway that it won't affect your power bill. I'm not sure why it's disabled, though, because I haven't heard of any concrete issues caused by it.

Per Hansson - Monday, October 29, 2012

Actually, I'm not sure that DIPM/HIPM is the whole reason. I mentioned it previously, and it can certainly make a dramatic difference.

But just as important is the measuring equipment used for the power consumption.

A cheap DMM only measures at very slow intervals; you would get very different results measuring with a $100 Fluke vs. a $1000 Fluke vs. a scope with really high bandwidth.

That's because the SSD changes power levels many hundreds of times per second, which is too fast for a regular DMM, so you only get some of the data points, not enough to make a reliable average...
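The sampling argument can be illustrated with a toy simulation. This Python sketch assumes a meter that takes instantaneous point samples and a drive whose power alternates on a strict 10 ms cycle; the power levels and timings are made up for illustration, not measured. An integrating meter that averages over its whole conversion window would smooth out much of this fluctuation instead, and real drive activity is not strictly periodic.

```python
# Hypothetical power levels and timing, chosen only for illustration.
ACTIVE_W, IDLE_W = 3.0, 0.1
PERIOD_MS, ACTIVE_MS = 10, 2  # active for 2 ms out of every 10 ms


def power_at(t_ms):
    """Instantaneous power of the hypothetical drive at integer time t_ms."""
    return ACTIVE_W if t_ms % PERIOD_MS < ACTIVE_MS else IDLE_W


def sampled_average(interval_ms, offset_ms=0, duration_ms=10_000):
    """Average of point samples taken every interval_ms, starting at offset_ms."""
    samples = [power_at(t) for t in range(offset_ms, duration_ms, interval_ms)]
    return sum(samples) / len(samples)


true_avg = sampled_average(1)     # dense sampling: ≈ 0.68 W
slow_a = sampled_average(100)     # every sample lands in the active phase: ≈ 3.0 W
slow_b = sampled_average(100, 5)  # every sample lands in the idle phase: ≈ 0.1 W
```

Sampling densely recovers the true average, while slow samples that happen to line up with the drive's cycle report only the active or only the idle level.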

MrSpadge - Monday, October 29, 2012

Wouldn't the slower / cheaper DMM measure over longer intervals (that's why it's slow in the first place) and hence automatically average over some fluctuation?