AMD A10-5800K & A8-5600K Review: Trinity on the Desktop, Part 2

by Anand Lal Shimpi on October 2, 2012 1:45 AM EST

Power Consumption

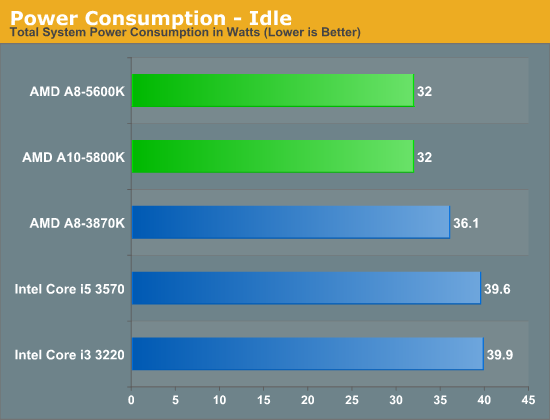

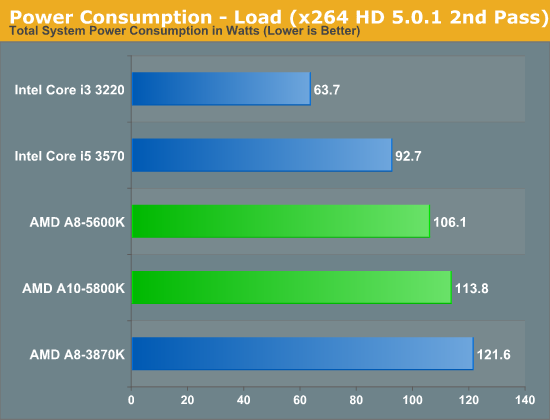

Intel has a full process node advantage when you compare Ivy Bridge and Trinity (22nm vs. 32nm). As a result of that, plus an architectural efficiency advantage, you simply get much better power consumption from the Core i3 than you do from Trinity. Idle power is very good, but under heavy CPU load Trinity consumes considerably more power. You're basically looking at quad-core Ivy Bridge levels of power usage under load, but performance closer to that of a dual-core Ivy Bridge. AMD really needs a lot of design-level efficiency improvements to get power consumption under control. Compared to Llano, Trinity does appear to be a bit more efficient, so there's an actual improvement there.

On the processor graphics side, of course, the story is much closer: Trinity is a bit more power hungry than Ivy Bridge, but not by nearly the same margin.

178 Comments

View All Comments

mattgmann - Tuesday, October 2, 2012

I still don't understand where these CPUs fit in the market. Sure, this line of CPUs has made some advances, but the features it relies on to succeed aren't good enough in real-world applications.

1. Gaming. It's still not fast enough to run modern games. Not to argue, but the lowest possible settings and super low frame rates aren't good enough.

2. Content creation. At certain points in your day you may run a short program that's optimized to work well, but for the rest of the day you'll be wishing you had a quad-core Intel.

3. Casual home/office use. A pure waste of electricity. The Intel chip decodes HD video fine and provides a quicker user experience at a much lower energy cost (and is dead silent).

The "upgrade path" argument doesn't make a ton of sense either. Not many people actually follow upgrade paths on platforms because, in reality, you end up paying today's prices for yesterday's technology. Low-end systems just aren't meant to be upgraded; they're meant to be replaced.

I REALLY want AMD to give Intel a kick in the butt. I miss the days of low-end, unlocked Intel processors. Think of what those little i3s could probably do with unlocked multipliers, 1.4V vcore and some fast memory!

At least this is a step in the right direction for AMD... sort of.

Hubb1e - Tuesday, October 2, 2012

I'm sorry, but your answers to your own question are inaccurate.

1. Gaming. For many people who are not on this forum, low/medium settings are fine, and older games are cheaper to buy and still fun. WoW and Diablo play very nicely on this APU at medium/high settings, and that is where the vast majority of casual gamers are buying.

2. Content creation. If you are wishing you had a quad-core Intel, then you need a real workstation, not an i3 competitor. I work fine on a mobile i5-540 at 2.5GHz, and 90% of the time it is idle.

3. Casual home/office use is all idle, and if you go back and look, Trinity idles lower than Ivy Bridge, so I don't see your point about it being a waste of electricity. A quicker user experience is about the SSD, not the CPU. Users will not notice a 12% difference in CPU performance.

4. I agree about the upgrade path, though as the builder for my family, FM1 being a dead end made it a socket I didn't want to touch.

mikato - Wednesday, October 3, 2012

Well said. I would also like to point out that Angry Birds and Words With Friends are also "modern games."

vegemeister - Wednesday, October 3, 2012

1. Gaming: Look at those benchmarks. Low settings, punk-ass resolution, no AA, and STILL DROPPING FRAMES.

HTPC: There are two kinds of CPUs for HTPC: those that can decode 10-bit H.264 at 1920x1080 in real time, and those that cannot. Unfortunately, this review doesn't have benchmarks for that.

iTzSnypah - Tuesday, October 2, 2012

The A4-5300 looks promising for its price and intended use. I keep telling my brother that he really needs to upgrade his computer (an 8-year-old HP with a single-core AMD Athlon 64), and the A4-5300 looks like it would fit his needs perfectly. I get tired of going to his house and waiting 5 minutes to get on the internet. Also, only being able to watch 360p/480p video (depending on the 'mood' of the computer) is beyond annoying. It's his birthday this month, so I might surprise him.

Hubb1e - Tuesday, October 2, 2012

I have a single-core Athlon 64 at 2.4GHz that works just fine. The problem is a lack of RAM, a slow hard drive, OS bloat, and a lack of GPU acceleration for YouTube. I have 1.5GB of memory and a good video card that offloads YouTube, and the single-core computer runs pretty well. I am constantly amazed at how well it works for casual use.

But yeah, an upgrade could be in order; I'd argue the Celeron G530 would be a better choice, though. Anand tested the Pentium, and it actually beats the A10 in some benchmarks. The G530 is still a full dual-core CPU and is only a few MHz slower. The A4 drops a whole module, and in the benchmarks on Tom's it looks pretty slow.

Jamahl - Tuesday, October 2, 2012

Where was the A4 benchmarked on Tom's? From what I can tell, the A6-5400K comes very close to the 3870K in gaming. The A4 will be further behind, but IMO it'll still be up with, say, the triple-core A6-3500 in performance.

Ananke - Tuesday, October 2, 2012

These are great for the OEM market, which is 95% of the PC market :). You know, what people are buying from HP, Dell, Lenovo, etc.

Enthusiasts will probably not be drawn to Trinity, but enthusiasts are a very small market.

wenbo - Tuesday, October 2, 2012

Enthusiasts are a very small market, but they are very VOCAL :)

vegemeister - Tuesday, October 2, 2012

I see that you are planning to move to a newer version of x264 for benchmarking. Since direct comparisons are going to be invalidated anyway, why not go ahead and move to a CRF encode like everyone else who isn't stuck in the last decade?

2-pass does not compress any more effectively than 1-pass. The only reason to use it is to get very close to a particular file size. x264 is much better than you at deciding how many bits it needs for acceptable quality on a particular file. These days, most people store their video on media far larger than a single file, so it no longer makes sense to benchmark the use case of squeezing as much quality as possible out of 700 MB.