The Intel Ivy Bridge (Core i7 3770K) Review

by Anand Lal Shimpi & Ryan Smith on April 23, 2012 12:03 PM EST

Posted in: CPUs, Intel, Ivy Bridge

Discrete GPU Gaming Performance

Gaming performance with a discrete GPU improves in line with the rest of what we've seen thus far from Ivy Bridge. It's definitely a step ahead of Sandy Bridge, but not enough to warrant an upgrade in most cases. If you haven't already made the jump to Sandy Bridge, however, the upgrade will serve you well.

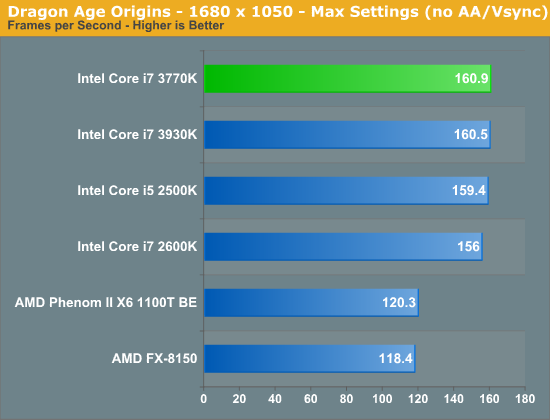

Dragon Age Origins

DAO has been a staple of our CPU gaming benchmarks for some time now. The third/first person RPG is well threaded and is influenced both by CPU and GPU performance. Our benchmark is a FRAPS runthrough of our character through a castle.
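For readers curious how a recorded runthrough becomes a single average frame rate, the calculation is just frames rendered divided by elapsed time. Below is a minimal sketch, assuming a list of cumulative per-frame timestamps in milliseconds; the function name and the sample values are hypothetical and are not taken from our test data.

```python
def average_fps(timestamps_ms):
    """Average frame rate from cumulative per-frame timestamps (milliseconds)."""
    if len(timestamps_ms) < 2:
        raise ValueError("need at least two frame timestamps")
    elapsed_s = (timestamps_ms[-1] - timestamps_ms[0]) / 1000.0
    if elapsed_s <= 0:
        raise ValueError("timestamps must be increasing")
    # N timestamps bound N - 1 frame intervals.
    return (len(timestamps_ms) - 1) / elapsed_s


# Hypothetical capture: one frame every 25 ms for one second.
sample = [i * 25.0 for i in range(41)]
print(f"{average_fps(sample):.1f} fps")
```

Averaging over the whole run hides short dips, so an average like this is best read alongside the run itself rather than as a measure of smoothness.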

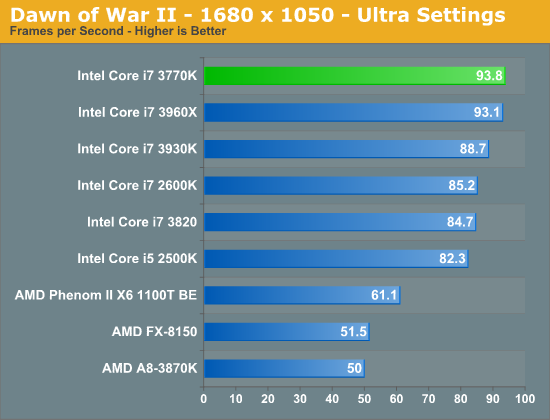

Dawn of War II

Dawn of War II is an RTS title that ships with a built-in performance test. I ran it at Ultra quality settings at 1680 x 1050:

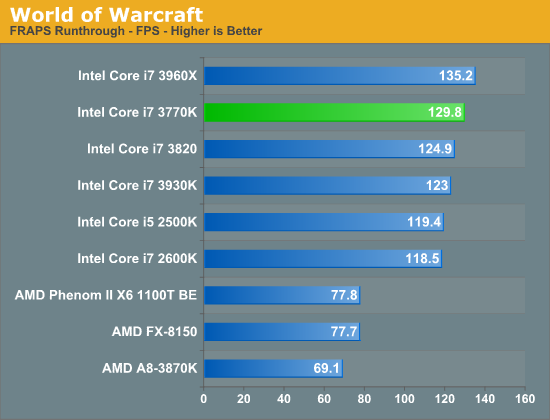

World of Warcraft

Our WoW test is run at High quality settings on a lightly populated server in an area where no other players are present to produce repeatable results. We ran at 1680 x 1050.

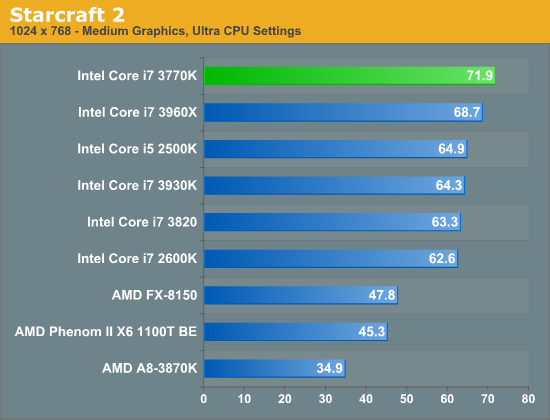

Starcraft II

We have two Starcraft II benchmarks: a GPU test and a CPU test. The GPU test is mostly a navigate-around-the-map test, as scrolling and panning around tends to be the most GPU bound activity in the game. Our CPU test involves a massive battle of six armies in the center of the map, stressing the CPU more than the GPU. At these low quality settings, however, both benchmarks are influenced by CPU and GPU. We'll get to the GPU test shortly, but our CPU test results are below. The benchmark runs at 1024 x 768 at Medium Quality settings with all CPU influenced features set to Ultra.

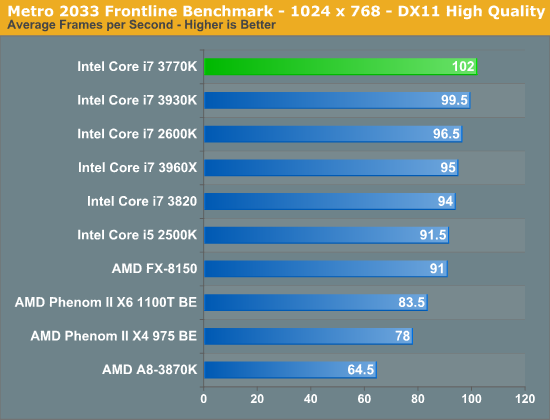

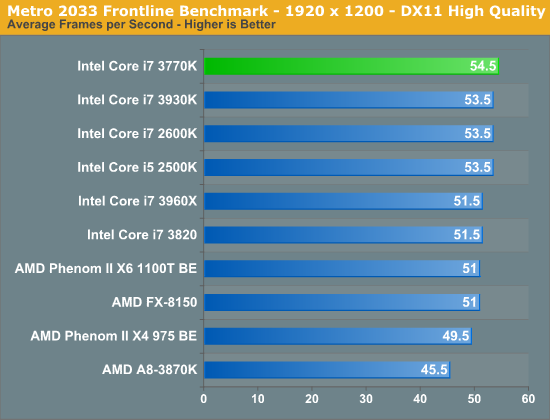

Metro 2033

We're using the Metro 2033 benchmark that ships with the game. We run the benchmark at 1024 x 768 for a more CPU bound test as well as 1920 x 1200 to show what happens in a more GPU bound scenario.

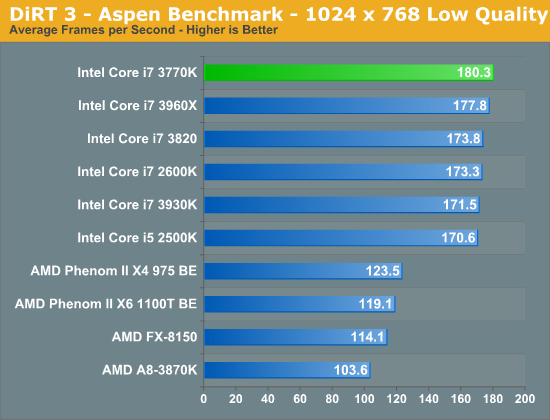

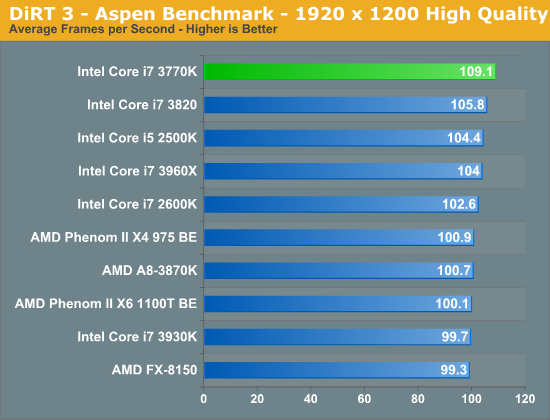

DiRT 3

We ran two DiRT 3 benchmarks to get an idea of CPU bound and GPU bound performance. First, the CPU bound settings:

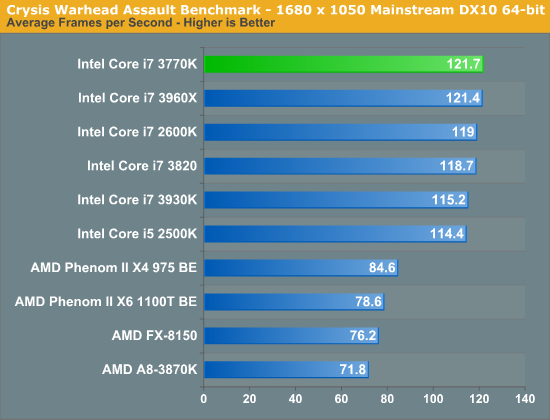

Crysis: Warhead

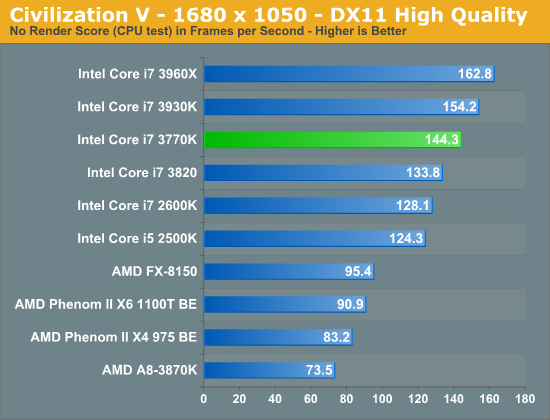

Civilization V

Civ V's lateGameView benchmark presents us with two separate scores: average frame rate for the entire test as well as a no-render score that only looks at CPU performance. We're looking at the no-render score here to isolate CPU performance alone:

173 Comments

ijozic - Thursday, April 26, 2012 - link

Maybe because people who prefer to have the IPS screen would also like to have support for graphics switching to have a nice battery life while not doing anything GPU intensive. This was the one thing I expected from Ivy Bridge upgrade and NADA.

uibo - Monday, April 23, 2012 - link

Does anyone know if the 24Hz issue has been resolved?

uibo - Monday, April 23, 2012 - link

nevermind just saw the htpc perspective review

anirudhs - Monday, April 23, 2012 - link

I didn't notice that issue. 23.976*1000 = 23976 frames, 24 * 1000 = 24000 frames, in 16 mins 40 secs. So that's about one second of mismatch for every 1000 seconds. I could not notice this discrepancy while playing a Blu Ray on my PC. Could you?

Old_Fogie_Late_Bloomer - Monday, April 23, 2012 - link

Okay, well, I'm pretty sure that you would notice two seconds of discrepancy between audio and video after half an hour of viewing, or four seconds after an hour, or eight seconds by the end of a two-hour movie.

However, the issue is actually more like having a duplicated frame every 40 seconds or so, causing a visible stutter, which seems like it would be really obnoxious if you started seeing it. I don't use the on-board SB video, so I can't speak to it, but clearly it is an issue for many people.
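The arithmetic in this exchange can be checked with a short sketch. It assumes film content at 23.976 fps shown on a display refreshing at exactly 24 Hz; the function names are illustrative only and do not come from any player or driver.

```python
def repeat_interval_s(content_fps, display_hz):
    """Seconds between repeated (or dropped) frames when the two rates differ."""
    diff = abs(display_hz - content_fps)
    if diff == 0:
        return float("inf")  # rates match, so no frame is ever repeated
    return 1.0 / diff


def surplus_refreshes(content_fps, display_hz, seconds):
    """Display refreshes in excess of content frames over a viewing period."""
    return abs(display_hz - content_fps) * seconds


interval = repeat_interval_s(23.976, 24.0)
print(f"one repeated frame every {interval:.1f} s")
print(f"{surplus_refreshes(23.976, 24.0, 1000):.0f} surplus refreshes per 1000 s")
```

With these inputs the interval comes out near 41.7 seconds, which matches the "every 40 seconds or so" figure, and the 24 surplus refreshes per 1000 seconds correspond to the one second of mismatch computed above.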

JarredWalton - Monday, April 23, 2012 - link

I watch Hulu and Netflix streams on a regular basis. They do far more than "stutter" one frame out of every 960. And yet, I'm fine with their quality and so are millions of other viewers. I think the crowd that really gets irritated by the 23.976 FPS problems is diminishingly small. Losing A/V sync would be a horrible problem, but AFAIK that's not what happens so really it's just a little 0.04 second "hitch" every 40 seconds.

Old_Fogie_Late_Bloomer - Monday, April 23, 2012 - link

Well, I can certainly appreciate that argument; I don't really use either of those services, but I know from experience they can be glitchy. On the other hand, if I'm watching a DVD (or <ahem> some other video file <ahem>) and it skips even a little bit, I know that I will notice it and usually it drives me nuts.

I'm not saying that it's a good (or, for that matter, bad) thing that I react that way, and I know that most people would think that I was being overly sensitive (which is cool, I guess, but people ARE different from one another). The point is, if the movie stutters every 40 seconds, there are definitely people who will notice. They will especially notice if everything else about the viewing experience is great. And I think it's understandable if they are disappointed at a not insignificant flaw in what is otherwise a good product.

Now, if my math is right, it sounds like they've really got the problem down to once every six-and-a-half minutes, rather than every 40 seconds. You know, for me, I could probably live with that in an HTPC. But I certainly wouldn't presume to speak for everyone.
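Working backwards from that figure gives a sense of how small the remaining mismatch would be. This is a minimal sketch, assuming exactly one repeated frame per interval; the function name is illustrative.

```python
def implied_rate_error_hz(repeat_interval_s):
    """Refresh-rate mismatch (Hz) implied by one repeated frame per interval."""
    if repeat_interval_s <= 0:
        raise ValueError("interval must be positive")
    return 1.0 / repeat_interval_s


# Six and a half minutes between repeats versus roughly 40 seconds.
print(f"{implied_rate_error_hz(6.5 * 60):.4f} Hz")
print(f"{implied_rate_error_hz(40):.4f} Hz")
```

Under that assumption, a repeat every six and a half minutes implies a mismatch of about 0.0026 Hz, roughly a tenth of the 0.025 Hz implied by a repeat every 40 seconds.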

anirudhs - Tuesday, April 24, 2012 - link

I will get a discrete GPU and then do a comparison.

anirudhs - Monday, April 23, 2012 - link

a discrete GPU! I could use a bump in transcoding performance for my ever-growing library of Blu-Rays.

chizow - Monday, April 23, 2012 - link

Looks like my concerns a few years ago with Intel's decision to go on-package and eventually on-die GPU were well warranted.

It seems as if Intel will be focusing much of the benefits from smaller process nodes toward improving GPU performance rather than CPU performance with that additional transistor budget and power saving.

I guess we will have to wait for IVB-E before we get a real significant jump in performance in the CPU segment, but I'm really not that optimistic at this point.