AMD Radeon HD 7970 Review: 28nm And Graphics Core Next, Together As One

by Ryan Smith on December 22, 2011 12:00 AM EST - Posted in GPUs, AMD, Radeon, ATI, Radeon HD 7000

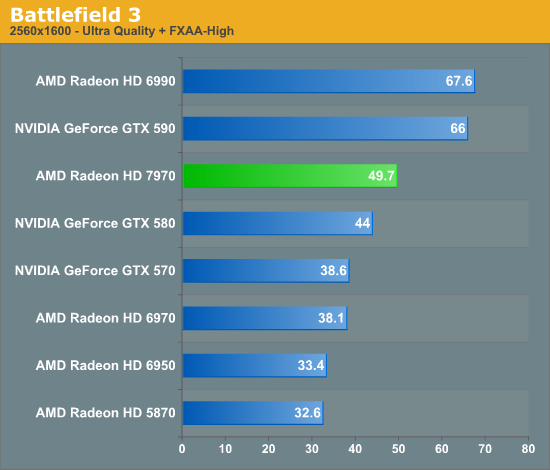

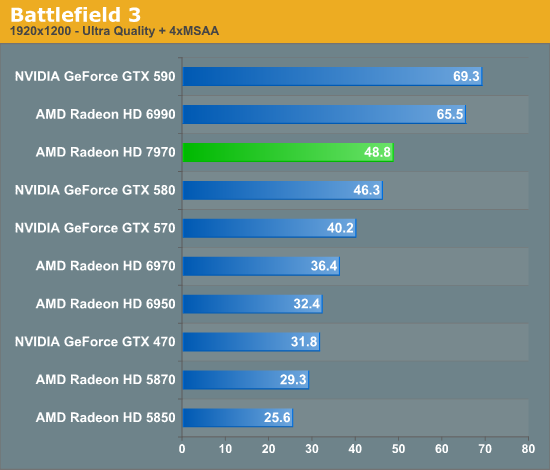

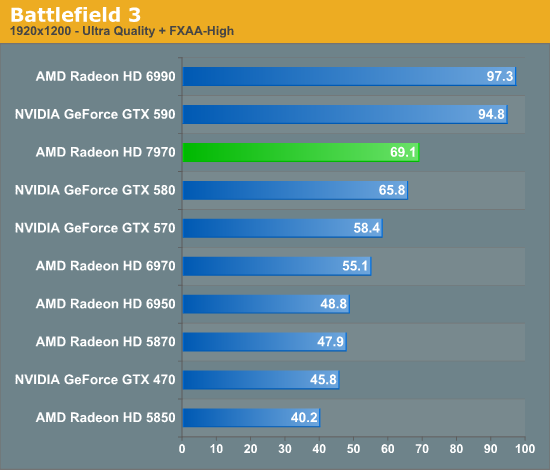

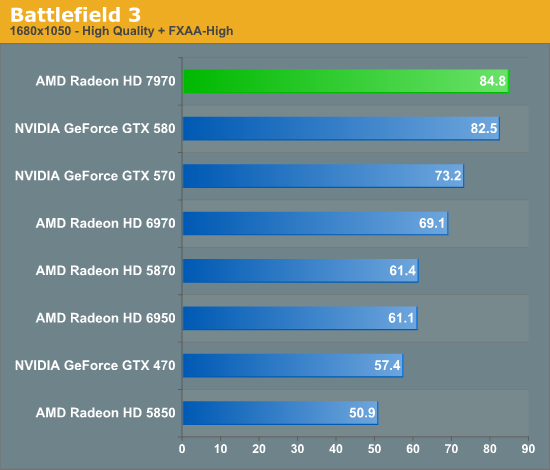

Battlefield 3

Its popularity aside, Battlefield 3 may be the most interesting game in our benchmark suite for a single reason: it’s the first AAA DX10+ game. It’s been 5 years since the launch of the first DX10 GPUs, and 3 whole process node shrinks later we’re finally at the point where games are using DX10’s functionality as a baseline rather than an addition. Not surprisingly BF3 is one of the best looking games in our suite, but as with past Battlefield games that beauty comes with a high performance cost.

How to benchmark BF3 is a point of great contention. Our preference is to always stick to the scientific method, which means our tests need to be perfectly repeatable or very, very close. For that reason we’re using an on-rails section of the single player game, Thunder Run, to do our testing. This isn’t the most strenuous part of Battlefield 3 – multiplayer can get much worse – but it’s the most consistent part of the game. In general we’ve found that minimum framerates in multiplayer are about half of the average framerate in Thunder Run, so it’s important to frame your expectations accordingly.
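To frame those expectations, here is a minimal sketch of the rule of thumb above. It assumes the roughly one-half ratio holds. The function name and the sample framerate are hypothetical illustrations, not numbers from our charts.

```python
# Rule of thumb from the text: multiplayer minimum framerates run at
# about half of the Thunder Run average. The ratio is approximate.
MULTIPLAYER_MIN_RATIO = 0.5


def estimate_multiplayer_minimum(thunder_run_avg_fps: float) -> float:
    """Estimate a multiplayer minimum framerate from a Thunder Run average."""
    if thunder_run_avg_fps < 0:
        raise ValueError("framerate must be non-negative")
    return thunder_run_avg_fps * MULTIPLAYER_MIN_RATIO


# Hypothetical input: a card averaging 60 fps in Thunder Run.
print(estimate_multiplayer_minimum(60.0))  # 30.0
```

Treat the result as a rough floor for planning purposes rather than a measurement, since multiplayer load varies with map and player count.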

With that out of the way, Battlefield 3 ends up being one of the worst games for the 7970 from a competitive standpoint. It always maintains a lead over the GTX 580, but the greatest lead is only 13% at 2560 without any MSAA, and everywhere else it’s 3-5%. Of course it goes without saying that realistically BF3 is only playable at 1920 (no MSAA) and below on any of the single-GPU cards in this lineup, so unfortunately for AMD it’s the 5% number that’s the most relevant.

Meanwhile compared to the 6970, the 7970’s performance gains are also a bit below average. 2560 and 1920 with MSAA are quite good at 30% and 34% respectively, but at 1920 without MSAA that’s only a 25% gain, which is one of the smaller gaps between the two cards throughout our entire test suite.

The big question of course is why are we only seeing such a limited lead from the 7970 here? BF3 implements a wide array of technologies so it’s hard to say for sure, but there is one thing we know they implement in the engine that only NVIDIA can use: Driver Command Lists, the same “secret sauce” that boosted NVIDIA’s Civilization V performance by so much last year. So it may be that NVIDIA’s DCL support is helping their performance here in BF3, much like it was in Civ V.

But in any case, this is probably the only benchmark that’s really underdelivered for the 7970. 5% is still a performance improvement (and we’ll take it any day of the week), but this silences any reasonable hope of being able to use 1920 at Ultra settings with MSAA on a single-GPU card for the time being.

292 Comments


CrystalBay - Thursday, December 22, 2011

Hi Ryan, all these older GPUs, i.e. (5870, GTX 570, 580, 6950), were rerun on the new hardware testbed? If so GJ, lotsa work there.

FragKrag - Thursday, December 22, 2011

The numbers would be worthless if he didn't

Anand Lal Shimpi - Thursday, December 22, 2011

Yep they're all on the new testbed, Ryan had an insane week.

Take care,

Anand

Lifted - Thursday, December 22, 2011

How many monitors on the market today are available at this resolution? Instead of saying the 7970 doesn't quite make 60 fps at a resolution maybe 1% of gamers are using, why not test at 1920x1080 which is available to everyone, on the cheap, and is the same resolution we all use on our TVs?

I understand the desire (need?) to push these cards, but I think it would be better to give us results the vast majority of us can relate to.

Anand Lal Shimpi - Thursday, December 22, 2011

The difference between 1920 x 1200 vs 1920 x 1080 isn't all that big (2304000 pixels vs. 2073600 pixels, about an 11% increase). You should be able to conclude 19x10 performance from looking at the 19x12 numbers for the most part.

I don't believe 19x12 is pushing these cards significantly more than 19x10 would, the resolution is simply a remnant of many PC displays originally preferring it over 19x10.

Take care,

Anand
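The pixel arithmetic in the comment above can be checked with a short sketch. The helper names are hypothetical; the resolutions are the ones quoted in the thread.

```python
def pixel_count(width: int, height: int) -> int:
    """Total pixels rendered per frame at a given resolution."""
    return width * height


def percent_increase(base: float, other: float) -> float:
    """How much larger `other` is than `base`, in percent."""
    return (other / base - 1) * 100


px_1200 = pixel_count(1920, 1200)  # 2304000 pixels
px_1080 = pixel_count(1920, 1080)  # 2073600 pixels

# Extra pixels at 1920x1200 relative to 1920x1080.
increase = percent_increase(px_1080, px_1200)
print(f"{px_1200} vs {px_1080}: {increase:.1f}% more pixels")
```

Pixel count is only a first-order proxy for GPU load, so this supports the "for the most part" qualifier rather than an exact scaling factor.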

piroroadkill - Thursday, December 22, 2011

Dell U2410, which I have :3

and Dell U2412M

piroroadkill - Thursday, December 22, 2011

Oh, and my laptop is 1920x1200 too, Dell Precision M4400.

My old laptop is 1920x1200 too, Dell Latitude D800..

johnpombrio - Wednesday, December 28, 2011

Heh, I too have 3 Dell U2410 and one Dell 2710. I REALLY want a Dell 30" now. My GTX 580 seems to be able to handle any of these monitors tho Crysis High-Def does make my 580 whine on my 27 inch screen!

mczak - Thursday, December 22, 2011

The text for that test is not really meaningful. Efficiency of ROPs has almost nothing to do at all with this test, this is (and has always been) a pure memory bandwidth test (with very few exceptions such as the ill-designed HD5830 which somehow couldn't use all its theoretical bandwidth).

If you look at the numbers, you can see that very well actually, you can pretty much calculate the result if you know the memory bandwidth :-). 50% more memory bandwidth than HD6970? Yep, almost exactly 50% more performance in this test just as expected.

Ryan Smith - Thursday, December 22, 2011

That's actually not a bad thing in this case. AMD didn't go beyond 32 ROPs because they didn't need to - what they needed was more bandwidth to feed the ROPs they already had.
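As a rough check of the 50% bandwidth figure discussed in this thread, here is a minimal sketch of the usual peak-bandwidth arithmetic. The bus widths and data rates below are assumptions taken from the cards' published specifications (384-bit and 256-bit buses, both at 5.5 Gbps GDDR5); they are not stated in this thread. The function name is hypothetical.

```python
def memory_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Theoretical peak memory bandwidth in GB/s.

    bus_width_bits: memory bus width in bits
    data_rate_gbps: effective per-pin data rate in Gbit/s
    """
    return bus_width_bits / 8 * data_rate_gbps


# Assumed specifications, not taken from the comments above.
hd7970 = memory_bandwidth_gbs(384, 5.5)  # 264.0 GB/s
hd6970 = memory_bandwidth_gbs(256, 5.5)  # 176.0 GB/s

# Relative bandwidth advantage of the 7970 over the 6970, in percent.
advantage = (hd7970 / hd6970 - 1) * 100
print(f"{hd7970} GB/s vs {hd6970} GB/s: {advantage:.0f}% more bandwidth")
```

With identical data rates the advantage reduces to the bus width ratio, 384/256, which is why a bandwidth-bound test would be expected to scale by about the same 50%.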