NVIDIA's GeForce GTX 560 Ti w/448 Cores: GTX 570 On A Budget

by Ryan Smith on November 29, 2011 9:00 AM EST

The Test, Crysis, BattleForge, & Metro 2033

Yesterday NVIDIA launched their first 290 series beta driver - this was intended to be the launch driver for the GTX 560-448, but QA kept it held up longer than expected. In lieu of that we are using 285.62, the WHQL 285 series driver for the GTX 560-448.

For performance we’ll be testing our Zotac card both at NVIDIA’s stock speeds and at Zotac’s factory overclock. For power/temp/noise we’ll only be looking at Zotac’s card – the lack of a reference design means that temperatures and noise can’t be extrapolated for other partners’ cards.

| Component | Details |
|---|---|
| CPU: | Intel Core i7-920 @ 3.33GHz |
| Motherboard: | Asus Rampage II Extreme |
| Chipset Drivers: | Intel 9.1.1.1015 (Intel) |
| Hard Disk: | OCZ Summit (120GB) |
| Memory: | Patriot Viper DDR3-1333 3x2GB (7-7-7-20) |
| Video Cards: | AMD Radeon HD 6870, AMD Radeon HD 6970, AMD Radeon HD 6950, NVIDIA GeForce GTX 580, NVIDIA GeForce GTX 570, NVIDIA GeForce GTX 560 Ti, Zotac GeForce GTX 560 Ti 448 Cores Limited Edition |
| Video Drivers: | NVIDIA GeForce Driver 285.62, AMD Catalyst 11.11a |
| OS: | Windows 7 Ultimate 64-bit |

As this is not a new architecture, we’ll keep the commentary thinner than usual. The near-GTX 570 specifications mean there aren’t any surprises with game performance.

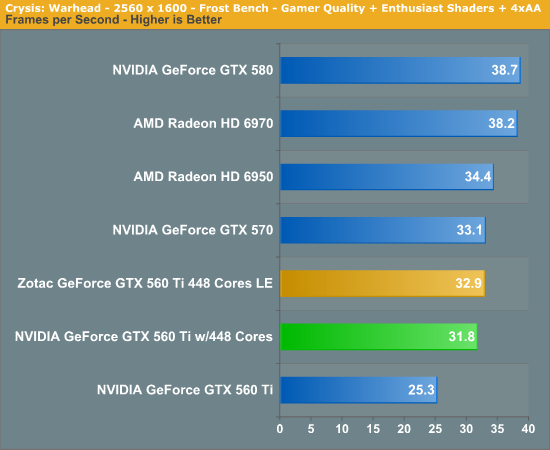

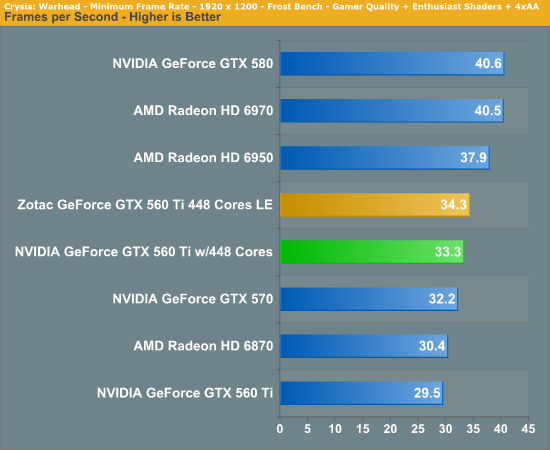

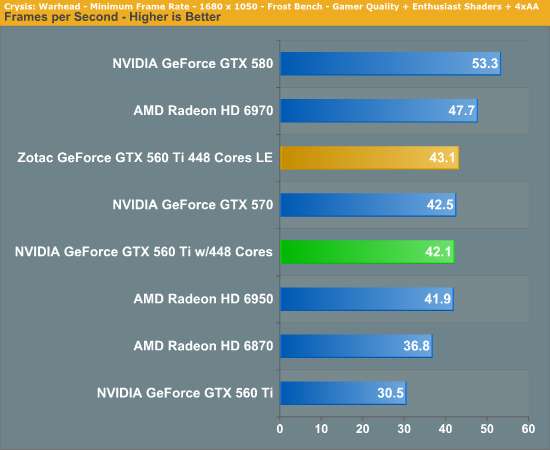

Starting as always with Crysis, we can see that while GF110 cards perform decently at 2560, it’s really only the GTX 580 that stands a chance in any shader-heavy game. The GTX 560-448 in that respect is a lot like the GTX 560 Ti: it’s best suited for 1920 and below.

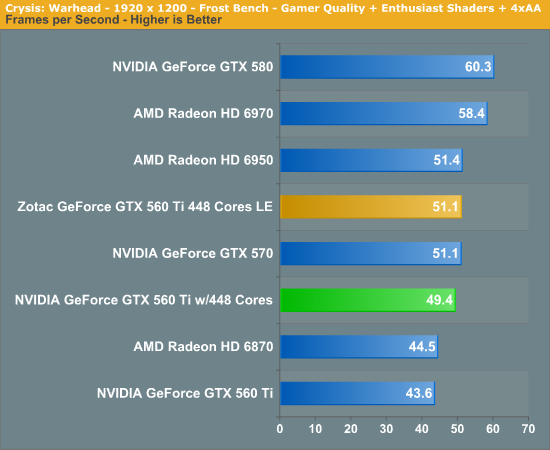

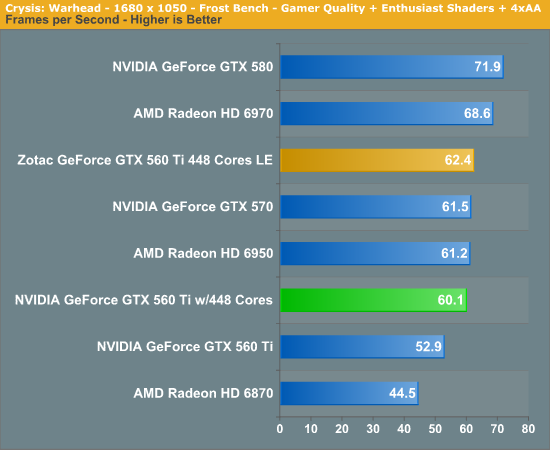

At 1920 and 1680 the GTX 560-448 is well ahead of its GF114-based namesake. As with the specs and architecture, the GTX 560-448 has more in common with the GTX 570 than it does with the GTX 560 Ti. The end result is that the GTX 560-448 is just shy of 50fps at 1920, only a few percent off of the GTX 570. Zotac’s overclock closes the gap exactly, delivering the same 51.1fps as the GTX 570. This goes to show just how close the GTX 560-448 and GTX 570 really are. NVIDIA may not want to call it a GTX 570 LE, but that’s really what it is.
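For readers who want to put a number on "a few percent", the gap between two framerates can be worked out as below. This is only a minimal sketch: `percent_gap` is a hypothetical helper of our own, the 51.1fps figure comes from the text, and the 49.5fps figure is a placeholder standing in for "just shy of 50fps" rather than a measured result.

```python
def percent_gap(slower_fps: float, faster_fps: float) -> float:
    """Return how far slower_fps trails faster_fps, as a percentage of faster_fps."""
    if faster_fps <= 0:
        raise ValueError("faster_fps must be positive")
    return (faster_fps - slower_fps) / faster_fps * 100.0


GTX_570_FPS = 51.1      # quoted in the text (Crysis, 1920)
GTX_560_448_FPS = 49.5  # ASSUMPTION: placeholder for "just shy of 50fps", not a measured value

gap = percent_gap(GTX_560_448_FPS, GTX_570_FPS)
print(f"GTX 560-448 trails the GTX 570 by {gap:.1f}%")
```

The gap is expressed relative to the faster card, which matches the way the text describes one card as being "off of" the other.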

Meanwhile, compared to AMD’s lineup things are a little less rosy. The GTX 570 at launch was closer to competition for the Radeon HD 6970, but here the GTX 570 and GTX 560-448 are tied with or beaten by AMD’s cheaper 6950.

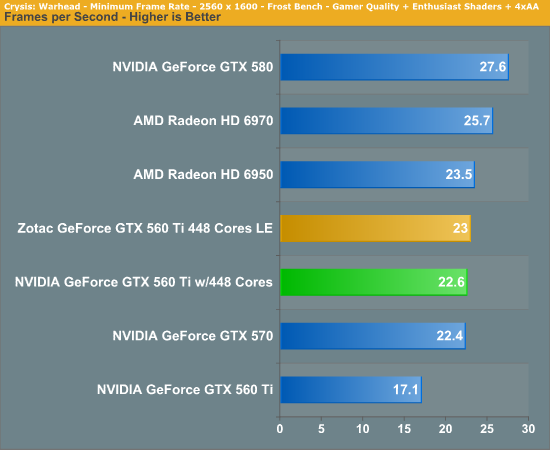

Looking at the minimum framerates we see the same trends. The GTX 560-448 is well above the GTX 560 Ti – by 13% at 1920 – but the 6950 is once again the victor.

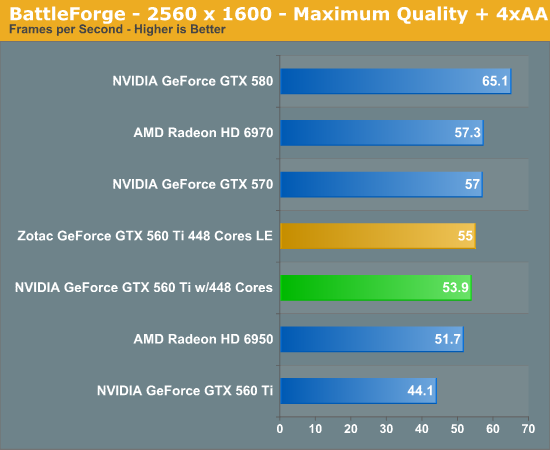

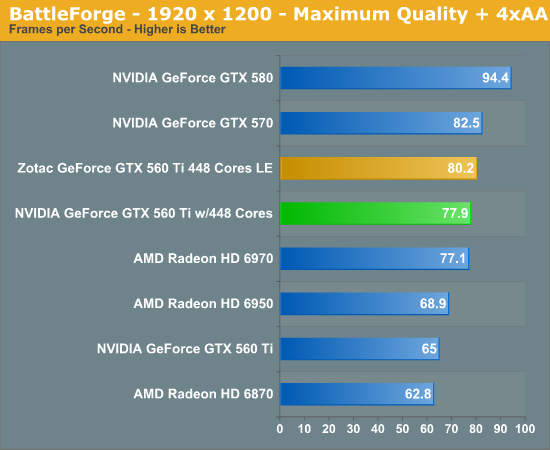

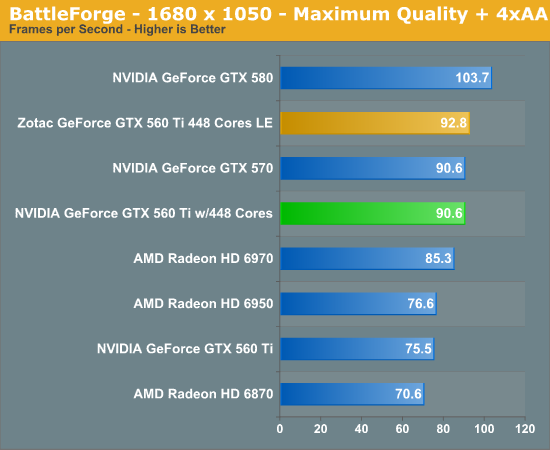

Moving on to BattleForge, we see the emergence of AMD and NVIDIA switching places based on the game being tested. BattleForge favors NVIDIA cards, and as a result the GTX 560-448 does quite well here, tying AMD’s more expensive 6970 at 1920. Zotac’s overclock further improves things, but as BattleForge likes memory bandwidth, it can’t overcome the GTX 570’s 5% memory bandwidth advantage.
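The bandwidth remark follows from the usual peak-bandwidth arithmetic: bus width in bytes multiplied by the effective data rate. The sketch below is illustrative only. The helper names are hypothetical and the bus width and data rates are placeholder values, not specifications quoted in this article. It simply shows that on an identical bus, a faster memory clock gives a proportionally larger bandwidth.

```python
def peak_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak memory bandwidth in GB/s.

    bus_width_bits: memory bus width in bits
    data_rate_gbps: effective per-pin data rate in Gbit/s
    """
    if bus_width_bits <= 0 or data_rate_gbps <= 0:
        raise ValueError("bus width and data rate must be positive")
    return bus_width_bits / 8 * data_rate_gbps


def bandwidth_advantage_pct(a_gbs: float, b_gbs: float) -> float:
    """Percentage by which bandwidth a_gbs exceeds bandwidth b_gbs."""
    return (a_gbs / b_gbs - 1.0) * 100.0


# ASSUMPTION: placeholder values for illustration, not specs taken from the article.
BUS_WIDTH_BITS = 320
slower = peak_bandwidth_gbs(BUS_WIDTH_BITS, 3.6)
faster = peak_bandwidth_gbs(BUS_WIDTH_BITS, 3.8)
print(f"{bandwidth_advantage_pct(faster, slower):.1f}% more bandwidth")
```

Because the bus width cancels out of the ratio, a core overclock alone cannot close this kind of gap; only a higher memory clock would.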

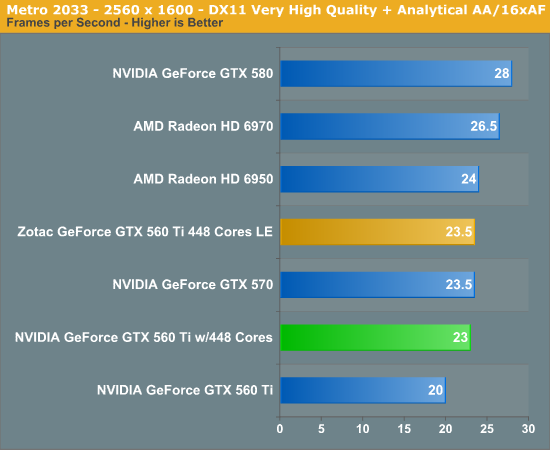

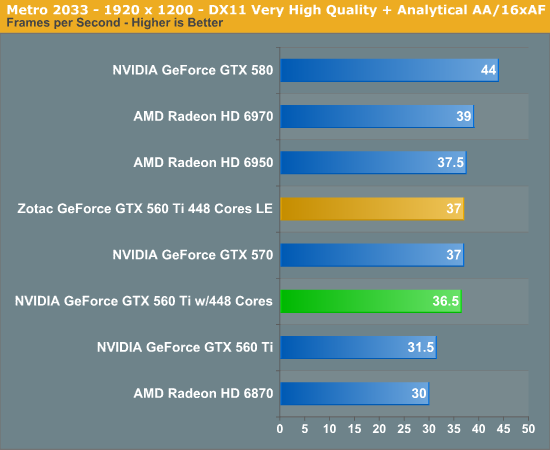

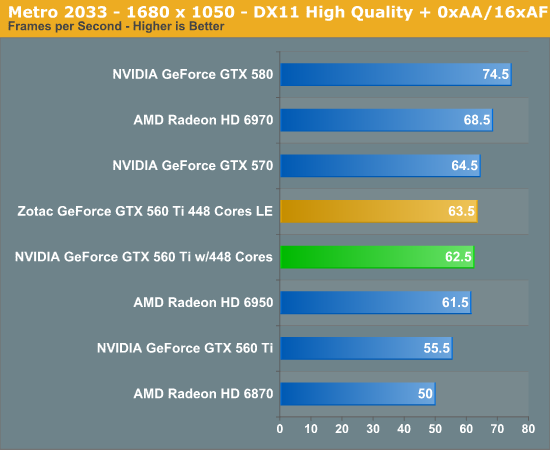

With Metro 2033 we see AMD and NVIDIA swap positions again, this time leaving AMD’s lineup with the very slight edge. This puts the 6950 ahead of the GTX 560-448 at 1920, even with Zotac’s overclock. Practically speaking, however, you’re not going to break 40fps on a single card without a GTX 580.

80 Comments

ericore - Tuesday, November 29, 2011

This card is the most Perfect example of a corporation trying to milk the consumer.

The new Geforce cards are just after Christmas, so what does Nvidia do? Release a limited edition crap product VS what's around the corner, and with a crappy name. The limited namespace is ingenious, but I must hardheadedly agree with Anand on the namespace issue.

Intelligent people will forget this card, and wait till after Christmas. Nvidia will have no choice but to release a Graphics card in Q1 because AMD is going to deliver a serious can of whip ass because of their ingenious decision to go with a low power process silicon VS high performance. You see, they've managed to keep the performance but at half the power, then add that it is 28nm VS 40nm and what a nerdy orgasm that is. Nvidia will be on their knees, and we may finally see them offer much lower priced cards; so do you buy from the pegger or from the provider? That's a rhetorical question haha.

Revdarian - Tuesday, November 29, 2011

Actually, what you can expect after Christmas is a 7800 by AMD (that is mid range of the new production, think around or better than current 6900), one month later with luck the high end AMD, and you shouldn't expect the green camp to get a counter until March at the earliest.

Now that was said on a Hard website by the owner directly, so I would take it as being very accurate all in all.

ericore - Tuesday, November 29, 2011

HAha, so same performance at half the power + 28nm VS 40nm + potential Rambus memory which is twice as fast, all in all we are looking at -- at least -- double frame rates. Nvidia was an uber fail with their fermi hype. AMD has not hyped the product at all, but rest assured it will be a bomb and in fact is the exact opposite story to fermi. Clever AMD you do me justice in your intelligent business decisions, worthy of my purchase.

HStanford1 - Wednesday, December 7, 2011

Can't say the same about their CPU lineup

Roflmao

granulated - Tuesday, November 29, 2011

The ad placement under the headline is for the old 384 pipe card!

If that isn't an accident I will be seriously annoyed.

DanNeely - Tuesday, November 29, 2011

"It’s quite interesting to find that idle system power consumption is several watts lower than it is with the GTX 570. Truth be told we don’t have a great explanation for this; there’s the obvious difference in coolers, but it’s rare to see a single fan have this kind of an impact."

I think it's more likely that Zotac used marginally more efficient power circuitry than on the 570 you're comparing against. 1W there is a 0.6% efficiency edge, 1W on a fan at idle speed is probably at least a 30% difference.

LordSojar - Tuesday, November 29, 2011

Look at all the angry anti-nVidia comments, particularly those about them releasing this card before the GTX 600 series.

nVidia is a company. They are here to make money. If you're an uninformed consumer, then you are a company's (no matter what type they are) bread and butter, PERIOD. You people seem to forget companies aren't in the charity business...

As for this card, it's an admirable performer, and a good alternative to the GTX 570. That's all it is.

As for AMD... driver issues or not aside, their control panel is absolutely god awful (and I utilize a system with a fully updated CCC daily). CCC is a totally hilarious joke and should be gutted and redone completely; it's clunky, filled with overlapping/redundant options and ad-ridden. Total garbage... if you even attempt to defend that, you are the very definition of a fanboy.

As for microstutter, AMD's Crossfire is generally worse at first simply because of the lack of frequent CFX profile updates. Once those updates are in place, it's a non issue between the two companies, they both have it in some capacity using dual/tri/quad GPU solutions. Stop jumping around with your red or green pompoms like children.

AMD has fewer overall features at a lower overall price. nVidia has more overall features at a higher overall price. Gee... who saw that coming...? Both companies make respectable GPUs and both have decent drivers, but it's a fact that nVidia tend to have the edge in the driver category while AMD have an edge in the actual hardware design category. One is focused on very streamlined, gaming centric graphics cards while the other is focused on more robust, computing centric graphics cards. Get a clue...

...and let's not even discuss CUDA vs Stream... Stream is total rubbish, and if you don't program, you have no say in countering that point, so please don't even attempt to. Any programmer worth their weight will tell you, quite simply, that for massively parallel workloads where GPU computing has an advantage that CUDA is vastly superior to ANYTHING AMD offers by several orders of magnitude and that nVidia offers far better support in the professional market when compared to AMD.

I'm a user of both products, and personally, I do prefer nVidia, but I try not to condemn people for using AMD products until the moment they try to assert that they got a better deal or condemn me for slightly preferring nVidia due to feature sets. People will choose what they want; power users generally go with nVidia, which does carry a price premium for the premium feature sets. Mainstream and gaming enthusiasts go with AMD, because they are more affordable for every fps you get. Welcome to Graphics 101. Class dismissed.

marklahn - Wednesday, November 30, 2011

Simply put, nvidia has cuda and physx, amd has higher ALU performance which can be beneficial in some scenarios - gogo OpenCL for not being vendor specific though!

marklahn - Wednesday, November 30, 2011

Oh and Close to the Metal, brook and stream are all mainly things of the past, so don't bring that up please. ;)

Revdarian - Wednesday, November 30, 2011

Such a long post does not make you right, in the part of "CUDA vs Stream" you actually mean "CUDA vs OpenCL and DirectCompute" for example, as those are the two vendor agnostic standards, so that just shows that what is really "rubbish" is your attempt to pose as an authority on the subject.