Rage Against the (Benchmark) Machine

by Jarred Walton on October 14, 2011 11:55 PM EST

Technical Discussion

The bigger news with Rage is that this is id’s launch title to demonstrate what their id Tech 5 engine can do. It’s also the first major engine in a long while to use OpenGL as the core rendering API, which makes it doubly interesting for us to investigate as a benchmark. And here’s where things get really weird, as id and John Carmack have basically turned the whole gaming performance question on its head. Instead of fixed quality and variable performance, Rage shoots for fixed performance and variable quality. This is perhaps the biggest issue people are going to have with the game, especially if they’re hoping to be blown away by id’s latest graphical tour de force.
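To make the "fixed performance, variable quality" idea concrete, here is a minimal sketch of a feedback loop that trades quality for frame time. It is purely illustrative: the function names, the step size, the 90% headroom threshold, and the linear cost model are assumptions for this example, not anything taken from id Tech 5.

```python
# Hypothetical sketch of "fixed performance, variable quality".
# None of these names or constants come from id Tech 5; they are illustrative only.

TARGET_FRAME_MS = 1000.0 / 60.0   # 60 FPS budget, about 16.7 ms per frame


def adjust_quality(quality: float, frame_ms: float,
                   step: float = 0.05,
                   lo: float = 0.1, hi: float = 1.0) -> float:
    """Lower quality when a frame runs over budget, raise it when there is headroom."""
    if frame_ms > TARGET_FRAME_MS:
        quality -= step          # over budget: give up some detail
    elif frame_ms < 0.9 * TARGET_FRAME_MS:
        quality += step          # comfortable headroom: restore detail
    return max(lo, min(hi, quality))


def simulated_frame_ms(quality: float, scene_cost_ms: float) -> float:
    """Toy cost model (an assumption): frame time scales linearly with quality."""
    return scene_cost_ms * quality


quality = 1.0
# Made-up per-frame scene costs: light area, heavy area, then a medium area.
for scene_cost_ms in [12.0, 12.0, 25.0, 25.0, 25.0, 25.0, 14.0, 14.0]:
    frame_ms = simulated_frame_ms(quality, scene_cost_ms)
    quality = adjust_quality(quality, frame_ms)
    print(f"scene cost {scene_cost_ms:5.1f} ms -> frame {frame_ms:5.1f} ms, "
          f"next quality {quality:.2f}")
```

In a loop like this the frame rate is the fixed quantity and image quality is what moves, which is why a conventional "run at fixed settings and record FPS" benchmark tells you little.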

Running on my gaming system (if you missed it earlier, it’s an i7-965X @ 3.6GHz, 12GB RAM, GTX 580 graphics), I get a near-constant 60FPS, even at 2560x1600 with 8xAA. But there’s the rub: I don’t ever get more than 60FPS, and certain areas look pretty blurry no matter what I do. The original version of the game offered almost no options other than resolution and antialiasing, while the latest patch has opened things up a bit by adding texture cache and anisotropic filtering settings; these can be set to either Small/Low (default pre-patch) or Large/High. If you were hoping for a major change in image quality, however, post-patch there’s still plenty going on that limits the overall quality. For one, even with 21GB of disk space, id’s megatexturing may provide near-unique textures for the game world, but many of the textures are still low resolution. Antialiasing is also a bit odd, as it appears to have very little effect on performance (up to a certain point); the most demanding games choke at 2560x1600 4xAA, even with a GTX 580, but Rage chugs along happily with 8xAA. (16xAA on the other hand cuts frame rates almost in half.)

The net result is that both before and after the latest patch, people have been searching for ways to make Rage look better/sharper, with marginal success. I grabbed one of the custom configurations listed on the Steam forums to see if that helped at all. There appears to be a slight tweak in anisotropic filtering, but that’s about it. [Edit: removed link as the custom config appears mostly worthless—see updates.] I put together a gallery of several game locations using my native 2560x1600 resolution with 8xAA, at the default Small/Low settings (for texturing/filtering), at Large/High, and using the custom configuration (Large/High with additional tweaks). These are high quality JPEG files that are each ~1.5MB, but I have the original 5MB PNG files available if anyone wants them.

You can see that post-patch, the difference between the custom configuration and the in-game Large/High settings is negligible at best, while the pre-patch (default) Small/Low settings have some obvious blurriness in some locations. Dead City in particular looked horribly blurred before the patch; I started playing Rage last week, and I didn’t notice much in the way of texture blurriness until I hit Dead City, at which point I started looking for tweaks to improve quality. It looks better now, but there are still a lot of textures that feel like they need to be higher resolution/quality.

Something else worth discussing while we’re on the subject is Rage’s texture compression format. S3TC (also called DXTC) is the standard compressed texture format, first introduced in the late 90s. S3TC/DXTC achieves a constant 4:1 or 6:1 compression ratio of textures. John Carmack has stated that all of the uncompressed textures in Rage occupy around 1TB of space, so obviously that’s not something they could ship/stream to customers, as even with a 6:1 compression ratio they’d still be looking at 170GB of textures. In order to get the final texture content down to a manageable 16GB or so, Rage uses the HD Photo/JPEG XR format to store their textures. The JPEG XR content then gets transcoded on-the-fly into DXTC, which is used for texturing the game world.
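The arithmetic behind those figures is easy to check. The short sketch below uses only the numbers quoted above; treating "1TB" as 1024GB is an assumption, and the variable names are just for illustration.

```python
# Back-of-the-envelope check of the texture sizes quoted above.
# Inputs come from the article; treating "1TB" as 1024 GB is an assumption.

UNCOMPRESSED_GB = 1024   # roughly 1TB of uncompressed textures
SHIPPED_GB = 16          # approximate size of the shipped JPEG XR texture data


def compressed_size_gb(uncompressed_gb: float, ratio: float) -> float:
    """Size after compressing at a fixed ratio:1."""
    return uncompressed_gb / ratio


dxt_6_to_1 = compressed_size_gb(UNCOMPRESSED_GB, 6)   # roughly 170 GB
dxt_4_to_1 = compressed_size_gb(UNCOMPRESSED_GB, 4)   # 256 GB
effective_ratio = UNCOMPRESSED_GB / SHIPPED_GB        # ratio needed to reach ~16 GB

print(f"6:1 DXTC: about {dxt_6_to_1:.1f} GB")
print(f"4:1 DXTC: about {dxt_4_to_1:.1f} GB")
print(f"Ratio needed for {SHIPPED_GB} GB: about {effective_ratio:.0f}:1")
```

Getting from roughly 1TB to roughly 16GB needs something on the order of 64:1, about ten times more than DXTC's fixed 6:1, which is why the textures are stored in a more aggressive format and transcoded at run time.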

The transcoding process is one area where NVIDIA gets to play their CUDA card once more. When Anand benchmarked the new AMD FX-8150, he ran the CPU transcoding routine in Rage as one of numerous tests. I tried the same command post-patch, and with or without CUDA transcoding my system reported a time of 0.00 seconds (even with one thread), so that appears to be broken now as well. Anyway, I’d assume that a GTX 580 will transcode textures faster than any current CPU, but just how much faster I can’t say. AMD graphics on the other hand will currently have to rely on the CPU for transcoding.

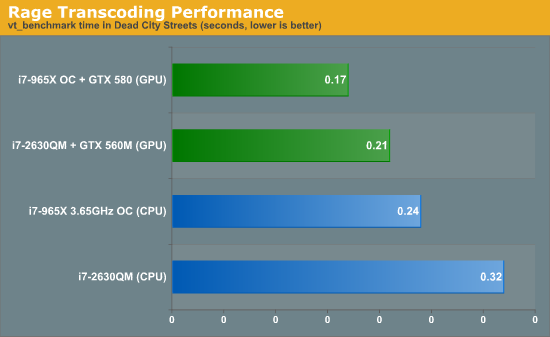

Update: Sorry, I didn't realize that you had to have a game running rather than just using vt_benchmark at the main menu. Bear in mind that I'm using a different location than Anand used in his FX-8150 review; my save is in Dead City, which tends to be one of the more taxing areas. I'm using two different machines as a point of reference, one a quad-core (plus Hyper-Threading) 3.65GHz i7-965 and the other a quad-core i7-2630QM. I've also got results with and without CUDA, since both systems are equipped with NVIDIA GPUs. Here's the result, which admittedly isn't much:

This is using "vt_benchmark 8" and reporting the best score, but regardless of the number of threads it's pretty clear that CUDA is able to help speed up the image transcoding process. How much this actually affects gameplay isn't so clear, as new textures are likely transcoded in small bursts once the initial level load is complete. It's also worth pointing out that the GPU transcoding looks like it would be of more benefit with slower CPUs, as my desktop realized a 41% improvement while the lower clocked notebook (even with a slower GPU) realized a 52% improvement. I also tested the GTX 580 and GTX 560M with and without CUDA transcoding and didn’t notice a difference in gameplay performance, but I don’t have any empirical data. That brings us to the final topic.
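For anyone comparing their own vt_benchmark runs, note that an "improvement" percentage can be computed in two common ways, and the two give different numbers for the same pair of results. The sketch below shows both; the 10-second and 7-second timings are made-up placeholders, not measurements from either system, and which definition the percentages above use is not stated.

```python
# Two common ways to express an "improvement" between a CPU-only run and a CUDA run.
# The timings used below are hypothetical placeholders, not measured results.


def time_reduction_pct(cpu_seconds: float, cuda_seconds: float) -> float:
    """Percentage of time saved relative to the CPU-only run."""
    return (cpu_seconds - cuda_seconds) / cpu_seconds * 100.0


def throughput_gain_pct(cpu_seconds: float, cuda_seconds: float) -> float:
    """Percentage increase in work done per second relative to the CPU-only run."""
    return (cpu_seconds / cuda_seconds - 1.0) * 100.0


cpu_s, cuda_s = 10.0, 7.0   # hypothetical timings for illustration only
print(f"time saved:      {time_reduction_pct(cpu_s, cuda_s):.1f}%")
print(f"throughput gain: {throughput_gain_pct(cpu_s, cuda_s):.1f}%")
```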

80 Comments


SSIV - Saturday, October 15, 2011 - link

This is my first post on Anandtech ever. I've been a reader for a long time, but never felt the necessity to resort to posting prior to this moment.

So, mostly due to rageConfig.cfg misinformation on the internet, the article's verdict of RAGE as a benchmarking tool is void.

Firstly, the steam forums config doesn't do anything, if you try changing some of the environment variables written in it from the in-game console you'll notice no change. There's only a few variables worth changing. The rest is locked.

Secondly, the variables worth changing are mentioned on nvidia's website:

http://www.geforce.com/News/articles/how-to-unlock...

Concerning the constant-fps/variable-quality statement you'll notice that this can be easily changed to constant-quality (if you read the above link's content). Hi-res textures are enabled once you change a few variables to 16384 (read link for details).

For heavy benchmarking I'd recommend using this config:

jobs_numThreads 12 // makes big difference on AMD X6 1055T

vt_maxPPF 128 // max is 128

vt_pageimagesizeuniquediffuseonly2 16384

vt_pageimagesizeuniquediffuseonly 16384

vt_pageimagesizeunique 16384

vt_pageimagesizevmtr 16384

vt_restart

r_multiSamples 32

vt_maxaniso 4 // 0-16 (32?)

image_anisotropy 4 // same

CUDA transcode is not included in config because it can be changed on-demand under video options. Whereas settings such as r_multiSamples 32 cannot be set from menu (16 is highest).

Please revise the benchmarking verdict, because it doesn't do the game justice.

Also, it'd make Anandtech the first site to make a real benchmark of RAGE.

I'd like to see this happen :)

ssj4Gogeta - Saturday, October 15, 2011 - link

@Jarred Walton

Please do take a look at this, as the images posted there show a very noticeable improvement in texture quality.

JarredWalton - Saturday, October 15, 2011 - link

SSIV, you're correct that most of the variables in that config I linked didn't do anything, which is why I didn't test with them for most of the time. I ran some screenshot comparisons, shrugged, and moved on. And you might be surprised to hear that I actually already read that whole article before you ever posted; I just never tried forcing the 16K textures (as the article itself states you "might" see an improvement). Anyway, I'll check 16K textures, but let me clarify a few things.

1) Setting Texture Cache to "Large" gets to very nearly the same quality as the forced 8K textures.

2) CUDA transcode was tested separately, and has no apparent impact on performance for my systems.

3) Forcing constant quality is fine, but that doesn't do anything for the 60FPS frame rate cap...

4) ...unless you force at least 16xAA, and possibly 32xAA. (I tried 32xAA once before and the game crashed, but perhaps that was a bad config file.)

Even if you can get below the 60FPS frame rate cap, however, that does not make a game a good benchmark. Testing at silly levels of detail to try to get below the frame rate cap is not useful, especially if many of the changes that will cut the frame rate down far enough result in negligible (or worse) image quality.

Thanks for the input, but unless I'm sorely mistaken this CFG file hacking still won't make Rage into a useful benchmark. Anyway, I'll investigate a few more things and add an addendum, based on what I discover.

JarredWalton - Saturday, October 15, 2011 - link

Addendum: 16k textures do absolutely nothing for quality. What's more, you really start to run into other issues. Specifically:

1) Using a GTX 580 with 12GB of system RAM, 16k forced textures (using your above list of custom settings) causes every level to load super slow -- you can see the low-res textures, frame rate is at 1-2 FPS, and it takes 5 to 20 seconds (sometimes longer) for a level to finish loading. This is of course assuming nothing crashes, which in my limited experience over the past 30 minutes is quite common.

2) 16k textures with 8xAA enabled crashes at 2560x1600, so I had to drop to 4xAA to even get levels to attempt to load (though 1080p 8xAA works okay).

3) Even with 16k textures, the difference compared to 8k screenshots is virtually zero. Without running an image diff, I couldn't tell which looks better, but even the file sizes are nearly equal.

4) Finally, even if we get rid of the 16k textures idea, 16xAA looks worse than 4xAA and 8xAA, because everything becomes overly blurred, and 32xAA looks downright awful. "Hooray! No jaggies! Boo! No detail!"

JarredWalton - Saturday, October 15, 2011 - link

Also FYI, there are now two paragraphs and a final image gallery on page 3 discussing the above.

SSIV - Sunday, October 16, 2011 - link

I'm aghast at the vigor of your response! Thank you for investigating and researching this Jarred Walton. With things looking as they are now I can see that not much else can be done about the benchmark verdict.

What >8xAA settings do to the game sounds worrisome however. I assume id will address this sooner or later.

Because the textures load iteratively, they're blurry at first. Under heavy load the engine might only have enough time to AA the first texture layer (might be a realtime constraint). But this is just my hypothesis, which shouldn't be true because it implies some sort of texture iteration load block.

16k has small differences, mostly on road signs and bump mapped cement blocks. But like you said, it's not a significant improvement compared to 8k.

Thank you for your effort and making an insightful review!

SSIV - Monday, October 17, 2011 - link

A 32-bit game can only allocate as much memory as 32 bits permits, which lands around 3072MiB. So unless RAGE uses PAE, which diminishes overall ram performance, your 12GiB of ram will never be utilized.

Having said that, the sentence beginning with "Using a GTX 580 with 12GB of system RAM" is slightly disappointing.

Again, great review.

JonnyDough - Monday, October 17, 2011 - link

Maybe not, but his link sure did help show me what Rage can really look like. Wow! Those textures make the game look sooo much better! I'm sure an old console can't produce that clarity!

SSIV - Monday, October 17, 2011 - link

Here's some more texture comparisons if you're interested:

http://forums.steamgames.com/forums/showpost.php?p...

Biggest difference between 8k & 16k are signs and jagged peaks of blocks/rocks. Goes unnoticed once motion blur kicks in.

As a side note, I hope a game that'll utilize the dynamic lighting part of this game engine will appear soon. That'll be an interesting add to the Tech 5 stew.

JarredWalton - Monday, October 17, 2011 - link

I think these images show what I hinted at above: depending on your system and settings, if you go beyond a certain point in "quality" you may end up with inconsistent overall quality. 2560x1600 with 4xAA and 16k textures certainly did it for my GTX 580, and 1920x1080 at 8xAA seemed to do it as well. Some of the linked images show definite improvements, but interestingly there's at least one shot (Img 2) where the 8k texturing isn't consistently better than the 4k texturing -- two of the building walls as well as the distant mountains look like 1k textures or something.

If you have enough VRAM, 16k textures with 8xAA looks like the best you'll get, provided you only run at 1080p or lower resolution (or at least not 2560x1600/2560x1440). On my system, however, 16k causes problems far too often for me to recommend it. I tried binding a key to switch between 16k and 8k texture cache, and most of the time when I try switching to 16k the game crashes. I'd be curious to hear what hardware others are using (and what drivers) where 16k is stable at 2560x1600.