The Sandy Bridge Review: Intel Core i7-2600K, i5-2500K and Core i3-2100 Tested

by Anand Lal Shimpi on January 3, 2011 12:01 AM EST

Resolution Scaling with Intel HD Graphics 3000

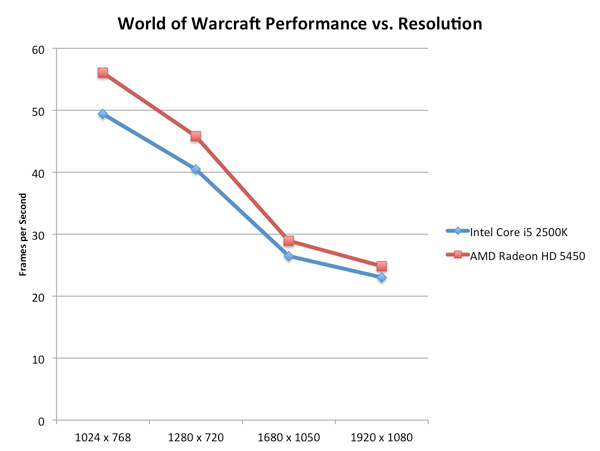

All of our tests on the previous page were done at 1024x768, but how much of a hit do you really get when you push higher resolutions? Does the gap widen between a discrete GPU and Intel's HD Graphics as you increase resolution?

On the contrary: low-end GPUs run into memory bandwidth limitations just as quickly as (if not more quickly than) Intel's integrated graphics. Spend about $70 and you'll see a wider gap, but if you pit Intel's HD Graphics 3000 against a Radeon HD 5450, the two actually get closer in performance the higher the resolution goes, at least in memory-bandwidth-bound scenarios:
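A back-of-the-envelope look at peak memory bandwidth helps explain why the two converge. The sketch below computes theoretical bandwidth as bus width in bytes times transfer rate times channel count. The configurations are assumptions for illustration: dual-channel DDR3-1333 for the system memory that HD Graphics 3000 shares with the CPU cores, and a 64-bit DDR3 card at 1600 MT/s standing in for the Radeon HD 5450 (actual cards vary).

```python
def peak_bandwidth_gbs(bus_width_bits: int, mega_transfers_per_s: int, channels: int = 1) -> float:
    """Peak theoretical bandwidth in GB/s (10**9 bytes per second)."""
    bytes_per_transfer = bus_width_bits // 8
    return bytes_per_transfer * mega_transfers_per_s * 1e6 * channels / 1e9

# Assumed: dual-channel DDR3-1333 system memory, shared by the CPU and the IGP.
system_ram = peak_bandwidth_gbs(64, 1333, channels=2)
# Assumed: a 64-bit DDR3 board at 1600 MT/s as a stand-in for a Radeon HD 5450.
hd5450 = peak_bandwidth_gbs(64, 1600)

print(f"Dual-channel DDR3-1333: {system_ram:.1f} GB/s")
print(f"64-bit DDR3-1600 card:  {hd5450:.1f} GB/s")
```

Peak figures ignore real-world efficiency and, for the integrated GPU, the bandwidth consumed by the CPU cores, so treat them as upper bounds rather than predictions.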

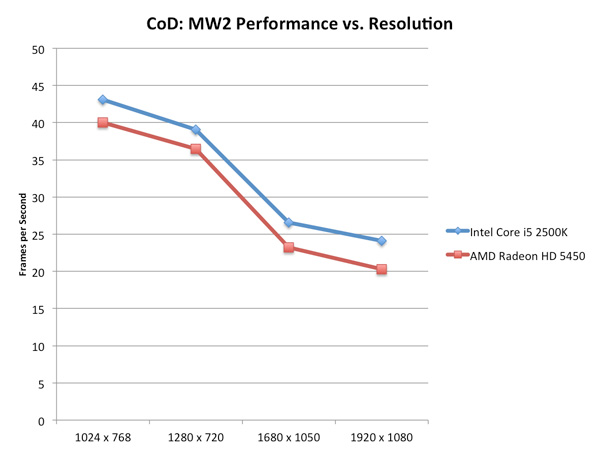

Call of Duty: Modern Warfare 2 stresses compute a bit more at higher resolutions, so the performance gap widens rather than closes:

For the most part, at low quality settings, Intel's HD Graphics 3000 scales with resolution similarly to a low-end discrete GPU.
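The simplest way to reason about resolution scaling is pixel count relative to the 1024x768 baseline. The sketch below uses a handful of assumed higher resolutions (examples, not necessarily the ones charted) and includes a deliberately naive estimate that treats frame time as proportional to pixel count; real games usually fall off less steeply because geometry and CPU work don't grow with resolution.

```python
# Pixel counts relative to the 1024x768 baseline used on the previous page.
BASELINE = (1024, 768)
# Example higher resolutions (assumed for illustration).
RESOLUTIONS = [(1024, 768), (1280, 1024), (1680, 1050), (1920, 1080)]

def pixel_ratio(res, base=BASELINE):
    """How many times as many pixels `res` has as `base`."""
    return (res[0] * res[1]) / (base[0] * base[1])

def naive_fps_estimate(base_fps, res, base=BASELINE):
    """Pessimistic estimate assuming frame time grows in direct proportion to
    pixel count, i.e. a purely fill-rate or bandwidth-bound workload."""
    return base_fps / pixel_ratio(res, base)

for w, h in RESOLUTIONS:
    print(f"{w}x{h}: {pixel_ratio((w, h)):.2f}x the pixels")
```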

Graphics Quality Scaling

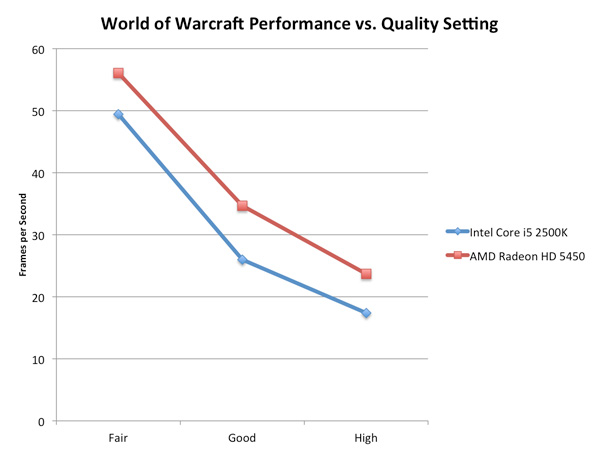

The biggest issue with integrated and any sort of low-end graphics is that you have to run games at absurdly low quality settings to keep frame rates smooth. The impact of going to higher quality settings is much greater on Intel's HD Graphics 3000 than on a discrete card, as the chart shows.

The performance gap between the two is actually at its widest at WoW's "Good" quality settings. Moving beyond that, however, shrinks the gap a bit as the Radeon HD 5450 runs into memory bandwidth and compute bottlenecks of its own.

283 Comments

DanNeely - Monday, January 3, 2011

The increased power efficiency might allow Apple to squeeze a GPU onto their smaller laptop boards without losing runtime due to the smaller battery.

yuhong - Monday, January 3, 2011

"Unlike P55, you can set your SATA controller to compatible/legacy IDE mode. This is something you could do on X58 but not on P55. It’s useful for running HDDERASE to secure erase your SSD for example"

Or running old OSes.

DominionSeraph - Monday, January 3, 2011

"taking the original Casino Royale Blu-ray, stripping it of its DRM"

Whoa, that's illegal.

RussianSensation - Monday, January 3, 2011

It would have been nice to include first-generation Core i7 processors such as the 860/870/920-975 in the StarCraft 2 benchmark, as it seems to be very CPU-intensive.

Also, a section on overclocking showing how far the 2500K/2600K can go on air cooling within safe voltage limits (say 1.35V) would have been much appreciated.

Hrel - Monday, January 3, 2011

Sounds like this is SO high end it should be in the server market. I mean, why make yet ANOTHER socket for servers that use basically the same CPUs? Everything's converging, and I'd really like to see server mobos converge into "High End Desktop" mobos. Seriously, my E8400 OC'd with a GTX 460 is more power than I need. A quad would help with the HD video editing I do, but it works fine now, and with GPU-accelerated rendering the rendering times are totally reasonable. I just can't imagine anyone NEEDING a home computer more powerful than the LGA 1155 socket can provide. Hell, 80-90% of people are probably fine with the power Sandy Bridge gives in laptops now.

mtoma - Monday, January 3, 2011

Perhaps it is as you say; however, it's always good for buyers to decide whether they want server-like features in a PC. I don't like manufacturers dictating only one way to do it (as Intel does now with the odd pairing of HD 3000 graphics and the Intel H67 chipset). Let us not forget that for a long time, all we had were 4 RAM slots and 4-6 SATA connections (as you probably have). Intel X58 changed all that: suddenly we had the option of 6 RAM slots, 6-8 SATA connections and enough PCI Express lanes.

I only hope that LGA 2011 brings back those features, because, as you said, it's not only the performance we need but also the features.

And remember that software doesn't stand still; it usually requires multiple processor cores (video transcoding, antivirus scanning, HDD defragmenting, modern OSes, and so on).

All this aside, the main issue remains: Intel must be persuaded to stop looting users' money and to support only one socket at a time. I usually support Intel, but in this regard AMD deserves congratulations!

DanNeely - Monday, January 3, 2011

LGA 2011 is a high-end desktop/server convergence socket. Intel started doing this in 2008, with all but the highest-end server parts sharing LGA 1366 with top-end desktop systems. The exception was quad/octo-socket CPUs and those using enormous amounts of RAM, which used LGA 1567.

The main reason LGA 1155 isn't suitable for really high-end machines is that it doesn't have the memory bandwidth to feed hex- and octo-core CPUs. It's also limited to 16 PCIe 2.0 lanes on the CPU vs. 36 PCIe 3.0 lanes on LGA 2011. For most consumer systems that won't matter, but 3- or 4-GPU systems will start losing a bit of performance when a card runs in an x4 slot (only a few percent, but people who spend $1000-2000 on GPUs want every last frame they can get), and high-end servers with multiple 10Gb Ethernet cards and PCIe SSD devices also begin running into bottlenecks.
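To put the lane counts in perspective, here is a sketch of per-direction PCIe bandwidth computed from each generation's transfer rate and line encoding (5 GT/s with 8b/10b for PCIe 2.0, 8 GT/s with 128b/130b for PCIe 3.0). The slot widths printed are generic examples, and the figures ignore packet and protocol overhead.

```python
from fractions import Fraction

# Transfer rate (GT/s) and line-encoding efficiency for each PCIe generation.
PCIE_GENS = {
    "2.0": (5, Fraction(8, 10)),     # 8b/10b encoding
    "3.0": (8, Fraction(128, 130)),  # 128b/130b encoding
}

def link_bandwidth_mbs(gen: str, lanes: int) -> float:
    """Usable bandwidth per direction in MB/s (10**6 bytes/s).

    Accounts for line encoding only; packet and protocol overhead are ignored.
    """
    gt_per_s, efficiency = PCIE_GENS[gen]
    bits_per_s = gt_per_s * 10**9 * efficiency * lanes
    return float(bits_per_s / 8 / 10**6)

# Example slot widths on PCIe 2.0, plus a PCIe 3.0 x16 link for comparison.
for lanes in (16, 8, 4):
    print(f"PCIe 2.0 x{lanes}: {link_bandwidth_mbs('2.0', lanes):,.0f} MB/s")
print(f"PCIe 3.0 x16: {link_bandwidth_mbs('3.0', 16):,.0f} MB/s")
```

Exact fractions keep the encoding ratios precise; the conversion to float happens only at the end.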

Leaving the QPI connections, which are only used in multi-socket systems, out of LGA 1155 saves a dollar or five per system, and that adds up to major savings across the hundreds of millions of systems Intel is planning to sell.

Hrel - Monday, January 3, 2011

I'm confused by the upset over playing video at 23.976Hz. "It makes movies look like, well, movies instead of TV shows"? What? Wouldn't recording at a lower frame rate just mean missed detail, especially in fast action scenes? Isn't that why HD runs at 60fps instead of 30fps? Isn't more FPS good as long as it's played back at the appropriate speed, i.e. whatever it was filmed at? I don't understand the complaint.

On a related note, Hollywood and the world need to just agree that everything gets recorded and played back at 60fps at 1920x1080. No variation AT ALL! That way everything would just work. Or better yet, 120FPS, with the ability to turn 3D on and off as you see fit. Whatever FPS is best. I've always been told higher is better.
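Why an exact 23.976Hz output matters comes down to arithmetic: "23.976" is really 24000/1001 frames per second. If a display refreshes at a rate slightly different from the content rate, the player has to repeat or drop a frame every time the accumulated timing error reaches one full frame, which shows up as a periodic stutter. The sketch below computes that interval with exact fractions; the 24.000Hz and 23.970Hz refresh rates are hypothetical examples.

```python
from fractions import Fraction
from typing import Optional

# "23.976 fps" is exactly 24000/1001 frames per second.
FILM_RATE = Fraction(24000, 1001)

def seconds_between_corrections(content_fps: Fraction, refresh_hz: Fraction) -> Optional[Fraction]:
    """Seconds between repeated or dropped frames when each content frame is
    meant to occupy one refresh but the two rates differ slightly.

    The timing error grows by |refresh - content| frames every second, so one
    whole frame of error accumulates every 1 / |refresh - content| seconds.
    Returns None when the rates match exactly.
    """
    mismatch = abs(refresh_hz - content_fps)
    if mismatch == 0:
        return None
    return 1 / mismatch

# Hypothetical refresh rates close to, but not exactly, 24000/1001 Hz.
for refresh in (Fraction(24), Fraction("23.970")):
    interval = seconds_between_corrections(FILM_RATE, refresh)
    print(f"{float(refresh):.3f} Hz: one frame repeated or dropped every {float(interval):.1f} s")
```

A refresh rate above the content rate forces repeated frames; one below it forces dropped frames. Either way the hiccup recurs on a fixed schedule.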

chokran - Monday, January 3, 2011

You are right about getting more detail when filming at a higher FPS, but this isn't about good or bad; it's more a matter of tradition and visual style.

The look movies have these days, the one we've grown accustomed to, is mainly achieved by filming at 24p, or 23.976 to be precise. Footage filmed at a higher FPS just doesn't look like cinema anymore, but like TV. At least to me. A good article on this:
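On a typical 60Hz display, 24p content also needs 3:2 pulldown: film frames are alternately held for three refreshes and two, so 24 frames fill 60 refreshes. The sketch below builds that cadence; the uneven 50ms/33ms frame durations it produces are the judder that matched-rate 24p output avoids. (With 23.976 content the display runs at 59.94Hz, but the 3:2 ratio is the same.)

```python
def pulldown_32(num_frames: int) -> list[int]:
    """Number of display refreshes each film frame is held for under 3:2 pulldown.

    Frames alternate between 3 and 2 refreshes, so every 2 film frames span
    5 refreshes and 24 fps maps onto 60 Hz.
    """
    return [3 if i % 2 == 0 else 2 for i in range(num_frames)]

REFRESH_HZ = 60
cadence = pulldown_32(24)
print(f"24 frames occupy {sum(cadence)} refreshes at {REFRESH_HZ} Hz")

# On-screen duration of the first few frames: alternating 50.0 ms and 33.3 ms.
for frame, refreshes in enumerate(cadence[:4]):
    print(f"frame {frame}: {refreshes * 1000 / REFRESH_HZ:.1f} ms")
```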

http://www.videopia.org/index.php/read/shorts-main...

You can test the problem of movies looking like TV at home if you have a TV with some kind of motion interpolation, e.g. what Sony calls MotionFlow or Panasonic calls Intelligent Frame Creation. When it is turned on, the added frames produce the soap opera effect. Some people don't see it, and some do and like it, but I have to turn it off since it doesn't look "natural" to me.
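The Sony and Panasonic features rely on proprietary motion-compensated interpolation. The sketch below swaps in the crudest possible technique, linear blending of neighboring frames, purely to illustrate how an interpolator synthesizes in-between frames that were never filmed. Frames are modeled as small grayscale grids (lists of rows), an assumption made to keep the example dependency-free.

```python
def blend_frames(a, b, t):
    """Linearly blend two grayscale frames (lists of rows) at position t in [0, 1]."""
    return [
        [(1 - t) * pa + t * pb for pa, pb in zip(row_a, row_b)]
        for row_a, row_b in zip(a, b)
    ]

def double_frame_rate(frames):
    """Insert one blended frame between each pair of consecutive frames,
    turning N frames into 2N - 1 (roughly doubling the frame rate)."""
    if not frames:
        return []
    out = []
    for cur, nxt in zip(frames, frames[1:]):
        out.append(cur)
        out.append(blend_frames(cur, nxt, 0.5))
    out.append(frames[-1])
    return out

# Two tiny 1x2 "frames": a pixel pair brightening over one frame interval.
f0 = [[0, 0]]
f1 = [[10, 20]]
for frame in double_frame_rate([f0, f1]):
    print(frame)
```

Plain blending yields ghosted double images on moving objects rather than smooth motion, which is why real TVs estimate motion vectors instead.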

CyberAngel - Thursday, January 6, 2011

http://en.wikipedia.org/wiki/Showscan