AMD's Radeon HD 6970 & Radeon HD 6950: Paving The Future For AMD

by Ryan Smith on December 15, 2010 12:01 AM EST

Cayman: The Last 32nm Castaway

With the launch of the Barts GPU and the 6800 series, we touched on the fact that AMD was counting on the 32nm process to give them a half-node shrink to take them into 2011. When TSMC fell behind schedule on the 40nm process, and then on the 32nm process before canceling it outright, AMD had to start moving on plans for a new generation of 40nm products instead.

The 32nm predecessor of Barts was among the earlier projects to be sent to 40nm. This was due to the fact that before 32nm was even canceled, TSMC’s pricing was going to make 32nm more expensive per transistor than 40nm, a problem for a mid-range part where AMD has specific margins they’d like to hit. Had Barts been made on the 32nm process as projected, it would have been more expensive to make than on the 40nm process, even though the 32nm version would be smaller. Thus 32nm was uneconomical for gaming GPUs, and Barts was moved to the 40nm process.
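The reason a smaller 32nm Barts would still have cost more can be written out with a deliberately simple model. This is an illustrative assumption, not AMD's or TSMC's actual cost model, and it ignores yield and packaging: let $T$ be the transistor count of the design and $c_{32}$, $c_{40}$ the price per transistor on each process.

$$C_{32} = T\,c_{32}, \qquad C_{40} = T\,c_{40}, \qquad c_{32} > c_{40} \;\Longrightarrow\; C_{32} > C_{40}$$

Under this model die area drops out of the comparison entirely: for the same design, a smaller die only saves money if the price per transistor also falls, and TSMC's 32nm pricing meant it would not have.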

Cayman, on the other hand, was going to be a high-end part. Certainly being uneconomical is undesirable, but high-end parts carry high margins, especially if they can be sold in the professional market as compute products (just ask NVIDIA). As such, while Barts went to 40nm, Cayman's predecessor stayed on the 32nm process until the very end. The Cayman team did begin planning to move back to 40nm before TSMC officially canceled the 32nm process, but if AMD had had a choice at the time, they would rather have had Cayman on the 32nm process.

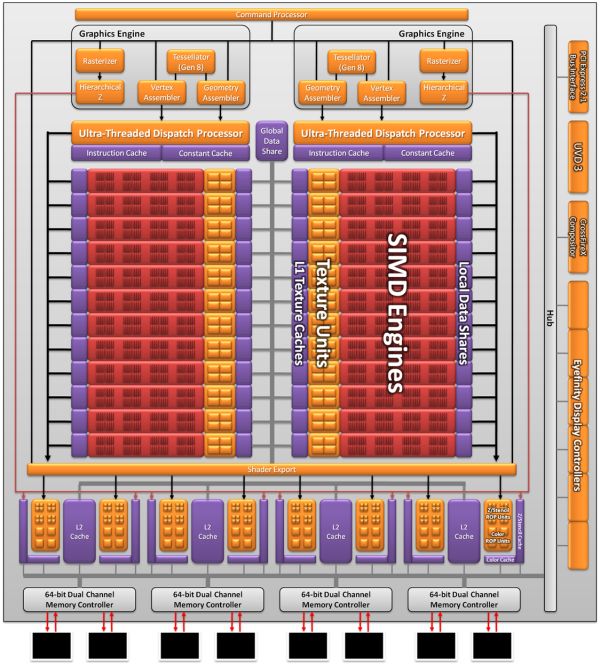

As a result, the Cayman we're seeing today is not what AMD originally envisioned as a 32nm part. AMD won't tell us everything that they had to give up to create the 40nm Cayman (there have to be a few surprises for 28nm), but we do know a few things. First and foremost was size; AMD's small die strategy is not dead, but getting the boot from the 32nm process does take the wind out of it. At 389mm² Cayman is the largest AMD GPU since the disastrous R600, and well off the sub-300mm² size that the small die strategy dictates. In terms of efficient usage of space, though, AMD is doing quite well; Cayman has 2.64 billion transistors, 500 million more than Cypress. AMD was able to pack 23% more transistors into only 16% more space.
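The percentages above are simple ratios, and a few lines of Python make the arithmetic easy to check. This is a sketch: the Cayman figures come from the text, while the Cypress figures (2.15 billion transistors, 334mm²) are the commonly published specifications for that chip and are not stated in this article.

```python
# Cayman figures are from the article; Cypress figures (2.15B transistors,
# 334 mm^2) are the commonly published specs, assumed here for illustration.
CAYMAN = {"transistors_m": 2640, "area_mm2": 389}
CYPRESS = {"transistors_m": 2150, "area_mm2": 334}


def pct_increase(new: float, old: float) -> float:
    """Percentage growth going from old to new."""
    return (new / old - 1.0) * 100.0


def density(chip: dict) -> float:
    """Millions of transistors per square millimetre."""
    return chip["transistors_m"] / chip["area_mm2"]


transistor_growth = pct_increase(CAYMAN["transistors_m"], CYPRESS["transistors_m"])
area_growth = pct_increase(CAYMAN["area_mm2"], CYPRESS["area_mm2"])

print(f"Transistors: +{transistor_growth:.0f}%")  # about +23%
print(f"Die area:    +{area_growth:.0f}%")        # about +16%
print(f"Density: {density(CYPRESS):.2f} -> {density(CAYMAN):.2f} M/mm^2")
```

Because transistor count grew faster than die area, transistor density rose slightly, which is what "efficient usage of space" amounts to here.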

Even then, just reaching that die size is a compromise between features and production costs. AMD didn't simply settle for a larger GPU; they had to give up some things to keep it from being even larger. SIMDs were on the chopping block; the 32nm Cayman would have had more SIMDs for more performance. Features were also lost, and this is where AMD is keeping mum. We know PCI Express 3.0 functionality was scheduled for the 32nm part; on 40nm, AMD had to give up the PCIe 3.0 controller for a smaller PCIe 2.1 controller to offset the larger die size. In all honesty this may have worked out better for them: the PCIe 3.0 specification ended up being delayed until November, so suitable motherboards are still months away at the very least.

The end result is that Cayman as we know it is a compromise made to get it onto 40nm. AMD got their new VLIW4 architecture, but they had to give up performance and an unknown number of features to get there. On the flip side, this will make 28nm all the more interesting, as we'll get to see many of the features that were supposed to make it into 2010 but never arrived.

168 Comments


Remon - Wednesday, December 15, 2010

Seriously, are you using 10.10? It's not like the 10.11 have been out for a while. Oh, wait... they've been out for almost a month now. I'm not expecting you to use the 10.12, as these were released just 2 days ago, but you can't have an excuse for not using month-old drivers. Testing overclocked Nvidia cards against newly released cards, and now using older drivers. This site gets more biased with each release.

cyrusfox - Wednesday, December 15, 2010

I could be wrong, but 10.11 didn't work with the 6800 series, so I would imagine 10.11 wasn't meant for the 6900 either. If that is the case, it makes total sense why they used 10.10 (because it was the most up-to-date driver available when they reviewed). I am still using 10.10e and thinking about updating to 10.12, but why bother? Things are working great at the moment. I'll probably wait for 11. or 11.2.

Remon - Wednesday, December 15, 2010

Never mind, that's what you get when you read reviews early in the morning. The 10.10e was for the older AMD cards. Still, I can't understand the difference between this review and HardOCP's.

flyck - Wednesday, December 15, 2010

It doesn't. Anand has the same results at 25.. resolutions with max details, AA and FSAA. The presentation on Anand, however, is more focused on 16x..10.. resolutions (last graph). If you look at the first graph, you'll notice the 6970/6950 perform like they do in HardOCP's review: the higher the quality, the smaller the gap between the 6950 and the 570 and between the 6970 and the 580. The lower the quality, the further the 580 pulls away and the more the 6970/6950 trail the 570.

Gonemad - Wednesday, December 15, 2010

Oookay, new card from the red competitor. Welcome aboard. But all this time I've wanted to ask: why is Crysis so punishing on graphics cards? I mean, it was released eons ago, and it still can't be run with everything cranked up on a single card if you want 60fps...

Is it sloppy coding? Does the game *really* look better with all the eye candy? Or did they build in an "FPS bug" on purpose, some method of coding that was sure to torture any hardware built in the 18 months after release?

I will get slammed for this, but for instance, the water effects in Half-Life 2 look great even on lower-spec cards once you turn all the eye candy on, and the FPS doesn't drop that much. The same goes for some subtle HDR effects.

I guess I should see this game by myself and shut up about things I don't know. Yes, I enjoy some smooth gaming, but I wouldn't like to wait 2 years after release to run a game smoothly with everything cranked up.

Another one is Dirt 2. I played it with all the eye candy turned up to the top and my 5870 dropped to 50-ish FPS (as per benchmarks); it could be noticed eventually. I turned one or two things off, checked whether I missed them after another run, and the in-game FPS meter jumped to 70. Yay.

BrightCandle - Wednesday, December 15, 2010

Crysis really does have some fabulous graphics. The amount of foliage in the forests is very high. Crysis kills cards because it really does push current hardware. I've got Dirt 2 and it's not close in the level of detail. It's a decent-looking game at times, but it's not a patch on Crysis for the amount of stuff on screen. Half-Life 2 is also not bad looking, but it still doesn't have the same amount of detail. The water might look good, but it's not as good as a PC game can look.

You should buy Crysis; it's £9.99 on Steam. It's not a good game IMO, but it sure is pretty.

fausto412 - Wednesday, December 15, 2010

Yes... it's not much of a fun game, but damn, it is pretty.

AnnihilatorX - Wednesday, December 15, 2010

Well, the original Crysis did push things too far, and it could have used some optimization. Crysis Warhead is much better optimized while giving nearly identical visuals.

fausto412 - Wednesday, December 15, 2010

"I guess I should see this game by myself and shut up about things I don't know. Yes, I enjoy some smooth gaming, but I wouldn't like to wait 2 years after release to run a game smoothly with everything cranked up."that's probably a good idea. Crysis was made with future hardware in mind. It's like a freaking tech demo. Ahead of it's time and beaaaaaautiful. check it out on max settings,...then come back tell us what you think.

TimoKyyro - Wednesday, December 15, 2010

Thank you for the SmallLuxGPU test. That really made me decide to get this card. I make 3D animations with Blender in Ubuntu, so the only thing holding me back is driver support. Do these cards work in Ubuntu? Would it be possible for you to test whether the Linux drivers work at this time?