AMD's Radeon HD 6970 & Radeon HD 6950: Paving The Future For AMD

by Ryan Smith on December 15, 2010 12:01 AM EST

Tweaking PowerTune

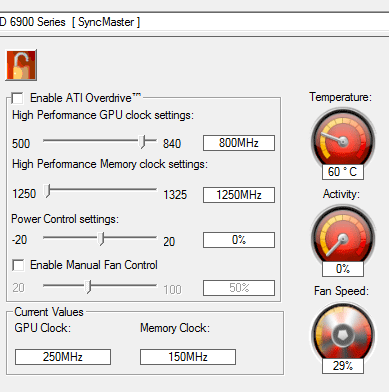

While the primary purpose of PowerTune is to keep the power consumption of a video card within its TDP in all cases, AMD has realized that PowerTune isn’t necessarily something everyone wants, and so they’re making it adjustable in the Overdrive control panel. With Overdrive you’ll be able to adjust the PowerTune limits both up and down by up to 20% to suit your needs.

We’ll start with the case of increasing the PowerTune limit. While AMD does not allow users to completely turn off PowerTune, they’re offering the next best thing by allowing you to raise the limit. The Overdrive slider allows a +/- 20% adjustment, which in the case of the 6970 means the PowerTune limit can be set anywhere between 200W and 300W, the latter being the PCI Express specification's maximum for a single card.
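The slider arithmetic is simple enough to sketch. This assumes the +/-20% is applied as a percentage of the 6970's 250W default limit; the function and constant names are hypothetical and are not part of AMD's Overdrive software.

```python
# Sketch of the PowerTune slider range for a reference Radeon HD 6970.
# Assumption: the +/-20% adjustment is a percentage of the 250W default limit.

DEFAULT_LIMIT_W = 250   # stock PowerTune limit for the 6970
MAX_ADJUST = 0.20       # Overdrive slider range, +/-20%

def powertune_limit(adjust: float, default_w: float = DEFAULT_LIMIT_W) -> float:
    """Return the effective limit for a hypothetical slider setting in [-0.20, +0.20]."""
    if not -MAX_ADJUST <= adjust <= MAX_ADJUST:
        raise ValueError("Overdrive only allows a +/-20% adjustment")
    return default_w * (1 + adjust)

low, high = powertune_limit(-MAX_ADJUST), powertune_limit(MAX_ADJUST)
print(f"{low:.0f}W to {high:.0f}W")
```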

Ultimately the purpose of raising the PowerTune limit depends on just how far you raise it. A slight increase can bring a slight performance advantage in any game/application that is held back by PowerTune, while going the whole nine yards to 20% is for all practical purposes disabling PowerTune at stock clocks and voltages.

We’ve already established that at the stock PowerTune limit of 250W only FurMark and Metro 2033 are PowerTune limited, with only the former limited in any meaningful way. So with that in mind we increased our PowerTune limit to 300W and re-ran our power/temperature/noise tests to look at the full impact of using the 300W limit.

| Radeon HD 6970: PowerTune Performance | PowerTune 250W | PowerTune 300W |
| --- | --- | --- |
| Crysis Temperature (°C) | 78 | 79 |
| FurMark Temperature (°C) | 83 | 90 |
| Crysis Power (system, at wall) | 340W | 355W |
| FurMark Power (system, at wall) | 361W | 422W |

As expected, both power and temperature increase under FurMark with PowerTune at 300W. At this point FurMark is no longer constrained by PowerTune, and our 6970 runs at 880MHz throughout the test. Overall, power consumption measured at the wall increased by roughly 60W (361W to 422W), while FurMark's core clock is 46.6% higher. It was also under this 300W limit that we “uncapped” PowerTune for Metro 2033, and we found that even though Metro was being throttled at times, the performance impact was vanishingly small.
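The deltas between the two runs can be pulled straight from the table. The implied average FurMark clock under the 250W cap is our own back-calculation from the 880MHz and 46.6% figures, not a measured value.

```python
# Deltas between the 250W and 300W PowerTune runs, using the table's figures.
# Power is total system draw measured at the wall, so these are system-level deltas.

results = {
    # metric: (value at 250W limit, value at 300W limit)
    "Crysis power (W)": (340, 355),
    "FurMark power (W)": (361, 422),
    "Crysis temperature (C)": (78, 79),
    "FurMark temperature (C)": (83, 90),
}

for name, (at_250, at_300) in results.items():
    print(f"{name}: +{at_300 - at_250}")

# Uncapped, FurMark holds 880MHz. If that is 46.6% faster than the 250W run,
# the implied *average* core clock under the 250W cap is roughly 880 / 1.466.
UNCAPPED_MHZ = 880
SPEEDUP = 0.466
implied_avg_mhz = UNCAPPED_MHZ / (1 + SPEEDUP)
print(f"Implied average FurMark clock at 250W: ~{implied_avg_mhz:.0f}MHz")
```

The same subtraction gives the 15W Crysis increase discussed next.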

Meanwhile we found something interesting when running Crysis. Even though Crysis is not impacted by PowerTune, Crysis’ power consumption still crept up by 15W. Performance is exactly the same, and yet here we are with slightly higher power consumption. We don’t have a good explanation for this at this point – PowerTune only affects the core clock (and not the core voltage), and we never measured Crysis taking a hit at 250W or 300W, so we’re not sure just what is going on. However we’ve already established that FurMark is the only program realistically impacted by the 250W limit, so at stock clocks there’s little reason to increase the PowerTune limit.

This does bring up overclocking however. Due to the limited amount of time we had with the 6900 series we have not been able to do a serious overclocking investigation, but as clockspeed is a factor in the power equation, PowerTune is going to impact overclocking. You’re going to want to raise the PowerTune limit when overclocking, otherwise PowerTune is liable to bring your clocks right back down to keep power consumption below 250W. The good news for hardcore overclockers is that while AMD set a 20% limit on our reference cards, partners will be free to set their own tweaking limits – we’d expect high-end cards like the Gigabyte SOC, MSI Lightning, and Asus Matrix lines to all feature higher limits to keep PowerTune from throttling extreme overclocks.
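Why overclocks collide with PowerTune follows from the textbook approximation for CMOS dynamic power, P ≈ αCV²f: power rises linearly with clock and with the square of voltage. The sketch below is a toy model under loudly stated assumptions: it treats the whole board power as dynamic (ignoring leakage and memory), and the 230W baseline, 950MHz overclock, and 5% voltage bump are hypothetical values chosen for illustration, not measurements.

```python
# Toy model: dynamic power scales roughly with C * V^2 * f.
# Assumptions (hypothetical, not measured): all board power is treated as dynamic
# core power, and the baseline draw and overclock settings are made up.

def scaled_power(base_w: float, clock_ratio: float, voltage_ratio: float = 1.0) -> float:
    """Scale a baseline power figure by clock (linear) and voltage (quadratic)."""
    return base_w * clock_ratio * voltage_ratio ** 2

def throttles(power_w: float, limit_w: float) -> bool:
    """Simplification: the cap pulls clocks down whenever power would exceed the limit."""
    return power_w > limit_w

BASE_W = 230.0   # hypothetical draw of some game at stock clocks
STOCK_MHZ = 880
OC_MHZ = 950     # hypothetical overclock

for volt_ratio in (1.00, 1.05):
    p = scaled_power(BASE_W, OC_MHZ / STOCK_MHZ, volt_ratio)
    for limit in (250, 300):
        state = "throttled" if throttles(p, limit) else "ok"
        print(f"V x{volt_ratio:.2f}: ~{p:.0f}W vs {limit}W limit -> {state}")
```

In this toy case the clock bump alone stays under 250W, but adding voltage pushes the estimate to roughly 274W, which only the raised 300W limit accommodates.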

Meanwhile there’s a second scenario AMD has thrown at us for PowerTune: tuning down. Although we generally live by the “more is better” mantra, there is some logic to this. Going back to our dynamic range example, shrinking the dynamic power range pushes down the power hogs at the top of the spectrum, but because AMD can now use higher default core clocks, the power consumption of low-impact games and applications goes up. In essence, power consumption for those games gets slightly worse because their performance has improved.

Traditionally V-sync has been the preferred method of limiting power consumption by limiting a card’s performance, but V-sync introduces additional input lag and the potential for skipped frames when triple-buffering is not available, making it a suboptimal solution in some cases. Thus if you wanted to keep a card at a lower performance/power level for a given game or application but did not want to use V-sync, you were out of luck unless you were willing to adjust core clocks and voltages manually. By turning down the PowerTune limit, however, you can now constrain power consumption and performance much more simply.

As with the 300W PowerTune limit, we ran our power/temperature/noise tests with the 200W limit to see what the impact would be.

| Radeon HD 6970: PowerTune Performance | PowerTune 250W | PowerTune 200W |
| --- | --- | --- |
| Crysis Temperature (°C) | 78 | 71 |
| FurMark Temperature (°C) | 83 | 71 |
| Crysis Power (system, at wall) | 340W | 292W |
| FurMark Power (system, at wall) | 361W | 292W |

Right off the bat everything is lower. FurMark is now at 292W, and quite surprisingly Crysis is also at 292W. This follows from the fact that most games don’t push a card anywhere near its limit at the default setting, so lowering the ceiling far enough brings the more power-hungry games and applications down to the same power level as lighter ones. At 200W, both Crysis and FurMark end up pinned at the cap.
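The convergence can be illustrated with a toy power cap that, like PowerTune, adjusts only the core clock. This is a sketch under hypothetical assumptions: board power is taken to scale linearly with clock, and the two uncapped wattages ("light game" at 230W, "power virus" at 300W) are invented for illustration rather than taken from our measurements.

```python
# Toy model of a power cap that only adjusts core clock.
# Assumptions: board power scales linearly with core clock, and the uncapped
# board-power figures below are hypothetical, chosen only to illustrate the effect.

STOCK_MHZ = 880

def capped(uncapped_w: float, limit_w: float, stock_mhz: float = STOCK_MHZ):
    """Return (clock_mhz, power_w) after the cap pulls the clock down if needed."""
    if uncapped_w <= limit_w:
        return stock_mhz, uncapped_w        # under the limit: no throttling
    scale = limit_w / uncapped_w            # linear power-vs-clock assumption
    return stock_mhz * scale, limit_w

workloads = {"light game": 230.0, "power virus": 300.0}  # hypothetical uncapped watts

for limit in (250, 200):
    for name, watts in workloads.items():
        mhz, power = capped(watts, limit)
        print(f"{limit}W limit, {name}: {mhz:.0f}MHz, {power:.0f}W")
```

At the 250W setting only the power virus is throttled; at 200W both workloads are clamped to the same wattage, just at different clocks.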

Although not whisper quiet, our 6970 is definitely quieter at the 200W limit than the default 250W limit thanks to the lower power consumption. However the 200W limit also impacts practically every game and application we test, so performance is definitely going to go down for everything if you do reduce the PowerTune limit by the full 20%.

| Radeon HD 6970: PowerTune Crysis Performance (fps) | PowerTune 250W | PowerTune 200W |
| --- | --- | --- |
| 2560x1600 | 36.6 | 28 |
| 1920x1200 | 51.5 | 43.3 |
| 1680x1050 | 63.3 | 52 |

At 200W, you’re looking at roughly 77%-84% of the performance for Crysis. The exact value will depend on just how heavy a load the specific game or application was in the first place.
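The relative performance at each resolution is just the ratio of the two frame rates in the table:

```python
# Relative Crysis performance at the 200W limit, from the table's frame rates.
crysis_fps = {
    # resolution: (fps at 250W, fps at 200W)
    "2560x1600": (36.6, 28.0),
    "1920x1200": (51.5, 43.3),
    "1680x1050": (63.3, 52.0),
}

for res, (fps_250, fps_200) in crysis_fps.items():
    print(f"{res}: {fps_200 / fps_250:.1%} of 250W performance")
```

This works out to about 76.5% at 2560x1600, 84.1% at 1920x1200, and 82.1% at 1680x1050.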

168 Comments


Ryan Smith - Wednesday, December 15, 2010

Exactly the same as on Cypress.

L2: 128KB per ROP block (so 512KB)

L1: 8KB per SIMD

LDS: 32KB per SIMD

GDS: 64KB

http://images.anandtech.com/doci/4061/MidLevelView...

I don't have the register file size readily available.

DanNeely - Wednesday, December 15, 2010

How likely is the decrease from 2 to 1 operations per clock to affect real-world applications?

yeraldin37 - Wednesday, December 15, 2010

My current cards are running at 870MHz (GPU) and 1100MHz (clock), faster than a stock 5870. Those benchmarks for the new 6970 are really disappointing. I was seriously expecting to get a single 6970 for Christmas to replace my 5850OC CF cards and make room for additional cards, or even have a free PCIe slot to plug in my GTX 460 for PhysX capability. I was going to be happy to get at least 80% of my current 5850CF setup's performance from the new 6970. What a joke! I will not make any move and will wait for the upcoming next-generation 28nm AMD GPUs. To be fair, we have to mention all the great efforts from the AMD team to bring new technology to the newest Radeon cards; however, it's not enough performance for die-hard gamers. If the GTX 580 were 20% cheaper I might consider buying one; I personally never ever pay more than $400 for one video card.

Nfarce - Wednesday, December 15, 2010

Reading Tom's Hardware, they essentially slam AMD for marketing these cards as 570/580 beaters. Guru3D is also less than friendly. Interestingly, *both* sites have benches showing the 570 and 580 beating the 6950 and 6970 commandingly. What's up with that exactly?

fausto412 - Wednesday, December 15, 2010

It's called AMD didn't deliver on the hype... they deserve to get slammed.

medi01 - Wednesday, December 15, 2010

AMD delivers cards with a better performance/price ratio that also consume less power. How is that a reason to "slam" them, eh?

zst3250 - Friday, December 31, 2010

Off yourself cretin, prefearbly by getting your cranium kicked in.

Mr Perfect - Thursday, December 16, 2010

Wait, is Tom's reputable again? Haven't read that site since the Athlon XP was new...

AnnonymousCoward - Wednesday, December 15, 2010

As a 30" owner and gamer, I would never run at 2560x1600 with AA enabled if that causes <60fps. I'd disable AA. Who wouldn't value framerate over AA? So when the fps is <60, please compare cards at 2560x1600 without AA, so that I'm able to apply the results to a purchase decision.

SimpJee - Wednesday, December 15, 2010

Greetings, also a 30'' gamer. If you see the FPS above 30 with AA enabled, you can assume it will be (much) higher without it enabled so what's the point in actually having the author bench it without AA? Plus, anything above 30 FPS is just icing on the cake as far as I'm concerned.