NVIDIA's GeForce GTX 570: Filling In The Gaps

by Ryan Smith on December 7, 2010 9:00 AM EST

Civilization V

The other new game in our benchmark suite is Civilization 5, the latest incarnation in Firaxis Games’ series of turn-based strategy games. Civ 5 gives us an interesting look at things that not even RTSes can match, with a much weaker focus on shading in the game world, and a much greater focus on creating the geometry needed to bring such a world to life. In doing so it uses a slew of DirectX 11 technologies, including tessellation for said geometry and compute shaders for on-the-fly texture decompression.

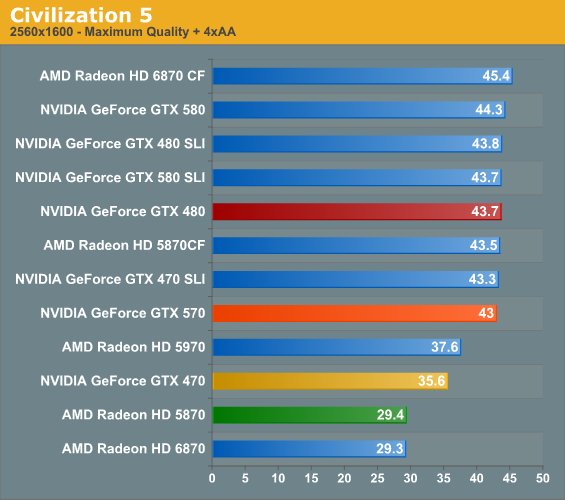

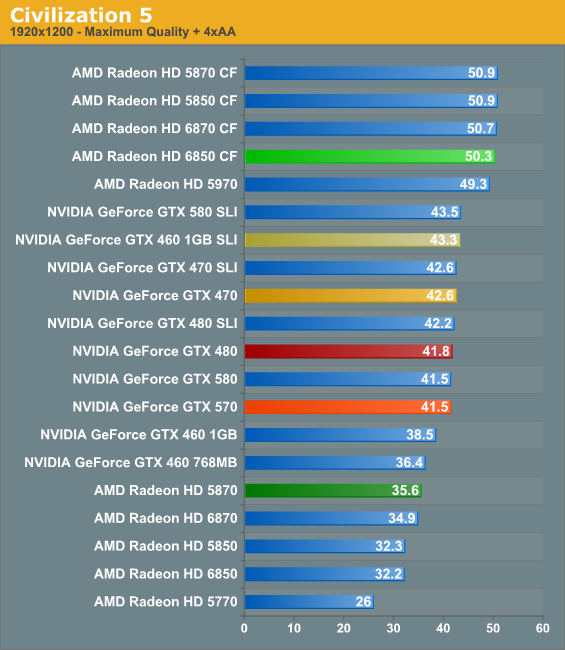

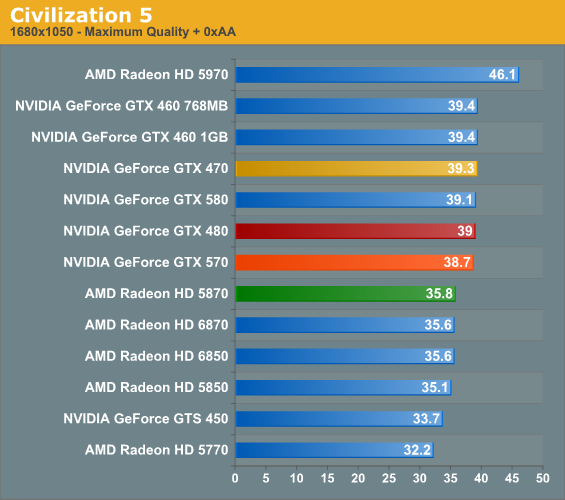

Civ 5 is a very interesting game for us. It’s one of the few games we’ve ever tested where performance increases with resolution, something we’re content to attribute to its unique use of DX11 features and what may be a better match between tessellated triangles and 16 pixel quads at higher resolutions. The result is that while it’s CPU limited most of the time, there’s very little reason to not crank up the resolution to your monitor’s native resolution.

Because we’re CPU limited, several things are going on. Until we hit 2560, the 470, 480, 570, and 580 are all effectively tied with each other, with the 470 finally falling behind at that resolution. As a result, at 2560 it appears that a GTX 570 is all that’s necessary to take full advantage of the game. Meanwhile, NVIDIA has always done better than AMD here in single-GPU scenarios, so the Radeon 5870 is nearly 20% behind the GTX 570. On the flip side, the 6850CF shoots well ahead of the GTX 570, hitting what appears to be the cap for AMD cards at around 51fps.

54 Comments

TheHolyLancer - Tuesday, December 7, 2010 - link

Likely because when the 6870s came out they included an FTW edition of the 460 and were hammered for it? Not to mention that in their own guidelines they said no OCing in launch articles.

If they do an OC comparison, it will most likely be in a special article, possibly with retail-bought samples rather than sent demos...

Ryan Smith - Tuesday, December 7, 2010 - link

As a rule of thumb I don't do overclock testing with a single card, as overclocking is too variable. I always wait until I have at least 2 cards to provide some validation to our results.

CurseTheSky - Tuesday, December 7, 2010 - link

I don't understand why so many cards still cling to DVI. Seeing that Nvidia is at least including native HDMI on their recent generations of cards is nice, but why, in 2010, on an enthusiast-level graphics card, are they not pushing the envelope with newer standards?

The fact that AMD includes DVI, HDMI, and DisplayPort natively on their newer lines of cards is probably what's going to sway my purchasing decision this holiday season. Something about having all of these small, elegant, plug-in connectors and then one massive screw-in connector just irks me.

Vepsa - Tuesday, December 7, 2010 - link

It's because most people still have DVI for their desktop monitors.

ninjaquick - Tuesday, December 7, 2010 - link

DVI is a very good plug, man. I don't see why you're hating on it.

ninjaquick - Tuesday, December 7, 2010 - link

I meant to reply to OP.

DanNeely - Tuesday, December 7, 2010 - link

Aside from Apple, almost no one uses DP. Assuming it wasn't too late in the life cycle to do so, I suspect that the new GPU used in the 6xx series of cards next year will have DP support so Nvidia can offer multi-display gaming on a single card, but only because a single DP clockgen (shared by all DP displays) is cheaper to add than 4 more legacy clockgens (one needed per VGA/DVI/HDMI display).

Taft12 - Tuesday, December 7, 2010 - link

Market penetration is just a bit more important than your "elegant connector" for an input nobody's monitor has. What a poorly thought-out comment.

CurseTheSky - Tuesday, December 7, 2010 - link

Market penetration starts by companies supporting the "cutting edge" of technology. DisplayPort has a number of advantages over DVI, most of which would be beneficial to Nvidia in the long run, especially considering the fact that they're pushing the multi-monitor / combined resolution envelope just like AMD.

Perhaps if you only hold on to a graphics card for 12-18 months, or keep a monitor for many years before finally retiring it, the connectors your new $300 piece of technology provides won't matter to you. If you're like me and tend to keep a card for 2+ years while jumping on great monitor deals every few years as they come up, it's a different ballgame. I've had DisplayPort-capable monitors for about 2 years now.

Dracusis - Tuesday, December 7, 2010 - link

I invested just under $1000 in a 30" professional 8-bit PVA LCD back in 2006 that is still better than 98% of the crappy 6-bit TN panels on the market. It has been used with 4 different video cards and supports DVI, VGA, Component HD and Composite SD. It has an ultra-wide color gamut (113%), great contrast, and a matte screen with super deep blacks and perfectly uniform backlighting, along with memory card readers and USB ports.

Neither DisplayPort nor any other monitor on the market offers me anything new or better in terms of visual quality or features.

If you honestly see an improvement in quality spending $300 every 18 months on new "value" displays then I feel sorry for you; you've made some poorly informed choices and wasted a lot of money.