AMD's Six-Core Phenom II X6 1090T & 1055T Reviewed

by Anand Lal Shimpi on April 27, 2010 12:26 AM EST. Posted in: CPUs, AMD, Phenom II X6

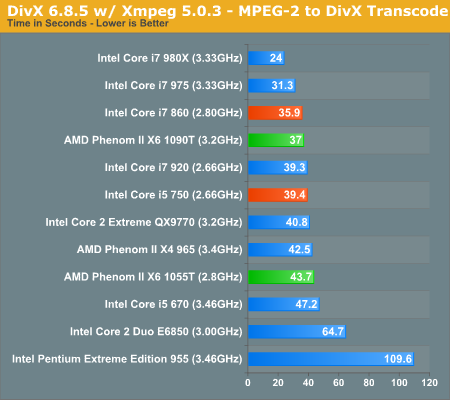

DivX 6.8.5 with Xmpeg 5.0.3

Our DivX test is the same DivX / Xmpeg 5.0.3 test we've run for the past few years now. The 1080p source file is encoded using the unconstrained DivX profile, quality/performance is set to balanced at 5, and enhanced multithreading is enabled.

Thanks to AMD's Turbo Core, the Phenom II X6 is pretty close here, but it's still not able to topple Intel's Core i5 and i7.

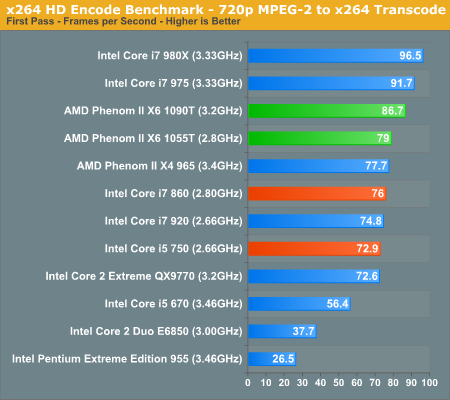

x264 HD Video Encoding Performance

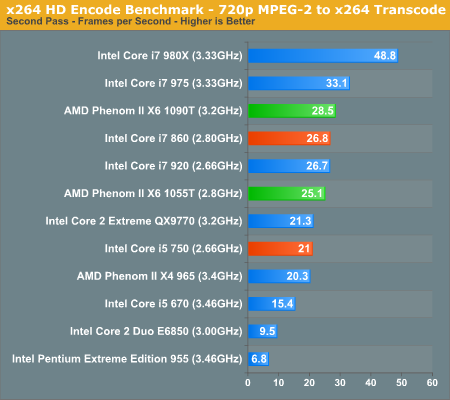

Graysky's x264 HD test uses x264 to encode a 4Mbps 720p MPEG-2 source. The focus here is on quality rather than speed, so the benchmark uses a 2-pass encode and reports the average frame rate in each pass.

And we finally see the Phenom II X6 flex its muscle. In the first pass, even the 1055T is faster than the Core i7 860.

In the actual encoding pass the 1055T falls behind the 860, but it's still a good 19% faster than the Core i5 750.
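For readers who want to check a "percent faster" figure like the one above, here is a minimal Python sketch of the arithmetic, assuming the benchmark's average frame rate is simply frames encoded divided by elapsed time. The function names, the frame count, and the timings are hypothetical placeholders chosen for illustration; they are not results from this review.

```python
def average_fps(frames_encoded: int, elapsed_seconds: float) -> float:
    """Average frame rate for a single encoding pass."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return frames_encoded / elapsed_seconds


def percent_faster(fps_a: float, fps_b: float) -> float:
    """How much faster chip A is than chip B, as a percentage of B's frame rate."""
    if fps_b <= 0:
        raise ValueError("fps_b must be positive")
    return (fps_a / fps_b - 1.0) * 100.0


# Hypothetical second-pass numbers (NOT measured data from this article):
# both chips encode the same 1000-frame clip, chip A in 50.0 s, chip B in 59.5 s.
chip_a_fps = average_fps(frames_encoded=1000, elapsed_seconds=50.0)
chip_b_fps = average_fps(frames_encoded=1000, elapsed_seconds=59.5)

print(f"Chip A is {percent_faster(chip_a_fps, chip_b_fps):.0f}% faster than chip B")
```

Note that the percentage is relative to the slower chip's frame rate, so "A is 19% faster than B" does not mean "B is 19% slower than A".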

168 Comments


silverblue - Thursday, April 29, 2010

I agree. If it's a struggle to utilise all six cores at 100%, just add another program to the mix. This may just prove once and for all if a physical Stars core can beat a logical i-core, and thus whether AMD were right to launch Thuban in the first place.

Scali - Friday, April 30, 2010

I'll say a few things to that...

A physical Stars core actually has to beat TWO logical i-cores. After all, we have 6 Stars cores vs 8 logical i-cores.

So if we were to say that the 4 physical cores on both are equal (which they're not, because the i-cores have an advantage), that leaves 2 physical cores against 4 logical cores.

Another thing is that if you have to work hard to set up a multitasking benchmark that shows Thuban in a favourable light, doesn't that already prove the opposite of what you are trying to achieve?

I mean, how realistic is it for a consumer processor to set up Virtual Box/VMWare benchmarks? Doesn't that belong in the server reviews (where as I recall, AMD's 6-cores couldn't beat Intel's 8 logical cores either in virtualization benchmarks)?

Virtualization is not something that a consumer processor needs to be particularly good at, I would say. Gaming, video processing, photo editing. Now those are things that consumers/end-users will be doing.

wyvernknight - Thursday, April 29, 2010

@mapesdhs

There's no such thing as an AM3 board with DDR2. Only an AM2+ board with DDR2 that has AM3 support. The MA770-UD3 you gave as an example is an AM2+ board with AM3 compatibility. "Support for Socket AM3 / AM2+ / AM2 processors". AM3 boards do not have support for AM2+ and AM2 processors.

mapesdhs - Thursday, April 29, 2010

Strange then that the specs pages specifically describe the sockets as being AM3.

Ian.

Skyflitter - Thursday, April 29, 2010

Could someone please tell me the difference between the Phenom II X6 1090T & 1055T?

I would like to put one of these new chips into my Gigabyte DDR2 MB but the Gigabyte web site says my board only supports the 1035T and the 1055T chips. My board is rated @ 140 W. ( GA-MA770-UD3 )

I am currently running an Athlon 64 X2 6400+ ( 3.4 GHz ) and I do not want to lose too much clock speed by going with the 1055T ( 2.8 GHz ).

Do all the new Phenom II X6 support DDR2?

cutterjohn - Friday, April 30, 2010

I'm waiting for them to cough up a new arch that delivers MUCH better per-core performance.

There is just no value proposition with their 6 core CPU that mostly matches a 5 core i7 920 which can be had for a roughly similar pricepoint, i.e. i7 930 $199 @ MicroCenter.

Either way, unless I win the giveaway :D, I'm now planning to wait at least until next year to upgrade the desktop, to see how Sandy Bridge comes out and IF AMD manages to get out their new CPU. I figure that I may as well wait now for the next sockets, LGA2011 for Intel, and what I'm sure will be a new one for AMD with their new CPU. As an added bonus I'll be skipping the 1st generation of DX11 hw, as new architectures to support new APIs DX11/OGL4 tend to not be quite the best optimized or robust, especially apparently in nVidia's case this time. (Although AMD had an easier time of it as they made few changes from R7XX to R8XX as is usual for them. AMD need to really start spending some cash on R&D if they wish to remain relevant.)

silverblue - Friday, April 30, 2010

The true point of the X6 is heavy multi-tasking. I'd love to see a real stress test thrown at these to show what they can do, and thus validate their existence.

pow123 - Wednesday, May 5, 2010

You would have to be insane to pay $1000 for a chip that may be good for gaming. At $199 with slightly lower performance it's a no-brainer. When I build a system, I don't care if the frame rates etc. are 10 to 15% better. Who cares; the chip is fast and I have no problems playing high end games. I have no special setup and it does everything that my friend's i7 can do. Good for me, I get more PC for the buck. Go ahead and go broke buying just a motherboard and CPU when I can get a modern motherboard, a CPU, 6 gigs of DDR3 1600, a 1TB HD and a DVD-RW. More for me.

spda242 - Sunday, May 2, 2010

I would really like to have seen a World of Warcraft test with these CPUs like you did with the Intel 6-core.

It would be interesting to see if WoW can use all cores and to what performance.

hajialibaig - Wednesday, May 5, 2010

Not sure why there is no Power vs. Performance vs. Price comparison of the different processors. As for the performance, it could be anything that you want, such as Gaming Performance or Video Encoding.

Such a comparison should be interesting, since you may as well pay back the higher initial price via power savings.