NVIDIA’s GeForce GTX 480 and GTX 470: 6 Months Late, Was It Worth the Wait?

by Ryan Smith on March 26, 2010 7:00 PM EST- Posted in

- GPUs

Temperature, Power, & Noise: Hot and Loud, but Not in the Good Way

For all of the gaming and compute performance data we have seen so far, we’ve only seen half of the story. With a 500mm²+ die and a TDP over 200W, there’s a second story to be told about the power, temperature, and noise characteristics of the GTX 400 series.

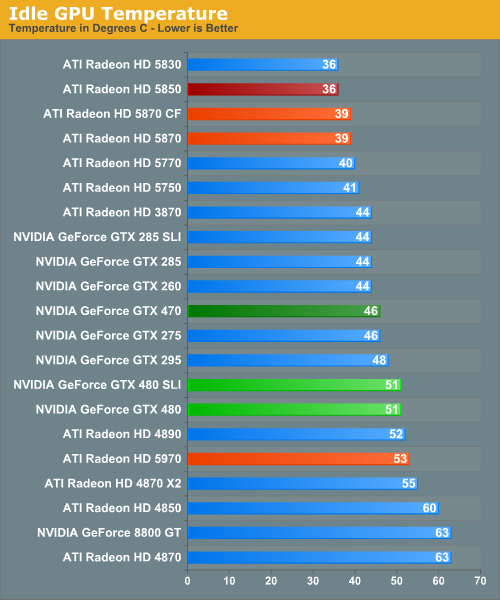

Starting with idle temperatures, we can quickly see some distinct groupings among our cards. The top of the chart is occupied solely by AMD’s Radeon 5000 series, whose small die and low idle power usage let these cards idle at very cool temperatures. It’s not until halfway down the chart that we find our first GTX 400 card, with the 470 at 46C. Truth be told, we were expecting something a bit better out of it, given that its 33W idle is only a few watts over the 5870 and that it has a fairly large cooler to work with. Farther down the chart is the GTX 480, which is in the over-50 club at 51C idle. This is where NVIDIA has to pay the piper on their die size: even the amazingly low idle clockspeeds of 50MHz core, 101MHz shader, and 67.5MHz RAM aren’t enough to drop it any further.

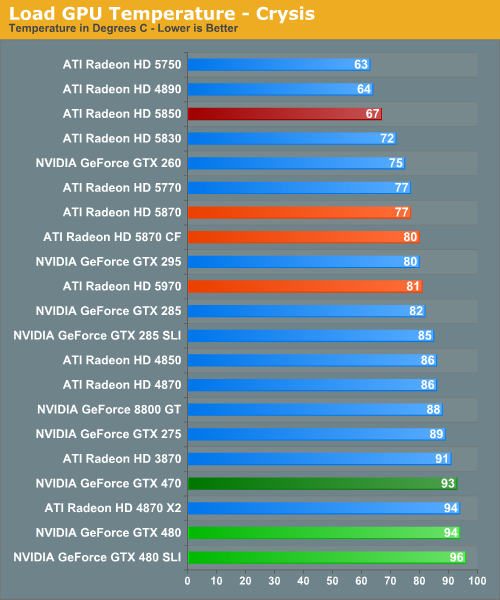

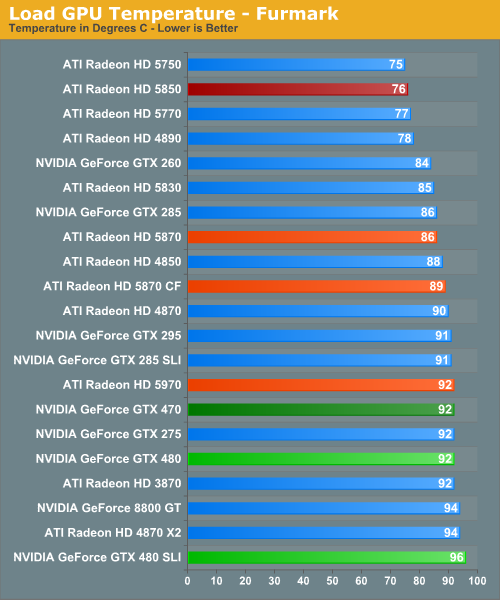

For our load temperatures, we have gone ahead and added Crysis to our temperature testing so that we can see both the worst-case temperatures of FurMark and a more normal gameplay temperature.

At this point the GTX 400 series is in a pretty exclusive club of hot cards: under Crysis the only other single-GPU card above 90C is the 3870, and the GTX 480 SLI is the hottest of any configuration we have tested. Even the dual-GPU cards don’t get quite this hot. In fact, it’s interesting that, unlike under FurMark, there’s a much larger spread among card temperatures here, which only makes the GTX 400 series stand out more.

While we’re on the subject of temperatures, we should note that NVIDIA has changed the fan ramp-up behavior from the GTX 200 series. Rather than reacting immediately, the GTX 400 series fans have a ramp-up delay of a few seconds when responding to high temperatures, meaning you’ll actually see those cards get hotter than our sustained temperatures. This won’t have any significant impact on the card, but if you’re like us your eyes will pop out of your head at least once when you see a GTX 480 hitting 98C on FurMark.

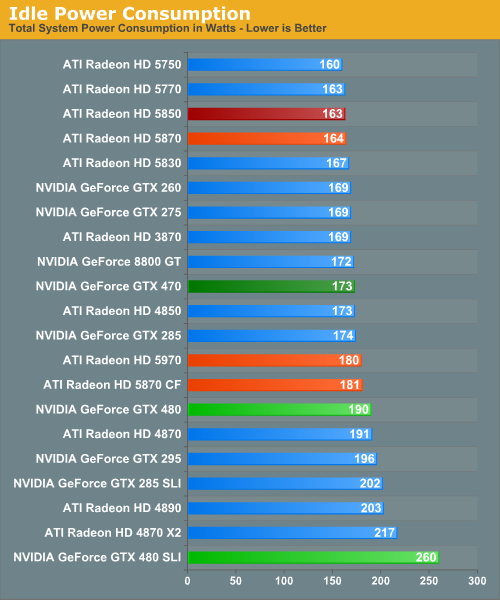

Up next is power consumption. As we’ve already discussed, the GTX 480 and GTX 470 have an idle power consumption of 47W and 33W respectively, putting them out of the running for the least power hungry of the high-end cards. Furthermore the 1200W PSU we switched to for this review has driven up our idle power load a bit, which serves to suppress some of the differences in idle power draw between cards.

With that said, the GTX 400 series either does decently or poorly, depending on your point of view. The GTX 480 is below our poorly-idling Radeon 4000 series cards, but well above the 5000 series. Meanwhile the GTX 470 is in the middle of the pack, sharing space with most of the GTX 200 series. The lone outlier here is the GTX 480 SLI. AMD’s power saving mode for Crossfire cards means that the GTX 480 SLI is all alone at a total power draw of 260W when idle.

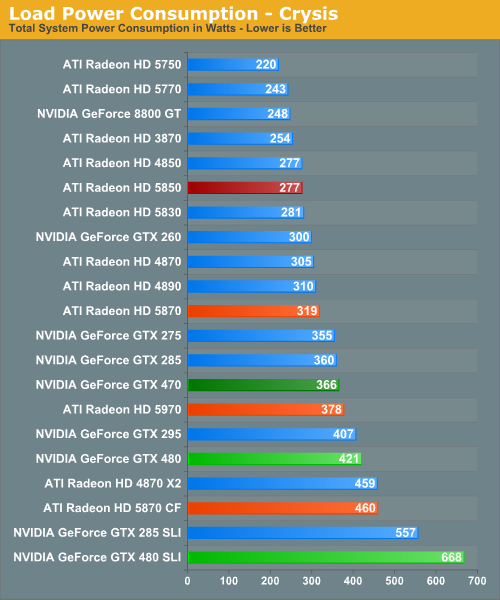

For load power we have Crysis and FurMark, the results of which are quite interesting. Under Crysis not only is the GTX 480 SLI the most demanding card setup, as we would expect, but the GTX 480 itself isn’t too far behind. As a single-GPU card it pulls in more power than either the GTX 295 or the Radeon 5970, both of which are dual-GPU cards. Farther up the chart is the GTX 470, which is the second most power-hungry of our single-GPU cards.

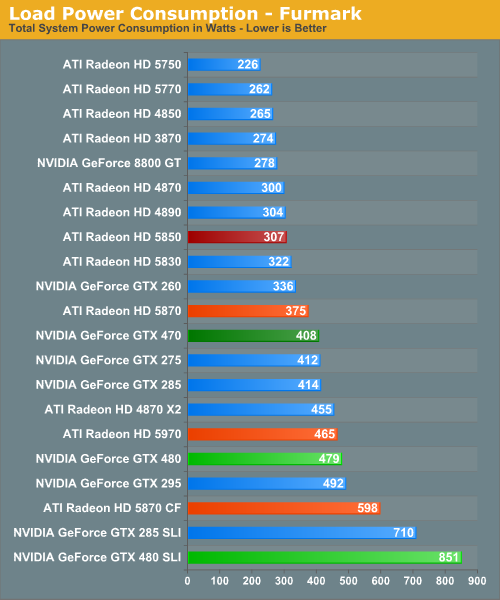

Under FurMark our results change ever so slightly. The GTX 480 manages to get under the GTX 295, while the GTX 470 falls in the middle of the GTX 200 series pack. A special mention goes out to the GTX 480 SLI here, which at 851W under load is the greatest power draw we have ever seen for a pair of GPUs.

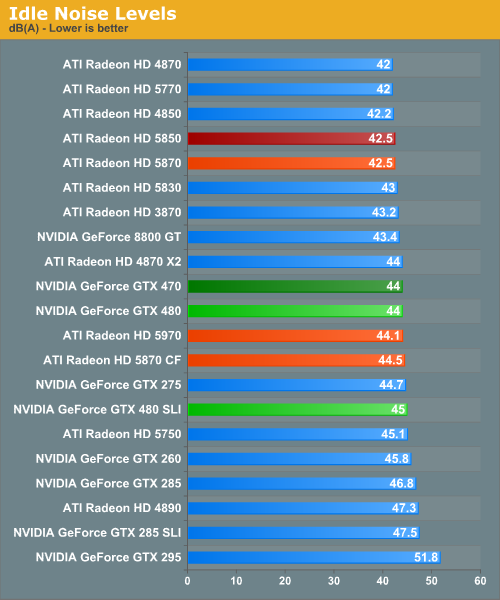

Idle noise doesn’t contain any particular surprises since virtually every card can reduce its fan speed to near-silent levels and still stay cool enough. The GTX 400 series is within a few dB of our noise floor here.

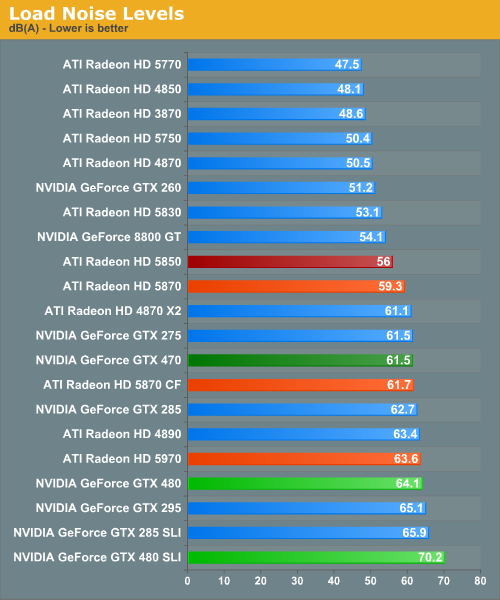

Hot, power hungry things are often loud things, and there are no disappointments here. At 70dB the GTX 480 SLI is the loudest card configuration we have ever tested, while at 64.1dB the GTX 480 is the loudest single-GPU card, beating out even our unreasonably loud 4890. Meanwhile the GTX 470 is in the middle of the pack at 61.5dB, coming in amidst some of our louder single-GPU cards and our dual-GPU cards.
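To put the SLI figure in context, here is a rough back-of-the-envelope sketch, not part of our test methodology. It assumes the measured levels can be treated as independent (incoherent) sound sources whose powers add, and that a second card would be exactly as loud as the first; the `combine_db` helper is a hypothetical name introduced only for this illustration.

```python
import math

def combine_db(levels_db):
    """Combine incoherent sound sources, each given as a level in dB."""
    # Convert each level to relative power, sum, and convert back to dB.
    total_power = sum(10 ** (level / 10) for level in levels_db)
    return 10 * math.log10(total_power)

# Load noise figures quoted in the text above.
gtx480_db = 64.1      # single GTX 480
gtx480_sli_db = 70.0  # GTX 480 SLI

# Assumption: the second card is an identical, independent source.
two_identical = combine_db([gtx480_db, gtx480_db])
excess = gtx480_sli_db - two_identical

print(f"Two identical 64.1dB sources: {two_identical:.1f}dB")
print(f"Measured SLI level above that estimate: {excess:.1f}dB")
```

Under those assumptions, two identical sources add only about 3dB, for roughly 67dB, so the 70dB SLI reading suggests each card is also running louder than a lone GTX 480 does. That fits the higher SLI temperatures, though the estimate ignores the rest of the system's noise.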

Finally, with this data in hand we went to NVIDIA to ask about the longevity of their cards at these temperatures, as seeing the GTX 480 hitting 94C sustained in a game left us worried. In response NVIDIA told us that they have done significant testing of the cards at high temperatures to validate their longevity, and their models predict a lifetime of years even at temperatures approaching 105C (the throttle point for GF100). Furthermore, as they note, they have shipped other cards that run roughly this hot, such as the GTX 295, and those cards have held up just fine.

At this point we don’t have any reason to doubt NVIDIA’s word on this matter, but with that said this wouldn’t discourage us from taking the appropriate precautions. Heat does impact longevity to some degree – we would strongly consider getting a lifetime warranty for the GTX 480 to hedge our bets.

196 Comments


nyran125 - Monday, April 12, 2010 - link

I'm going with the less power hungry ATI 5000 series. I know a 5850 card will easily fit in my case as well. There's no way I'd choose the GTX 470 over any of the ATI 5870 or 5850 cards. So that only leaves the GTX 480 against either the 5870 or the 5850. The performance increase and power increase is NOT worth me paying for an NVIDIA card that's higher in price over the 5870. I mean, even looking at the games. The games I'll probably play, Crysis and Battlefield: Bad Company 2, come out on top of the NVIDIA GTX 480. So bla.

NVIDIA, you need to make a much better card than that for me to spend money on a GTX 470 or GTX 480 over the 5870 or 5850.

nyran125 - Monday, April 12, 2010 - link

Oh and secondly, if you're buying a 200 series NVIDIA card or the GTX 480, it isn't fast enough to future proof your computer. You might as well go spend less money on a 5970 or a single 5870; you know it will last for the next 2 years, and the GTX 480 will NOT last any longer than the 5000 series with its 10-15% performance increase. I didn't like the 200 series NVIDIA cards and I'm not interested in even MORE power hungry cards. I want less power hungry cards and efficiency. To me a game plays bugger all different with 60 FPS average and 100 FPS average. If you have a 200 series card, save your money and wait for the next gen of cards, or at least wait till a DX 11 game actually comes out, not just Just Cause friggin 2..

vagos - Thursday, April 15, 2010 - link

OK, all these cards are nice. New technology is very welcome. But where are the games to push them?? If I spent $400 or $500 on a new card, where could I see a really big difference against my old 8800GT?? They sell hardware without software to support it... 2 or 3 games make no difference to me. PS3 and Xbox 360 have very old graphics cards compared to the ATI 5800 series and NVIDIA 400, and still the games are looking beautiful, and in some cases much better than on PC... Make new games for PC and then I will buy a new card! Until then I will stick with my Xbox 360...

Drizzit101 - Sunday, May 9, 2010 - link

I have been running the GTX 295. The plan was to buy a second GTX 295. Looking at the prices, I was thinking about just buying two GTX 470's. What's the better move?

Krazy Glew - Tuesday, May 11, 2010 - link

See http://semipublic.comp-arch.net/wiki/Poor_Man%27s_...

In particular: US patent 7,117,421, Transparent error correction code memory system and method, Danilak, assigned to Nvidia, 2002.

http://semipublic.comp-arch.net/wiki/Poor_Man%27s_...

Matt Campbell - Monday, August 2, 2010 - link

Ryan, what was the special sauce you used to get Badaboom working on Fermi? My GTX 460 won't run it, and Elemental's website says Fermi support won't get added until Q4 2010. http://badaboomit.com/node/507

niceboy60 - Friday, August 20, 2010 - link

I have the same problem on my GTX 480. Badaboom does not work on Fermi, according to my own experience and the official Badaboom web site. I don't think these benchmarks are accurate.

niceboy60 - Friday, August 20, 2010 - link

I bought a GTX 480 based on this review, as I do a considerable amount of video converting, only to find out that, despite the GTX 480 showing very good results when using Badaboom, the truth is Badaboom is not yet compatible with any GTX 400 series card, according to the Badaboom web site.

adder1971 - Friday, September 17, 2010 - link

The Badaboom website says it does not work and when I try it with the GTX 465 it does not work. How were you able to get it to work? I have the NVIDIA latest release drivers as of today and the latest released version of Badaboom.

wizardking - Tuesday, September 21, 2010 - link

I bought this card for it only! I used Badaboom with the numbers which you use!!!!!!