Better Image Quality: Jittered Sampling & Faster Anti-Aliasing

As we’ve stated before, the DX11 specification generally leaves NVIDIA’s hands tied. Without capsbits they can’t easily expose additional hardware features beyond what DX11 calls for, and even if they could, there’s always the risk of building hardware that almost never gets used, such as AMD’s tessellator on the Radeon HD 2000-4000 series.

So the bulk of the innovation has to come from something other than offering non-DX11 functionality to developers, and that starts with image quality.

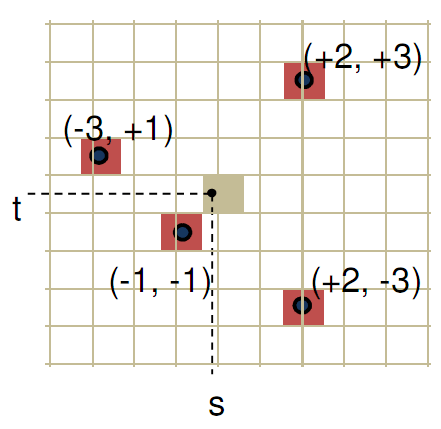

We bring up DX11 here because while it strongly defines what features need to be offered, it says very little about how things work in the backend. The Polymorph Engine is of course one example of this, but there is another case where NVIDIA has done something interesting on the backend: jittered sampling.

Jittered sampling is a long-standing technique used in shadow mapping and various post-processing effects. In shadow mapping it is usually used to create soft shadows from a shadow map: take a random sample of neighboring texels, and from that you can compute a softer shadow edge. The biggest problem with jittered sampling is that it’s computationally expensive, so its use is limited to cases where there is enough performance to pay for it.
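To make the idea concrete, here is a minimal CPU-side sketch of a jittered soft shadow lookup in Python. It is not NVIDIA’s implementation or any shipping shader; the function name `jittered_shadow`, its parameters, and the stratified layout (one random texel per cell of a small grid) are all assumptions made for illustration.

```python
import random

def jittered_shadow(shadow_map, x, y, receiver_depth,
                    cells=2, cell_size=2, rng=None):
    """Return the lit fraction (0.0 = fully shadowed, 1.0 = fully lit).

    Hypothetical sketch: the window around (x, y) is split into
    cells x cells blocks, and one randomly chosen texel is tested
    inside each block (stratified jitter).
    """
    rng = rng or random.Random()
    height, width = len(shadow_map), len(shadow_map[0])
    half = cells * cell_size // 2
    lit = 0
    for cy in range(cells):
        for cx in range(cells):
            # Pick one jittered texel inside this cell.
            sx = x - half + cx * cell_size + rng.randrange(cell_size)
            sy = y - half + cy * cell_size + rng.randrange(cell_size)
            # Clamp-to-edge addressing.
            sx = min(max(sx, 0), width - 1)
            sy = min(max(sy, 0), height - 1)
            # Standard shadow-map depth comparison.
            if receiver_depth <= shadow_map[sy][sx]:
                lit += 1
    return lit / (cells * cells)

# An 8x8 shadow map: an occluder at depth 0.2 covers the left half.
shadow_map = [[0.2] * 4 + [1.0] * 4 for _ in range(8)]
edge = jittered_shadow(shadow_map, 4, 4, 0.5)
```

Away from the occluder’s edge every sample agrees and the result is a hard 0.0 or 1.0; at the edge the samples disagree and the lit fraction lands in between, which is the softened edge. Note that each sample is a separate texel read, which is where the cost comes from.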

In DX10.1 and beyond, jittered sampling can be accelerated with the Gather4 instruction, which as the name implies gathers four neighboring texels at once. Since DX does not specify how this is implemented, NVIDIA implemented it in hardware as a single vector instruction. The alternative is to fetch each texel separately, which is how this would have to be implemented manually under DX10 and DX9.
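The difference between the two approaches can be sketched with a toy model in Python. This only counts how many texture instructions a shader would issue; it does not model hardware timing. The `TextureUnit` class, its method names, and the order in which the four texels are returned are assumptions for illustration, not the DX API.

```python
class TextureUnit:
    """Toy model that counts texture instructions issued (hypothetical)."""

    def __init__(self, texels):
        self.texels = texels
        self.issued = 0  # number of texture instructions issued so far

    def _read(self, x, y):
        # Clamp-to-edge addressing.
        height, width = len(self.texels), len(self.texels[0])
        return self.texels[min(max(y, 0), height - 1)][min(max(x, 0), width - 1)]

    def fetch(self, x, y):
        """One instruction, one texel (the DX9/DX10-style path)."""
        self.issued += 1
        return self._read(x, y)

    def gather4(self, x, y):
        """One instruction, the whole 2x2 neighborhood starting at (x, y)."""
        self.issued += 1
        return (self._read(x, y + 1), self._read(x + 1, y + 1),
                self._read(x + 1, y), self._read(x, y))


def footprint_with_fetches(unit, x, y):
    """Build the same 2x2 neighborhood from four separate fetches."""
    return (unit.fetch(x, y + 1), unit.fetch(x + 1, y + 1),
            unit.fetch(x + 1, y), unit.fetch(x, y))


texels = [[0, 1],
          [2, 3]]
manual, gathered = TextureUnit(texels), TextureUnit(texels)
same = footprint_with_fetches(manual, 0, 0) == gathered.gather4(0, 0)
```

Both paths return the same four texels, but the manual path issues four texture instructions where the gather path issues one. How much of that turns into real-world speed depends entirely on the hardware behind the instruction.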

NVIDIA’s own benchmarks put the performance advantage of this at roughly 2x over the non-vectorized implementation on the same hardware. The benefit for developers will be that those who implement jittered sampling (or any other technique that can use Gather4) will find it to be a much less expensive technique here than it was on NVIDIA’s previous generation hardware. For gamers, this will mean better image quality through the greater use of jittered sampling.

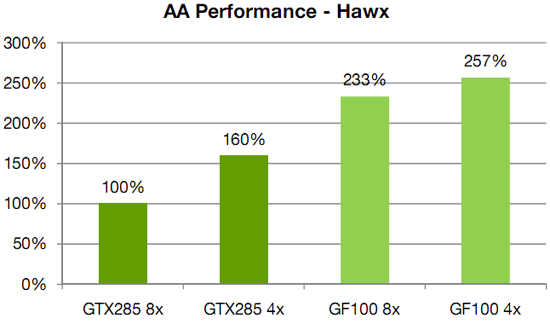

Meanwhile, anti-aliasing performance overall has received a significant speed boost. As with AMD, NVIDIA has tweaked their ROPs to reduce the performance hit of 8x MSAA, which on previous-generation GPUs could be massive. Specifically, NVIDIA has improved the compression efficiency of the ROPs, and also cites the fact that having additional ROPs improves performance by allowing the hardware to better digest smaller primitives that can’t compress well.

NVIDIA's HAWX data - not independently verified

This is something we’re certainly going to be testing once we have the hardware, although we’re still not sold on the idea that the quality improvement from 8x MSAA is worth any performance hit in most situations. There is one situation, however, where additional MSAA samples do make a stark difference, which we’ll get to next.

115 Comments


chizow - Monday, January 18, 2010 - link

Looks like Nvidia G80'd the graphics market again by completely redesigning major parts of their rendering pipeline. Clearly not just a doubling of GT200, some of the changes are really geared toward the next-gen of DX11 and PhysX driven games.

One thing I didn't see mentioned anywhere was HD sound capabilities similar to AMD's 5 series offerings. I'm guessing they didn't mention it, which makes me think its not going to be addressed.

mm2587 - Monday, January 18, 2010 - link

for nvidia to "g80" the market again they would need parts far faster then anything amd had to offer and to maintain that lead for several months. The story is in fact reversed. AMD has the significantly faster cards and has had them for months now. gf100 still isn't here and the fact that nvidia isn't signing the praises of its performance up and down the streets is a sign that they're acceptable at best. (acceptable meaning faster then a 5870, a chip that's significantly smaller and cheaper to make)

chizow - Monday, January 18, 2010 - link

Nah, they just have to win the generation, which they will when Fermi launches. And when I mean "generation", I mean the 12-16 month cycles dictated by process node and microarchitecture. It was similar with G80, R580 had the crown for a few months until G80 obliterated it. Even more recently with the 4870X2 and GTX 295. AMD was first to market by a good 4 months but Nvidia still won the generation with GTX 295.

FaaR - Monday, January 18, 2010 - link

Win schmin.

The 295 ran extremely hot, was much MUCH more expensive to manufacture, and the performance advantage in games was negligible for the most part. No game is so demanding the 4870 X2 can't run it well.

The geforce 285 is at least twice as expensive as a radeon 4890, its closest competitor, so how you can say Nvidia "won" this round is beyond me.

But I suppose with fanboy glasses on you can see whatever you want to see. ;)

beck2448 - Monday, January 18, 2010 - link

Its amazing to watch ATI fanboys revise history.

The 295 smoked the competition and ran cooler and quieter. Fermi will inflict another beatdown soon enough.

chizow - Monday, January 18, 2010 - link

Funny the 295 ran no hotter (and often cooler) with a lower TDP than the 4870X2 from virtually every review that tested temps and was faster as well. Also the GTX 285 didn't compete with the 4890, the 275 did in both price and performance.

Its obvious Nvidia won the round as these points are historical facts based on mounds of evidence, I suppose with fanboy glasses on you can see whatever you want to see. ;)

Paladin1211 - Monday, January 18, 2010 - link

Hey kid, sometimes less is more. You dont need to post that much just to say "nVidia wins, and will win again". This round AMD has won with 2mil cards drying up the graphics market. You cant change this, neither could nVidia.

Just come out and buy a Fermi, which is 15-20% faster than a HD 5870, for $500-$600. You only have to wait 3 months, and save some bucks until then. I have a HD 5850 here and I'm waiting for Tegra 2 based smartphone, not Fermi.

Calin - Tuesday, January 19, 2010 - link

Both Tegra 2 and Fermi are extraordinary products - if what NVidia says about them is true. Unfortunately, it doesn't seem like any of them is a perfect fit for the gaming desktop.

Calin - Monday, January 18, 2010 - link

You don't win a generation with a very-high-end card - you win a generation with a mainstream card (as this is where most of the profits are). Also, low-end cards are very high-volume, but the profit from each unit is very small.

You might win the bragging rights with the $600, top-of-the-line, two-in-one cards, but they don't really have a market share.

chizow - Monday, January 18, 2010 - link

But that's not how Nvidia's business model works for the very reasons you stated. They know their low-end cards are very high-volume and low margin/profit and will sell regardless.

They also know people buying in these price brackets don't know about or don't care about features like DX11 and as the 5670 review showed, such features are most likely a waste on such low-end parts to begin with (a 9800GT beats it pretty much across the board).

The GPU market is broken up into 3 parts, High-end, performance and mainstream. GF100 will cover High-end and the top tier in performance with GT200 filling in the rest to compete with the lower-end 5850. Eventually the technology introduced in GF100 will diffuse down to lower-end parts in that mainstream segment, but until then, Nvidia will deliver the cutting edge tech to those who are most interested in it and willing to pay the premium for it. High-end and performance minded individuals.