Better Image Quality: Jittered Sampling & Faster Anti-Aliasing

As we’ve stated before, the DX11 specification generally leaves NVIDIA’s hands tied. Without caps bits they can’t easily expose additional hardware features beyond what DX11 calls for, and even if they could, there’s always the risk of building hardware that almost never gets used, such as AMD’s tessellator on the Radeon HD 2000-4000 series.

So the bulk of the innovation has to come from something other than offering non-DX11 functionality to developers, and that starts with image quality.

We bring up DX11 here because while it strongly defines what features need to be offered, it says very little about how things work in the backend. The Polymorph Engine is of course one example of this, but there is another case where NVIDIA has done something interesting on the backend: jittered sampling.

Jittered sampling is a long-standing technique used in shadow mapping and various post-processing effects. Here it is most commonly used to create soft shadows from a shadow map: take a random sample of neighboring texels, and from those samples compute a softer shadow edge. The biggest problem with jittered sampling is that it’s computationally expensive, so its use is limited to cases where there is enough performance to pay for it.
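To make the soft shadow idea concrete, here is a minimal CPU-side sketch in Python. It is an illustration only, not NVIDIA's or any game's implementation: the function names (`shadow_test`, `jittered_shadow`), the sample count, the jitter radius and the list-of-lists shadow map are all assumptions made for this example.

```python
import random


def shadow_test(shadow_map, x, y, receiver_depth):
    """Return 1.0 if the receiver is lit at texel (x, y), else 0.0."""
    height = len(shadow_map)
    width = len(shadow_map[0])
    # Clamp so that jittered offsets never index outside the map.
    x = min(max(x, 0), width - 1)
    y = min(max(y, 0), height - 1)
    # Lit if nothing stored in the shadow map is closer to the light.
    return 1.0 if receiver_depth <= shadow_map[y][x] else 0.0


def jittered_shadow(shadow_map, x, y, receiver_depth,
                    samples=16, radius=2, rng=None):
    """Average several randomly offset shadow tests around (x, y).

    The result is a lit fraction between 0.0 (fully shadowed) and
    1.0 (fully lit); values in between give the soft edge.
    """
    if rng is None:
        rng = random.Random(0)  # fixed seed keeps the sketch repeatable
    lit = 0.0
    for _ in range(samples):
        dx = rng.randint(-radius, radius)
        dy = rng.randint(-radius, radius)
        lit += shadow_test(shadow_map, x + dx, y + dy, receiver_depth)
    return lit / samples
```

Each shaded pixel pays for `samples` texel reads instead of one, which is where the cost comes from.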

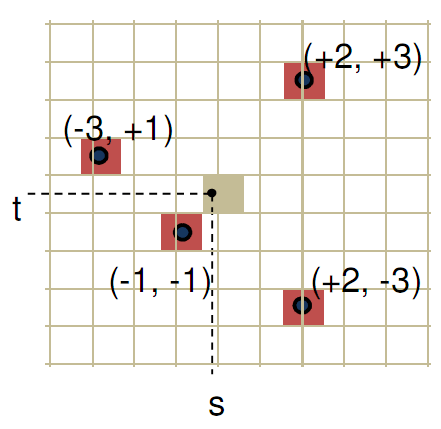

In DX10.1 and beyond, jittered sampling can be achieved via the Gather4 instruction, which as the name implies gathers four neighboring texels in a single operation. Since DX does not specify how this is implemented, NVIDIA implemented it in hardware as a single vector instruction. The alternative is to fetch each texel separately, which is how this has to be implemented manually under DX10 and DX9.
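The difference between the two approaches can be sketched with a toy texture class in Python. This is a conceptual model only: `CountingTexture`, its `instructions` counter and the order in which the four texels are returned are assumptions for illustration, and the counter says nothing about real hardware timing.

```python
class CountingTexture:
    """Toy texture that counts how many fetch instructions were issued."""

    def __init__(self, texels):
        self.texels = texels
        self.height = len(texels)
        self.width = len(texels[0])
        self.instructions = 0

    def _read(self, x, y):
        # Clamp addressing at the texture edges.
        x = min(max(x, 0), self.width - 1)
        y = min(max(y, 0), self.height - 1)
        return self.texels[y][x]

    def fetch(self, x, y):
        """One instruction returns one texel."""
        self.instructions += 1
        return self._read(x, y)

    def gather4(self, x, y):
        """One instruction returns the 2x2 block whose top-left is (x, y)."""
        self.instructions += 1
        return (self._read(x, y), self._read(x + 1, y),
                self._read(x, y + 1), self._read(x + 1, y + 1))


def footprint_separate(tex, x, y):
    """DX9/DX10 style: four separate fetches for the same 2x2 block."""
    return (tex.fetch(x, y), tex.fetch(x + 1, y),
            tex.fetch(x, y + 1), tex.fetch(x + 1, y + 1))


def footprint_gathered(tex, x, y):
    """Gather style: the same four texels from a single instruction."""
    return tex.gather4(x, y)
```

Both functions return the same four values; only the number of instructions issued differs (four versus one in this model).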

NVIDIA’s own benchmarks put the performance advantage of this at roughly 2x over the non-vectorized implementation on the same hardware. For developers, jittered sampling (or any other technique that can use Gather4) will be much less expensive here than it was on NVIDIA’s previous-generation hardware. For gamers, this will mean better image quality through greater use of jittered sampling.

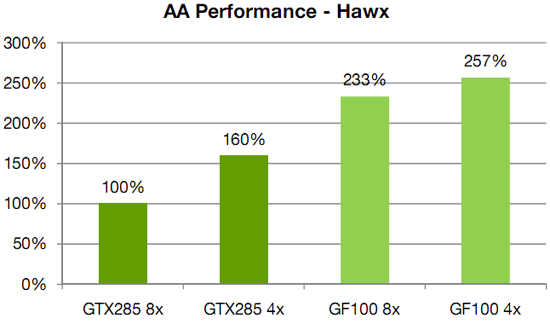

Meanwhile, anti-aliasing performance overall has received a significant speed boost. As AMD did, NVIDIA has tweaked its ROPs to reduce the performance hit of 8x MSAA, which on previous-generation GPUs could result in a massive performance drop. Specifically, NVIDIA has improved the compression efficiency in the ROPs, and also cites the fact that having additional ROPs improves performance by allowing the hardware to better digest smaller primitives that can’t compress well.

NVIDIA's HAWX data - not independently verified

This is something we’re certainly going to be testing once we have the hardware, although we’re still not sold on the idea that the quality improvement from 8x MSAA is worth any performance hit in most situations. There is one situation, however, where additional MSAA samples do make a stark difference, which we’ll get to next.

115 Comments

DanNeely - Monday, January 18, 2010 - link

For the benefit of myself and everyone else who doesn't follow gaming politics closely, what is "the infamous Batman: Arkham Asylum anti-aliasing situation"?

sc3252 - Monday, January 18, 2010 - link

Nvidia helped get AA working in batman which also works on ATI cards. If the game detects anything besides a Nvidia card it disables AA. The reason some people are angry is when ATI helps out with games it doesn't limit who can use the feature, at least that's what they (AMD) claim.

san1s - Monday, January 18, 2010 - link

the problem was that nvidia did not do qa testing on ati hardware

Meghan54 - Monday, January 18, 2010 - link

And nvidia shouldn't have since nvidia didn't develop the game. On the other hand, you can be quite certain that the devs. did run the game on Ati hardware but only lock out the "preferred" AA design because of nvidia's money nvidia invested in the game.

And that can be plainly seen by the fact that when the game is "hacked" to trick the game into seeing an nvidia card installed despite the fact an Ati card is being used and AA works flawlessly....and the ATi cards end up faster than current nvidia cards....the game is exposed for what it is. Purposely crippling a game to favor one brand of video card over another.

But the nvidiots seem to not mind this at all. Yet, this is akin to Intel writing their compiler to make AMD cpus run slower or worse on programs compiled with the Intel compiler.

Read about that debacle Intel's now suffering from and that the outrage is fairly universal. Now, you'd think nvidia would suffer the same nearly universal outrage for intentionally crippling a game's function to favor one brand of card over another, yet nvidiots make apologies and say "Ati cards weren't tested." I'd like to see that as a fact instead of conjecture.

So, one company cripples the function of another company's product and the world's up in arms, screaming "Monopolistic tactics!!!" and "Fine them to hell and back!"; another company does essentially the same thing and it gets a pass.

Talk about bias.

Stas - Tuesday, January 19, 2010 - link

If nV continues like this, it will turn around on them. It took MANY years for the market guards to finally say, "Intel, quit your sh*t!" and actually do something about it. Don't expect immediate retaliation in a multibillion dollar world-wide industry.

san1s - Monday, January 18, 2010 - link

"yet nvidiots make apologies and say "Ati cards weren't tested." I'd like to see that as a fact instead of conjecture. "

here you go

http://www.legitreviews.com/news/6570/

"On the other hand, you can be quite certain that the devs. did run the game on Ati hardware but only lock out the "preferred" AA design because of nvidia's money nvidia invested in the game. "

proof? that looks like conjecture to me. Nvidia says otherwise.

Amd doesn't deny it either.

http://www.bit-tech.net/bits/interviews/2010/01/06...

they just don't like it

And please refrain from calling people names such as "nvidiot," it doesn't help portray your image as unbiased.

MadMan007 - Monday, January 18, 2010 - link

Oh for gosh sakes, this is the 'launch' and we can't even have a paper launch where at least reviewers get hardware? This is just more details for the same crap that was 'announced' when the 5800s came out. Poor show NV, poor show.

bigboxes - Monday, January 18, 2010 - link

This is as close to a paper launch as I've seen in a while, except that there is not even an unattainable card. Gawd, they are gonna drag this out a lonnnnngg time. Better start saving up for that 1500W psu!

Adul - Monday, January 18, 2010 - link

I suppose this is a vaporlaunch then.