Better Image Quality: Jittered Sampling & Faster Anti-Aliasing

As we’ve stated before, the DX11 specification generally ties NVIDIA’s hands. Without caps bits they can’t easily expose hardware features beyond what DX11 calls for, and even if they could, there’s always the risk of building hardware that almost never gets used, such as the tessellator on AMD’s HD 2000-4000 series.

So the bulk of NVIDIA’s innovation has to come from somewhere other than offering non-DX11 functionality to developers, and that starts with image quality.

We bring up DX11 here because while it strictly defines which features must be offered, it says very little about how they are implemented on the backend. The Polymorph Engine is of course one example of this, but there is another case where NVIDIA has done something interesting on the backend: jittered sampling.

Jittered sampling is a long-standing technique used in shadow mapping and various post-processing effects. Here it is typically used to create soft shadows from a shadow map: take randomly offset samples of neighboring texels, and from those you can compute a softer shadow edge. The biggest problem with jittered sampling is that it’s computationally expensive, so its use is limited to cases where there is enough performance to pay for it.
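To make the idea concrete, here is a minimal Python sketch of jittered soft-shadow sampling, assuming a shadow map stored as a 2D list of occluder depths and a plain depth comparison per tap. The function name `jittered_shadow` and its parameters (`radius`, `grid`, `seed`) are hypothetical. It uses stratified jitter: the sampling square is split into a `grid` x `grid` set of cells and one randomly placed tap is taken in each, which is the classic meaning of jittered sampling. Real shaders differ in filter shape, tap count, and how the offsets are generated.

```python
import random


def jittered_shadow(shadow_map, x, y, receiver_depth, radius=1.5, grid=4, seed=0):
    """Estimate how lit a point is using stratified (jittered) shadow-map taps.

    shadow_map: row-major 2D list ([y][x]) of occluder depths seen from the light.
    (x, y): texel-space position of the receiver in the shadow map.
    Returns the lit fraction: 0.0 = fully shadowed, 1.0 = fully lit.
    """
    rng = random.Random(seed)              # fixed seed -> repeatable jitter pattern
    h, w = len(shadow_map), len(shadow_map[0])
    cell = 2.0 * radius / grid             # side length of one stratum
    lit = 0
    for j in range(grid):
        for i in range(grid):
            # One random tap inside stratum (i, j) of the square around (x, y).
            sx = x - radius + (i + rng.random()) * cell
            sy = y - radius + (j + rng.random()) * cell
            # Nearest-texel lookup, clamped to the map edges.
            tx = min(max(round(sx), 0), w - 1)
            ty = min(max(round(sy), 0), h - 1)
            # The tap is lit if the receiver is no farther from the light
            # than the occluder stored in the shadow map.
            if receiver_depth <= shadow_map[ty][tx]:
                lit += 1
    return lit / (grid * grid)


# A shadow edge: columns 0-3 hold a near occluder (0.2), columns 4-7 are open (1.0).
edge = [[0.2] * 4 + [1.0] * 4 for _ in range(6)]
print(jittered_shadow(edge, 3.5, 2, 0.5))  # half the taps land on each side
```

Every tap is a separate texture lookup, so the cost grows directly with the tap count; that is the expense the paragraph above describes.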

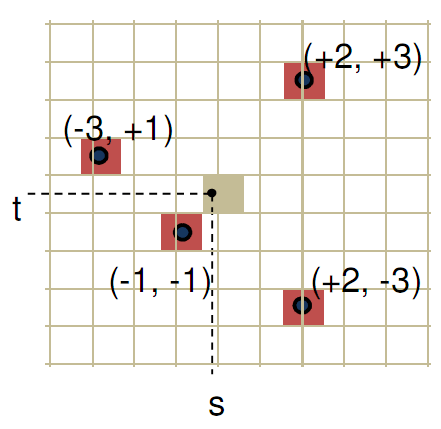

In DX10.1 and later, jittered sampling can be accelerated with the Gather4 instruction, which, as the name implies, gathers four neighboring texels (a 2x2 footprint from a single channel) in one operation. Since DirectX does not specify how this is implemented, NVIDIA implemented it in hardware as a single vector instruction. The alternative is to fetch each texel separately, which is how this would be implemented manually under DX10 and DX9.
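The difference between the two paths can be sketched in Python. The class and function names below are hypothetical, texel coordinates are integers rather than the normalized UVs a real shader passes, and the order of the four returned values is this sketch's own convention, not necessarily the hardware's. A 2x2 percentage-closer filter needs four depth values: the DX9/DX10-style path issues four single-texel fetches, while a Gather4-style path gets the same four values from one instruction. Counting instructions is only a proxy; it says nothing about the real throughput of NVIDIA's hardware.

```python
class CountingTexture:
    """Single-channel texture that counts the texture instructions issued."""

    def __init__(self, texels):
        self.texels = texels        # row-major [y][x] depth values
        self.instructions = 0

    def _clamped(self, x, y):
        h, w = len(self.texels), len(self.texels[0])
        return self.texels[min(max(y, 0), h - 1)][min(max(x, 0), w - 1)]

    def fetch(self, x, y):
        """One instruction returns one texel (manual DX9/DX10-style path)."""
        self.instructions += 1
        return self._clamped(x, y)

    def gather4(self, x, y):
        """One instruction returns the 2x2 footprint whose top-left texel is (x, y).

        Order (this sketch's convention): (x, y), (x+1, y), (x, y+1), (x+1, y+1).
        """
        self.instructions += 1
        return (self._clamped(x, y), self._clamped(x + 1, y),
                self._clamped(x, y + 1), self._clamped(x + 1, y + 1))


def pcf_2x2_fetch(tex, x, y, receiver_depth):
    """2x2 shadow filter built from four separate fetches."""
    depths = [tex.fetch(x + dx, y + dy) for dy in (0, 1) for dx in (0, 1)]
    return sum(receiver_depth <= d for d in depths) / 4


def pcf_2x2_gather(tex, x, y, receiver_depth):
    """The same 2x2 shadow filter built from a single gather."""
    depths = tex.gather4(x, y)
    return sum(receiver_depth <= d for d in depths) / 4


depth_map = [[0.2, 1.0], [1.0, 1.0]]
a, b = CountingTexture(depth_map), CountingTexture(depth_map)
print(pcf_2x2_fetch(a, 0, 0, 0.5), a.instructions)   # same result, 4 instructions
print(pcf_2x2_gather(b, 0, 0, 0.5), b.instructions)  # same result, 1 instruction
```

Both paths compute an identical filtered value; only the number of texture instructions differs, which is the kind of saving a hardware Gather4 is meant to deliver.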

NVIDIA’s own benchmarks put the advantage of this at roughly 2x over the non-vectorized implementation on the same hardware. Developers who implement jittered sampling (or any other technique that can use Gather4) will find it much less expensive on GF100 than it was on NVIDIA’s previous-generation hardware. For gamers, this will mean better image quality through wider use of jittered sampling.

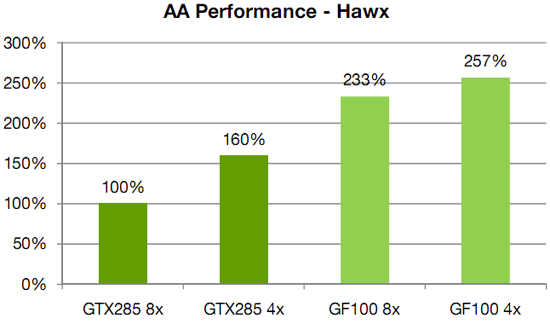

Meanwhile, anti-aliasing performance overall has received a significant speed boost. As AMD did, NVIDIA has tweaked its ROPs to reduce the performance hit of 8x MSAA, which on previous-generation GPUs could be massive. In this case NVIDIA has improved the compression efficiency of the ROPs, and it also cites the additional ROPs as improving performance by letting the hardware better digest small primitives that don’t compress well.
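A back-of-the-envelope Python sketch shows why 8x MSAA is so demanding and why compression matters. It assumes 8 bytes per sample (4 bytes of RGBA8 color plus 4 bytes of 24-bit depth/8-bit stencil) and uses a deliberately simplified compression model: a pixel whose samples all hold the same value, as happens when a single primitive fully covers it, is stored as one value, while any pixel with differing samples is stored in full. This is a toy model for illustration, not NVIDIA's actual ROP compression scheme, and it ignores per-pixel flag overhead. The function names are hypothetical.

```python
def msaa_bytes(width, height, samples, bytes_per_sample=8):
    """Uncompressed MSAA buffer size.

    Assumes 4 bytes of RGBA8 color + 4 bytes of D24S8 depth/stencil per sample.
    """
    return width * height * samples * bytes_per_sample


def stored_samples(pixels):
    """Toy compression model: count the sample values that must be stored.

    pixels: list of per-pixel sample lists.
    A pixel whose samples are all equal (fully covered by one primitive)
    compresses to a single value; an edge pixel must keep every sample.
    """
    total = 0
    for samples in pixels:
        if all(v == samples[0] for v in samples):
            total += 1              # compressed: one value for the whole pixel
        else:
            total += len(samples)   # uncompressible: store all samples
    return total


print(msaa_bytes(1920, 1200, 8))  # 147,456,000 bytes before any compression

interior = [[7] * 8 for _ in range(100)]      # pixels inside a large triangle
edges = [list(range(8)) for _ in range(100)]  # pixels split by tiny triangles
print(stored_samples(interior), stored_samples(edges))  # 100 vs. 800
```

Under this model, scenes made of many small primitives leave most pixels with mixed samples, so they gain little from compression and lean on raw ROP throughput instead.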

NVIDIA's HAWX data - not independently verified

This is something we’re certainly going to test once we have the hardware, although we’re still not sold on the idea that the quality improvement from 8x MSAA is worth the performance hit in most situations. There is one situation, however, where additional MSAA samples do make a stark difference, which we’ll get to next.

115 Comments


SothemX - Tuesday, March 9, 2010 - link

WELL, let's just make it simple. I am an avid gamer...I WANT and NEED power and performance. I care only about how well my games play, how good they look, and the impression they leave with me when I am done. I own a PS3 and am thrilled they went with Nvidia (smart move).

I own a PC that uses the 9800GT OC card... getting ready to upgrade to the new GF100 when it releases. The last thing on my mind is market share, and cost is not an issue.

Hard-Core gaming requires Nvidia. Entry-level baby boomers use ATI.

Nvidia is just playing with their food... it's a vulgar display of power: better architecture, better programming, better gaming.

StevoLincolnite - Monday, January 18, 2010 - link

[quote]So why does NVIDIA want so much geometry performance? Because with tessellation, it allows them to take the same assets from the same games as AMD and generate something that will look better. With more geometry power, NVIDIA can use tessellation and displacement mapping to generate more complex characters, objects, and scenery than AMD can at the same level of performance.[/quote]

Might I add to that: nVidia's design is essentially "modular". They can increase and decrease their geometry performance essentially by taking units out. This, however, will force programmers to program for the lowest common denominator, whilst AMD's implementation of the technology is the same across the board, so you get identical geometry performance regardless of the chip.

Yojimbo - Monday, January 18, 2010 - link

Just say the minimum, not the lowest common denominator. It may look fancy, but it doesn't seem to fit.

chizow - Monday, January 18, 2010 - link

The real distinction here is that Nvidia's revamp of fixed-function geometry units into a programmable, scalable, and parallel Polymorph engine means their implementation won't be limited to accelerating tessellation in games. Their improvements will benefit every game ever made that benefits from increased geometry performance. I know people around here hate to name "winners" and "losers" when AMD isn't winning, but I think it's pretty obvious Nvidia's design and implementation is the better one. Fully programmable vs. fixed-function: as long as the fully programmable option is at least as fast, it is always going to be the better solution. Just look at the evolution of the GPU from mostly fixed-function hardware to what it is today with GF100...a fully programmable, highly parallel compute powerhouse.

mcnabney - Monday, January 18, 2010 - link

If Fermi was a winner, Nvidia would have had samples out to be benchmarked by Anand and others a long time ago. Fermi is designed for GPGPU with gaming secondary. Goody for them. They can probably do a lot of great things and make good money in that sector. But I don't know about gaming. Based upon the info that has gotten out, and the fact that reality hasn't appeared yet, I am guessing that Fermi will only be slightly faster than the 5870 and Nvidia doesn't want to show their hand and let AMD respond. Remember, AMD is finishing up the next generation right now, so Fermi will likely compete against Northern Islands on AMD's 32nm process in the fall.

dragonsqrrl - Monday, February 15, 2010 - link

Firstly, did you not read this article? The GF100 delay was due in large part to the new architecture they developed, an architectural shift ATI will eventually have to make if they wish to remain competitive. In other words, just as G80 brought GPU computing features and unified shaders to the PC for the first time, Nvidia invested huge resources in R&D and as a result had a next-generation, revolutionary GPU before ATI.

Secondly, Nvidia never meant to place gaming second to GPU computing, as much as you ATI fanboys would like to troll about this subject. What they're trying to do is bring GPU computing up to the level GPU gaming is already at (in terms of accessibility, reliability, and performance). The research they're doing in this field could revolutionize research in many fields outside of gaming, including medicine, astronomy, and 'yes' film production (something I happen to deal with a LOT), while revolutionizing gaming performance and feature sets as well.

Thirdly, I would be AMAZED if AMD can come out with their new architecture (their first since the hd2900) by the 3rd quarter of this year, and on the 32nm process. I just can't see them pushing GPU technology forward in the same way Nvidia has given their new business model (smaller GPUs, less focus on GPU computing), while meeting that tight deadline.

chewietobbacca - Monday, January 18, 2010 - link

"Winning" the generation? What really matters? The bottom line, that's what. I'm sure Nvidia liked winning the generation; I'm sure they would have loved it even more if they didn't lose market share and potential profits from the fight...

realneil - Monday, January 25, 2010 - link

Winning the generation is a non-prize if the mainstream buyer can only wish they had one. Make this kind of performance affordable and then you'll impress me.

chizow - Monday, January 18, 2010 - link

Yes, and the bottom line showed Nvidia turning a profit despite not having the fastest part on the market. Again, my point about G80'ing the market was more a reference to them revolutionizing GPU design again rather than simply doubling transistors and functional units or increasing clockspeeds based on past designs.

The other poster brought up performance at any given point in time; I was simply pointing out that being first or second to market doesn't really matter as long as you win the generation, which Nvidia has done for the last few generations since G80 and will do again once GF100 launches.

sc3252 - Monday, January 18, 2010 - link

Yikes, if it draws more power than the original GTX 280, I would expect some loud cards. When I saw those Far Cry 2 benchmarks I was disappointed that I didn't wait, but now that it is using more than a GTX 280 I think I may have made the right choice. While right now I want as much performance as possible, eventually my 5850 will go into a secondary PC (why I picked the 5850) with a lesser power supply. I don't want to have to buy a bigger power supply just because a friend might come over and play once a week.