AMD Athlon X2 7850 vs. Intel Pentium E5300: Choosing the Best $70 CPU

by Anand Lal Shimpi on April 28, 2009 11:00 AM EST, posted in CPUs

$74 Gets You Faster than any Pentium 4 Ever Made

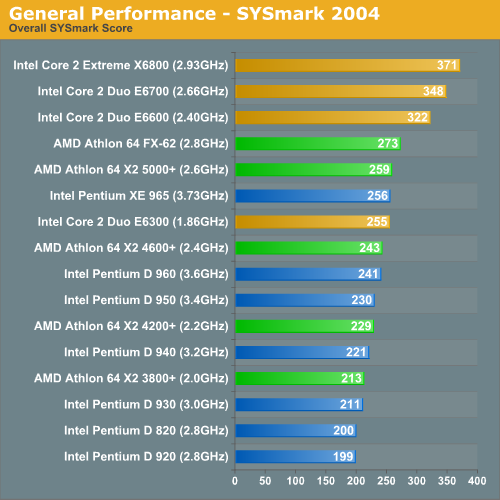

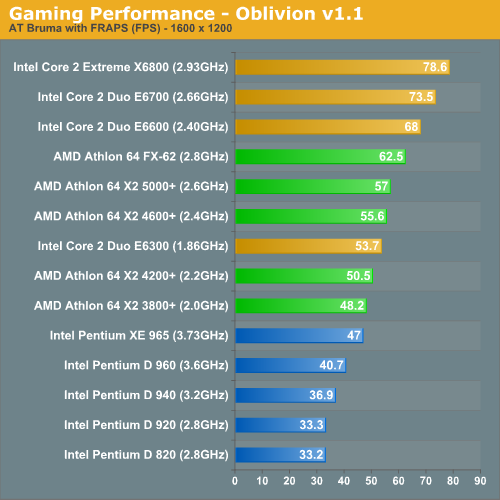

The Pentium E5300 is very similar in clock speed and cache size to some of the original Core 2 Duos that launched in the summer of 2006. You may recall that Intel offered both 2MB and 4MB L2 variants of the Core 2 at launch. The E6300 and E6400 both had a 2MB L2, while the E6600, E6700 and X6800 all had a 4MB L2.

The Pentium E5300 is based on the Wolfdale core, which is faster clock-for-clock than the original Conroe-based Core 2s, but it has only a 2MB L2, like the old E6400. The E6400, however, ran at 2.13GHz, while the E5300 runs at 2.60GHz. In other words, today’s $74 Pentium E5300 is faster than the original Core 2 Duo E6400.

But the comparison gets even more interesting. Remember that the E6400, at launch, was faster than even the fastest Pentium 4: the dual-core, four-thread Pentium Extreme Edition 965 running at 3.73GHz. The charts below from my original Core 2 Duo review show just that:

Do you see where I’m going with this? While the data above is old, it shows that the E6400 was faster than the fastest Pentium 4 ever released. The $74 E5300 is faster than the E6400, so the Pentium E5300 is faster than any Pentium 4 ever released.

Most people didn’t have 3.73GHz Pentium Extreme Editions in their systems; they had lower-clocked versions, in which case the E5300’s advantage should be even larger. If you had a 2.8GHz Pentium D, I’d expect the Pentium E5300 to be anywhere from 20% to 40% faster regardless of application. Mmm, Moore’s Law.

The Test

| Component | Configuration |
|---|---|
| Motherboard | Intel DX48BT2 (Intel X48); MSI DKA790GX Platinum (AMD 790GX) |
| Chipset | Intel X48; AMD 790GX |
| Chipset Drivers | Intel 9.1.1.1010 (Intel); AMD Catalyst 8.12 |
| Hard Disk | Intel X25-M SSD (80GB) |
| Memory | G.Skill DDR2-800 2 x 2GB (4-4-4-12); G.Skill DDR2-1066 2 x 2GB (5-5-5-15); Qimonda DDR3-1066 4 x 1GB (7-7-7-20) |
| Video Card | eVGA GeForce GTX 280 |
| Video Drivers | NVIDIA ForceWare 180.43 (Vista64); NVIDIA ForceWare 178.24 (Vista32) |
| Desktop Resolution | 1920 x 1200 |
| OS | Windows Vista Ultimate 32-bit (for SYSmark); Windows Vista Ultimate 64-bit |

55 Comments


v12v12 - Tuesday, April 28, 2009

What does personal mudslinging have to do with my points? Please debate them, if you can.

just4U - Tuesday, April 28, 2009

I think overclocks are a non-issue with the 5300. Why would anyone buy it over a 5200, which is already a proven performer? Not like the 5300 could actually outdo it... Anyway, I was faced with a decision on a couple of cheap builds (no overclocking): the 5200 or the 7750. In the end I opted for the 7750 for a few reasons.

I kinda felt that the chips were comparable overall, but a key deciding factor was the motherboards used. The 780G chipset is just so tempting at such a low, low price for a budget build that it sort of trumps Intel's CPU/motherboard considerations. At least in my opinion... am I wrong?

TA152H - Tuesday, April 28, 2009

Anand, you mention the Pentium has an L1 cache that is one cycle faster, but my understanding is they are both three cycles. I know the K7 and K8 were three cycles. Did AMD slow down the L1 cache on the Phenoms?

A few other things to consider. The L2 cache on the AMD is exclusive; Intel's is inclusive. Also, the L1 cache of AMD processors is not really 128K, since they pad instructions for easier decoding, but I guess that's just nitpicking.

I'm really curious about the L1 cache latency though. Can you let us know if the Phenom is now 4 clock cycles? It's an ugly trend we're seeing, with the L1 cache latency still going up, except for the Itanium. Which, as we all know, will replace x86. Hmmmm, I guess Intel missed on that prediction :-P .

Anand Lal Shimpi - Tuesday, April 28, 2009

You know, I didn't even catch that until now. I ran a quick latency test that reported 4 cycles, but the original Phenom (and Phenom II) both have a 3-cycle L1. I've got a message in to AMD to see if the benchmark reported incorrectly or if something has changed. I'm guessing it's just a benchmark error, but I want to confirm; I've seen stranger things happen :)

Intel found that the 4-cycle L1 in Nehalem cost them ~2% in performance, but it was necessary to keep increasing clock speeds. I'd pay ~2% :)

Take care,

Anand

TA152H - Tuesday, April 28, 2009

I was under the impression the reason Intel increased L1 cache latency was so they could use a lower-power technology and save some power.

I heard the number was around a 3 to 4 percent loss in performance, but I guess it always depends on workload and who in Intel is saying it.

But, the whole setup seems strange to me now. Typically, when you go to the 3-level cache hierarchy, you see a smaller, faster L1 cache, not a very slow one like the Nehalem has. Especially with such a small, fast L2 cache, and the fact the L1 is inclusive in it, I'm not clear why they didn't cut the L1 cache in half, and lower the latency. You'd cut costs, you'd cut power, and I'm not sure you'd lose any performance with a 32K L1 cache with three cycles, instead of a 64K with four, when you have a 10 cycle L2 cache behind it. A non-exclusive L2 cache that is only four times the size of the L1 cache seems like an aberration to me. I wouldn't be surprised if this changed in some way for the next release, but I have no information on it at all.

But, mathematically, if you could cut the L1 cache to three cycles by going to 32K, that would mean you'd get better performance for reads up to the 32K mark by one cycle, and worse by six cycles for anything between 32K and 64K. Typically, you'd expect this to favor the smaller cache, since the likelihood of an access falling outside the 32K but within the 64K is probably less than 1/6 the chance of it falling inside the 32K. Really, we should be halving those sizes, since the L1 is split between instructions and data, but I think the argument still holds. On top of this, you'd always have lower power, you'd always have a smaller die, and you'd generate less heat. And you wouldn't have that crazy four-to-one L2 to L1 ratio.
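The one-sixth rule of thumb above can be written out as a tiny cost model. This is a minimal sketch under the comment's own simplifications, not Intel's analysis: `h` (fraction of accesses that fit within 32KB) and `p` (fraction that fall between 32KB and 64KB) are hypothetical inputs, the 10-cycle L2 is treated as the full load-to-use latency, and out-of-order latency hiding is ignored. The function names are illustrative only.

```python
# Hypothetical model of the 32KB/3-cycle vs. 64KB/4-cycle L1 trade-off.
#   h = fraction of accesses that fit within the first 32KB (hit in either L1)
#   p = fraction that fall between 32KB and 64KB (hit only in the 64KB L1)
# Accesses beyond 64KB miss in both designs and cost the same, so they cancel out.

SMALL_L1 = 3  # cycles, hypothetical 32KB L1
LARGE_L1 = 4  # cycles, 64KB L1 as in Nehalem
L2 = 10       # cycles, L2 latency as given in the comment


def small_l1_cycles(h: float, p: float) -> float:
    """Cycles spent on the h + p accesses with the 32KB, 3-cycle L1."""
    # The p accesses miss the small L1 and pay the full L2 latency.
    return h * SMALL_L1 + p * L2


def large_l1_cycles(h: float, p: float) -> float:
    """Cycles spent on the same accesses with the 64KB, 4-cycle L1."""
    return (h + p) * LARGE_L1


def break_even_p(h: float) -> float:
    """Value of p at which both designs cost the same.

    Solving h*SMALL_L1 + p*L2 == (h + p)*LARGE_L1 for p gives
    p = h * (LARGE_L1 - SMALL_L1) / (L2 - LARGE_L1), which is h/6 here.
    """
    return h * (LARGE_L1 - SMALL_L1) / (L2 - LARGE_L1)


if __name__ == "__main__":
    h, p = 0.90, 0.05  # hypothetical access mix
    print(small_l1_cycles(h, p), large_l1_cycles(h, p), break_even_p(h))
```

With that hypothetical mix, the 32KB design spends about 3.2 cycles against about 3.8 for the 64KB design, and the break-even point is p = 0.15 (h/6), so the smaller cache wins whenever p stays below one sixth of h.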

But, the Nehalem has great performance, so obviously Intel knew what they were doing. Maybe they were able to hide the latency well beyond simple mathematics like I used above, or maybe cutting it to three cycles would have been difficult (very hard to imagine since it's working on the Penryn with 64K, and the clock speeds aren't so different). I wish I knew :-P .

Thanks for your response.

Anand Lal Shimpi - Tuesday, April 28, 2009

This is what I wrote in my original Nehalem Architecture piece: "The L1 cache is the same size as what we have in Penryn, but it’s actually slower (4 cycles vs. 3 cycles). Intel slowed down the L1 cache as it was gating clock speed, especially as the chip grew in size and complexity. Intel estimated a 2 - 3% performance hit due to the higher latency L1 cache in Nehalem."
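The trade-off in that quote can be framed as simple arithmetic. As a rough sketch, treating Intel's 2-3% estimate as a per-clock (IPC) loss and assuming performance scales as IPC x clock speed (a simplification that ignores memory-bound workloads), the slower L1 pays for itself once clock speed rises by 1/(1 - loss) - 1. The function name `break_even_clock_gain` is hypothetical.

```python
def break_even_clock_gain(ipc_loss: float) -> float:
    """Fractional clock-speed increase needed to offset a fractional IPC loss.

    Assumes performance = IPC x clock frequency, which ignores memory-bound
    workloads whose speed does not scale with core clock.
    """
    if not 0.0 <= ipc_loss < 1.0:
        raise ValueError("ipc_loss must be in [0, 1)")
    # (1 - loss) * (1 + gain) = 1  ->  gain = 1 / (1 - loss) - 1
    return 1.0 / (1.0 - ipc_loss) - 1.0


if __name__ == "__main__":
    for loss in (0.02, 0.03):  # the 2-3% range from the quote
        print(f"{loss:.0%} IPC loss needs {break_even_clock_gain(loss):.1%} more clock")
```

Under these assumptions, a 2% loss is recovered by roughly a 2.0% clock increase and a 3% loss by roughly 3.1%, so any clock headroom beyond that is a net gain.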

I believe the source on that was Ronak Singhal, the chief architect behind Nehalem.

I suspect the decision to stick with a 64KB L1 (I + D) instead of shrinking it has to do with basic statistics. There's no way the L1 is going to catch all of anything, but the whole idea behind the cache hierarchy is to catch a high enough percentage of data/instructions to limit the number of trips to lower levels of memory.
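The "catch a high enough percentage" idea is usually expressed as average memory access time (AMAT). The sketch below folds a multi-level hierarchy into one number; it is an illustration, not a model of any specific chip. The 4- and 10-cycle figures echo this thread, while the L3 latency, miss rates and memory latency are assumptions, and each latency is treated as the time added at that level. The function name `amat` is illustrative.

```python
from typing import Sequence, Tuple


def amat(levels: Sequence[Tuple[float, float]], memory_latency: float) -> float:
    """Average memory access time in cycles for a multi-level cache hierarchy.

    levels holds (hit_latency, local_miss_rate) pairs ordered from L1 outward.
    A local miss rate is the fraction of accesses reaching that level that miss
    there and continue to the next level (or to memory after the last cache).
    """
    cost = float(memory_latency)
    # Fold inward from memory: each level costs its own latency plus the
    # miss-rate-weighted cost of everything behind it.
    for latency, miss_rate in reversed(levels):
        cost = latency + miss_rate * cost
    return cost


if __name__ == "__main__":
    # Hypothetical hierarchy: (latency, local miss rate) for L1, L2, L3.
    hierarchy = [(4, 0.10), (10, 0.40), (40, 0.30)]
    print(amat(hierarchy, memory_latency=200))
    # Same hierarchy, but the L1 catches twice as much (5% miss rate).
    print(amat([(4, 0.05)] + hierarchy[1:], memory_latency=200))
```

With these made-up numbers the average access costs about 9 cycles, and halving the L1 miss rate drops it to about 6.5, which shows why a bigger, slightly slower L1 can still come out ahead if it keeps enough traffic away from the lower levels.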

It's not impossible to build a 64KB 3-cycle L1, but if Ronak is correct, even a smaller L1 would still have had to be a 4-cycle cache, because the L1 was gating clock speed.

I think Intel found the right L1 size for its chips and the right L2 size. The L3 is up in the air at this point. Ronak said he wanted a larger cache, but definitely no less than 2MB per core (8MB for a quad-core).

Take care,

Anand

f4phantom2500 - Tuesday, April 28, 2009

Seriously, most people who come to AnandTech and look at these things are at least aware of overclocking, if not overclockers themselves. Whenever I build desktop rigs for myself, I always go for the cheap "low end" chips and overclock them. The E5300 is an excellent example. Honestly, I'd expect it to completely decimate the 7850 in all ways if both were overclocked, but overclocking results should definitely have been in this review, as there are two kinds of people who buy these types of cheap CPUs:

1. People who do basic stuff and don't really care anyway
2. People who overclock

And people who overclock would most likely make up the majority of the readers of a comparison between the two chips, because if you didn't care about performance that much, there aren't many reasons why you'd bother reading this review.

sprockkets - Tuesday, April 28, 2009

For me, it comes down to whether I want a Zotac mini-ITX board based on the 8200 or 9300 chip. While the AMD one is cheaper, they neglected to put HDMI and S/PDIF ports on it, so I have to go for the Intel board.

Besides, Intel's has a small heatsink/fan combo. I assume the AMD one is bigger, but of course, it mounts much more easily and securely than Intel's setup.

leexgx - Sunday, May 3, 2009

The Intel one makes so little heat that it doesn't need a big heatsink. I'm going to have to start using Intel CPUs for my basic systems soon, since the place where I get my CPUs from only stocks 2.7GHz AMD X2 CPUs or the heat monster 7750.