The Dark Knight: Intel's Core i7

by Anand Lal Shimpi & Gary Key on November 3, 2008 12:00 AM EST, posted in CPUs

Nehalem's Weakness: Cache

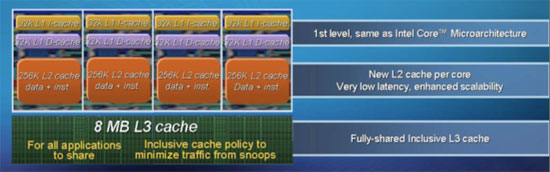

Intel opted for a very Opteron-like cache hierarchy with Nehalem: each core gets a small L2 cache, and all of the cores sit behind one large, shared L3 cache. This sort of setup benefits well-threaded applications with large codebases, for example the type of things you'd encounter in a database server. The problem is that the CPU launching today, the Core i7, is designed to be used in a desktop.

Let's look at a quick comparison between Nehalem and Penryn's cache setups:

| | Intel Nehalem | Intel Penryn |
|---|---|---|
| L1 Size / L1 Latency | 64KB / 4 cycles | 64KB / 3 cycles |
| L2 Size / L2 Latency | 256KB / 11 cycles | 6MB* / 15 cycles |
| L3 Size / L3 Latency | 8MB / 39 cycles | N/A |
| Main Memory Latency (DDR3-1600 CAS7) | 107 cycles (33.4 ns) | 160 cycles (50.3 ns) |

*Note: 6MB per 2 cores

Nehalem's L2 cache does get a bit faster, but the speed doesn't make up for the lack of size. I suspect that Intel will address the L2 size issue with the 32nm shrink, but until then most applications will have to deal with a significantly smaller L2 cache per core. The performance impact is mitigated by two things: 1) the fast L3 cache, and 2) the very fast on-die memory controller. Fortunately for Nehalem, most applications can't fit entirely within cache, so even the large 6MB and 12MB L2 caches of its predecessors can't contain everything. That gives Nehalem's L3 cache and memory controller a chance to level the playing field.
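To get a rough feel for how the smaller L2 trades off against the fast L3 and memory controller, the latencies from the table above can be plugged into a simple average-access-latency estimate. The sketch below is only illustrative: the function name `average_access_cycles` is made up for this example, the hit rates are hypothetical placeholders rather than measurements from this review, and the model assumes each listed latency is the total cost of a load served from that level.

```python
# Latencies in CPU cycles, copied from the comparison table above.
# Ordered from L1 outward; the last entry is main memory.
NEHALEM_LATENCIES = [4, 11, 39, 107]   # L1, L2, L3, memory
PENRYN_LATENCIES = [3, 15, 160]        # L1, L2, memory (no L3)


def average_access_cycles(latencies, hit_rates):
    """Estimate the mean load latency in cycles.

    latencies: cost of a load served from each level, last entry = memory.
    hit_rates: for each cache level, the fraction of accesses that reach
               that level and hit there (one fewer entry than latencies).
    """
    if len(latencies) != len(hit_rates) + 1:
        raise ValueError("need one hit rate per cache level")
    reach = 1.0   # fraction of all accesses that get this far down
    total = 0.0
    for latency, hit in zip(latencies, hit_rates):
        total += reach * hit * latency
        reach *= 1.0 - hit
    # Whatever misses every cache level is served from main memory.
    total += reach * latencies[-1]
    return total


# HYPOTHETICAL hit rates, chosen only to exercise the function.
nehalem = average_access_cycles(NEHALEM_LATENCIES, [0.95, 0.60, 0.80])
penryn = average_access_cycles(PENRYN_LATENCIES, [0.95, 0.90])
print(f"Nehalem: {nehalem:.2f} cycles, Penryn: {penryn:.2f} cycles")
```

Real hit rates depend heavily on the workload, so the printed numbers say nothing about any particular application. The point is only that lowering the large-L2 hit rate in the Penryn case, or raising the L3 hit rate in the Nehalem case, shifts the balance between the two designs.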

The end result, as you'll soon see, is that in some cases Nehalem's architecture manages to take two steps forward and two steps back, resulting in zero net improvement over Penryn. The perfect example is 3D gaming, as you can see below:

| | Intel Nehalem (3.2GHz) | Intel Penryn (3.2GHz) |
|---|---|---|
| Age of Conan | 123 fps | 107.9 fps |
| Race Driver GRID | 102.9 fps | 103 fps |
| Crysis | 40.5 fps | 41.7 fps |
| Far Cry 2 | 115.1 fps | 102.6 fps |
| Fallout 3 | 83.2 fps | 77.2 fps |

Age of Conan and Fallout 3 show significant improvements in performance when not GPU bound, while Crysis and Race Driver GRID see absolutely no benefit from Nehalem. It's almost Prescott-like in that Intel put a lot of architectural innovation into a design that can, at times, offer no performance improvement over its predecessor. Where Nehalem differs from Prescott is that it can also offer tremendous performance increases, and it sits on the very opposite end of the power efficiency spectrum, but we'll get to that in a moment.
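For readers who prefer relative numbers, the frame rates in the gaming table above can be turned into percentage differences. This is a minimal sketch that reuses the table's figures as-is; the helper name `percent_change` is made up for the example.

```python
# Frame rates (fps) from the gaming table above: (Nehalem, Penryn), both at 3.2GHz.
RESULTS = {
    "Age of Conan": (123.0, 107.9),
    "Race Driver GRID": (102.9, 103.0),
    "Crysis": (40.5, 41.7),
    "Far Cry 2": (115.1, 102.6),
    "Fallout 3": (83.2, 77.2),
}


def percent_change(new, old):
    """Relative change of `new` versus `old`, in percent."""
    return (new - old) / old * 100.0


for game, (nehalem_fps, penryn_fps) in RESULTS.items():
    delta = percent_change(nehalem_fps, penryn_fps)
    print(f"{game:<18} {delta:+6.1f}%")
```

A positive value means Nehalem is faster in that title, a negative value means Penryn is.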

73 Comments


Kaleid - Monday, November 3, 2008 - link

http://www.guru3d.com/news/intel-core-i7-multigpu-...

bill3 - Monday, November 3, 2008 - link

Umm, seems the guru3d gains are probably explained by them using a dual core Core 2 Duo versus quad core i7... Quad cores run multi-GPU quite a bit better, I believe.

tynopik - Monday, November 3, 2008 - link

what about those multi-threading tests you used to run with 20 tabs open in firefox while running av scan while compressing some files while converting something else while etc etc?

this might be more important for daily performance than the standard desktop benchmarks

D3SI - Monday, November 3, 2008 - link

So the low end i7s are OC'able?

what the hell is toms hardware talking about lol

conquerist - Monday, November 3, 2008 - link

Concerning x264, Nehalem-specific improvements are coming as soon as the developers are free from their NDA. See http://x264dev.multimedia.cx/?p=40.

Spectator - Monday, November 3, 2008 - link

can they do some CUDA optimizations? im guessing that video hardware has more processors than quad core intel :P

If all this i7 is new news and does stuff xx faster with 4 cores, how does 100+ core video hardware compare?

Yes im messing but giant Intel want $1k for best i7 cpu. when likes of nvid make bigger transistor count silicon using a lesser process and others manufacture rest of vid card for $400-500 ?

Where is the Value for money in that. Chukkle.

gramboh - Monday, November 3, 2008 - link

The x264 team has specifically said they will not be working on CUDA development as it is too time intensive to basically start over from scratch in a more complex development environment.

npp - Monday, November 3, 2008 - link

CUDA Optimizations? I bet you don't understand completely what you're talking about. You can't just optimize a piece of software for CUDA, you MUST write it from scratch for CUDA. That's the reason why you don't see too much software for nVidia GPUs, even though the CUDA concept was introduced at least two years ago. You have the BadaBOOM stuff, but it's far from mature, and the reason is that writing a sensible application for CUDA isn't exactly an easy task. Take your time to look at how it works and you'll understand why.

You can't compare the 100+ cores of your typical GPU with a quad core directly, they are fundamentally different in nature, with your GPU "cores" being rather limited in functionality. GPGPU is a nice hype, but you simply can't offload everything on a GPU.

As a side note, top-notch hardware always carries a price premium, and Intel has had this tradition with high-end CPUs for quite a while now. There are plenty of people who need absolutely the fastest hardware around and won't hesitate paying for it.

Spectator - Monday, November 3, 2008 - link

Some of us want more info.

A) How does the integrated Thermal sensor work with -50+c temps.

B) Can you Circumvent the 130W max load sensor

C) what are all those connection points on the top of the processor for?.

lol. Where do i put the 2B pencil to. to join that sht up so i dont have to worry about multiply settings or temp sensors or wattage sensors.

Hey dont shoot the messenger. but those top side chip contacts seem very curious and obviously must serve a purpose :P

Spectator - Monday, November 3, 2008 - link

Wait NO. i have thought about it..

The contacts on top side could be for programming the chips default settings.

You know it makes sense.

Perhaps its adjustable sram style, rather than burning connections.

yes some technical peeps can look at that. but still I want the fame for suggesting it first. lmao.

Have fun. but that does seem logical to build in some scope for alteration. a lot easier to manufacture 1 solid item then mod your stock to suit market when you feel its necessary.

Spectator.