Nehalem - Everything You Need to Know about Intel's New Architecture

by Anand Lal Shimpi on November 3, 2008 1:00 PM EST - Posted in CPUs

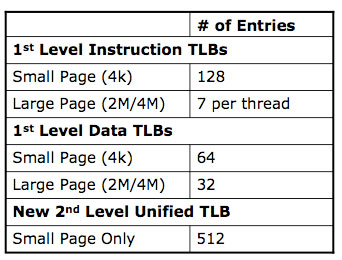

New TLBs, Faster Unaligned Cache Accesses

Historically, the applications that push a microprocessor’s limits on TLB size and performance are server applications, once again with things like databases. Nehalem not only increases the sizes of its TLBs but also adds a second-level unified TLB for both code and data.

Another potentially significant fix that I’ve talked about before is Nehalem’s faster unaligned cache accesses. The largest size of an SSE memory operation is 16 bytes (128 bits). For any of these load/store operations there are two versions: an op that is aligned on a 16-byte boundary, and an op that is unaligned.

Compilers will use the unaligned operation if they can’t guarantee that a memory access will fall on a 16-byte boundary. In all Core 2 processors, the unaligned op was significantly slower than the aligned op, even on aligned data.

The problem is that most compilers couldn’t guarantee that data would be aligned properly and would default to using the unaligned op, even though it would sometimes be used on aligned data.

With Nehalem, Intel has not only reduced the performance penalty of the unaligned op but also made it so that if you use the unaligned op on aligned data, there’s absolutely no performance degradation. Compilers can now always use the unaligned op without any fear of a reduction in performance.
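As a rough illustration of the two flavors of op, the C sketch below sums an array with the aligned SSE load intrinsic `_mm_load_ps` (MOVAPS) and with the unaligned one `_mm_loadu_ps` (MOVUPS). The helper names `is_aligned16`, `sum_aligned`, and `sum_unaligned` are made up for this example, and it assumes an x86 compiler that provides `<xmmintrin.h>`. It does not measure any penalty; it only shows the choice a compiler or developer has to make.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <xmmintrin.h>  /* SSE intrinsics: _mm_load_ps, _mm_loadu_ps */

/* Hypothetical helper: nonzero if p sits on a 16-byte boundary. */
static int is_aligned16(const void *p) {
    return ((uintptr_t)p & 15u) == 0;
}

/* Sum n floats (n must be a multiple of 4) with the ALIGNED load.
   The aligned op faults on a misaligned address, so check first. */
static float sum_aligned(const float *p, size_t n) {
    assert(is_aligned16(p));
    __m128 acc = _mm_setzero_ps();
    for (size_t i = 0; i < n; i += 4)
        acc = _mm_add_ps(acc, _mm_load_ps(p + i));
    float lanes[4];
    _mm_storeu_ps(lanes, acc);
    return lanes[0] + lanes[1] + lanes[2] + lanes[3];
}

/* Same sum with the UNALIGNED load: valid for any float address,
   which is why a compiler picks it when alignment is unknown. */
static float sum_unaligned(const float *p, size_t n) {
    __m128 acc = _mm_setzero_ps();
    for (size_t i = 0; i < n; i += 4)
        acc = _mm_add_ps(acc, _mm_loadu_ps(p + i));
    float lanes[4];
    _mm_storeu_ps(lanes, acc);
    return lanes[0] + lanes[1] + lanes[2] + lanes[3];
}
```

Going by the description above, `sum_unaligned` is the version that paid a penalty on Core 2 even when `p` happened to be aligned, and the one that should cost nothing extra on Nehalem in that case.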

To get around the unaligned data access penalty in previous Core 2 architectures, developers wrote additional code specifically targeted at this problem. Here’s one area where a re-optimization/re-compile would help since on Nehalem they can just go back to using the unaligned op.
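The article doesn't say what that extra code looked like. One common pattern, shown here purely as an assumption, is to "peel" a few scalar iterations until the pointer reaches a 16-byte boundary, run the main loop with the aligned op, and finish the leftovers one element at a time. The names `peel_count` and `sum_peeled` are hypothetical, and the sketch again assumes an x86 compiler with `<xmmintrin.h>`.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <xmmintrin.h>

/* How many leading floats to process one at a time so that
   p + result lands on a 16-byte boundary (capped at n). */
static size_t peel_count(const float *p, size_t n) {
    assert(((uintptr_t)p % sizeof(float)) == 0); /* float-aligned input */
    size_t misalign = (uintptr_t)p & 15u;        /* bytes past boundary */
    size_t k = misalign ? (16 - misalign) / sizeof(float) : 0;
    return k < n ? k : n;
}

/* Sum n floats: scalar head, aligned SSE body, scalar tail. */
static float sum_peeled(const float *p, size_t n) {
    size_t i = 0;
    size_t head = peel_count(p, n);
    float total = 0.0f;

    for (; i < head; i++)              /* scalar head up to the boundary */
        total += p[i];

    __m128 acc = _mm_setzero_ps();
    for (; i + 4 <= n; i += 4)         /* p + i is 16-byte aligned here */
        acc = _mm_add_ps(acc, _mm_load_ps(p + i));

    float lanes[4];
    _mm_storeu_ps(lanes, acc);
    total += lanes[0] + lanes[1] + lanes[2] + lanes[3];

    for (; i < n; i++)                 /* scalar tail */
        total += p[i];
    return total;
}
```

If the unaligned op really is free on aligned data and cheaper on unaligned data, the head and tail loops become unnecessary bookkeeping, which is the kind of code a Nehalem-targeted recompile could drop.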

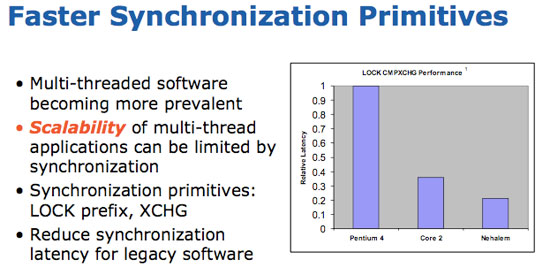

Thread synchronization performance is also improved on Nehalem, which leads us into the next point.

35 Comments


smilingcrow - Thursday, August 21, 2008 - link

You do realise that you aren’t meant to drink the snakeoil as it can rot your brain….

JarredWalton - Friday, August 22, 2008 - link

I don't think Nehalem will "fail" at running games... in fact I expect it to be faster than Penryn at equivalent clocks. I just don't expect it to be significantly faster. We'll know soon enough, though.

chizow - Friday, August 22, 2008 - link

I'd agree with the OP, maybe with a different choice of words. Also, Johan seems to disagree with you Jarred, citing the decreased L2 and slower L3 along with similar maximum clock speeds as to why Nehalem may perform worse than Penryn at the same clocks in games. We've already seen a very preliminary report substantiating this from Hexus: http://www.hexus.net/content/item.php?item=15015&a...

There are still a lot of questions about overclockability as well without a FSB. It appears Nehalem will run slightly hotter and draw a bit more power under load than Penryn with similar maximum clockspeeds when overclocked.

What's most disappointing is all of these new, hyped features seem to amount to squat with Nehalem. Where's the benefit of the IMC? Where's the benefit of the triple channel memory bus? Where's the benefit of HT? I know....I know...it's for the server market/HPC crowd, but no one finds it odd that something like an IMC is resulting in negligible gaming gains when it was such a boon for AMD's Athlon 64?

All in all, very disappointing for the Gamer/Enthusiast as we'll most likely have to wait for Westmere for a significant improvement over Penryn (or even Kentsfield) in games.

cornelius785 - Sunday, August 24, 2008 - link

From looking at that article, I have doubts that it could be worse than Penryn in games. I also doubt that the performance increase is going to be the same as it was for Core 2. People are expecting this and since they're figuring out that their unrealistic expectations can't be realized in Nehalem, they call Nehalem trash and say they'll go back to AMD, ignoring the fact that Intel is still king in gaming with EXISTING processors. It is also not like 50 fps at extremely high settings and resolution isn't playable.

JarredWalton - Friday, August 22, 2008 - link

Given that is early hardware, most likely not fully optimized, and performance is still substantially better than the QX6800 in several gaming tests (remember I said clock-for-clock I expected it to be faster, not necessarily faster than a 3.2GHz part when it's running at 2.93GHz).... Well, it's going to be close, but overall I think the final platform will be faster. Johan hasn't tested any games on the platform, so that's just speculation. Anyway, it's probably going to end up being a minor speed bump for gaming, and a big boost everywhere else.

Felofasofanz - Thursday, August 21, 2008 - link

It was my understanding that tick was the new architecture and tock was the shrink. I thought Conroe was tick, Penryn tock, then Nehalem tick, and the 32nm shrink tock?

npp - Thursday, August 21, 2008 - link

I think we're facing a strange issue right now, and it's about the degree of usefulness of Core i7 for the average user. Enterprise clients will surely benefit a lot from all the improvements introduced, but I would have a hard time convincing my friends to upgrade from their E8400... And it's not about badly-threaded applications; the ones an average user has installed simply don't require such immense computing power. Look at your browser, or e-mail client - the tasks they execute are pretty well split over different threads, but they simply don't need 8 logical CPUs to run fast; most of them simply sit idle. It's a completely different matter that many applications that happen to be resource-hungry can't be parallelized to a reasonable degree - think about encryption/decryption, or similar, for example.

It seems to me that Gustafson's law begins to speak here - if you can't escape your sequential code, make your task bigger. So expect something like UltraMegaHD soon, then try to transcode it in real time... But then again... who needs that? It seems like a cowardly manner to me.

Is it that software is lagging behind technology? We need new OSes, completely different ways of interaction with our computers in order to justify so many GFLOPs on the desktop, in my opinion. Or that's at least what I'm dreaming of. The level of man-machine interaction has barely changed since the mouse was invented, and modern OSes are very similar to the very first OSes with a GUI, maybe somewhere in the 80s, or even earlier. But for that time span CPUs have increased their capabilities almost exponentially, can you spot a problem here?

The issue becomes clearer if we talk about 16-threaded octacores... God, I can't think of an application that would require that. (Let's be honest - most people don't transcode video or fold proteins all the time...). I think it would be great if a new Apple or Microsoft would emerge from some small garage, to come and change the old picture for good. The only way to justify technology would be to change the whole user-machine paradigm significantly, going the old way leads to nowhere, I suspect.

gamerk2 - Tuesday, August 26, 2008 - link

Actually, Windows is the biggest bottleneck there is. Windows doesn't SCALE. It does limited tasks well, but breaks under heavy loads. Even the M$ guys are realizing this. Midori will likely be the new post-windows OS after Vista SP2 (windows 7).

smilingcrow - Thursday, August 21, 2008 - link

So eventually we may end up with a separate class of systems that are akin to ‘proper’ sports cars with prices to match. Intel seemingly already sees this as a potential which is why they are releasing a separate high end platform for Nehalem. Unless a new killer app or two is found that appeals to the mainstream I can’t see many people wanting to pay a large premium for these systems since as the entry level performance continues to rise fewer and fewer people require anything more than what they offer.

One thing militating against this is the current relatively low cost of workstation/server Dual Processor components which should continue to be affordable due to the strong demand for them within businesses. It’s foreseeable that it might eventually be more cost effective to build a DP workstation system than a UP high end desktop. This matches Apple’s current product range where they completely miss out on high end UP desktops and jump straight from mid range UP desktop to DP workstation.

retardliquidator - Thursday, August 21, 2008 - link

... think again. more luck next time before starting the flamebait about not two bytes wide but 20bits.

effective usable speed is exactly 2bytes, as with 10/8 coding you need 20bits to encode your 16 relevant ones.

you fail at failing.