AMD 780G: Preview of the Best Current IGP Solution

by Gary Key on March 10, 2008 12:00 PM EST

H.264 Video Quality - Cars

One of our favorite movies last year was Cars from Disney / PIXAR. It may be a kid-oriented movie, but who can resist fast cars and a love story to boot? This movie offers bitrate levels that average 14.1 Mb/s to 31.9 Mb/s. In our particular test scene, the Hudson Hornet is in the pit lanes acting as crew chief. This image provides an array of colors and contrast opportunities for dissection.

Comparison screenshots: 780G, G35, GeForce 8200

The differences in the images are small, but the GeForce 8200 appears to have slightly deeper colors along with a very slight edge in sharpness that we will discuss shortly. The reference image was in between the G35 and 780G, but even that decision was a tossup in our opinion. However, our test audience did not follow the reference image, casting 4 votes in favor of the GeForce 8200, 3 for the G35, and 1 for the 780G.

This is one title that benefits from NVIDIA's new HD Dynamic Contrast and Color enhancements that will vary contrast ratios on the fly while enforcing a slightly stronger color palette across the image. We find this technology is well suited to animated titles but our opinions differ strongly when it comes to natural images. Almost all of us did not like the contrast enhancement technology but several thought the color enhancement feature offered a better overall picture in most cases.

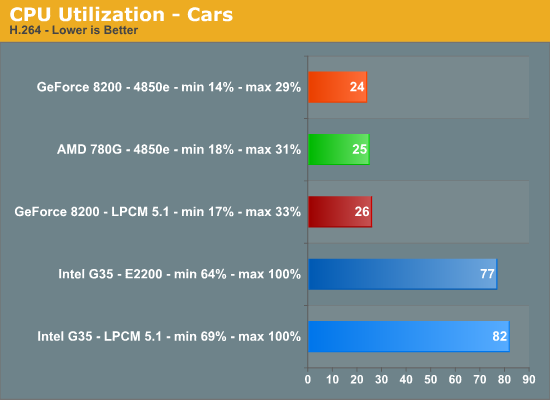

Even though the G35 platform produced an excellent image, its CPU utilization during playback cannot compare with the hardware-accelerated decoding offered by NVIDIA and AMD. As expected, the 780G kills the G35, with average utilization of 25% compared to 77%. The G35 does not offer hardware acceleration for decoding H.264 (AVC) content and, as such, suffers from severe judder at times once CPU utilization goes above 95%.

In early Phenom testing with the 8.3 release drivers we noticed CPU utilization rates dropping several percent while image quality improved due to the post-processing capabilities on the HD 3200 when paired with an HT 3.0 capable CPU. We will have those results shortly.

VC1 Video Quality - Dave Matthews and Tim Reynolds

We are constantly playing our “Live at Radio City” Blu-ray disc, featuring Dave Matthews and Tim Reynolds from the ATO/RCA/BMG record labels, in the labs. It is one of our reference discs for audio quality and besides, we just enjoy the music. This disc offers bitrate levels that averaged 13.06 Mb/s to 31.3 Mb/s. In our particular test scene, we see Dave and Tim performing from the right side of the stage. This image provides an array of colors, shadows, and various lighting angles for review.

Comparison screenshots: 780G, G35, GeForce 8200

The differences in the images are minor, but the 780G appears to offer slightly better detail and background image in our estimation. This time, the GeForce 8200 image was faithful to the reference image during playback tests. Our test audience voted 3 in favor of the GeForce 8200, 3 for the 780G, and 2 for the G35.

This was one test where the opinions differed greatly after watching several minutes of the video. The majority thought the GeForce 8200 offered more “pleasant” tones and brightness control while several thought the 780G provided greater image depth and balanced colors (especially close-ups) that the G35/GeForce 8200 could not.

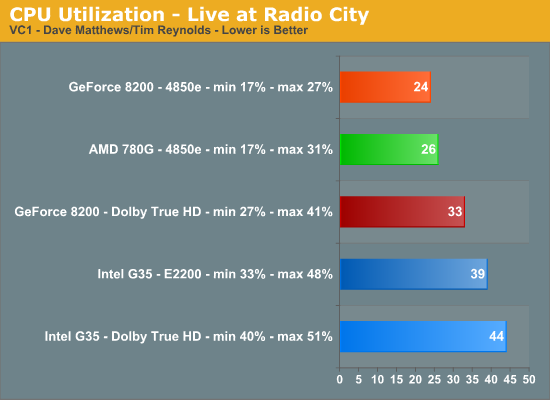

The 780G and GeForce 8200 offer CPU utilization rates several percent lower in this title than the G35. When using PowerDVD to decode the Dolby TrueHD 5.1 audio stream, we noticed our average processor utilization rate increased by about 5% to 9%.

49 Comments


- Monday, March 10, 2008 - link

Where is the discussion of this chipset as an HTPC? Just a tidbit here and there? I thought that was a major selling point here. With a single core sempron 1.8ghz being enough for an HTPC which NEVER hits 100% cpu usage (see tomshardware.com) you don't need a dual core and can probably hit 60w in your HTPC! Maybe less. Why was this not a major topic in this article? With you claiming the E8300/E8200 in your last article being a HTPC dreamers chip shouldn't you be talking about how low you could go with a sempron 1.8ghz? Isn't that the best HTPC combo out there now? No heat, super low cost running it all year long etc (NOISELESS with a proper heatsink).

Are we still supposed to believe your article about the E8500? While I freely admit chomping at the bit to buy an E8500 to Overclock the crap out of it (I'm pretty happy now with my e4300@3.0 and can't wait for 3.6ghz with e8500, though it will go further probably who needs more than 3.6 today for gaming), it's a piece of junk for an HTPC. Overly expensive ($220? for e8300 that was recommended) compared to a lowly Sempron 1.8 which I can pick up for $34 at newegg. With that kind of savings I can throw in a 8800GT in my main PC as a bonus for avoiding Intel. What's the point in having an HTPC where the cpu utilization is only 25%? That's complete OVERKILL. I want that as close to 100% as possible to save me money on the chip and then on savings all year long with low watts. With $200 savings on a cpu I can throw in an audigy if needed for special audio applications (since you whined about 780G's audio). A 7.1channel Audigy with HD can be had for $33 at newegg. For an article totally about "MULTIMEDIA OUTPUT QUALITIES" where's the major HTPC slant?

sprockkets - Thursday, March 13, 2008 - link

Dude, buy a 2.2ghz Athlon X2 chip for like $55. You save what, around $20 or less with a Sempron nowadays?

QuickComment - Tuesday, March 11, 2008 - link

It's not 'whining' about the audio. Sticking in a sound card from Creative still won't give 7.1 sound over HDMI. That's important for those that have a HDMI-amp in a home theatre setup.

TheJian - Tuesday, March 11, 2008 - link

That amp doesn't also support digital audio/Optical? Are we just talking trying to do the job within 1 cable here instead of 2? Isn't that kind of being nit picky? To give up video quality to keep it on 1 cable to me is unacceptable (hence I'd never "lean" towards G35 as suggested in the article). I can't even watch if the video sucks.

QuickComment2 - Tuesday, March 11, 2008 - link

No, it's not about 1 cable instead of 2. SPDIF is fine for Dolby digital and the like, ie compressed audio, but not for 7.1 uncompressed audio. For that, you need HDMI. So, this is a real deal-breaker for those serious about audio.

JarredWalton - Monday, March 10, 2008 - link

I don't know about others, but I find video encoding is something I do on a regular basis with my HTPC. No sense storing a full quality 1080i HDTV broadcast using 16GB of storage for two hours when a high quality DivX or H.264 encode can reduce disk usage down to 4GB, not to mention ripping out all the commercials. Or you can take the 3.5GB per hour Windows Media Center encoding and turn that into 700MB per hour.

I've done exactly that type of video encoding on a 1.8GHz Sempron; it's PAINFUL! If you're willing to just spend a lot of money on HDD storage, sure it can be done. Long-term, I'm happier making a permanent "copy" of any shows I want to keep.

The reality is that I don't think many people are buying HTPCs when they can't afford more than a $40 CPU. HTPCs are something most people build as an extra PC to play around with. $50 (only $10 more) gets you twice the CPU performance, just in case you need it. If you can afford a reasonable HTPC case and power supply, I dare say spending $100-$200 on the CPU is a trivial concern.

Single-core older systems are still fine if you have one, but if you're building a new PC you should grab a dual-core CPU, regardless of how you plan to use the system. That's my two cents.
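As a rough check on the storage figures quoted in the comment above (16GB for two hours reduced to 4GB, and 3.5GB per hour reduced to 700MB per hour), the sketch below works out the size reduction and the average bitrate each figure implies. It assumes decimal units (1 GB = 10^9 bytes), which the comment does not specify, and the function names are illustrative only.

```python
# Assumption: decimal units (1 GB = 1e9 bytes, 1 MB = 1e6 bytes).
# The comment does not say whether decimal or binary units were meant.
GB = 1_000_000_000
MB = 1_000_000


def avg_bitrate_mbps(size_bytes, hours):
    """Average bitrate in megabits per second for a recording."""
    seconds = hours * 3600
    return size_bytes * 8 / seconds / 1_000_000


def reduction_factor(original_bytes, encoded_bytes):
    """How many times smaller the encoded file is than the original."""
    return original_bytes / encoded_bytes


# (original size, encoded size, duration in hours), as quoted in the comment
cases = {
    "1080i HDTV broadcast, 2 hours": (16 * GB, 4 * GB, 2.0),
    "Media Center recording, 1 hour": (3.5 * GB, 700 * MB, 1.0),
}

for name, (original, encoded, hours) in cases.items():
    print(
        f"{name}: {reduction_factor(original, encoded):.1f}x smaller, "
        f"{avg_bitrate_mbps(original, hours):.2f} Mb/s -> "
        f"{avg_bitrate_mbps(encoded, hours):.2f} Mb/s"
    )
```

Under these assumptions the two examples correspond to roughly a 4x and a 5x reduction in file size, with the average bitrate falling by the same factor.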

TheJian - Tuesday, March 11, 2008 - link

I guess you guys don't have a big TV. With a 65in Divx has been out of the question for me. It just turns to crap. I'd do anything regarding editing on my main PC with the HTPC merely being a cheap player for blu-ray etc. A network makes it easy to send them to the HTPC. Just set the affinity on one of your cores to vidcoding and I can still play a game on the other. Taking 3.5GB to 700MB looks like crap on a big tv. I've noticed it's watchable on my 46in, but awful on the 65. They look great on my PC, but I've never understood anyone watching anything on their PC. Perhaps a college kid with no room for a TV. Other than that...

JarredWalton - Tuesday, March 11, 2008 - link

SD resolutions at 46" (what I have) or 65" are always going to look lousy. Keeping it in the original format doesn't fix that; it merely makes you use more space.

My point is that a DivX, x264, or similar encoding of a Blu-ray, HDTV, or similar HD show loses very little in overall quality. I'm not saying take the recording and make it into a 640x360 SD resolution. I'm talking about converting a full bitrate 1080p source into a 1920x1080 DivX HD, x264, etc. file. Sure, there's some loss in quality, but it's still a world better than DVD quality.

It's like comparing a JPEG at 4-6 quality to the same image at 12 quality. If you do a diff, you will find lots of little changes on the lower quality image. If you want to print up a photo, the higher quality is desirable. If you're watching these images go by at 30FPS, though, you won't see much of a loss in overall quality. You'll just use about 1/3 the space and bandwidth.

Obviously, MPEG4 algorithms are *much* more complex than what I just described - which is closer to MPEG2. It's an analogy of how a high quality HD encode compares to original source material. Then again, in the case of HDTV, the original source material is MPEG2 encoded and will often have many artifacts already.

yehuda - Monday, March 10, 2008 - link

Great article. Thanks to Gary and everyone involved! The last paragraph is hilarious.

One thing that bothers me about this launch is the fact that board vendors do not support the dual independent displays feature to full extent.

If I understand the article correctly, the onboard GPU lets you run two displays off any combination of ports of your choice (VGA, DVI, HDMI or DisplayPort).

However, board vendors do not let you do that with two digital ports. They let you use VGA+DVI or VGA+HDMI, but not DVI+HDMI. At least, this is what I have gathered reading the Gigabyte GA-MA78GM-S2H and Asus M3A78-EMH-HDMI manuals. Please correct me if I'm wrong.

How come tier-1 vendors overlook such a worthy feature? How come AMD lets them get away with it?

Ajax9000 - Tuesday, March 11, 2008 - link

They are appearing. At CeBIT Intel showed off two mini-ITX boards with dual digital.

DQ45EK DVI+DVI

DG45FC DVI+HDMI

http://www.mini-itx.com/2008/03/06/intels-eaglelak...