NVIDIA GeForce 8600: Full H.264 Decode Acceleration

by Anand Lal Shimpi on April 27, 2007 4:34 PM EST - Posted in GPUs

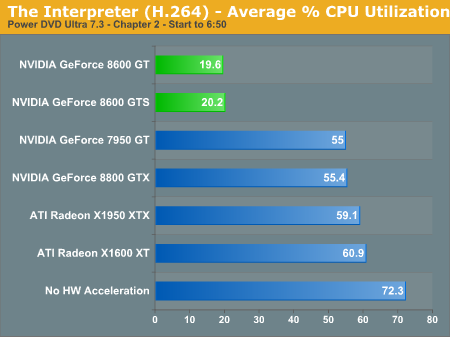

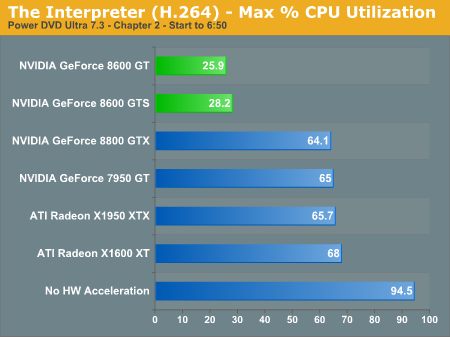

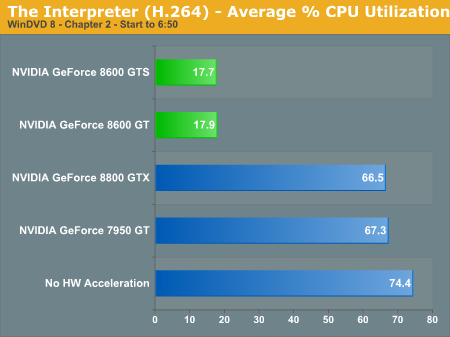

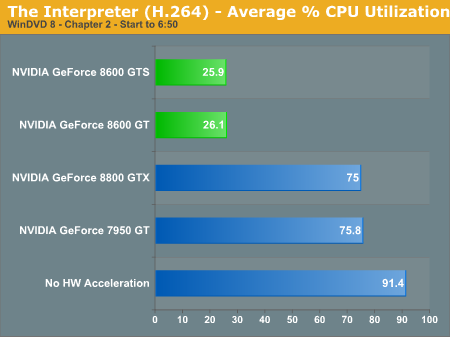

The Interpreter (H.264)

Our second H.264 test is The Interpreter, which we've used in the past. Although it's not nearly as stressful as Yozakura, it still eats up almost all of our Core 2 Duo CPU at peak.

The BSP engine of the 8600 proves its worth again, with average CPU utilization dropping to around 20%.

Maximum CPU utilization is a bit higher but still less than 30%. In a reversal from Yozakura, note how the 8600 GTS now has slightly higher CPU utilization than the 8600 GT in PowerDVD.

WinDVD 8 tells a similar story: H.264 offload is absolutely necessary for good Blu-ray/HD-DVD playback.

64 Comments


Spoelie - Sunday, April 29, 2007 - link

Hi, I've been intrigued by the impact on video playback for a while now, and there are some questions that've been bothering me. Some of these I think can only be answered by the NVIDIA/ATi driver teams, but here goes anyway.

Is the GPU-assisted decoding H.264 spec compliant? In essence, does it produce bit-identical output to the reference decoder? I was under the impression (from reading doom9) that currently no GPU-assisted decoding supported deblocking, an essential part of the spec. However, this was before the release of the 8000 series, so that may have changed.

Also, YV12 being the colorspace of all MPEG codecs, what is the best way to proceed for best quality? What are the YV12->RGB32 colorspace conversion algorithms of the video card, and how do they compare to e.g. ffdshow's high precision conversion? Converting colorspace as late as possible improves CPU performance, since there are fewer bits to move around and process. More of this stuff: http://forum.doom9.org/showthread.php?t=106111&...
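To put a number on the "fewer bits to move around" point, here is a small sketch comparing the size of one uncompressed frame in YV12 (planar 4:2:0, 8 bits per sample) and in RGB32 (4 bytes per pixel). The function names are made up for illustration, and even frame dimensions are assumed.

```python
def yv12_frame_bytes(width, height):
    """Bytes in one YV12 frame: a full-resolution Y plane plus
    V and U planes subsampled by 2 in both directions.
    Assumes width and height are even."""
    luma = width * height
    chroma = 2 * ((width // 2) * (height // 2))
    return luma + chroma


def rgb32_frame_bytes(width, height):
    """Bytes in one RGB32 frame: 4 bytes per pixel."""
    return width * height * 4


if __name__ == "__main__":
    w, h = 1920, 1080
    print("YV12 :", yv12_frame_bytes(w, h), "bytes")
    print("RGB32:", rgb32_frame_bytes(w, h), "bytes")
```

For a 1920x1080 frame this gives 3,110,400 bytes for YV12 versus 8,294,400 bytes for RGB32, so every processing step done before the conversion touches well under half the data.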

Lastly, can't something be done about resizing quality of overlays? This should be a driver thingy of our videocards. Current resizing is some crude bicubic form that produces noticeable artifacts (stairstepping in lines and blocks in gradients and uniform colors). Well, noticeable on lcd screens, crts have a tendency to hide them. There are a lot better algorithms like spline and lanczos. Again, you can do this in ffdshow in software, but this bumps up cpu usage from ~10% to ~80%, just for having a decent resizer. Supporting this in hardware would be nice.
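For reference, a minimal 1-D sketch of Lanczos resampling of the kind mentioned above. It only illustrates the general technique and is not how ffdshow or any video driver implements it; the function names, the default of three lobes, and the edge clamping are assumptions made for this example. It is meant for upscaling, since a proper downscaler would also widen the kernel.

```python
import math


def lanczos_kernel(x, a=3):
    """Lanczos window: sinc(x) * sinc(x / a) for |x| < a, else 0."""
    if x == 0:
        return 1.0
    if abs(x) >= a:
        return 0.0
    px = math.pi * x
    return a * math.sin(px) * math.sin(px / a) / (px * px)


def resample_1d(samples, new_len, a=3):
    """Resample a list of values to new_len points (upscaling only)."""
    n = len(samples)
    scale = n / new_len
    out = []
    for i in range(new_len):
        # Position of output sample i in input coordinates (pixel centers).
        center = (i + 0.5) * scale - 0.5
        left = math.floor(center) - a + 1
        acc = 0.0
        wsum = 0.0
        for j in range(left, left + 2 * a):
            w = lanczos_kernel(center - j, a)
            k = min(max(j, 0), n - 1)  # clamp taps that fall off the edges
            acc += w * samples[k]
            wsum += w
        # Normalize so flat areas stay flat.
        out.append(acc / wsum if wsum else 0.0)
    return out


if __name__ == "__main__":
    print(resample_1d([0.0, 0.0, 1.0, 1.0], 8))
```

Each output sample here needs six weighted taps per dimension (with a=3), which gives a rough sense of why a software resizer of this kind costs noticeably more CPU time than a simpler filter.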

othercents - Sunday, April 29, 2007 - link

Did you all test this on Windows XP? I have business reasons why I can't upgrade yet and wanted to know what the performance difference was between XP and Vista with HD-DVD and if it actually works. I already have the Computer connected to my TV and a TV Tuner card, so getting the 8600 is next on my list if it works with Windows XP.

I am also going to be interested to see if AMD/ATI has the same results with their new video cards especially since I'm not impressed with the Gaming side of the 8600 cards.

Other

Bladen - Saturday, April 28, 2007 - link

On page 5 under the second picture is this text: "Maximum CPU utilization is a bit higher but still less than 30%. Again, note how the 8600 GTS is slightly faster than the 8600 GT in PowerDVD."

Yet the picture shows the GTS as having a higher CPU usage (in the first and second pics).

JarredWalton - Saturday, April 28, 2007 - link

Sorry, bad edit by me. I made a text addition and after the results in Yozakura my brain didn't register that the GTS was higher CPU this time.

kelmerp - Saturday, April 28, 2007 - link

Will there be, or is there now, an AGP version of this board? I have an old Athlon XP 3200, with a Geforce 6600GT card acting as my main HTPC. I can play most HD materials, except it can get a little choppy every now and then, and I can't play back 1080p material. I'm curious how upgrading to this card would affect my setup.

Thank you, and keep up the good work.

irusun - Sunday, April 29, 2007 - link

I'd also like to see more testing with older CPUs with the upcoming review of the 8500.

Many people use this kind of card with an *older* PC for their HTPC setup. With one of these cards, how low can you go on the CPU and still be able to play back HDTV smoothly? Could you be watching an HDTV movie and recording HDTV programming at the same time? That kind of info would be really informative to see in a review!

Thanks, and keep up the good work.

phusg - Saturday, April 28, 2007 - link

Any chance this technology will work over the AGP bus? Can you tell us how much bandwidth is being used during the H.264 decoding?

I've been looking at the ATI/AMD X1950 for my AGP HTPC, but in several reviews of the AGP cards there were problems with the H.264 decoding.

I've also tried a legal copy of the CoreAVC software codec, but that isn't the solution I was hoping it would be; it seems fairly buggy (at least on my aging 2GHz Athlon XP system).

I think an AGP 8500 would be a very popular upgrade amongst AGP HTPC owners like myself.

DerekWilson - Saturday, April 28, 2007 - link

NVIDIA has confirmed to us that AGP8x does not have enough bandwidth to handle H.264 content.

The problem isn't total bandwidth, or even up/down stream bandwidth as I understand it.

The problem is that there is a need for 2 way communication between the GPU and the CPU during H.264 playback, and the AGP bus must stop down stream communication in order to allow up stream communication. The frequency with which the GPU needs to talk up stream causes too much latency and reduces effective bandwidth.

At least, this is what I got from my convo with NVIDIA ...

JarredWalton - Saturday, April 28, 2007 - link

At this point it's all hypothetical. Until NVIDIA or AMD releases an AGP card/GPU with full H.264 decoding support, we really can't say how it will perform. It seems possible that the technology might actually make use of the upstream (i.e. GPU to RAM/CPU) bandwidth more, in which case PCIe might actually be a requirement to get acceptable performance.

kmmatney - Saturday, April 28, 2007 - link

Funny how ATI does worse with the hardware decode. Is the CPU utilization a truly meaningful figure? For instance, I can run SETI on my system, and it will use 100% of the CPU, but it will also give up any CPU power as soon as any other program needs it, as it's all low priority. It seems like the best way to figure out the true resources needed would be to run another benchmark while decoding a movie.
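The idea of running another benchmark during playback could be sketched roughly like this: count how much work a fixed-length busy loop gets done on an idle system, repeat while a movie is playing, and compare the two counts. The function names are hypothetical, the loop is single-threaded so it only probes one core, and the result will also depend on how the OS scheduler prioritizes the player.

```python
import time


def work_done(seconds=1.0):
    """Spin for the given wall-clock time and return the number of
    loop iterations completed (a crude measure of available CPU)."""
    deadline = time.perf_counter() + seconds
    count = 0
    while time.perf_counter() < deadline:
        count += 1
    return count


def cpu_share_left(baseline, during_playback):
    """Fraction of the idle-system throughput still available
    while the video is playing."""
    return during_playback / baseline


if __name__ == "__main__":
    idle = work_done(2.0)
    input("Start playback, then press Enter...")
    busy = work_done(2.0)
    print(f"CPU left for other work: {cpu_share_left(idle, busy):.0%}")
```

A result near 100% would suggest the decode really is offloaded, while a much lower figure would mean playback is competing for CPU time regardless of what the utilization graph reports.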