nForce 500: nForce4 on Steroids?

by Gary Key & Wesley Fink on May 24, 2006 8:00 AM EST - Posted in CPUs

New Feature: DualNet

DualNet's suite of options actually brings a few enterprise type network technologies to the general desktop such as teaming, load balancing, and fail-over along with hardware based TCP/IP acceleration. Teaming will double the network link by combining the two integrated nForce5 Gigabit Ethernet ports into a single 2-Gigabit Ethernet connection. This brings the user improved link speeds while providing fail-over redundancy. TCP/IP acceleration reduces CPU utilization rates by offloading CPU-intensive packet processing tasks to hardware using a dedicated processor for accelerating traffic processing combined with optimized driver support.

While all of this sounds impressive, the actual impact for the general computer user is minimal. On the other hand, a user setting up a game server/client for a LAN party or implementing a home gateway machine will find these options valuable. Overall, features like DualNet are better suited for the server and workstation market and we suspect these options are being provided since the NVIDIA professional workstation/server chipsets are typically based upon the same core logic.

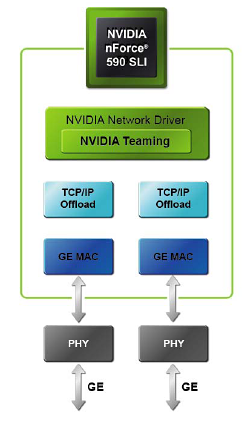

NVIDIA now integrates dual Gigabit Ethernet MACs using the same physical chip. This allows the two Gigabit Ethernet ports to be used individually or combined depending on the needs of the user. The previous NF4 boards offered the single Gigabit Ethernet MAC interface with motherboard suppliers having the option to add an additional Gigabit port via an external controller chip. This too often resulted in two different driver sets, with various controller chips residing on either the PCI Express or PCI bus with typically worse performance than well-implemented dual-PCIe Gigabit Ethernet.

New Feature: Teaming

Teaming allows both of the Gigabit Ethernet ports in NVIDIA DualNet configurations to be used in parallel to set up a 2-Gigabit Ethernet backbone. Multiple computers can be connected simultaneously at full gigabit speeds while load balancing the resulting traffic. When Teaming is enabled, the gigabit links within the team maintain their own dedicated MAC address while the combined team shares a single IP address.

Transmit load balancing uses the destination (client) IP address to assign outbound traffic to a particular gigabit connection within a team. When data transmission is required, the network driver uses this assignment to determine which gigabit connection will act as the transmission medium. This ensures that all connections are balanced across all the gigabit links in the team. If at any point one of the links is not being utilized, the algorithm dynamically adjusts the connections to ensure optimal performance. Receive load balancing uses a connection steering method to distribute inbound traffic between the two gigabit links in the team. When the gigabit ports are connected to different servers, the inbound traffic is distributed between the links in the team.
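To make the transmit side concrete, here is a minimal Python sketch of assigning outbound traffic to a link based on the destination IP address. It is only an illustration of the general idea: NVIDIA has not published the driver's actual algorithm, and the function name `pick_link`, the link names `eth0`/`eth1`, and the simple modulo policy are all our own assumptions.

```python
import ipaddress

def pick_link(dest_ip: str, active_links: list[str]) -> str:
    """Map a destination IP to one link in the team (hypothetical policy)."""
    if not active_links:
        raise ValueError("no active links in the team")
    # Use the integer value of the address so the same client always maps
    # to the same link for as long as the set of active links is unchanged.
    index = int(ipaddress.ip_address(dest_ip)) % len(active_links)
    return active_links[index]

# Hypothetical two-port team and four LAN clients.
team = ["eth0", "eth1"]
clients = ["192.168.1.10", "192.168.1.11", "192.168.1.12", "192.168.1.13"]
assignment = {ip: pick_link(ip, team) for ip in clients}

for ip, link in assignment.items():
    print(f"{ip} -> {link}")
```

A static mapping like this spreads four clients evenly across the two ports, but unlike the behavior described above it does not rebalance when one link sits idle.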

The integrated fail-over technology ensures that if one link goes down, traffic is instantly and automatically redirected to the remaining link. As an example, if a file is being downloaded, the download will continue without packet loss or data corruption. Once the lost link has been restored, the grouping is re-established and traffic begins to transmit on the restored link.
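The fail-over behavior can be pictured with a small Python sketch. This is a toy model under our own assumptions, not NVIDIA's driver logic: the `Team` class, its method names, and the modulo-based routing are hypothetical and exist only to show traffic moving to the surviving link and returning once the lost link is restored.

```python
import ipaddress

class Team:
    """Toy model of a two-port team with fail-over (hypothetical)."""

    def __init__(self, links):
        # Every link starts in the "up" state.
        self.status = {name: True for name in links}

    def set_link(self, name, up):
        self.status[name] = up

    def active(self):
        return [name for name, up in self.status.items() if up]

    def route(self, dest_ip):
        links = self.active()
        if not links:
            raise RuntimeError("all links in the team are down")
        # Only links that are currently up are candidates for traffic.
        return links[int(ipaddress.ip_address(dest_ip)) % len(links)]

team = Team(["eth0", "eth1"])
before = team.route("192.168.1.11")   # both links up
team.set_link("eth1", False)          # simulate a cable pull
during = team.route("192.168.1.11")   # traffic moves to the survivor
team.set_link("eth1", True)           # link restored
after = team.route("192.168.1.11")    # grouping re-established

print(before, during, after)
```

The sketch only models which port carries the traffic; keeping an in-flight download intact across the switch is the job of the driver and of TCP retransmission.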

NVIDIA claims that teaming yields a 40% improvement in throughput on average, although this number can go higher. In a multi-client demonstration, NVIDIA was able to achieve a 70% improvement in throughput utilizing six client machines. In our own internal test we realized about a 45% improvement in throughput utilizing our video streaming benchmark while playing Serious Sam II across four client machines. For those without a Gigabit network, DualNet has the capability to team two 10/100 Fast Ethernet connections. Once again, this is a feature set that few desktop users will truly be able to exploit currently, but we commend NVIDIA for some forward thinking in this area.

Improved Feature: TCP/IP Acceleration

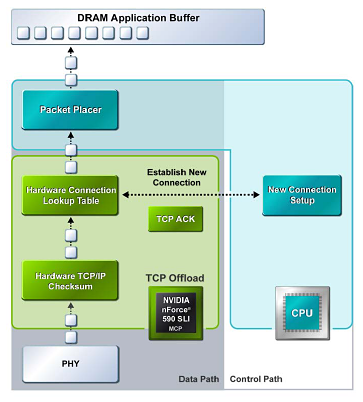

NVIDIA TCP/IP Acceleration is a networking solution that includes both a dedicated processor for accelerating networking traffic processing and optimized drivers. The current nForce 500 MCPs have TCP/IP acceleration and hardware offload capability built into both native Gigabit Ethernet controllers. This typically will lower the CPU utilization rate when processing network data at gigabit speeds.

In software solutions, the CPU is responsible for processing all aspects of the TCP protocol: calculating checksums, ACK processing, and connection lookup. Depending upon network traffic and the types of data packets being transmitted, this can place a significant load upon the CPU. In the above example all packet data is processed and then checksummed inside the MCP instead of being moved to the CPU for software-based processing, and this improves overall throughput and reduces CPU utilization.
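For a sense of the per-packet work involved, the following Python sketch computes the 16-bit one's-complement Internet checksum described in RFC 1071, which is the basis of the TCP checksum. It is a simplified illustration rather than what any driver actually runs: a real TCP checksum also covers a pseudo-header built from the IP addresses, and the function name `internet_checksum` is our own.

```python
def internet_checksum(data: bytes) -> int:
    """16-bit one's-complement checksum over data (RFC 1071 style)."""
    if len(data) % 2:
        data += b"\x00"  # pad odd-length input with a zero byte
    total = 0
    for i in range(0, len(data), 2):
        # Combine each pair of bytes into a big-endian 16-bit word.
        total += (data[i] << 8) | data[i + 1]
    # Fold any carries above 16 bits back into the low 16 bits.
    while total >> 16:
        total = (total & 0xFFFF) + (total >> 16)
    return ~total & 0xFFFF

# Sample bytes taken from the worked example in RFC 1071.
payload = bytes.fromhex("0001f203f4f5f6f7")
checksum = internet_checksum(payload)
print(hex(checksum))  # 0x220d

# A receiver runs the same sum over data plus checksum and expects zero.
verified = internet_checksum(payload + checksum.to_bytes(2, "big")) == 0
```

Every byte of every packet passes through a loop like this when the checksum is computed in software, which is why moving it into the MCP frees CPU time at gigabit rates.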

NVIDIA has dropped the ActiveArmor slogan for the nForce 500 release. The ActiveArmor firewall application has been jettisoned to deep space as NVIDIA pointed out that the features provided by ActiveArmor will be a part of the upcoming Microsoft Vista. No doubt NVIDIA was also influenced to drop ActiveArmor due to the reported data corruption issues with the nForce4 caused in part by overly aggressive CPU utilization settings, and quite possibly in part due to hardware "flaws" in the original nForce design.

We have not been able to replicate all of the reported data corruption errors with nForce4, but many of our readers reported errors with the nForce4 ActiveArmor even after the latest driver release. With nForce5 that is no longer a concern. This stability comes at a price though. If TCP/IP acceleration is enabled via the new control panel, then third party firewall applications (including Windows XP firewall) must be switched off in order to use the feature. We noticed CPU utilization rates near 14% with the TCP/IP offload engine enabled and rates above 30% without it.

64 Comments

Googer - Wednesday, May 24, 2006 - link

http://www.hardwarezone.com/news/view.php?id=4614&...
http://www.neoseeker.com/Articles/Hardware/Reviews...

Googer - Wednesday, May 24, 2006 - link

AM2 Now Shipping at Newegg.com
http://www.newegg.com/Product/ProductList.asp?Subm...

Doormat - Wednesday, May 24, 2006 - link

The media shield feature looks nice. Buy two drives for a RAID-0 array for the OS and whatnot. Then the RAID-5 array for all your important stuff (saved games, documents, pictures, etc). Having both arrays on one chipset is nice.

Pirks - Wednesday, May 24, 2006 - link

Why would you penalize your write speed with RAID5 when there is RAID1? Why not get RAID1 instead of RAID5 and enjoy 1) reliability (same as RAID5) 2) speed (same as single drive for writing, faster than single drive for reading) 3) low price (no need for more than two hard drives)

mino - Wednesday, May 24, 2006 - link

AND lower available capacity for the money you pay. You see 4 300G drives in RAID5 bring you 900GB of (cheap and reliable) storage. Do that with 4 drives and RAID1(or 0+1 for that) means i.e. 2x400 + 2x500 which is SIGNIFICANTLY more expensive.

Remember there are guys with 10 drives, any situation you could economically justify 3+ drives for storage RAID5 is the most cost effective way.

JarredWalton - Thursday, May 25, 2006 - link

Too bad the integrated RAID 5 solutions from NVIDIA only work with 3 drives (and potentially one hot-swap). Maybe I'm mistaken, but I'm pretty sure you can't run 4, 5, or 6 drives in a single RAID 5 array using the NVIDIA controller. That's why you can do two RAID 5 arrays with 3 drives in each array. Problem is, doing RAID 5 without a lot of RAM for the RAID controller can really hurt (write) performance.

nordicpc - Wednesday, May 24, 2006 - link

Something I noticed yesterday while looking through the AM2 reviews that incorporated both ATI and nVidia's chipsets was the huge disparity in power usage, some 40 watts in some cases. Charlie D. has brought this up over at the Inq as well.

Not only with nVidia's 5x0 series do you need a huge chunk of copper with 3 pipes to eliminate the fan, but also you'll be paying a bit extra on the power bill it seems, for what? Some extra networking options that most of us never use because they are so dodgy.

Where's the power consumption page on here?

Gary Key - Wednesday, May 24, 2006 - link

They are coming in a different article as we just started receiving our ATI AM2, nF550, and other boards. The pull in by AMD was a stretch for the board suppliers who had planned on rolling the AM2 series out during Computex and shipping at that time. NVIDIA was caught trying to qualify drivers for both the video and platform side in half the time. We just received final AM2 chips on Saturday morning. ;-)

NullSubroutine - Wednesday, May 24, 2006 - link

mehfitten - Wednesday, May 24, 2006 - link

I concur.