Apple's M1 Pro, M1 Max SoCs Investigated: New Performance and Efficiency Heights

by Andrei Frumusanu on October 25, 2021 9:00 AM EST

Posted in: Laptops, Apple, MacBook, Apple M1 Pro, Apple M1 Max

Power Behaviour: No Real TDP, but Wide Range

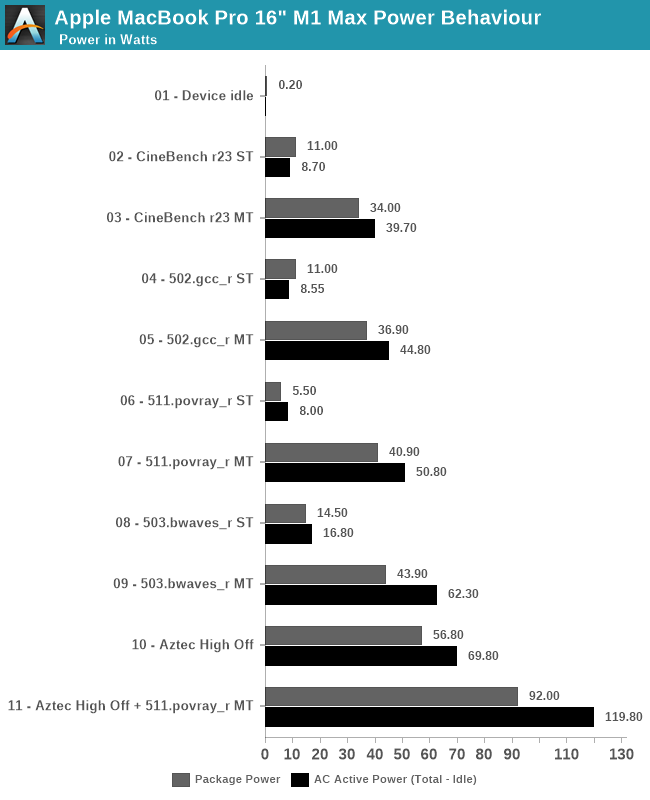

Last year when we reviewed the M1 inside the Mac mini, we did some rough power measurements based on the wall-power of the machine. Since then, we learned how to read out Apple’s individual CPU, GPU, NPU and memory controller power figures, as well as total advertised package power. We repeat the exercise here for the 16” MacBook Pro, focusing on chip package power, as well as AC active wall power, meaning device load power, minus idle power.

Apple doesn’t advertise any TDP for the chips in these devices. It’s our understanding that one simply doesn’t exist, and that the only limit on the power draw of the chips and laptops is thermal. As long as temperature is kept in check, the silicon will not throttle or otherwise limit its power draw. Of course, there is still an actual average power draw under different scenarios, which is what we test here:

Starting off with device idle, the chip reports a package power of around 200mW when doing nothing but idling on a static screen. This is extremely low compared to competitor designs, and is likely a reason Apple is able to achieve such fantastic battery life. The AC wall power under idle was 7.2W, measured on Apple’s included 140W charger with the laptop at minimum display brightness. The actual DC battery power in this scenario is likely much lower, but lacking the ability to measure it, wall power is the next-best thing we have. One should probably assume a 90% efficiency figure in the AC-to-DC conversion chain from 230V wall to 28V USB-C MagSafe to whatever the internal PMIC voltage of the device is.
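To make the conversion-loss remark concrete, here is a minimal sketch that scales a measured wall figure by an assumed end-to-end efficiency. The 90% value is only the rough assumption stated above, not a measurement, and the function name `estimate_dc_power` is purely illustrative.

```python
def estimate_dc_power(wall_power_w: float, efficiency: float = 0.90) -> float:
    """Estimate the power delivered past the charger from measured AC wall power.

    `efficiency` is the assumed AC-to-DC conversion efficiency (0 < efficiency <= 1).
    """
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in (0, 1]")
    return wall_power_w * efficiency


idle_wall_w = 7.2  # idle wall power reported in the article
idle_dc_w = estimate_dc_power(idle_wall_w)
print(f"Estimated idle DC power: {idle_dc_w:.2f} W")
```

The estimate still includes the display and every other component in the laptop, so it is an upper bound on what the SoC itself draws at idle.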

In single-threaded workloads such as CineBench R23 and SPEC 502.gcc_r, both of which mix pure computation with memory-demanding work, we see the chip report 11W package power; however, we’re only measuring an 8.5-8.7W difference at the wall when under use. It’s possible the software is over-reporting things here. The actual CPU cluster is only using around 4-5W in this scenario, and we don’t see much of a difference to the M1 in that regard. The package and active power are higher than what we’ve seen on the M1, which could be explained by the much larger memory resources of the M1 Max. 511.povray is mostly core-bound with little memory traffic, so the reported package power is lower, although at the wall the difference is again minor.

In multi-threaded scenarios, package power ranges from 34 to 43W, and wall active power from 40 to 62W. 503.bwaves stands out as having a larger difference between wall power and reported package power. Although Apple’s powermetrics showcases a “DRAM” power figure, I think this covers just the memory controllers, and that the actual DRAM is not accounted for in the package power figure. Because this is a massive DRAM workload, the extra wattage that we’re measuring here would be the memory of the M1 Max package.
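The gap between wall active power and reported package power can be tabulated for the multi-threaded runs. The sketch below uses the M1 Max figures from the table in this article; the helper name `unaccounted_power` is hypothetical, and the gap it computes also contains conversion losses and other platform power, so it should not be read as a DRAM measurement.

```python
# (package power W, wall active power W) for the M1 Max multi-threaded runs,
# copied from the article's table.
MT_POWER = {
    "CB23 MT": (34.0, 39.7),
    "502 MT": (36.9, 44.8),
    "511 MT": (40.9, 50.8),
    "503 MT": (43.9, 62.3),
}


def unaccounted_power(package_w: float, wall_active_w: float) -> float:
    """Wall active power that the reported package figure does not cover."""
    return wall_active_w - package_w


gaps = {name: unaccounted_power(pkg, wall) for name, (pkg, wall) in MT_POWER.items()}
largest = max(gaps, key=gaps.get)  # workload with the biggest gap

for name, gap in gaps.items():
    print(f"{name}: {gap:.1f} W not covered by package power")
print(f"Largest gap: {largest}")
```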

On the GPU side, we lack notable workloads, but GFXBench Aztec High Offscreen ends up with a 56.8W package figure and a 69.8W wall active figure. The GPU block itself is reported to be running at 43W.

Finally, stressing both the CPU and GPU at the same time, the SoC goes up to 92W package power and 120W wall active power. That’s quite high, and we haven’t tested how long the machine is able to sustain such loads (it’s highly environment dependent), but it very much appears that the chip and platform don’t have any practical power limit, and just use whatever they need as long as temperatures are in check.

| Workload | M1 Max MacBook Pro 16" Score | M1 Max Package Power (W) | M1 Max Wall Power Total - Idle (W) | Intel i9-11980HK MSI GE76 Raider Score | i9-11980HK Package Power (W) | i9-11980HK Wall Power Total - Idle (W) |
|---|---|---|---|---|---|---|
| Idle | | 0.2 | 7.2 (Total) | | 1.08 | 13.5 (Total) |
| CB23 ST | 1529 | 11.0 | 8.7 | 1604 | 30.0 | 43.5 |
| CB23 MT | 12375 | 34.0 | 39.7 | 12830 | 82.6 | 106.5 |
| 502 ST | 11.9 | 11.0 | 9.5 | 10.7 | 25.5 | 24.5 |
| 502 MT | 74.6 | 36.9 | 44.8 | 46.2 | 72.6 | 109.5 |
| 511 ST | 10.3 | 5.5 | 8.0 | 10.7 | 17.6 | 28.5 |
| 511 MT | 82.7 | 40.9 | 50.8 | 60.1 | 79.5 | 106.5 |
| 503 ST | 57.3 | 14.5 | 16.8 | 44.2 | 19.5 | 31.5 |
| 503 MT | 295.7 | 43.9 | 62.3 | 60.4 | 58.3 | 80.5 |
| Aztec High Off | 307fps | 56.8 | 69.8 | 266fps | 35 + 144 | 200.5 |
| Aztec+511MT | | 92.0 | 119.8 | | 78 + 142 | 256.5 |

To compare the M1 Max against the competition, we resorted to Intel’s 11980HK in the MSI GE76 Raider. We also wanted to do a comparison against AMD’s 5980HS; unfortunately, our test machine is dead.

In single-threaded workloads, Apple showcases massive performance and power advantages against Intel’s best CPU. CineBench is one of the rare workloads where Apple’s cores lose out in performance for some reason, but the gap in power usage is even wider there: the M1 Max uses only 8.7W, while the comparable figure on the 11980HK is 43.5W.

In other ST workloads, the M1 Max is either ahead in performance or at least in a similar range. The performance/W difference here is around 2.5x to 3x in favour of Apple’s silicon.

In multi-threaded tests, the 11980HK is clearly allowed to go to much higher power levels than the M1 Max, reaching package power levels of 80W, for 105-110W active wall power, significantly more than what the MacBook Pro here is drawing. The performance levels of the M1 Max are significantly higher than the Intel chip here, due to the much better scalability of the cores. The perf/W differences here are 4-6x in favour of the M1 Max, all whilst posting significantly better performance, meaning the perf/W at ISO-perf would be even higher than this.
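The perf/W comparisons discussed here can be recomputed from the table. The following is a minimal sketch that divides each score by wall active power and takes the M1 Max to 11980HK ratio; choosing wall active power rather than package power is an assumption of this sketch, the helper names are illustrative, and the computed ratios will not necessarily match the rounded ranges quoted in the text.

```python
# (score, wall active power W) per workload, copied from the article's table.
# Each entry is (M1 Max, i9-11980HK).
RESULTS = {
    "CB23 ST": ((1529, 8.7), (1604, 43.5)),
    "CB23 MT": ((12375, 39.7), (12830, 106.5)),
    "502 ST": ((11.9, 9.5), (10.7, 24.5)),
    "502 MT": ((74.6, 44.8), (46.2, 109.5)),
    "511 ST": ((10.3, 8.0), (10.7, 28.5)),
    "511 MT": ((82.7, 50.8), (60.1, 106.5)),
    "503 ST": ((57.3, 16.8), (44.2, 31.5)),
    "503 MT": ((295.7, 62.3), (60.4, 80.5)),
}


def perf_per_watt(score: float, watts: float) -> float:
    """Benchmark score per watt of wall active power."""
    return score / watts


def efficiency_ratio(m1_max: tuple, intel: tuple) -> float:
    """How many times more score per watt the M1 Max delivers than the 11980HK."""
    return perf_per_watt(*m1_max) / perf_per_watt(*intel)


ratios = {name: efficiency_ratio(m1, intel) for name, (m1, intel) in RESULTS.items()}

for name, ratio in ratios.items():
    print(f"{name}: {ratio:.2f}x perf/W in favour of the M1 Max")
```

Swapping the wall figures for the package power column is a one-line change to `RESULTS` and gives the chip-level rather than system-level view.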

On the GPU side, the GE76 Raider comes with a GeForce RTX 3080 mobile. On Aztec High, the system draws a total of 200W for 266fps, while the M1 Max beats it at 307fps with just 70W wall active power. The package powers for the MSI system are reported at 35+144W.

Finally, the Intel CPU and GeForce GPU go up to 256W power draw when used together, more than double that of the MacBook Pro and its M1 Max SoC.
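The same arithmetic applies to the GPU and combined-load rows. This is a small sketch using the wall active figures from the table; the variable names are illustrative only.

```python
# (frames per second, wall active power W) for GFXBench Aztec High Offscreen.
AZTEC = {
    "M1 Max": (307, 69.8),
    "GE76 Raider": (266, 200.5),
}

# Wall active power (W) with CPU and GPU loaded together (Aztec + 511 MT).
COMBINED_WALL_W = {
    "M1 Max": 119.8,
    "GE76 Raider": 256.5,
}

fps_per_watt = {name: fps / watts for name, (fps, watts) in AZTEC.items()}

# Efficiency advantage of the M1 Max in frames per watt.
gpu_efficiency_ratio = fps_per_watt["M1 Max"] / fps_per_watt["GE76 Raider"]

# How much more wall power the MSI system draws under combined load.
combined_power_ratio = COMBINED_WALL_W["GE76 Raider"] / COMBINED_WALL_W["M1 Max"]

print(f"Aztec High fps/W advantage: {gpu_efficiency_ratio:.2f}x")
print(f"Combined-load power ratio: {combined_power_ratio:.2f}x")
```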

The 11980HK isn’t a very efficient chip, as we noted back in our May review, and AMD’s chips should fare quite a bit better in a comparison; however, Apple Silicon is likely still ahead by extremely comfortable margins.

493 Comments


caribbeanblue - Saturday, October 30, 2021 - link

Lol, you're just a troll at this point.

sharath.naik - Monday, October 25, 2021 - link

The only reason M1 falls behind 3060 RTX is because the games are emulated.. if native M1 will match 3080. This is remarkable.. time for others to shift over to the same shared high bandwidth memory on chip.

vlad42 - Monday, October 25, 2021 - link

Go back and reread the article. Andrei explicitly mentioned that the games were GPU bound, not CPU bound. Here are the relevant quotes:

Shadow of the Tomb Raider:

"We have to go to 4K just to help the M1 Max fully stretch its legs. Even then the 16-inch MacBook Pro is well off the 6800M. Though we’re definitely GPU-bound at this point, as reported by both the game itself, and demonstrated by the 2x performance scaling from the M1 Pro to the M1 Max."

Borderlands 3:

"The game seems to be GPU-bound at 4K, so it’s not a case of an obvious CPU bottleneck."

web2dot0 - Tuesday, October 26, 2021 - link

I heard otherwise on m1 optimized games like WoW

AshlayW - Tuesday, October 26, 2021 - link

4096 ALU at 1.3 GHz vs 6144 ALU at 1.4-1.5 GHz? What makes you think Apple's GPU is magic sauce?

Ppietra - Tuesday, October 26, 2021 - link

Not going to argue that Apple's GPU is better, however the number of ALU and clock speed doesn’t tell the whole story.

Sometimes it can be faster not because it can work more but because it reduces some bottlenecks and because it works in a smarter way (by avoiding doing work that is not necessary for the end result).

jospoortvliet - Wednesday, October 27, 2021 - link

Thing is also that the game devs didn't write their game for and test on these gpus and drivers. Nor did Apple write or optimize their drivers for these games. Both of these can easily make high-double digit differences, so being 50% slower on a fully new platform without any optimizations and running half-emulated code is very promising.

varase - Thursday, November 4, 2021 - link

Apple isn't interested in producing chips - they produce consumer electronics products.

If they wanted to they could probably trash AMD and Intel by selling their silicon - but customers would expect them to remain static and support their legacy stuff forever.

When Apple finally decided ARMv7 was unoptimizable, they wrote 32 bit support out of iOS and dropped those logic blocks from their CPUs in something like 2 years. No one else can deprecate and shed baggage so quickly which is how they maintain their pace of innovation.

halo37253 - Monday, October 25, 2021 - link

Apple's GPU isn't magic. It is not going to be any more efficient than what Nvidia or AMD have.

Clearly an Apple GPU that only uses around 65 watts is going to compete with a Nvidia or AMD GPU that only uses around 65 watts in actual usage.

Apple clearly has a node advantage at work here, and with that being said. It is clear to see that when it comes to actual workloads like games, Apple still has some work to do efficiency wise. As their GPU in the same performance/watt range compared to a Nvidia chip in the same performance/watt range on a older and not as power efficient node is able to still do better.

Apple's GPU is a compute champ and great for workloads that avg user will never see. This is why the M1 Pro makes a lot more sense than the M1 Max. The M1 Max seems like it will do fine for light gaming, but the cost of that chip must be crazy. It is a huge chip. Would love to see one in a mac mini.

misan - Monday, October 25, 2021 - link

Just replace GPU by CPU and you will see how devoid of logic your argument is.

Apple has much more experience in low-power GPU design. Their silicon is specifically optimized for low-power usage. Why wouldn't it be more efficient than the competitors?

Besides, Andrei's tests already confirm that your claims are pure speculation without any factual basis. Look at the power usage tests for the GFXbench. Almost three times lower power consumption with a better overall result.

These GPUs are incredible rasterizers. It's that you look at bad quality game ports and decide that they reflect the maximal possible reachable performance. Sure, GFXbench is crap, then look at Wild Life Extreme. That result translates to 20k points. Thats on par with the mobile RTX 3070 at 100W.