Intel Architecture Day 2021: Alder Lake, Golden Cove, and Gracemont Detailed

by Dr. Ian Cutress & Andrei Frumusanu on August 19, 2021 9:00 AM EST

Gracemont Microarchitecture (E-Core) Examined

The smaller core as part of Intel’s hybrid Alder Lake design is called an E-core, and is built on the Gracemont microarchitecture. It forms part of Intel’s Atom family of processors, and is a significant microarchitectural jump over the previous Atom core design called Tremont.

- 2008: Bonnell

- 2011: Saltwell

- 2013: Silvermont

- 2015: Airmont

- 2016: Goldmont

- 2017: Goldmont Plus

- 2020: Tremont

- 2021: Gracemont

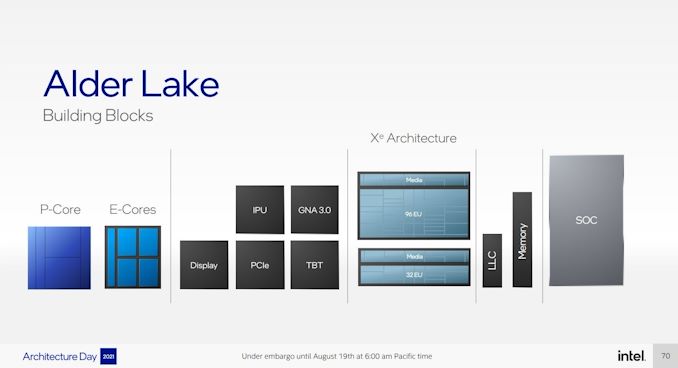

Based on Intel’s diagrams, the company is pitching that in the space where it would normally fit one Golden Cove P-core, it can instead fit a four-core cluster of E-cores along with a 4 MB L2 cache shared between them.

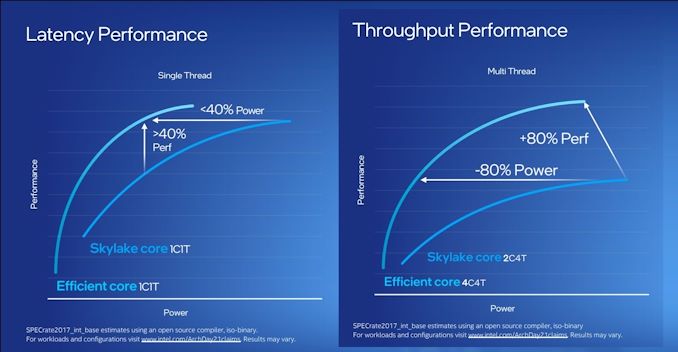

For performance, Intel has some pretty wild claims. It splits them up into single thread and multi-thread comparisons using SPECrate2017_int.

When comparing 1C1T of Gracemont against 1C1T of Skylake, Intel’s numbers suggest:

- +40% performance at iso-power (using a middling frequency)

- 40% less power* at iso-performance (peak Skylake performance)

*'<40%' is now understood to mean 'below 40% power'

When comparing 4C4T of Gracemont against 2C4T of Skylake, Intel’s numbers suggest:

- +80% performance peak vs peak

- 80% less power at iso-performance (peak Skylake performance)

We pushed the two Intel slides together to show how they presented this data.

From these graphs, it looks like peak single-thread performance should be around +8% better than Skylake while consuming just over half the power. Look for Cinebench R20 scores for one Gracemont thread of around 478, then (the Skylake 6700K scored 443). With +8% for single thread in mind, the +80% in MT comparing four Gracemont cores to two fully loaded Skylake cores seems a little low, given that Gracemont has double the physical cores here. But there’s likely some additional performance regression with the cache structure on the new Atom core, which we’ll get to later on this page.
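As a rough sanity check on those figures, the arithmetic can be sketched in a few lines of Python. The 443 score, the +8% and the +80% come from the text above. The "naive" multi-thread scaling and the implied Hyper-Threading uplift are our own back-of-the-envelope assumptions, not Intel's numbers.

```python
# Back-of-the-envelope check of the single-thread and multi-thread figures.
SKYLAKE_6700K_CB_R20_1T = 443  # Cinebench R20 score quoted in the text
ST_UPLIFT = 0.08               # "+8%" read off Intel's graph
MT_CLAIM = 0.80                # Intel's "+80%" for 4C4T Gracemont vs 2C4T Skylake

# Estimated single-thread Cinebench R20 score for one Gracemont core.
gracemont_1t_estimate = SKYLAKE_6700K_CB_R20_1T * (1 + ST_UPLIFT)

# Naive scaling (assumption): twice the physical cores, each 8% faster,
# with no Hyper-Threading gain credited to the Skylake side.
naive_mt_ratio = (4 / 2) * (1 + ST_UPLIFT)

# Hyper-Threading uplift on Skylake that would, on its own, close the gap
# between the naive ratio and Intel's claim (illustrative only).
implied_ht_uplift = naive_mt_ratio / (1 + MT_CLAIM) - 1

print(f"1T estimate: {gracemont_1t_estimate:.0f}")
print(f"naive MT ratio: {naive_mt_ratio:.2f}x vs claimed {1 + MT_CLAIM:.2f}x")
print(f"implied Skylake HT uplift: {implied_ht_uplift:.0%}")
```

Under these assumptions the naive ratio comes out above Intel's claim, which is why the +80% figure reads as conservative. Some of the gap could plausibly come from Hyper-Threading on the Skylake side rather than from the cache structure alone.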

These claims are substantial. Intel hasn’t compared the new Atom core generation on generation, because it felt that having AVX2 support would put the new Atom at a significant advantage. But what Intel is saying with these graphs is that we should expect better-than Skylake performance at much less power. We saw Skylake processors up to 28 cores in HEDT – it makes me wonder if Intel might not enable its new Atom core for that market. If that’s the case, where is our 64-core Atom version for HEDT? I’ll take one.

Front End

The big item about the Tremont front end of the core was the move to dual three-wide decode paths, enabling two concurrent decode streams of three instructions per cycle each. That remains in Gracemont, but is now backed by a double-size 64 KB L1 instruction cache. This ties into the branch predictor, which enables prefetchers at all cache levels, along with a 5000-entry branch target cache, which Intel says is improved over the previous generation.

Back on the decoder, Intel supports on-demand decode, which stores a history of previous decodes in the instruction cache. If recent misses are recalled at the decode stage, the on-demand stream pulls directly from the instruction cache instead, saving time. If the prefetch/decode works, the content in the instruction cache is updated; if it is doing poorly, the scope is ‘re-enabled for general fetches’ to get a better understanding of the instruction flow. This almost sounds like a micro-op cache without having a physical cache, but it has more to do with instruction streaming. Either way, the decoders can push up to six uops into the second half of the front end.

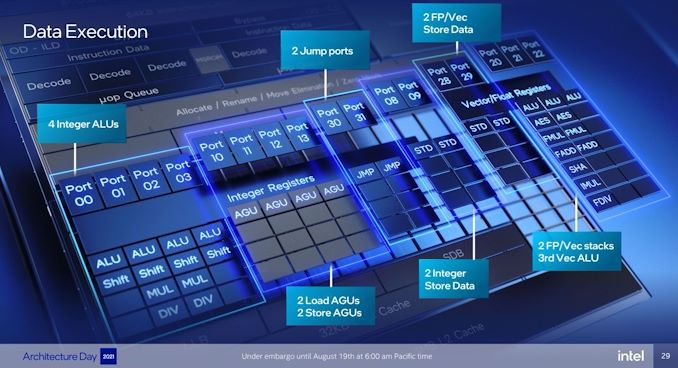

For Gracemont, the reorder buffer size has increased from 208 in Tremont to 256, which is an important aspect given that Gracemont now has a total of 17 (!) execution ports, compared to eight in Tremont. This is also significantly wider than the execution capabilities of Golden Cove's 12 ports, related to the disaggregated integer and FP/vector pipeline design. However, despite that width, the allocation stage feeding into the reservation stations can only process five instructions per cycle. On the return path, each core can retire eight instructions per cycle.
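To put the quoted widths side by side, here is a minimal sketch that treats the pipeline as a chain of stages and picks out the narrowest one. The stage names and the idea that sustained throughput is simply bounded by the narrowest stage are our simplifying assumptions. The text also mixes uops and instructions, which this sketch glosses over.

```python
# Per-cycle widths quoted in the text; the uop/instruction distinction is ignored.
STAGE_WIDTH_PER_CYCLE = {
    "decode": 2 * 3,  # two three-wide decode paths
    "allocate": 5,    # allocation into the reservation stations
    "retire": 8,      # retirement on the return path
}

# Simplifying assumption: sustained throughput is bounded by the narrowest stage.
bottleneck_stage = min(STAGE_WIDTH_PER_CYCLE, key=STAGE_WIDTH_PER_CYCLE.get)
sustained_width = STAGE_WIDTH_PER_CYCLE[bottleneck_stage]

print(f"narrowest stage: {bottleneck_stage} ({sustained_width} per cycle)")
```

Seen this way, the 17 execution ports are less about sustaining 17 operations per cycle and more about giving the schedulers flexibility in which operations can issue together.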

Back End

So it’s a bit insane to have 17 execution ports here. There are a lot of repeated units as well, which means that Gracemont is expecting to see repeated instruction use and requires the parallelism to do more per cycle and then perhaps sit idle waiting for the next instructions to come down the pipe. Overall we have:

- 4 Integer ALUs (ALU/Shift), two of which can do MUL/DIV

- 4 Address Generator Units, 2 Load + 2 Store

- 2 Branch Ports

- 2 Extra Integer Store ports

- 2 Floating Point/Vector store ports

- 3 Floating Point/Vector ALUs: 3x ALUs, 2x AES/FMUL/FADD, 1x SHA/IMUL/FDIV
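As a quick cross-check, the list above can be tallied against the 17-port figure. The group labels are our own shorthand for the bullets; the counts come from the list, and the Tremont and Golden Cove totals are the ones quoted earlier on this page.

```python
# Port counts taken from the list above; the labels are informal shorthand.
GRACEMONT_PORTS = {
    "integer ALU": 4,
    "address generation (2 load + 2 store)": 4,
    "branch": 2,
    "integer store": 2,
    "FP/vector store": 2,
    "FP/vector ALU": 3,
}

TREMONT_PORTS = 8       # quoted in the text
GOLDEN_COVE_PORTS = 12  # quoted in the text

total_ports = sum(GRACEMONT_PORTS.values())

print(f"Gracemont ports: {total_ports}")
print(f"vs Tremont: +{total_ports - TREMONT_PORTS}")
print(f"vs Golden Cove: +{total_ports - GOLDEN_COVE_PORTS}")
```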

It will be interesting to see exactly how many of these can be accessed simultaneously. In previous core designs a lot of this functionality would be enabled through the same port. Even Alder Lake’s P-core only has 12 execution ports, with some ports doing double duty on vector and integer workloads. In the P-core there is a single scheduler for both types of workloads, whereas in the E-core there are two separate schedulers, which is more akin to what we see on non-x86 core designs. It’s a tradeoff in complexity and ease-of-use.

The back-end is supported by a 32 KiB L1-Data cache, which supports a 3-cycle pointer chasing latency and 64 outstanding cache misses. There are two load and two store ports, which means 2 x 16-byte loads and 2 x 16-byte stores to the L1-D.

There is also a shared 4 MB L2 cache across all four E-cores in a cluster, with 17-cycle latency. The shared L2 cache can support 64 bytes per cycle read/write per core, which Intel states is sufficient for all four cores. The new L2 supports up to 64 outstanding misses to the deeper memory subsystem, which seems fair, but this has to be shared amongst the four cores.
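The per-core implications of those cache figures can be worked out in a short sketch. The port counts, access size and miss limits are taken from the text. The even split of L2 miss slots across the four cores is purely our assumption for illustration, since the actual arbitration policy is not described.

```python
CORES_PER_CLUSTER = 4

# L1-D figures quoted in the text.
L1D_LOAD_PORTS = 2
L1D_STORE_PORTS = 2
BYTES_PER_ACCESS = 16
L1D_OUTSTANDING_MISSES = 64  # per core

# L2 figure quoted in the text.
L2_OUTSTANDING_MISSES = 64   # shared by the whole cluster

l1d_load_bytes_per_cycle = L1D_LOAD_PORTS * BYTES_PER_ACCESS
l1d_store_bytes_per_cycle = L1D_STORE_PORTS * BYTES_PER_ACCESS

# Assumption: miss slots divided evenly when all four cores are loaded.
l2_misses_per_core = L2_OUTSTANDING_MISSES // CORES_PER_CLUSTER

print(f"L1-D loads:  {l1d_load_bytes_per_cycle} B/cycle")
print(f"L1-D stores: {l1d_store_bytes_per_cycle} B/cycle")
print(f"L2 miss slots per core (even split): {l2_misses_per_core}")
print(f"L1-D misses a core can track: {L1D_OUTSTANDING_MISSES}")
```

With all four cores busy, each core could have far more L1-D misses in flight than its even share of L2 miss slots, which is one way the shared L2 could become a limiter for memory-heavy multi-threaded work.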

Intel states that it has a Resource Director that will arbitrate cache accesses between the four cores in a cluster to ensure fairness, confirming that Intel is building these E-cores for multi-threaded performance rather than latency-sensitive scenarios where one thread might have priority.

Other Highlights

As the first Atom core to have AVX2 enabled, there are two vector ports that support FMUL and FADD (port 20 and port 21), which means that we should expect the uplift in peak performance over previous Atoms to be substantial. The addition of VNNI-INT8 over the AVX unit means that Intel wants these E-cores to enable situations where high inference throughput is needed, such as perhaps video analysis.
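For readers unfamiliar with what VNNI-INT8 buys for inference, the sketch below emulates the arithmetic of a single 32-bit lane of the VPDPBUSD dot-product instruction in plain Python. The article does not name specific instructions, so choosing VPDPBUSD as the representative example is our assumption, and the function names are hypothetical. This is a scalar model of the semantics, not how the hardware or any library implements it.

```python
def to_int32(value):
    """Wrap a Python integer to a signed 32-bit result (no saturation)."""
    value &= 0xFFFFFFFF
    return value - (1 << 32) if value & 0x80000000 else value


def vpdpbusd_lane(acc, a_u8, b_s8):
    """Emulate one 32-bit lane: acc + sum of four (unsigned byte * signed byte)."""
    if len(a_u8) != 4 or len(b_s8) != 4:
        raise ValueError("each lane consumes exactly four byte pairs")
    if not all(0 <= a <= 255 for a in a_u8):
        raise ValueError("first operand bytes must be unsigned 8-bit")
    if not all(-128 <= b <= 127 for b in b_s8):
        raise ValueError("second operand bytes must be signed 8-bit")
    dot = sum(a * b for a, b in zip(a_u8, b_s8))
    return to_int32(acc + dot)


# Four INT8 multiply-accumulates folded into one 32-bit accumulator.
result = vpdpbusd_lane(10, [1, 2, 3, 4], [5, -6, 7, -8])
print(result)
```

The appeal for inference is that one instruction performs four multiplies and the accumulation per 32-bit lane, work that would otherwise take a sequence of separate multiply, widen and add steps.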

Intel was keen to point out that Gracemont has all of its latest security features including Control-flow Enforcement Technology (CET), and virtualization redirects under its VT-rp feature.

Overall, Intel stated that the E-cores are tuned for voltage more than anything else (such as performance or area). This means that the E-cores are set to use a lot less power, which may help in mobile scenarios. But as mentioned on the first page, it will depend on how much power the ring has to consume in that environment. It is worth noting that each four-core Atom cluster only has a single stop on the full ring in Alder Lake, which Intel says should not cause congestion, although it remains a possibility. If each core is fully loaded, there is only 512 KB of L2 cache per core before making the jump to main memory, which might be a bottleneck in that scenario.

223 Comments


abufrejoval - Saturday, August 21, 2021 - link

Since AVX-512 isn't new, I'm somewhat doubtful on the bug theory.

And since Intel doesn't do chiplets yet, they can't be reusing that silicon for server CPUs either.

It really has me think that the AVX-512 guys tried to push their baby through into production until the bloody final battle, when the E/P-Core symmetry team shut them down (for now, it's all fuses, right?).

It's really very much a matter of how you want to use these resources and educating both operating systems and users about their potential and limitations. If all you see in E-cores is a way to run a P-core task on less energy budget, that symmetry is critical. If you see E-cores as an add-on resource that is somewhat functionally limited (but might have better side-channel resilience or run special purpose VMs etc.), yet available for low silicon real-estate, it's another story.

On notebooks on batteries, the symmetric view wins out. For anything on a powerline, the E-cores may make some sense as functionally constrained extra resources, I can't see the power savings vs. good idle there (well, perhaps a single E-core, like the Tegra 3 had against its quad P-cores).

It's very hard to maintain real flexibility when things get baked into silicon.

I'd say product managers got the better of the engineers and what you get is a compromise, which is hardly ever ideal nor easy to understand without the context of its creation.

mode_13h - Sunday, August 22, 2021 - link

> It really has me think that the AVX-512 guys tried to push their baby through into production until the bloody final battle,

That doesn't explain the backport of VNNI to AVX2, unless that was already being done for other reasons.

Intel went through this once, already, with Lakefield. That was like 2 years ago, and forced the same situation of the P-core being kneecapped. So, this thing can't have been a surprise.

Now, wouldn't it be cool if BIOS gave you a choice between enabling the E-cores and having AVX-512 on the P-cores? I know it'd create more headaches for the customer support teams at Intel and many OEMs, but that would at least be a more customer-centric way to make the tradeoff.

Spunjji - Tuesday, August 24, 2021 - link

Giving customers more choice for no additional cost is not the Intel way!

Oxford Guy - Thursday, August 26, 2021 - link

Some here fervently believe enthusiasts who build their own PCs aren’t going to enter BIOS to turn on XMP...

Spunjji - Friday, August 27, 2021 - link

@Oxford Guy - only ever seen people argue the majority of users won't do that, not enthusiasts specifically.

SystemsBuilder - Friday, August 20, 2021 - link

Breaking out VNNI from AVX512 and keeping it in Alder Lake is to accelerate Neural Net inference. Many other parts of AVX512 (i.e. AVX512F etc) are necessary to sufficiently accelerate NN learning.

Intel probably thought that Alder Lake CPUs would only be used in inference scenarios and therefore reserved AVX512 and AMX to Sapphire Rapids server, workstation and hopefully the HEDT platform road maps.

Intel forgot (or more likely did not care) that companies have, after 5 years of AVX512 with implementations as far down into the consumer stack as Ice Lake and Tiger Lake laptops, tuned libraries to take advantage of AVX512 in OTHER scenarios than deep learning. Those libraries are now going to be regressing to AVX2 when run on Alder Lake CPUs, effectively kneecapped, executed on P and crap cores, oops sorry, meant E cores.

mode_13h - Saturday, August 21, 2021 - link

To be fair, I think Intel had further motives for porting VNNI to AVX2. They sell Atom processors into applications where inferencing is a useful capability. Skylake CPUs are already pretty good at inferencing, with just baseline AVX2, so VNNI can only help.

Still, the situation is something of an own-goal. I'll bet Intel will be nursing that wound for the next few years. I don't expect they'll make the same decision/mistake in Raptor Lake.

StoykovK - Friday, August 20, 2021 - link

Intel stated that ADL has 6 decoders, up from 4, but didn't Skylake have 5 (4 simple + 1 complex)?

I'm a little bit confused. It looks like, from an architecture point of view, Golden Cove compared to Willow Cove is a bigger update than Willow Cove to Skylake, but both result in ~20% IPC.

E-cores: Really good idea to get a high score in multi-core benchmarks. Golden Cove looks like ~33% faster than E-cores, but taking a lot more power. Does anybody have an idea how wide the E-cores' AVX is, 128-bit or 256-bit?

TristanSDX - Friday, August 20, 2021 - link

SSE - 128 bit, AVX - 256 bit, AVX-512 - 512 bit

StoykovK - Friday, August 20, 2021 - link

Zen/Zen+, Sandy Bridge, Ivy Bridge fuses 2x128bit units in order to execute single 256bit AVX.