The AMD Ryzen 7 5700G, Ryzen 5 5600G, and Ryzen 3 5300G Review

by Dr. Ian Cutress on August 4, 2021 1:45 PM EST

Integrated Graphics Tests

Finding 60+ FPS

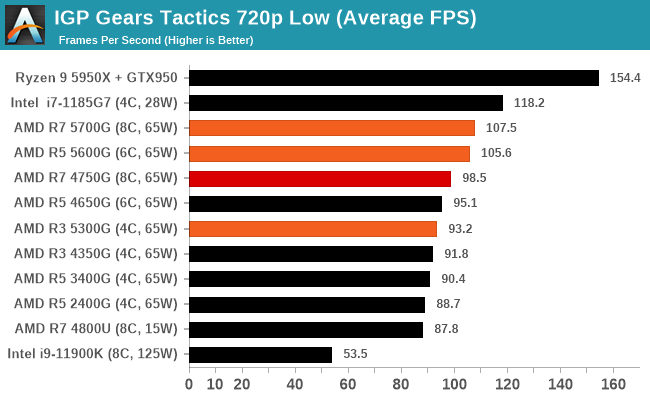

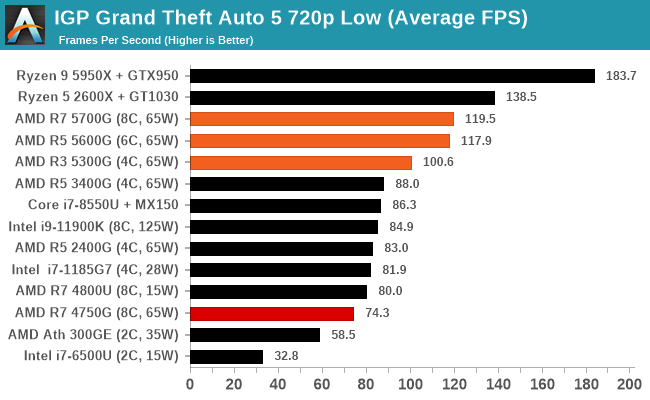

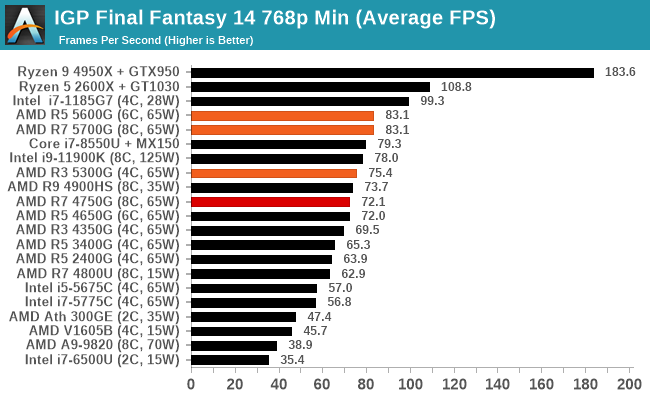

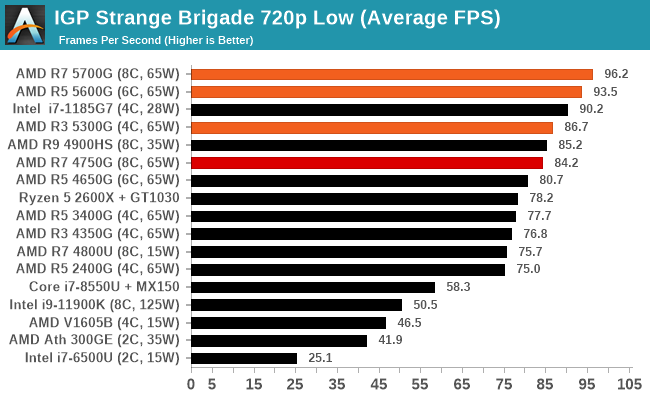

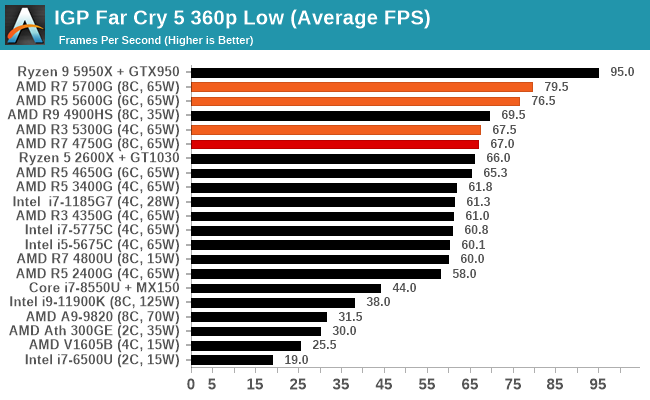

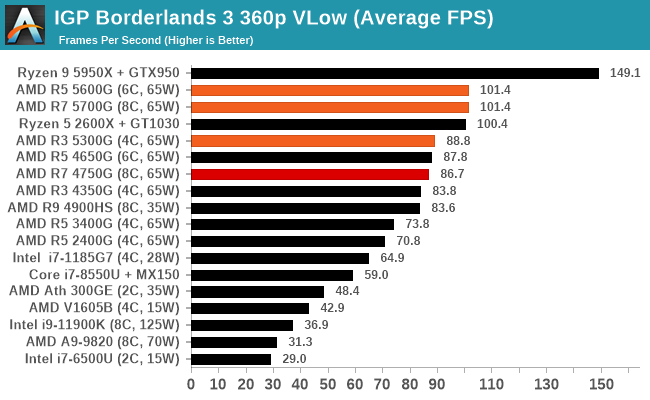

Never mind 30 frames per second: if we want gaming to be smooth, we look for true 60 FPS gaming. That is going to be a benchmark for any integrated graphics solution, but one question is whether games are becoming harder to render faster than integrated graphics is improving. Given how we used to talk about 30-40 FPS on integrated graphics before Ryzen, it stands to reason that the base requirements of games are only ever going up. To meet that need, we need processors with a good level of integrated oomph.

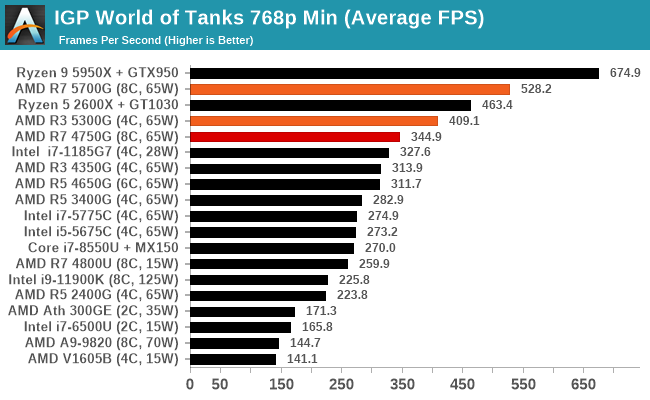

So here is a series of our tests that meet that mark. Unfortunately, most of them are at 720p Low (or worse).

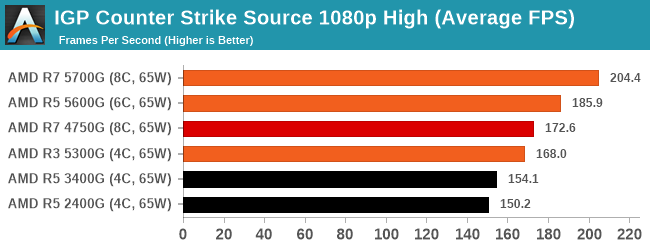

A full list of results at various resolutions and settings can be found in our Benchmark Database.

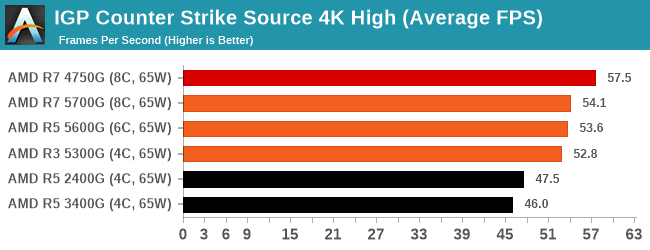

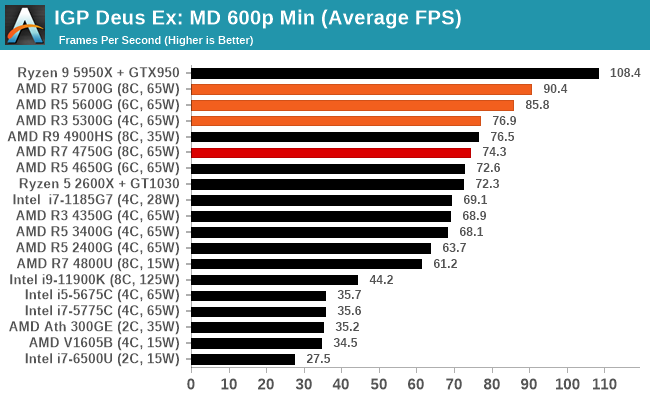

These last couple of games here, World of Tanks and CS:Source, are getting on in age a bit. Playing at 1080p High/Max on both is easily done, but we cranked Source up to 4K and we're not even getting 60 frames per second. The previous generation R7 even beat out the new APUs here, probably indicating that the previous generation had more power going into the GPU and the new models are balanced towards the CPU cores a bit more. That balance clearly works in some games, and at 1080p resolutions, but not here at 4K.

135 Comments


GeoffreyA - Friday, August 6, 2021 - link

Okay, that makes sense.

19434949 - Friday, August 6, 2021 - link

Do you know if 5600G or 5700G can output 4k 120fps movie/video playback?

GeoffreyA - Saturday, August 7, 2021 - link

Tested this now on a 2200G. Taking the Elysium trailer, I encoded a 10-second clip in H.264, H.265, and AV1 using FFmpeg. The original frame rate was 23.976, so using the -r switch, got it up to 120. Also, scaled the video from 3840x1606 to 3840x2160, and kept the audio (DTS-MA, 8 ch). On MPC-HC, they all ran smoothly. Rough CPU/GPU usage:

H.264: 25% | 20%

H.265: 6% | 21%

AV1: 60% | 20%

So if Raven Ridge can hold up at 4K/120, Cezanne should have no problem. Note that the video was downscaled during playback, owing to the screen not being 4K. Not sure if that made it easier. 8K, choppy. And VLC, lower GPU usage but got stuck on the AV1 clip.
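For anyone wanting to repeat the test, here is a minimal sketch of how clips like the ones described above might be produced. The exact commands used were not posted, so everything here is an assumption: the file names are hypothetical, `-r 120` is placed as an output option (which makes FFmpeg duplicate frames to reach 120 FPS), and the encoder names (`libx264`, `libx265`, `libaom-av1`) are only available if the local FFmpeg build includes them. The script only builds and prints the command lines; it does not run FFmpeg.

```python
from typing import List

# Encoder names as commonly exposed by FFmpeg builds. Whether they are
# present depends on how the local ffmpeg binary was compiled (assumption).
ENCODERS = {
    "h264": "libx264",
    "h265": "libx265",
    "av1": "libaom-av1",
}


def build_ffmpeg_command(src: str, codec: str, dst: str) -> List[str]:
    """Build an ffmpeg argument list for a 10-second 3840x2160 clip at 120 FPS."""
    return [
        "ffmpeg",
        "-i", src,                 # source file (hypothetical name)
        "-t", "10",                # keep only the first 10 seconds
        "-vf", "scale=3840:2160",  # stretch 3840x1606 to 3840x2160
        "-r", "120",               # output frame rate; frames get duplicated
        "-c:v", ENCODERS[codec],   # video encoder for the chosen codec
        "-c:a", "copy",            # keep the original audio track untouched
        dst,
    ]


if __name__ == "__main__":
    for codec in ENCODERS:
        cmd = build_ffmpeg_command("trailer.mkv", codec, f"clip_{codec}.mkv")
        print(" ".join(cmd))
```

Writing to Matroska (`.mkv`) is a deliberate choice in this sketch, since that container can carry the copied DTS audio alongside any of the three video codecs.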

GeoffreyA - Sunday, August 8, 2021 - link

Something else I found. 10-bit H.264 seems to be going through software/CPU decoding, whereas 8-bit is running through the hardware, dropping CPU usage down to 6/8%. H.265 hasn't got the same problem. It could be the driver, the hardware, the player, or my computer.

mode_13h - Monday, August 9, 2021 - link

> 10-bit H.264 seems to be going through software/CPU decoding...

> It could be the driver, the hardware, the player

It's quite likely the driver or a GPU hardware limitation. There's some nonzero chance it's the player, but I'd bet the player tries to use acceleration and then only falls back on software when that fails.

GeoffreyA - Tuesday, August 10, 2021 - link

Yes, quite likely.

mode_13h - Monday, August 9, 2021 - link

> the video was downscaled during playback, owing to the screen not being 4K.

> Not sure if that made it easier.

Not usually. I only have detailed knowledge of H.264, where hierarchical encoding is mostly aimed at adapting to different bitrates. That would never be enabled by default, because not only is it more work for the encoder, but it's also poorly supported by decoders and generates slightly less efficient bitstreams.

GeoffreyA - Tuesday, August 10, 2021 - link

"hierarchical encoding"

That could be x264's b-pyramid, which, I think, is enabled most of the time. Apparently, allows B-frames to be used as references, saving bits. The stricter setting limits it to the Bluray spec.

GeoffreyA - Tuesday, August 10, 2021 - link

By the way, looking forward to x266 coming out. AV1 is excellent, but VVC appears to be slightly ahead of it.

mode_13h - Wednesday, August 11, 2021 - link

> That could be x264's b-pyramid

No, I meant specifically Scalable Video Coding, which is what I thought, but I didn't want to cite the wrong term.

https://en.wikipedia.org/wiki/Advanced_Video_Codin...

The only way the decoder can opt to do less work is by discarding some layers of an SVC-encoded H.264 stream. Under any other circumstance, the decoder can't take any shortcuts without introducing a cascade of errors in all of the frames which reference the one being decoded.
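To illustrate the cascade, here is a toy sketch rather than anything a real decoder does. The frame names and the reference map are hypothetical, and real H.264 streams reference at the level of slices and macroblocks, not whole frames. It just walks the reference graph to find every frame that would be damaged if one frame were skipped.

```python
from typing import Dict, List, Set


def affected_frames(refs: Dict[str, List[str]], skipped: str) -> Set[str]:
    """Return every frame that directly or indirectly references `skipped`."""
    # Invert the map: for each frame, list the frames that reference it.
    dependents: Dict[str, List[str]] = {}
    for frame, sources in refs.items():
        for source in sources:
            dependents.setdefault(source, []).append(frame)

    # Depth-first walk over the dependents, collecting damaged frames.
    damaged: Set[str] = set()
    stack = [skipped]
    while stack:
        current = stack.pop()
        for frame in dependents.get(current, []):
            if frame not in damaged:
                damaged.add(frame)
                stack.append(frame)
    return damaged


# Hypothetical group of pictures: each frame maps to the frames it references.
refs = {
    "I0": [],
    "P1": ["I0"],
    "P2": ["P1"],
    "B3": ["P1", "P2"],
    "P4": ["P2"],
}

print(sorted(affected_frames(refs, "P1")))  # ['B3', 'P2', 'P4']
print(sorted(affected_frames(refs, "B3")))  # []
```

In this toy stream, skipping `P1` damages everything after it, while skipping `B3` is harmless because nothing references it.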

> The stricter setting limits it to the Bluray spec.

I think blu-ray mainly limits which profiles (which are a collection of compression techniques) and levels (i.e. bitrates & resolutions) can be used, so that the bitstream can be decoded by all players and can be streamed off the disc fast enough.

I once tried authoring valid blu-ray video discs, but the consumer-grade tools were too limiting and the free tools were a mess to figure out and use. In the end, I found simply copying MPEG-2 .ts files onto a BD-R would play in every player I tested. I was mainly interested in using it for a few videos shot on a phone, plus a few things I recorded from broadcast TV.