Intel 3rd Gen Xeon Scalable (Ice Lake SP) Review: Generationally Big, Competitively Small

by Andrei Frumusanu on April 6, 2021 11:00 AM EST

Posted in: Servers, CPUs, Intel, Xeon, Enterprise, Xeon Scalable, Ice Lake-SP

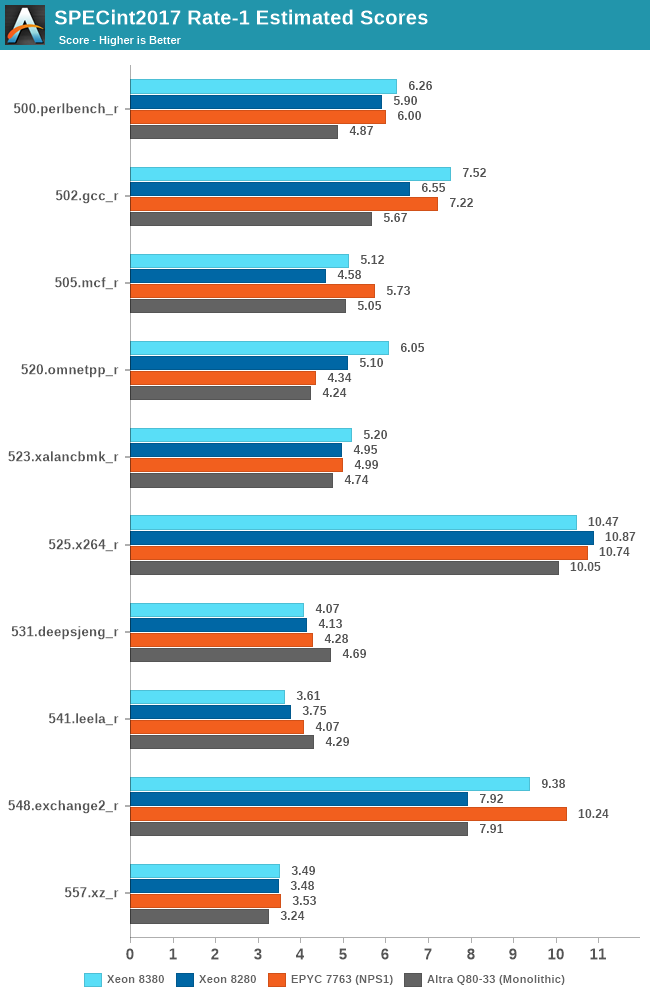

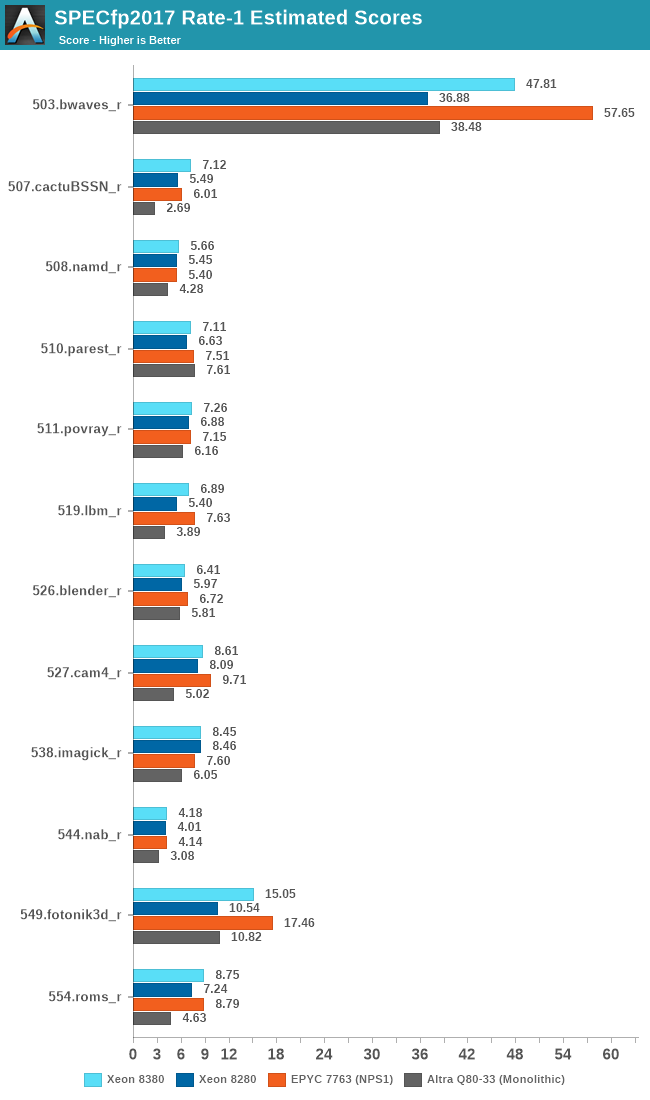

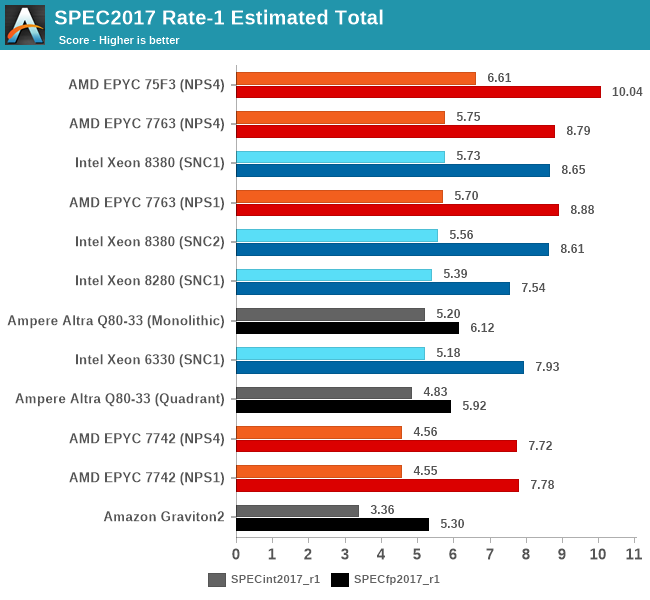

SPEC - Single-Threaded Performance

Single-thread performance of server CPUs usually isn’t the most important metric for most scale-out workloads, but there are use-cases such as EDA tools which are pretty much single-thread performance bound.

Power envelopes usually don’t matter here; the factors that actually come into play are simply the boost clocks of the CPUs, their IPC improvements, and the memory latency of the cores.

The one hiccup for the Xeon 8380 this generation is that, although there are plenty of IPC gains to be had compared to previous microarchitectures, the new SKU only boosts up to 3.4GHz, whereas the 8280 was able to boost up to 4GHz, which is a 15% deficit.

Even with the clock frequency disadvantage, thanks to the IPC gains, much improved memory bandwidth, and the much larger L3 cache, the new Ice Lake part manages to beat the Cascade Lake part most of the time, with only a couple of compute-bound core workloads where it falls behind.
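As a rough back-of-the-envelope check on that clock deficit, one can model single-thread performance as IPC multiplied by frequency. This is a deliberate simplification that ignores memory latency, bandwidth, and cache effects, and the function names below are hypothetical, chosen purely for illustration; the only inputs are the 3.4GHz and 4GHz boost clocks quoted above.

```python
def clock_deficit(new_ghz: float, old_ghz: float) -> float:
    """Fractional frequency deficit of the new part relative to the old one."""
    return 1.0 - new_ghz / old_ghz


def break_even_ipc_gain(new_ghz: float, old_ghz: float) -> float:
    """IPC uplift the new part needs to match the old one.

    Assumes performance = IPC * frequency, which is only an approximation.
    """
    return old_ghz / new_ghz - 1.0


if __name__ == "__main__":
    icx_boost_ghz = 3.4  # Xeon 8380 boost clock, as quoted in the text
    clx_boost_ghz = 4.0  # Xeon 8280 boost clock, as quoted in the text
    print(f"Clock deficit:       {clock_deficit(icx_boost_ghz, clx_boost_ghz):.1%}")
    print(f"Break-even IPC gain: {break_even_ipc_gain(icx_boost_ghz, clx_boost_ghz):.1%}")
```

Under this simple model, the 15% clock deficit means the new core needs roughly 17.6% more work per clock just to break even, so any compute-bound workload where the generational IPC gain is smaller than that would be expected to regress.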

The floating-point figures are more favourable to the ICX architecture due to the stronger memory performance.

Overall, the new Xeon 8380 at least manages to post slight single-threaded performance increases this generation, with larger gains in memory-bound workloads. The 8380 is essentially on par with AMD’s 7763, and loses out to the higher-frequency optimised parts.

Intel has a few SKUs which offer slightly higher ST boost clocks, the highest reaching 3.7GHz, which is 300MHz or 8.8% above the 8380; however, that part is only an 8-core and features only 18MB of cache. Other SKUs offer 3.5-3.6GHz boosts, but again with less cache. So while the ST figures here could improve a bit on those parts, the difference is unlikely to be significant.
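To put a ceiling on what those higher-boost SKUs could deliver, the sketch below assumes single-thread performance scales perfectly with boost clock. That is an optimistic assumption: it ignores the smaller caches mentioned above, so real gains would likely be lower. The helper name is hypothetical, and the clocks are the ones quoted in the text.

```python
BASELINE_BOOST_GHZ = 3.4  # Xeon 8380 boost clock, as quoted in the text


def max_st_uplift(boost_ghz: float, baseline_ghz: float = BASELINE_BOOST_GHZ) -> float:
    """Upper bound on ST uplift, assuming performance scales 1:1 with frequency."""
    return boost_ghz / baseline_ghz - 1.0


if __name__ == "__main__":
    # Boost clocks of the other SKUs mentioned in the text.
    for boost_ghz in (3.5, 3.6, 3.7):
        print(f"{boost_ghz:.1f}GHz boost: at most {max_st_uplift(boost_ghz):.1%} faster")
```

Even in this best case the uplift tops out just under 9%, and that is before accounting for the reduced cache on those parts.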

169 Comments

fallaha56 - Tuesday, April 6, 2021

sure but when your 64-core part virtually beats Intel's dual socket 32-core part on performance alone?

add the energy savings and suddenly it's a 300-400% perf lead

Jorgp2 - Tuesday, April 6, 2021

The fuck? You do realize that they put more than CPUs onto servers, right?

Andrei Frumusanu - Tuesday, April 6, 2021

We are testing non-production server configurations, all with varying hardware, PSUs, and other setup differences. Socket comparisons remain relatively static between systems.

edzieba - Tuesday, April 6, 2021

Would be interesting to pit the 4309 (or 5315) against the Rocket Lake octacores. Yes, it's a very different platform aimed at a different market, but it would be interesting to see what a hypothetical '10nm Sunny Cove consumer desktop' could have resembled compared to what Rocket Lake's Sunny Cove delivered on 14nm.

Jorgp2 - Tuesday, April 6, 2021

You could also compare it to the 10900x, which is an existing AVX-512 CPU with large L2 caches.

Holliday75 - Tuesday, April 6, 2021

For typical consumer workloads the RL will be better. For typical server workloads the IL will be better. That is the gist of what would be said.

ricebunny - Tuesday, April 6, 2021

These tests are not entirely representative of real world use cases. For open source software, the icc compiler should always be the first choice for Intel chips. The fact that Intel provides such a compiler for free and AMD doesn’t is a perk that you get with owning Intel. It would be foolish not to take advantage of it.

Andrei Frumusanu - Tuesday, April 6, 2021

AMD provides AOCC and there's nothing stopping you from running ICC on AMD either. The relative positioning in that scenario doesn't change, and GCC is the industry standard in that regard in the real world.

ricebunny - Tuesday, April 6, 2021

Thanks for your reply. I was speaking from my experience in HPC: I’ve never compiled code that I intended to run on Intel architectures with anything but icc, except when the environment did not provide me such liberty, which was rare.

If I were to run the benchmarks, I would build them with the most optimal settings for each architecture using their respective optimizing compilers. I would also make sure that I am using optimized libraries, e.g. Intel MKL and not OpenBLAS for Intel architecture, etc.

Wilco1 - Tuesday, April 6, 2021

And I could optimize benchmarks using hand crafted optimal inner loops in assembler. It's possible to double the SPEC score that way. By using such optimized code on a slow CPU, it can *appear* to beat a much faster CPU. And what does that prove exactly? How good one is at cheating?

If we want to compare different CPUs then the only fair option is to use identical compilers and options like AnandTech does.