Intel Rocket Lake (14nm) Review: Core i9-11900K, Core i7-11700K, and Core i5-11600K

by Dr. Ian Cutress on March 30, 2021 10:03 AM EST

Posted in: CPUs, Intel, LGA1200, 11th Gen, Rocket Lake, Z590, B560, Core i9-11900K

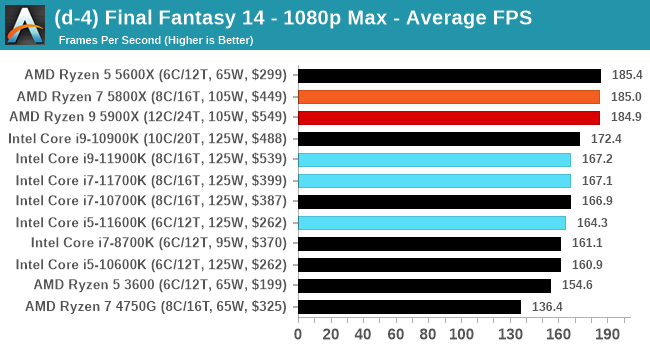

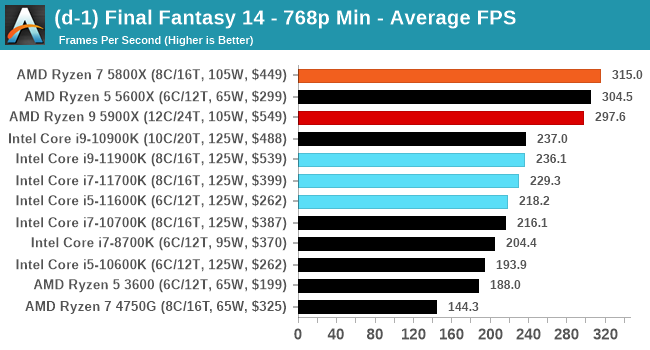

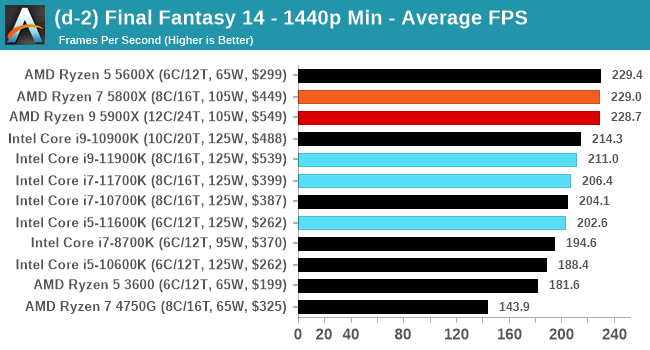

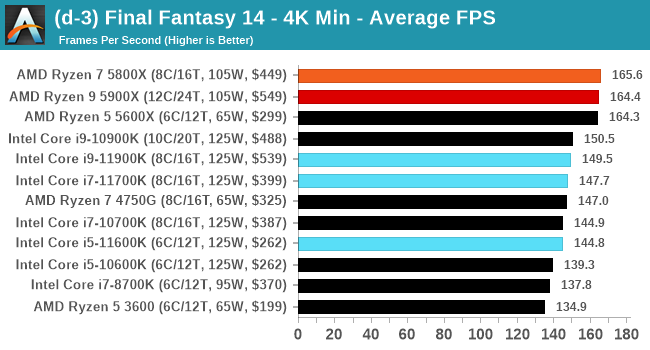

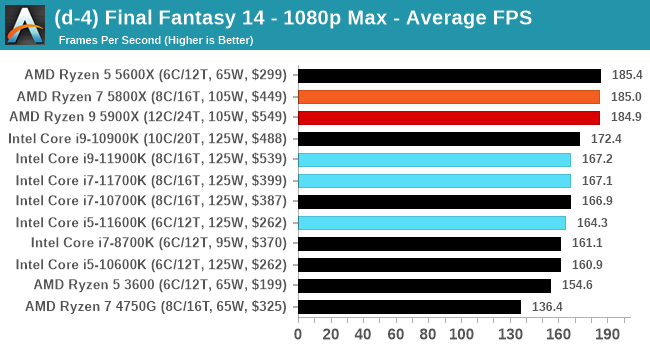

Gaming Tests: Final Fantasy XIV

Despite being one number less than Final Fantasy 15, FF14 is a massively-multiplayer online title, so it receives yearly update packages that also give the opportunity for graphical updates. In 2019, FFXIV launched its Shadowbringers expansion, and an official standalone benchmark was released at the same time so users could understand what level of performance to expect. Much like the FF15 benchmark we’ve been using for a while, this test is a long 7-minute scene of simulated gameplay within the title. There are a number of interesting graphical features, and it certainly looks more like a 2019 title than a 2010 release, which is when FF14 first came out.

Because this is a standalone benchmark, we do not have to worry about updates; the idea behind these sorts of tests for end-users is to keep the code base consistent. For our testing suite, we are using the following settings:

- 768p Minimum, 1440p Minimum, 4K Minimum, 1080p Maximum

As with the other benchmarks, we do as many runs as it takes until 10 minutes have passed for each resolution/setting combination, and then take averages. Realistically, because of the length of this test, this equates to two runs per setting.
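To make the arithmetic behind "two runs per setting" concrete, here is a minimal sketch of the run-until-the-budget-has-passed procedure. The function names and the FPS figures in the usage note are hypothetical and are not part of our actual harness; the sketch also assumes that a run already in progress when the 10 minutes elapse is allowed to finish.

```python
from statistics import mean
from typing import Sequence


def runs_within_budget(run_minutes: float, budget_minutes: float = 10.0) -> int:
    """Count how many runs are started before the time budget has passed.

    Assumption: a new run starts only while the elapsed time is still
    below the budget, and every started run is allowed to complete.
    """
    elapsed = 0.0
    runs = 0
    while elapsed < budget_minutes:
        elapsed += run_minutes  # each run takes a fixed amount of time
        runs += 1
    return runs


def average_fps(per_run_fps: Sequence[float]) -> float:
    """Average the per-run results for one resolution/setting combination."""
    return mean(per_run_fps)


# A 7-minute benchmark scene against a 10-minute budget:
# the first run ends at 7 minutes (below 10), so a second run starts.
seven_minute_runs = runs_within_budget(7.0)
```

With a 7-minute scene, `runs_within_budget(7.0)` gives 2, matching the two runs per setting described above. Feeding two placeholder results such as `average_fps([100.0, 110.0])` would then report their mean as the figure for that setting.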

| AnandTech | Low Resolution Low Quality | Medium Resolution Low Quality | High Resolution Low Quality | Medium Resolution Max Quality |
|---|---|---|---|---|
| Average FPS |  |  |  |  |

As the resolution increases, the 11900K's average frame rate seems to improve relative to the other chips, but with the quality increased it falls back down again, coming in behind the older Intel CPUs.

All of our benchmark results can also be found in our benchmark engine, Bench.

279 Comments


mitox0815 - Tuesday, April 13, 2021 - link

Discounting the possibility of great design ideas just because past attempts failed to varying degrees is a bit premature, methinks. But it does seem odd that it's constantly P6-esque design philosophies - VERY broadly speaking here - that take the prize in the end when it comes to x86.

blppt - Tuesday, March 30, 2021 - link

Even Jim Keller, the genius who designed the original x64 AMD chip, AND bailed out AMD with the excellent Zen, didn't last very long at Intel. Might be an indicator of how messed up things are there.

BushLin - Tuesday, March 30, 2021 - link

It's still possible that a yet to be released Jim Keller designed Intel CPU finally delivers a meaningful performance uplift in the next few years... I wouldn't bet on it but it isn't impossible either.

philehidiot - Tuesday, March 30, 2021 - link

Indeed, it's a generation out. It's called "Intel Dynamic Breakfast Response". It goes "ding" when your bacon is ready for turning, rather than BSOD.

Hifihedgehog - Tuesday, March 30, 2021 - link

Raja Koduri is a terrible human being and has wasted money on party buses and booze while “managing” his side of the house at Intel. I think Jim Keller knew the corporation was a big pander fest of bureaucracy and was smart to leave when he did. The chiplet idea he brought to the table, while not an innovation since AMD already was first to market, will help them to stay in the game, which wouldn’t have happened if he hadn’t contributed it.

Oxford Guy - Saturday, April 3, 2021 - link

Oh? Firstly, I doubt he was the exec at AMD who invented the FX 9000 series scam. Secondly, AMD didn’t beat Nvidia for performance per watt but the Fury X coming with an AIO was a great improvement in performance per decibel, an important metric that is frequently undervalued by the tech press.

What he deserves the most credit for, though, is making GPUs that made miners happy. Fact is that AMD is a corporation not a charity. And, not only is it happy to sell its entire stock to miners, it is pleased to compete against PC gamers by propping up the console scam.

mitox0815 - Tuesday, April 13, 2021 - link

The first to the x86 market, yes. Chiplets - or modules, however you wanna call them - are MUCH much older than that. Just as AMD64 wasn't the stroke of genius it's made out to be by AMD diehards...they just repeated the trick Intel pulled off with their jump to 32 bit on the 386. Not even multicores were AMD's invention...I think both multicore CPUs and chiplet designs were done by IBM before.

The same goes for Intel though, really. Or Microsoft. Or Apple. Or most other big players. Adopting ideas and pushing them with your market weight seems to be much more of a success story than actually innovating on your own...innovation pressure is always on the underdogs, after all.

KAlmquist - Wednesday, April 7, 2021 - link

The tick-tock model was designed to limit the impact of failures. For example, Broadwell was delayed because Intel couldn't get 14nm working, but that didn't matter too much because Broadwell was the same architecture as Haswell, just on a smaller node. By the time the Skylake design was completed, Intel had fixed the issues with 14nm and Skylake was released on schedule.

What happened next indicates that people at Intel were still following the tick-tock model but had not internalized the reasoning that led Intel to adopt the tick-tock model in the first place. When Intel missed its target for 14nm, that meant it was likely that 10nm would be delayed as well. Intel did nothing. When the target date for 10nm came and went, Intel did nothing. When the target date for Sunny Cove arrived and it couldn't be released because the 10nm process wasn't there, Intel did nothing. Four years later, Intel has finally ported it to 14nm.

If Intel had been following the philosophy behind tick-tock, they would have released Rocket Lake in 2017 or 2018 to compete with Zen 1. They would have designed a new architecture prior to the release of Zen 3. The only reason they'd be trying to pit a Sunny Cove variant against Zen 3 would be if their effort to design a new architecture failed.

Khenglish - Tuesday, March 30, 2021 - link

I've said it before but I'll say it again. Software render Crysis by setting the benchmark to use the GPU, but disable the GPU driver in the device manager. This will cause Crysis to use the built-in Windows 10 software renderer, which is much newer and should be more optimized than the Crysis software renderer. It may even use AVX and AVX2, which Crysis certainly is too old for.

Adonisds - Tuesday, March 30, 2021 - link

Great! Keep doing those Dolphin emulator tests. I wish there were even more emulator tests.