The Ampere Altra Review: 2x 80 Cores Arm Server Performance Monster

by Andrei Frumusanu on December 18, 2020 6:00 AM EST- Posted in

- Servers

- Neoverse N1

- Ampere

- Altra

Compiling LLVM, NAMD Performance

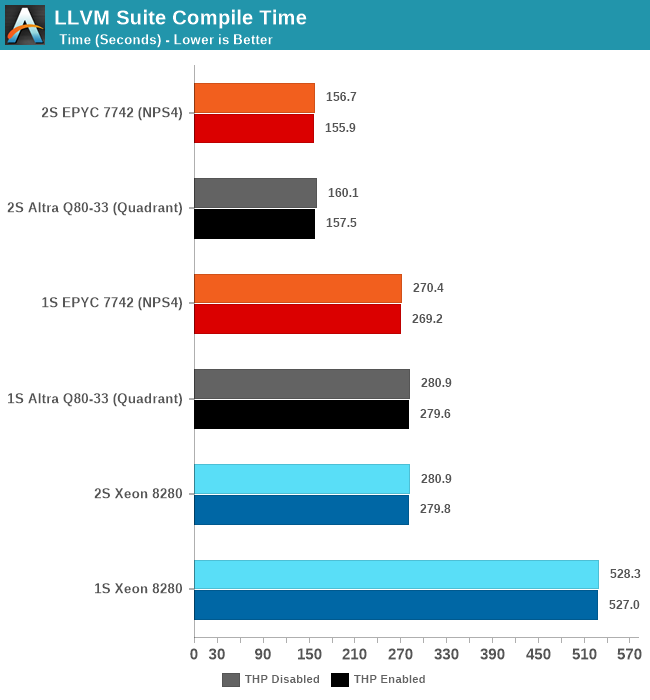

As we’re trying to rebuild our server test suite piece by piece – and there’s still a lot of work ahead to get a good, representative “real world” set of workloads – one more highly desired benchmark amongst readers was a more realistic compilation suite. With the Chrome and LLVM codebases being the most requested, I landed on LLVM as it’s fairly easy to set up and straightforward.

    git clone https://github.com/llvm/llvm-project.git
    cd llvm-project
    git checkout release/11.x
    mkdir ./build
    cd ..
    mkdir llvm-project-tmpfs
    sudo mount -t tmpfs -o size=10G,mode=1777 tmpfs ./llvm-project-tmpfs
    cp -r llvm-project/* llvm-project-tmpfs
    cd ./llvm-project-tmpfs/build
    cmake -G Ninja \
      -DLLVM_ENABLE_PROJECTS="clang;libcxx;libcxxabi;lldb;compiler-rt;lld" \
      -DCMAKE_BUILD_TYPE=Release ../llvm
    time cmake --build .

We’re using the LLVM 11.0.0 release as the build target version, and we’re compiling Clang, libc++, libc++abi, LLDB, Compiler-RT and LLD using GCC 10.2 (self-compiled). To avoid any concerns about I/O we’re building things on a ramdisk – on a 4KB page system 5GB should be sufficient, but on the Altra’s 64KB page system it used up to 9.5GB, including the source directory. We’re measuring the actual build time and don’t include the configuration phase, as in the real world that usually doesn’t happen repeatedly.
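Since the ramdisk footprint above differs so much between 4KB and 64KB page systems, it can be handy to check both the page size and the actual space used before settling on a tmpfs size. The following is only a minimal sketch: `dir_usage_mib` is a hypothetical helper name introduced here for illustration, not something from the original test setup.

```shell
# Print the kernel page size in bytes
# (4096 on a 4KB page kernel, 65536 on a 64KB page kernel).
page_size=$(getconf PAGESIZE)
echo "page size: ${page_size} bytes"

# Hypothetical helper: report the space used by a directory tree, in MiB.
dir_usage_mib() {
    du -sm "$1" | cut -f1
}
```

After a build, running something like `dir_usage_mib ./llvm-project-tmpfs` would show how close the tree came to the 10G limit set in the mount command.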

The Altra Q80-33 here performs admirably and pretty much matches the AMD EPYC 7742 both in 1S and 2S configurations. There isn’t perfect scaling between sockets because, this being an actual build process, it also includes linking phases which are mostly bound by single-threaded performance.
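The effect of those serial linking phases on socket scaling can be illustrated with a simple Amdahl-style model. This is a sketch under stated assumptions, not a fit to the measured results: $f$ is a hypothetical fraction of the 1S build time spent in single-threaded work, and the remainder is assumed to scale perfectly with core count.

$$
\frac{T_{1S}}{T_{2S}} = \frac{1}{f + \frac{1 - f}{2}} = \frac{2}{1 + f}
$$

With an illustrative (not measured) $f = 0.2$, the second socket would deliver $2 / 1.2 \approx 1.67\times$ rather than $2\times$, and the ratio only approaches $2\times$ as $f$ approaches zero.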

Generally, it’s interesting to see that the Altra here fares better than in the SPEC 502.gcc_r MT test – suggesting that real codebases might not be quite as demanding as the 502 reference source files, as they include a more diverse set of smaller files and objects that are compiled concurrently.

NAMD

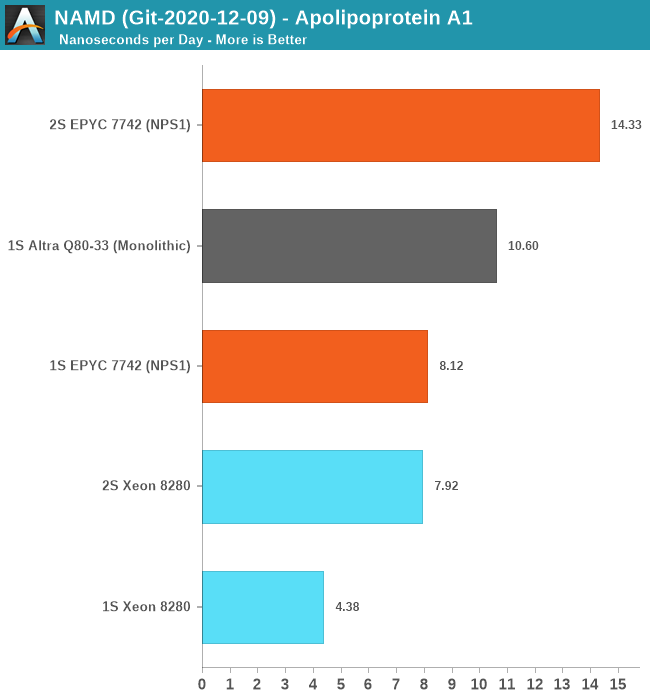

Another rather popular benchmark tool, which we’ve actually seen used by vendors such as AMD in their marketing materials when showcasing HPC performance for their server chips, is NAMD. This was actually quite an interesting adventure in terms of compiling the tool for AArch64, as there is essentially little to no proper support for it. I’ve used the latest source drop, essentially the 2.15alpha / 3.0alpha tree, and compiled it from scratch on GCC 10.2 using each platform’s respective -march and -mtune targets.
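To make the per-platform -march and -mtune targets more concrete, here is a minimal sketch of how such flags might be selected. The exact values used for the review are not stated, so everything in this block is an assumption: `gcc_flags_for` is a hypothetical helper, and the flag values are simply GCC target names that correspond to the three CPUs tested.

```shell
# Hypothetical helper mapping a platform label to GCC tuning flags.
# The flag values are assumptions, not the review's confirmed settings.
gcc_flags_for() {
    case "$1" in
        altra) echo "-march=armv8.2-a -mtune=neoverse-n1" ;;    # Neoverse N1
        epyc)  echo "-march=znver2 -mtune=znver2" ;;            # Zen 2
        xeon)  echo "-march=cascadelake -mtune=cascadelake" ;;  # Cascade Lake
        *)     echo "-march=native -mtune=native" ;;            # let GCC detect the host
    esac
}

echo "flags for the Altra: $(gcc_flags_for altra)"
```

On AArch64, GCC expects an architecture name for -march and a CPU name for -mtune, which is why the Altra entry uses two different values.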

For the Xeon 8280 – I did not use the AVX512 back-end for practical reasons: the code which introduces an AVX512 algorithm, and which was contributed by Intel engineers to NAMD, has no portability to compilers other than ICC. Beyond this being a code-path that has no relation to the “normal” CPU algorithm – the reliance on ICC is something that definitely made me raise my eyebrows. How to weigh a benchmark’s real-world performance against having a fair, apples-to-apples comparison is a whole other discussion topic. It’s something to revisit in the future as I invest more time into looking at the code to see if I can port it to GCC or LLVM.

For the single-socket numbers – we’re using the multicore variant of the tool which has predictable scaling across a single NUMA node. Here, the Ampere Altra Q80-33 performed amazingly well and managed to outperform the AMD EPYC 7742 by 30% - signifying this is mostly a compute-bound workload that scales well with actual cores.

For the 2S figures, using the multicore binaries results in non-deterministic performance – the Altra here regressed to 2ns/day and the EPYC system also crashed down to 4ns/day – oddly enough, the Xeon system had absolutely no issue running this properly, as it had excellent performance scaling and actually outperformed the MPI version. The 2S EPYC scales well with the MPI version of the benchmark, as expected.

Unfortunately, I wasn’t able to compile an MPI version of NAMD for AArch64 as the codebase kept running into issues and it had no properly maintained build target for this. In general, I felt like I was amongst the first people to ever attempt this, even though there are some resources to attempt to help out on this.

I also tried running Blender on the Altra system but that ended up with so many headaches I had to abandon the idea – on CentOS there were only some really old build packages available in the repository. Building Blender from source on AArch64 with all of its dependencies ends up in a plethora of software packages which simply assume you’re running on x86 and rely on basic SSE intrinsics – easy enough to fix that in the makefiles, but then I hit some other compilation errors after which I lost my patience. Fedora Linux seemed to be the only distribution offering an up-to-date build package for Blender – but I stopped short of reinstalling the OS just to benchmark Blender.

So, while AArch64 has made great strides in the past few years – and the software situation might be quite good for server workloads – it’s not all rosy, and we still have a ways to go before it can be considered a first-class citizen in the software ecosystem. Hopefully Apple’s introduction of Apple Silicon Macs will accelerate the Arm software ecosystem.

148 Comments

Wilco1 - Saturday, December 19, 2020 - link

SMT gives very little benefit (only 15% faster on SPECINT and 3.5% *slower* on SPECFP), adds a lot of area and complexity, and results in very bad per-thread performance.

It's always better to use 2 real cores instead of 1 SMT core. So if you have small cores like Neoverse N1, adding SMT makes no sense at all.

mode_13h - Sunday, December 20, 2020 - link

Whether SMT makes sense depends on the workload. Many tasks have greater branch-density and less computational density, in which case SMT is a massive win. I'm compiling code all the time and see huge speedups from SMT.

That said, of course I'll take two real cores instead of 2-way SMT, all else being equal, but that always costs more. If SMT really made as little sense as you say, then it wouldn't be nearly so widespread.

Wilco1 - Monday, December 21, 2020 - link

There are certainly cases where SMT helps, but having some wins doesn't mean it is worth adding SMT. All too often people talk up the upsides and ignore the downsides. Let's see how Altra Max does vs Milan next year, that should answer which is best.

Note almost none of the billions of CPUs sold every year have SMT (even if we exclude embedded). Adding another core is simpler, cheaper and gives more performance.

mode_13h - Monday, December 21, 2020 - link

> Let's see how Altra Max does vs Milan next year, that should answer which is best.

I disagree. That's a bit apples and oranges. The differences between Zen2/Skylake and N1 are too big. You need to look at the overhead of SMT for a particular core vs. the benefits for that core.

These x86 cores are large not just because of SMT, but also the x86 tax, their wide vector units, and other things. It could be that SMT adds just 5% overhead, and that's not enough to increase your core count hardly at all, if you drop it.

Wilco1 - Monday, December 21, 2020 - link

It's never going to be a perfect comparison - nobody will design SMT and non-SMT variants of the same core! There are many differences because they use different design principles. However it will clearly show which of these designs works out best for top-end server performance.

Spunjji - Monday, December 21, 2020 - link

SMT is a clear win where you already have large cores with a lot of execution resources, whereby the extra resources required aren't a large proportion of overall die area. It also helps if your tasks are focused on overall performance for a given number of threads, rather than performance-per-thread.

Where the cores are this small, though, simply adding more of them seems to be the better option.

mode_13h - Monday, December 21, 2020 - link

Well said.

mode_13h - Sunday, December 20, 2020 - link

Plus, per-thread performance is only an issue if that's how you're paying for CPU time, and then what you actually care about is thread performance per dollar, which would compensate for any cost differences due to SMT, as well.

Wilco1 - Monday, December 21, 2020 - link

And Graviton 2 shows which is cheaper and faster.

mode_13h - Monday, December 21, 2020 - link

That comparison is only valid for Amazon customers and in the short term. It can't be used to support a broader conclusion about SMT, because we lack transparency into the cost structure of Amazon's hosting, like whether they're subsidizing Graviton2 servers or even just charging enough for them to simply break even on the hardware.