The Ampere Altra Review: 2x 80 Cores Arm Server Performance Monster

by Andrei Frumusanu on December 18, 2020 6:00 AM EST

Posted in: Servers, Neoverse N1, Ampere, Altra

SPEC - Multi-Threaded Performance

While the single-threaded numbers were interesting, what we’re all looking for are the multi-core scores, and what exactly 80 Neoverse-N1 cores can achieve within one socket and across two.

The performance measurements here were limited to quadrant and NPS4 configurations, as those are the default settings the Altra system came with, and also what AMD usually says customers want to deploy in production, achieving better performance by reducing cross-chip memory traffic.

The main comparison point here against the Q80-33 is AMD’s EPYC 7742 – 80 cores versus 64 cores with SMT, as well as similar TDPs. Intel’s Xeon 8280 with its 28 cores also comes into play but isn’t a credible competitor against the newer generation silicon designs on 7nm.

I’m keeping the detailed result sets limited to single-socket figures; we’ll check out the dual-socket numbers later in the aggregate chart, where the 2S figures are essentially 2x the single-socket performance.

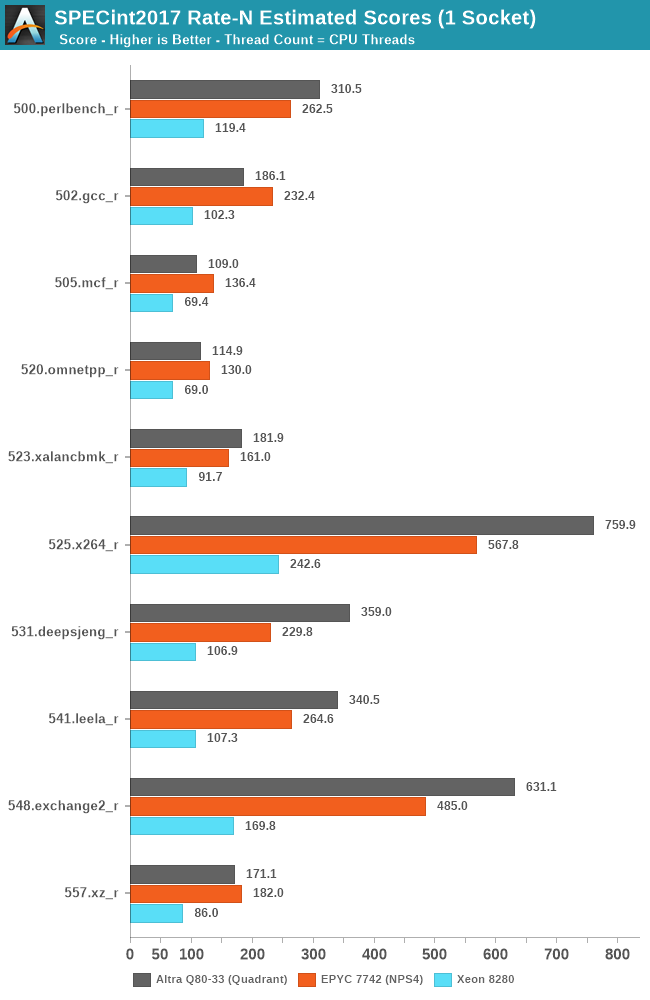

Starting off with SPECint2017, we’re seeing some absolutely smashing figures here from the Altra Q80-33, with several workloads where the chip significantly outperforms the EPYC 7742, though it also loses in some others.

Taking the losing workloads first: gcc, mcf, and omnetpp all have either high cache pressure or high memory requirements.

The Altra losing out in 502.gcc_r doesn’t come as much of a surprise, as we also saw the Graviton2 suffering in this workload: the 1MB per core of L2 and 400KB per core of shared L3 really isn’t much, and pales against the 4MB/core that’s available to the EPYC’s Zen2 cores. The Altra going from 2.5GHz to 3.3GHz and from 64 cores to 80 cores only improves the score from 176.9 to 186.1 in comparison to the Graviton2. I’m not including the Graviton2 in the charts as it’s not quite an apples-to-apples comparison due to differing compilers and run environments, but one can look up the scores in that review.
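To put the 502.gcc_r result in perspective, here is a rough back-of-the-envelope sketch using only the figures quoted above. It compares the measured improvement against a naive upper bound that assumes throughput scales linearly with both core count and clock frequency. That linear model is an assumption for illustration only, and the Graviton2 comparison carries the compiler and environment caveats already mentioned.

```python
# Figures quoted in the text for 502.gcc_r
graviton2 = {"cores": 64, "ghz": 2.5, "gcc_r": 176.9}
altra = {"cores": 80, "ghz": 3.3, "gcc_r": 186.1}

# Naive upper bound (assumption): perfectly linear scaling with cores and clock
ideal_scaling = (altra["cores"] / graviton2["cores"]) * (altra["ghz"] / graviton2["ghz"])

# What the scores actually show
actual_scaling = altra["gcc_r"] / graviton2["gcc_r"]

print(f"ideal scaling:  {ideal_scaling:.2f}x")
print(f"actual scaling: {actual_scaling:.3f}x")
```

The gap between the two ratios is consistent with the workload being limited by cache and memory rather than by core count or frequency.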

Where the Altra does shine however is in more core-local workloads that are more compute oriented and have less of a memory footprint, of which we see quite a few here, such as 525.x264.

What’s really interesting here is that the latter tests in the suite are extremely friendly to SMT scaling on the x86 systems, with 531, 541, 548 and 557 gaining 30, 43, 25 and 36% respectively in MT performance from the second thread per core. Even so, AMD’s Rome CPU still manages to lose to the Altra system by considerable amounts, being favoured only in 557.xz_r, and there by a slight margin. So while SMT helps, it’s not enough to counteract the raw 25% core count advantage of the Altra system when comparing 80 vs 64 cores.
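One way to read these numbers is to ask how much per-core throughput an Altra core would need, relative to an EPYC core running a single thread, for the two sockets to tie once SMT is enabled. The sketch below uses a simplified model that is an assumption, not the article's methodology: socket throughput is taken as cores times per-core throughput, with the quoted SMT uplift applied on the EPYC side.

```python
ALTRA_CORES = 80
EPYC_CORES = 64

# SMT uplift in MT performance quoted in the text, keyed by SPEC test number
smt_uplift = {"531": 0.30, "541": 0.43, "548": 0.25, "557": 0.36}

def break_even_ratio(uplift):
    """Altra per-core throughput, relative to one EPYC core running a single
    thread, at which both sockets would tie under the simplified model."""
    return EPYC_CORES * (1 + uplift) / ALTRA_CORES

break_even = {test: break_even_ratio(u) for test, u in smt_uplift.items()}

for test, ratio in break_even.items():
    print(f"{test}: Altra core needs {ratio:.3f}x an EPYC core (1T) to tie")
```

Under this model, a 25% SMT uplift exactly cancels the 25% core count advantage, so any test where the Altra wins despite a larger uplift implies stronger per-core throughput under full load.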

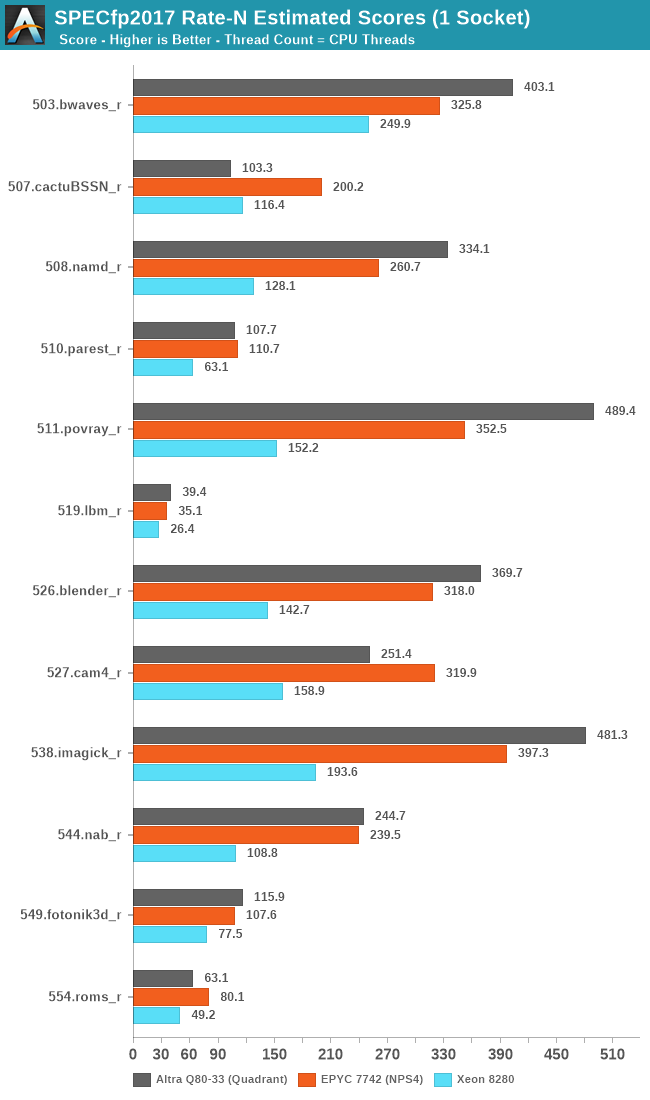

In SPECfp2017, things are also looking favourable for the Altra, although the margins aren’t as large, except in 511.povray where the raw core count again comes into play.

The Altra again showcases really bad performance in 507.cactuBSSN_r, mirroring the lacklustre single-threaded scores and showing worse performance than a Graviton2 by considerable amounts.

The Arm design does well in 503.bwaves, which is fairly high-IPC as well as bandwidth-hungry, but falls behind in other bandwidth-hungry workloads such as 554.roms_r, which has more sparse memory stores.

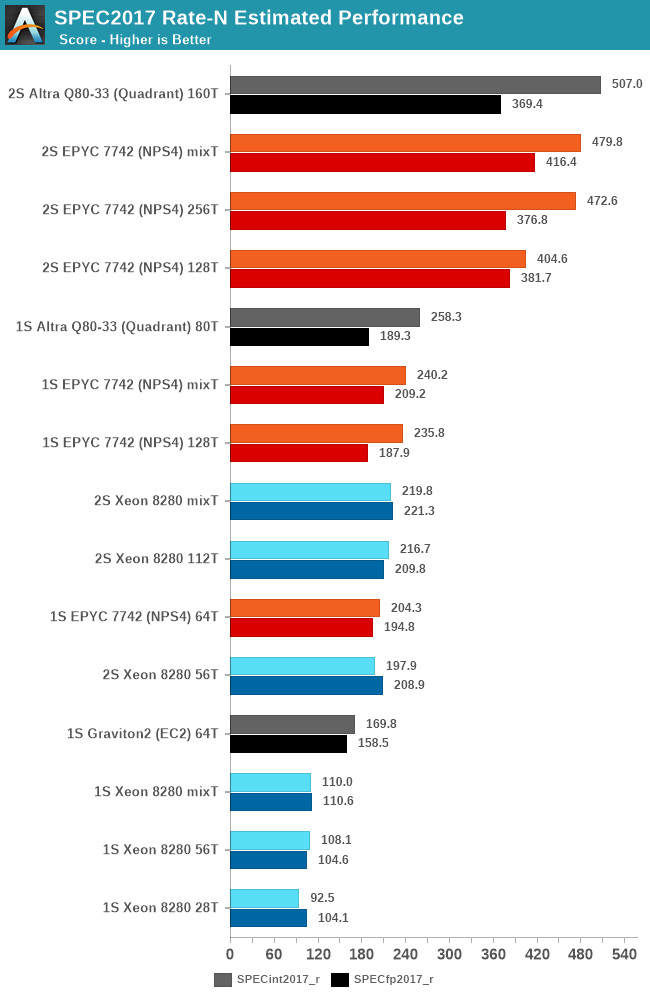

In the overall scores, both across single-socket and dual-socket systems, the new Altra Q80-33 performs outstandingly well, actually edging out the EPYC system by a small margin in SPECint, though it’s losing out in SPECfp and more cache-heavy workloads.

Beyond testing 1-socket and 2-socket scores, I’ve also taken the opportunity of this new round of testing across the various systems to test out 1 thread per core and 2 threads per core scores across the SMT systems.

While there are definitely workloads that scale well with SMT, overall the technology has a more modest impact across the suite, averaging out at a 15% uplift for both EPYC and Xeon.

One thing we don’t usually do in the way we run SPEC is mix together rate figures with different thread counts, but with such large core and thread counts it’s something I didn’t want to leave out of this piece. The “mixT” result set takes the best performing sub-score of either the 1T or 2T/core runs for a higher overall aggregate. Officially submitted SPEC scores usually do this by default in their _peak submissions, while we usually run _base comparative scores. Even with this best-case methodology for the SMT systems, the Altra system still slightly edges out the performance of the EPYC 7742.
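The “mixT” selection described above can be sketched as follows. The benchmark names and sub-scores are made-up placeholders, not measured results, and the aggregate is assumed to be a geometric mean of the sub-scores, which is how SPEC's own overall metrics are computed.

```python
from math import prod

def geomean(values):
    """Geometric mean of a collection of positive scores."""
    values = list(values)
    return prod(values) ** (1 / len(values))

# Hypothetical sub-scores for one SMT system (placeholders, not real data)
scores_1t = {"bench_a": 100.0, "bench_b": 200.0, "bench_c": 150.0}
scores_2t = {"bench_a": 130.0, "bench_b": 180.0, "bench_c": 165.0}

# mixT: per benchmark, keep whichever thread configuration scored higher
mix_t = {name: max(scores_1t[name], scores_2t[name]) for name in scores_1t}

print(f"1T/core aggregate: {geomean(scores_1t.values()):.1f}")
print(f"2T/core aggregate: {geomean(scores_2t.values()):.1f}")
print(f"mixT aggregate:    {geomean(mix_t.values()):.1f}")
```

Because every mixT sub-score is at least as high as the corresponding 1T and 2T sub-scores, the mixT aggregate can never be lower than either of the two plain aggregates.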

Intel’s Cascade Lake Xeon system here really isn’t of any consideration in the competitive landscape as a single-socket Altra system will outperform a dual-socket Xeon.

The Altra QuickSilver still has one weakness, and that’s cache-heavy workloads: 32MB of L3 for 80 cores really isn’t nearly enough to keep up performance scaling across that many cores. At the end of the day, however, it’s up to Ampere’s customers to give input on what kind of workloads they use and whether they stress the caches or not. Given that both Amazon and Ampere chose the minimum cache configuration for their mesh implementations, maybe that’s not the case?

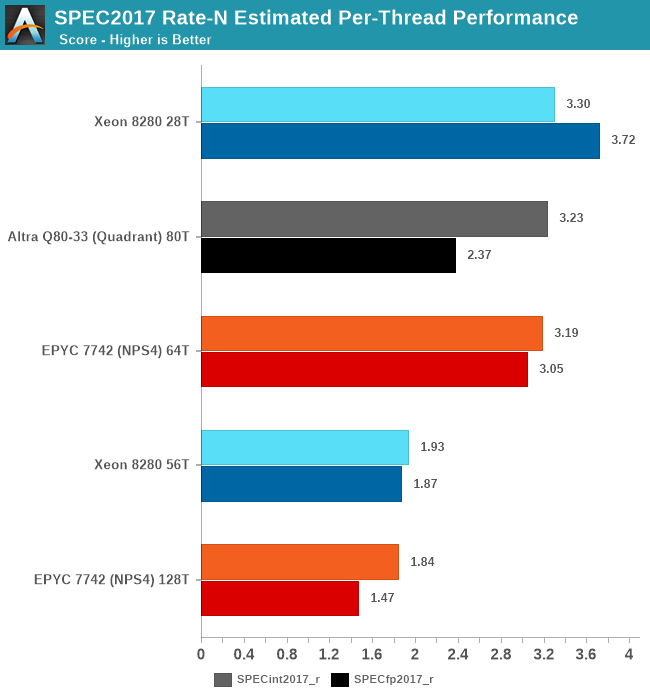

Finally, one last figure I wanted to showcase is the per-thread performance of the different designs. While scaling out multi-threaded performance across a vast number of cores is a very important way to scale performance, it’s also important not to take a “flock of chickens” approach with cores that are too weak. Especially for the customers Ampere is targeting, such as enterprise and cloud service providers, users will often be running things on a subset of a given processor socket’s cores, so per-core and per-thread performance remains a very important metric.

Simply dividing the single-socket performance figures by the number of threads run, we get an average per-thread performance figure in the context of a fully loaded system, a figure that’s actually more realistic than the single-thread figures of the previous page, where the rest of the CPU cores in the systems are doing nothing.

In this regard, Intel’s Xeon offering is still extremely competitive and actually takes the lead position here – although its low core count doesn’t favour it at all in the full throughput metrics of the socket, the per-thread performance is still the best amongst the current CPU offerings out there.

In SPECint, the Altra, EPYC and Xeon are all essentially tied in performance, whilst in SPECfp the Xeon takes the lead with the Altra falling notably behind – with the EPYC Rome chip falling in-between the two.

If per-thread performance is important to you, then obviously enough SMT isn’t an option, as it vastly regresses per-thread performance in exchange for a chance at more aggregate performance across multiple threads. There are many vendors and enterprise use-cases which for this reason just outright disable SMT.
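The per-thread division described above, and the SMT trade-off it exposes, can be illustrated with a small sketch. The baseline score of 100.0 is an arbitrary placeholder rather than a measurement. The 2T/core score applies the roughly 15% suite-average SMT uplift quoted earlier, and the thread counts are those of a 64-core EPYC 7742.

```python
def per_thread(score, threads):
    """Average per-thread score on a fully loaded socket."""
    return score / threads

SMT_UPLIFT = 0.15                      # suite-average uplift quoted in the text

score_1t = 100.0                       # placeholder 1T/core socket score
score_2t = score_1t * (1 + SMT_UPLIFT)  # same socket with 2 threads per core

pt_1t = per_thread(score_1t, 64)       # 64 cores, 1 thread each
pt_2t = per_thread(score_2t, 128)      # 64 cores, 2 threads each

relative = pt_2t / pt_1t
print(f"per-thread score with SMT on: {relative:.3f}x of SMT off")
```

With an average uplift of this size, each thread delivers a little over half of what a lone thread on the same core would, which is why the per-thread figures for 2T/core runs look so much weaker than the socket totals suggest.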

148 Comments

Brane2 - Saturday, December 19, 2020 - link

Meh. Nothing special. it has been benchmarked on Phoronix and it performed more or less on par with Rome. 80 newest ARM cores against 64 mature x86 cores within constrained power envelope.

Naples is just about to come out and I suspect some time after that AMD will have something like really wide new RISC-V cores.

Wilco1 - Saturday, December 19, 2020 - link

It won most benchmarks on Phoronix while using significantly less power. Yes Milan is about to be released, and it will have to compete with the 128-core Altra Max. Which do you believe is going to win - 64 SMT cores or 128 real cores?

mode_13h - Sunday, December 20, 2020 - link

It actually won less than half of the benchmarks on phoronix, since a number of those graphs just re-state the results in score/W. There are also questions over some of the compiler options used on those benchmarks, since many of the tests are compiled with options that won't enable AVX on benchmarks where it should be beneficial (yet, not having SVE, the N1 cores are at no such disadvantage).

Wilco1 - Monday, December 21, 2020 - link

"should be beneficial" -> "might help in a few limited cases". AVX/AVX512 isn't that useful for general C/C++ code. You typically only see large gains when people optimize using intrinsics.

mode_13h - Monday, December 21, 2020 - link

Intrinsics don't compile if they're for a CPU arch beyond what the compiler is being instructed to target. So, even packages where people take the time to optimize with intrinsics need to guard them with compile-time checks to ensure the CPU target is capable of executing those instructions.

Compilers do generate vectorized code. I don't know how well GCC is doing on that front, lately, but the TNN tests should be a good way to see that. Too bad those tests don't use -march=native.

What's interesting about TNN is I'm looking at the exact source revision Phoronix is using, and it seems they've completely dropped their backend for x86. The source/tnn/device/x86/ is simply missing. So, I wonder if they decided the compiler was good enough that they didn't need to bother with their own hand-optimized code for it, or if they just decided they don't care how fast their stuff runs on it.

See:

* https://openbenchmarking.org/innhold/83a730ed41d4e...

* https://github.com/Tencent/TNN/tree/v0.2.3

Wilco1 - Monday, December 21, 2020 - link

TNN does not benefit from -march=native. Phoronix uses the generic C++ version which doesn't benefit from vectorization. Try it yourself.

Optimized versions using intrinsics typically use runtime checks so you automatically get the fastest version that works on your CPU. The makefile selects the right ISA variant for any files using intrinsics. But none of this is used in the TNN test.

mode_13h - Monday, December 21, 2020 - link

> TNN does not benefit from -march=native. Phoronix uses the generic C++ version which doesn't benefit from vectorization. Try it yourself.

At this point, I probably will.

> Optimized versions using intrinsics typically use runtime checks so you automatically get the fastest version that works on your CPU.

That's a whole additional level of effort for the developers. For them to bother compiling and conditionally calling different versions only makes sense if they think their main userbase aren't going to bother recompiling specifically for their hardware. In the case of specialized packages, it's reasonable to expect your users to take a little trouble for the best performance. It's really things like very low-level libs or multimedia code where you tend to see the sort of elaborate runtime detection and dynamic codepath selection that you're describing.

mode_13h - Monday, December 21, 2020 - link

I think Basis Universal and High Performance Conjugate Gradient are some other cases where the wider SIMD of Zen2 and Skylake-SP should confer significant benefit.

Wilco1 - Monday, December 21, 2020 - link

"should give significant benefit" -> "might give some benefit". I suggest you try out. Autovectorization is not nearly as good as you seem to believe, and the overall speedup is often disappointing even if some loops are 10-20x faster.

vinayshivakumar - Saturday, December 19, 2020 - link

I am a bit puzzled why none of these processors support SMT... Can someone shed light on why this is the case ?