AMD Ryzen 5 3600 Review: Why Is This Amazon's Best Selling CPU?

by Dr. Ian Cutress on May 18, 2020 9:00 AM EST

CPU Performance: Encoding Tests

With the rise of streaming, vlogs, and video content as a whole, encoding and transcoding tests are becoming ever more important. More home users and gamers need to convert video files into something more manageable for streaming or archival purposes, and the servers that handle the output also manage data and log files with compression and decompression. Our encoding tests focus on these scenarios, with input from the community on the best implementation of real-world testing.

All of our benchmark results can also be found in our benchmark engine, Bench.

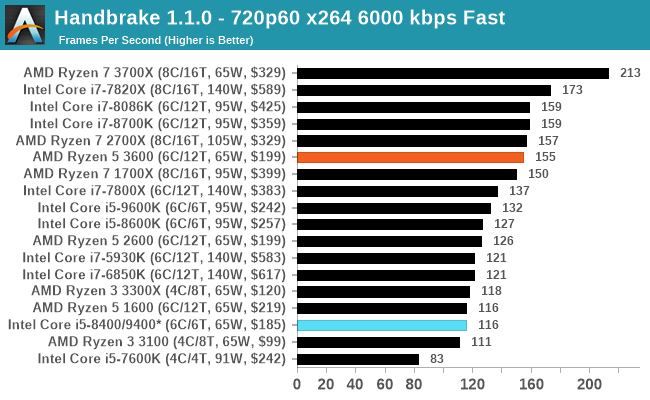

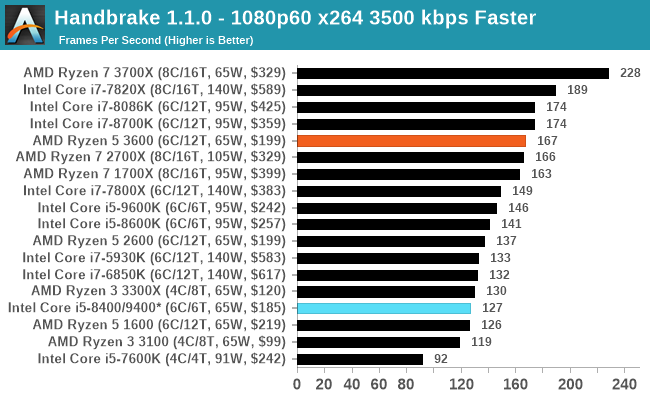

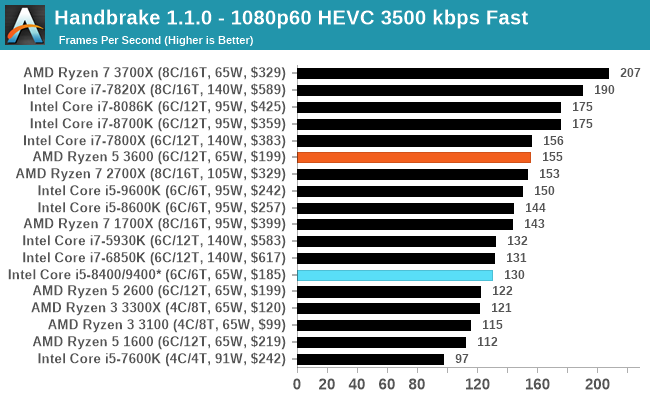

Handbrake 1.1.0: Streaming and Archival Video Transcoding

A popular open-source tool, Handbrake is the anything-to-anything video conversion software that a number of people use as a reference point. The danger always lies in version numbers and optimization: for example, the latest versions of the software can take advantage of AVX-512 and OpenCL to accelerate certain types of transcoding and algorithms. The version we use here is a pure CPU play, with common transcoding variations.

We have split Handbrake up into several tests, using a Logitech C920 1080p60 native webcam recording (essentially a streamer recording), which we convert into two types of streaming formats and one for archival. The output settings used are:

- 720p60 at 6000 kbps constant bit rate, fast setting, high profile

- 1080p60 at 3500 kbps constant bit rate, faster setting, main profile

- 1080p60 HEVC at 3500 kbps variable bit rate, fast setting, main profile

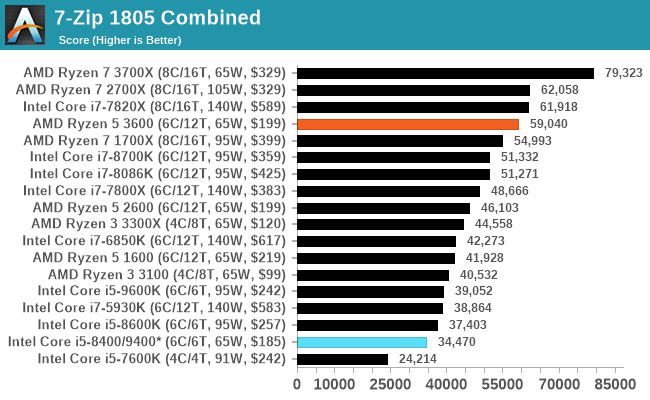

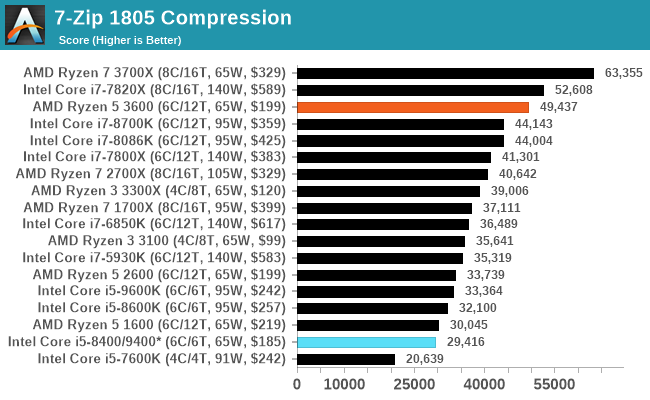

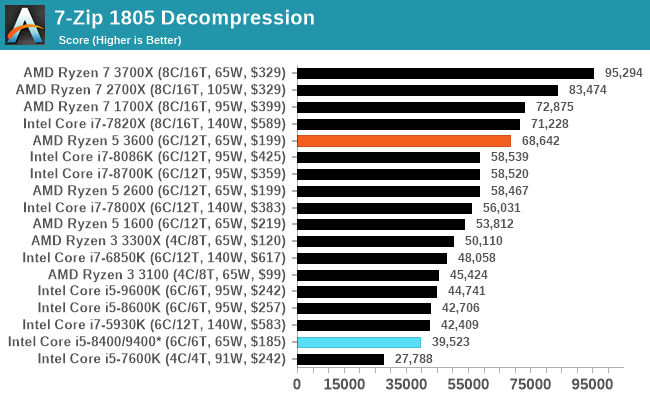

7-zip v1805: Popular Open-Source Encoding Engine

Out of our compression/decompression tool tests, 7-zip is the most requested and comes with a built-in benchmark. For our test suite, we’ve pulled the latest version of the software and we run the benchmark from the command line, reporting the compression, decompression, and a combined score.
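As a rough illustration of running the built-in benchmark from the command line, the sketch below wraps the `7z b` command in Python. The executable name `7z`, the helper names, and the optional thread count passed via `-mmt` are assumptions for illustration; the review does not state the exact invocation used.

```python
import shutil
import subprocess
from typing import List, Optional


def benchmark_command(executable: str = "7z", threads: Optional[int] = None) -> List[str]:
    """Build the argument list for 7-Zip's built-in benchmark (`7z b`)."""
    cmd = [executable, "b"]
    if threads is not None:
        # -mmtN limits the benchmark to N threads (assumed option for this sketch).
        cmd.append(f"-mmt{threads}")
    return cmd


def run_benchmark(executable: str = "7z", threads: Optional[int] = None) -> Optional[str]:
    """Run the benchmark and return its raw text output, or None if 7-Zip is not installed."""
    if shutil.which(executable) is None:
        return None
    result = subprocess.run(
        benchmark_command(executable, threads),
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout
```

The raw output contains the compression and decompression ratings; how those are combined into a single score is left out here because the review does not describe it.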

In this benchmark, the latest multi-die processors show very bimodal performance between compression and decompression, performing well in one and badly in the other. There are also discussions around how the Windows Scheduler is handling every thread. As we get more results, it will be interesting to see how this plays out.

Please note, if you plan to share out the Compression graph, please include the Decompression one. Otherwise you’re only presenting half a picture.

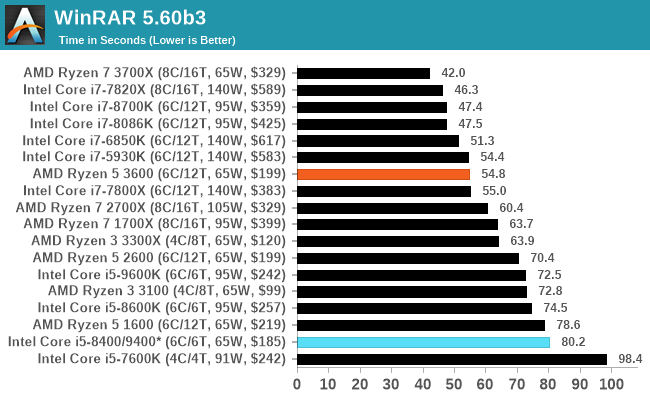

WinRAR 5.60b3: Archiving Tool

My compression tool of choice is often WinRAR, as it was one of the first tools a number of my generation used over two decades ago. The interface has not changed much, although the integration with Windows right-click commands is always a plus. It has no built-in test, so we run a compression over a set directory containing over thirty 60-second video files and 2000 small web-based files at a normal compression rate.

WinRAR is variable threaded but also susceptible to caching, so in our test we run it 10 times and take the average of the last five, leaving the test purely for raw CPU compute performance.
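The run-ten-times, average-the-last-five approach can be sketched as a small timing harness. This is a minimal sketch, not the review's actual script: `time_runs`, `warm_average`, and the `task` callable (which would wrap the real compression job) are hypothetical names.

```python
import statistics
import time
from typing import Callable, List


def time_runs(task: Callable[[], None], runs: int = 10) -> List[float]:
    """Run `task` `runs` times and return each wall-clock duration in seconds."""
    durations = []
    for _ in range(runs):
        start = time.perf_counter()
        task()
        durations.append(time.perf_counter() - start)
    return durations


def warm_average(durations: List[float], keep: int = 5) -> float:
    """Average only the last `keep` durations, discarding the earlier warm-up runs."""
    if keep <= 0 or keep > len(durations):
        raise ValueError("keep must be between 1 and the number of runs")
    return statistics.mean(durations[-keep:])
```

Discarding the first five runs means the input files are likely already cached by the time the measured runs start, so the reported average reflects CPU work rather than storage speed.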

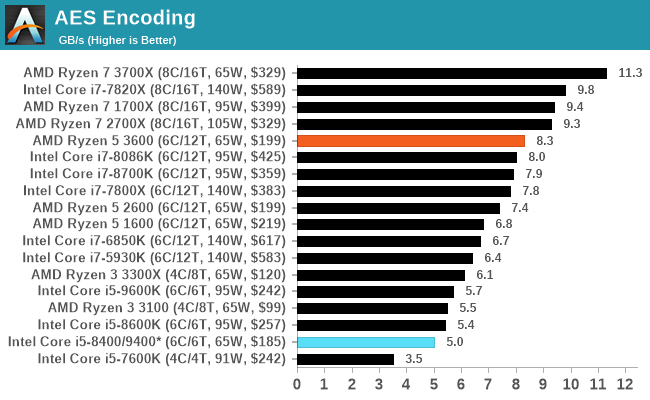

AES Encryption: File Security

A number of platforms, particularly mobile devices, now offer file system encryption by default in order to protect the contents. Windows-based devices have these options as well, often applied by BitLocker or third-party software. In our AES encryption test, we used the discontinued TrueCrypt for its built-in benchmark, which tests several encryption algorithms directly in memory.

The data we take for this test is the combined AES encrypt/decrypt performance, measured in gigabytes per second. The software does use AES instructions on processors that offer hardware acceleration, but it does not use AVX-512.

114 Comments

PeachNCream - Monday, May 18, 2020 - link

Anandtech spends a lot of time on gaming and on desktop PCs that are not representative of where and how people now accomplish compute tasks. They do spend a little time on mobile phones and that nets part of the market, but only at the pricey end of cellular handsets. Lower cost mobile for the masses and work-a-day PCs and laptops generally get a cursory acknowledgement once in a great while which is disappointing because there is a big chunk of the market that gets disregarded. IIRC, AT didn't even get around to reviewing the lower tiers of discrete GPUs in the past, effectively ignoring that chunk of the market until long after release and only if said lower end hardware happened to be in a system they ended up getting. They do not seem to actively seek out such components, sadly enough.

whatthe123 - Monday, May 18, 2020 - link

AI/tensorflow runs so much faster even on mid tier GPUs that trying to argue CPUs are relevant is completely out of touch. No academic in their right mind is looking for a bang-for-buck CPU to train models, it would be an absurd waste of time.

wolfesteinabhi - Tuesday, May 19, 2020 - link

well ..games also run on GPU ...so why bother benchmarking CPU's with them? ... same reason why anyone would want to look at other workflows .. i said tensor flow as just one of the examples(maybe not the best example) ..but more of such "work" or "development" oriented benchmarks.

pashhtk27 - Thursday, May 21, 2020 - link

Or there should be proper support libraries for the integrated graphics to run tensor calculations. That would make GPU-less AI development machines a lot more cost effective. AMD and Intel are both working on this but it'll be hard to get around Nvidia's monopoly of AI computing. Free cloud compute services like colab have several problems and others are very cost prohibitive for students. And sometimes you just need to have a local system capable of loading and predicting. As a student, I think it would significantly lower the entry threshold if their cost effective laptops could run simple models and get output. We can talk about AI benchmarks then.

Gigaplex - Monday, May 18, 2020 - link

As a developer I just use whatever my company gives me. I wouldn't be shopping for consumer CPUs for work purposes.

wolfesteinabhi - Tuesday, May 19, 2020 - link

not all developers are paid by their companies or make money with what they develop ... some are hobbyists and some do it as their "side" activities and with their own money at home apart from what they do at work with big guns!.

mikato - Sunday, May 24, 2020 - link

As a developer, I built my own new computer at work and got to pick everything within budget.

Achaios - Monday, May 18, 2020 - link

"Every so often there comes a processor that captures the market."

This used to be Sandy Bridge I5-2500K, all time best seller.

Oh, how the Mighty Chipzilla has fallen.

mikelward - Monday, May 18, 2020 - link

My current PC is a 2500K. My next one will be a 3600.

Spunjji - Tuesday, May 19, 2020 - link

Sandy was an absolute knockout. Most of the development thereafter was aimed at sticking similarly powerful CPUs in sleeker packages rather than increasing desktop performance, and while I feel like Intel deserve more credit for some things than they get (e.g. the leap in mobile power/performance that came from Haswell) they really shit the bed on 10nm and responding to Ryzen.