AMD Ryzen 5 3600 Review: Why Is This Amazon's Best Selling CPU?

by Dr. Ian Cutress on May 18, 2020 9:00 AM EST

Gaming: Ashes Classic (DX12)

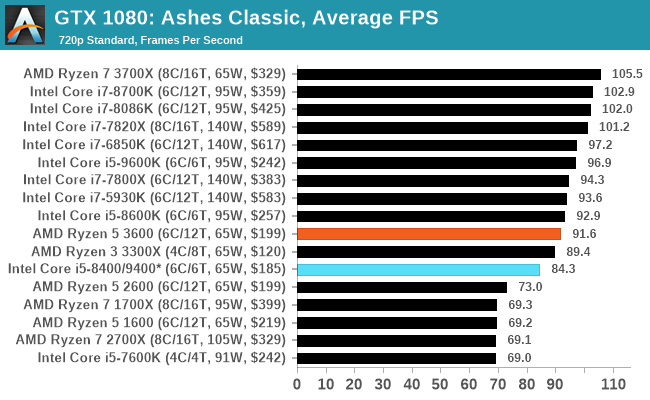

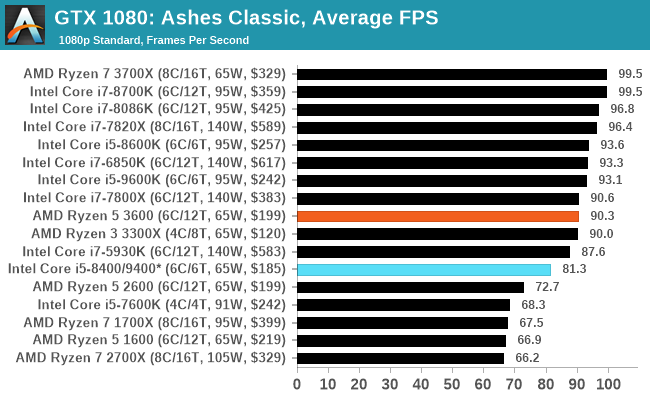

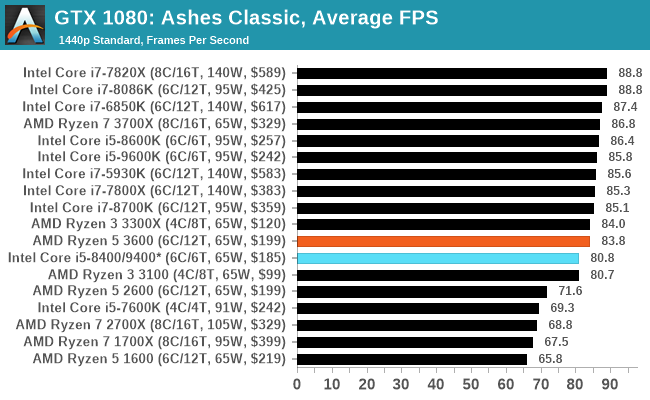

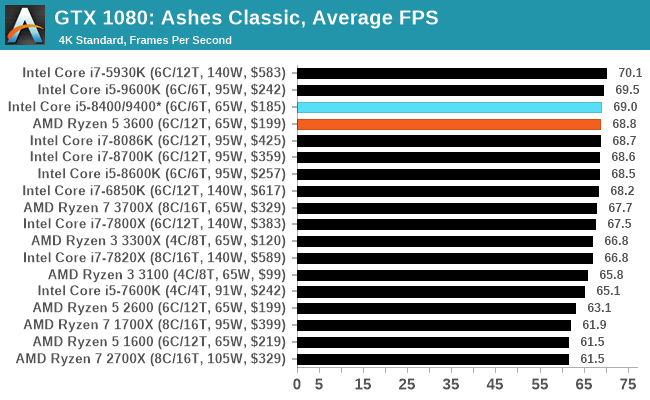

Seen as the holy child of DirectX12, Ashes of the Singularity (AoTS, or just Ashes) was the first title to actively explore as many DirectX12 features as it possibly could. Stardock, the developer behind the Nitrous engine that powers the game, has ensured that the real-time strategy title takes advantage of multiple cores and multiple graphics cards, in as many configurations as possible.

As a real-time strategy title, Ashes is all about responsiveness during both wide open shots and concentrated battles. With DirectX12 at the helm, the ability to issue more draw calls per second allows the engine to handle substantial unit depth and effects that other RTS titles could only achieve by combining draw calls, which made some combined unit structures very rigid.

Stardock clearly understands the importance of an in-game benchmark, and ensured that such a tool was available and capable from day one. This mattered all the more given the additional DX12 features in use, since the developer needed to characterize how they affected the title. The in-game benchmark runs a four-minute, fixed-seed battle with a variety of shots, and outputs a vast amount of data to analyze.

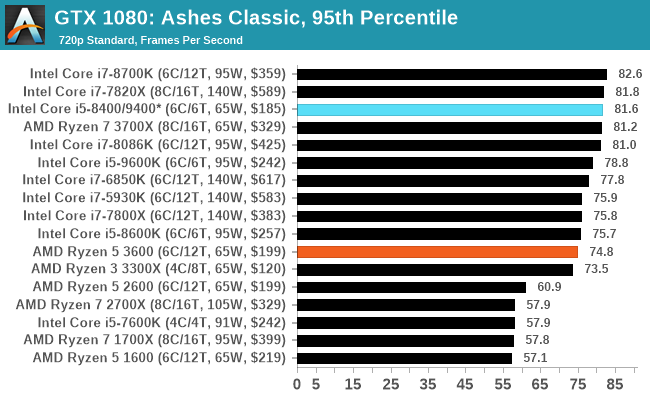

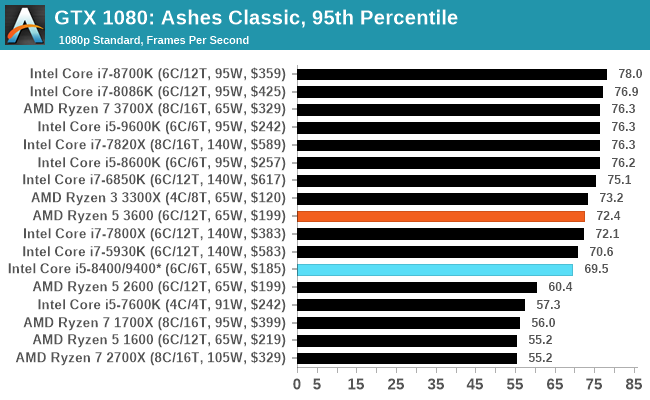

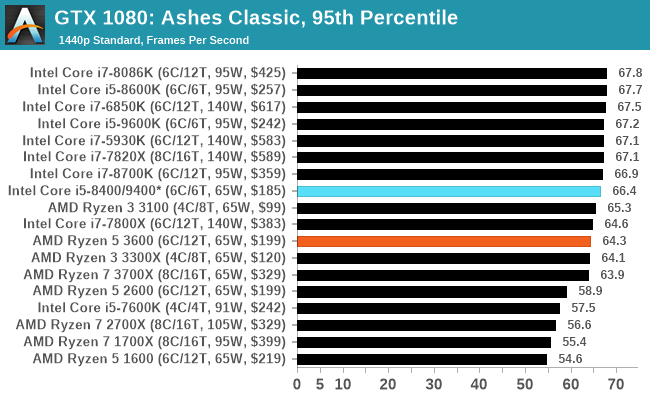

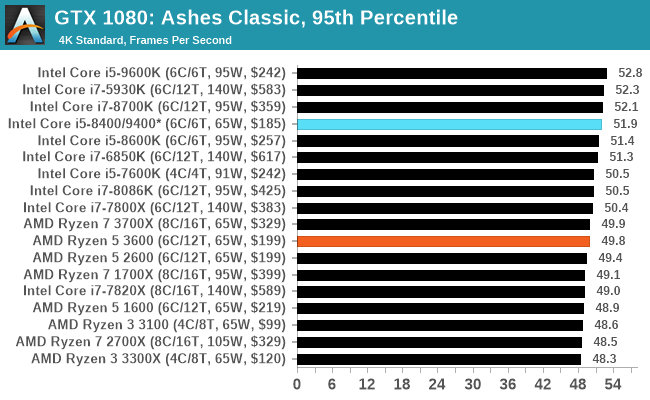

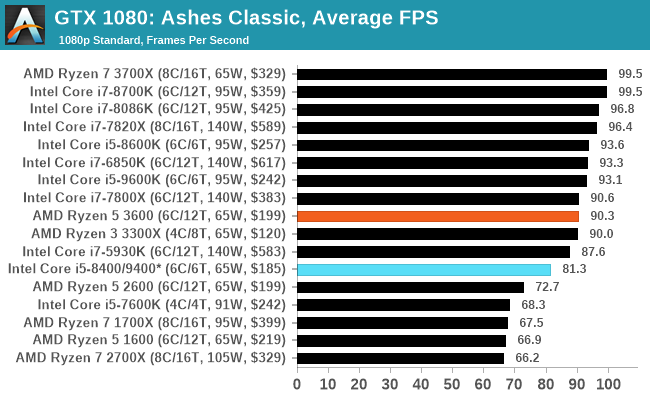

For our benchmark, we run Ashes Classic: an older version of the game from before the Escalation update. This version is easier to automate because it has no splash screen, yet it still has strong visual fidelity to test.

Ashes has dropdown options for MSAA, Light Quality, Object Quality, Shading Samples, Shadow Quality, Textures, and separate options for the terrain. There are several presets, from Very Low to Extreme: we run our benchmarks at the above settings, and take the frame-time output for our average and percentile numbers.
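As a rough illustration of how average and percentile figures can be derived from frame-time output, here is a minimal Python sketch. It is not the script used for this review: the function name `summarize_frame_times` is hypothetical, the input is assumed to be a list of per-frame render times in milliseconds, and the nearest-rank percentile method is an assumption chosen for simplicity.

```python
def summarize_frame_times(frame_times_ms):
    """Return (average FPS, 95th-percentile FPS) from frame times in milliseconds.

    Hypothetical helper for illustration; assumes every frame time is positive.
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")

    # Average FPS is frames rendered divided by total elapsed seconds.
    total_seconds = sum(frame_times_ms) / 1000.0
    average_fps = len(frame_times_ms) / total_seconds

    # Nearest-rank 95th percentile of frame time: the smallest value such that
    # at least 95% of frames completed in that time or less.
    ordered = sorted(frame_times_ms)
    rank = (95 * len(ordered) + 99) // 100  # integer ceiling of 0.95 * n
    p95_frame_time_ms = ordered[rank - 1]

    # Express the percentile as a frame rate so it sits alongside the average.
    p95_fps = 1000.0 / p95_frame_time_ms
    return average_fps, p95_fps


if __name__ == "__main__":
    # Made-up sample: 19 fast frames and one slow frame.
    sample = [10.0] * 19 + [50.0]
    avg, p95 = summarize_frame_times(sample)
    print(f"Average FPS: {avg:.1f}, 95th percentile FPS: {p95:.1f}")
```

Computing the average from total elapsed time, rather than averaging per-frame FPS values, keeps a handful of very fast frames from inflating the result.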

All of our benchmark results can also be found in our benchmark engine, Bench.

| AnandTech | IGP | Low | Medium | High |
|---|---|---|---|---|
| Average FPS | | | | |
| 95th Percentile | | | | |

114 Comments

PeachNCream - Monday, May 18, 2020 - link

Anandtech spends a lot of time on gaming and on desktop PCs that are not representative of where and how people now accomplish compute tasks. They do spend a little time on mobile phones and that nets part of the market, but only at the pricey end of cellular handsets. Lower cost mobile for the masses and work-a-day PCs and laptops generally get a cursory acknowledgement once in a great while which is disappointing because there is a big chunk of the market that gets disregarded. IIRC, AT didn't even get around to reviewing the lower tiers of discrete GPUs in the past, effectively ignoring that chunk of the market until long after release and only if said lower end hardware happened to be in a system they ended up getting. They do not seem to actively seek out such components, sadly enough.

whatthe123 - Monday, May 18, 2020 - link

AI/tensorflow runs so much faster even on mid tier GPUs that trying to argue CPUs are relevant is completely out of touch. No academic in their right mind is looking for a bang-for-buck CPU to train models, it would be an absurd waste of time.

wolfesteinabhi - Tuesday, May 19, 2020 - link

well ..games also run on GPU ...so why bother benchmarking CPU's with them? ... same reason why anyone would want to look at other workflows .. i said tensor flow as just one of the examples(maybe not the best example) ..but more of such "work" or "development" oriented benchmarks.

pashhtk27 - Thursday, May 21, 2020 - link

Or there should be proper support libraries for the integrated graphics to run tensor calculations. That would make GPU-less AI development machines a lot more cost effective. AMD and Intel are both working on this but it'll be hard to get around Nvidia's monopoly of AI computing. Free cloud compute services like colab have several problems and others are very cost prohibitive for students. And sometimes you just need to have a local system capable of loading and predicting. As a student, I think it would significantly lower the entry threshold if their cost effective laptops could run simple models and get output.

We can talk about AI benchmarks then.

Gigaplex - Monday, May 18, 2020 - link

As a developer I just use whatever my company gives me. I wouldn't be shopping for consumer CPUs for work purposes.

wolfesteinabhi - Tuesday, May 19, 2020 - link

not all developers are paid by their companies or make money with what they develop ... some are hobbyists and some do it as their "side" activities and with their own money at home apart from what they do at work with big guns!.

mikato - Sunday, May 24, 2020 - link

As a developer, I built my own new computer at work and got to pick everything within budget.

Achaios - Monday, May 18, 2020 - link

"Every so often there comes a processor that captures the market."

This used to be Sandy Bridge I5-2500K, all time best seller.

Oh, how the Mighty Chipzilla has fallen.

mikelward - Monday, May 18, 2020 - link

My current PC is a 2500K. My next one will be a 3600.

Spunjji - Tuesday, May 19, 2020 - link

Sandy was an absolute knockout. Most of the development thereafter was aimed at sticking similarly powerful CPUs in sleeker packages rather than increasing desktop performance, and while I feel like Intel deserve more credit for some things than they get (e.g. the leap in mobile power/performance that came from Haswell) they really shit the bed on 10nm and responding to Ryzen.