AMD’s Mobile Revival: Redefining the Notebook Business with the Ryzen 9 4900HS (A Review)

by Dr. Ian Cutress on April 9, 2020 9:00 AM EST

GPU Benchmarks

Graphics testing is going to be a bit more challenging than the CPU tests. Games that push both the CPU and the GPU to their limits will find different tradeoffs in each of these systems.

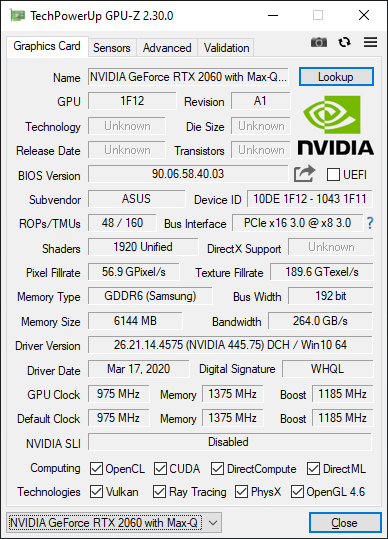

The ASUS Zephyrus G14 is smaller and more thermally limited. It doesn’t have an AMD GPU, so it can’t take advantage of AMD’s new features such as SmartShift, which manages power between the CPU and GPU. It technically has the stronger CPU, and while the graphics chip is nominally the same, ASUS uses the Max-Q version of the RTX 2060, which is optimized for power efficiency and runs at lower clocks. Technically the base frequency of this configuration is higher than the Razer’s, at 975 MHz, but the turbo is lower at 1185 MHz, and the GDDR6 memory runs much lower at 1375 MHz (11 Gbps/pin).
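The “1375 MHz (11 Gbps/pin)” figure follows from how GDDR6 clocks are commonly reported: the listed clock is one-eighth of the per-pin data rate. The sketch below works through that arithmetic for this card and for the 1750 MHz memory quoted for the Razer in the next paragraph. It assumes the 8x multiplier and a 192-bit memory bus for the RTX 2060; the bus width is not stated in this review.

```python
# Sketch: convert a reported GDDR6 memory clock into a per-pin data rate
# and total memory bandwidth.
# Assumptions (not from the review): the reported clock is 1/8 of the
# per-pin data rate, and the RTX 2060 uses a 192-bit memory bus.

GDDR6_RATE_MULTIPLIER = 8   # assumed: data rate (Mbps/pin) = 8 x reported clock (MHz)
BUS_WIDTH_BITS = 192        # assumed RTX 2060 memory bus width


def per_pin_gbps(reported_mhz: float) -> float:
    """Per-pin data rate in Gbps for a reported GDDR6 clock in MHz."""
    return reported_mhz * GDDR6_RATE_MULTIPLIER / 1000


def bandwidth_gb_per_s(reported_mhz: float, bus_bits: int = BUS_WIDTH_BITS) -> float:
    """Total bandwidth in GB/s: per-pin rate x bus width / 8 bits per byte."""
    return per_pin_gbps(reported_mhz) * bus_bits / 8


for name, clock in [("ASUS Zephyrus G14 (RTX 2060 Max-Q)", 1375),
                    ("Razer Blade 15 (RTX 2060)", 1750)]:
    print(f"{name}: {per_pin_gbps(clock):.1f} Gbps/pin, "
          f"{bandwidth_gb_per_s(clock):.0f} GB/s")
```

Under these assumptions the Max-Q card works out to 11 Gbps/pin and 264 GB/s, against 14 Gbps/pin and 336 GB/s for the full RTX 2060, roughly 21% less memory bandwidth.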

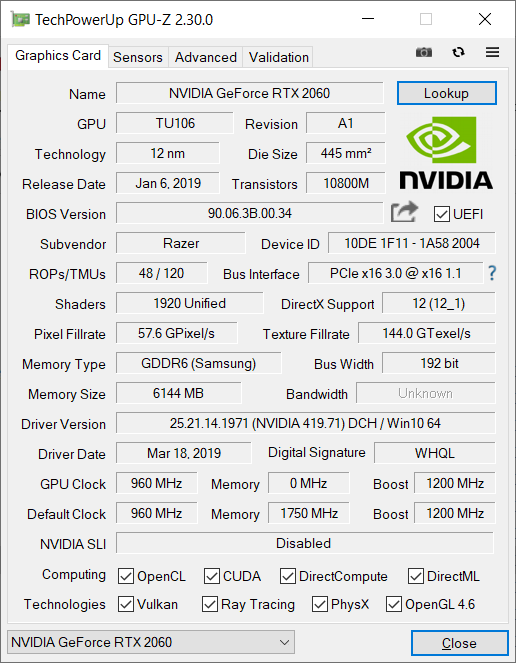

The Razer Blade 15 has the bigger chassis, and we assume it is built for a higher overall TDP. While it has the ‘weaker’ CPU of the two, with fewer cores and lower frequencies, it is paired with a full-fat RTX 2060 graphics card. Going by the data for this card, it has a slightly lower 960 MHz base frequency but a higher 1200 MHz turbo and faster 1750 MHz memory (14 Gbps/pin), and it has a direct PCIe 3.0 x16 connection to the processor, whereas the ASUS system gets only x8.
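To put the x16 versus x8 link in numbers: PCIe 3.0 signals at 8 GT/s per lane with 128b/130b encoding, so theoretical bandwidth scales linearly with lane count. The following is a minimal sketch of that calculation; it ignores packet and protocol overhead, so real-world throughput will be lower than these ceilings.

```python
# Sketch: theoretical one-direction bandwidth of a PCIe 3.0 link.
# PCIe 3.0 runs at 8 GT/s per lane with 128b/130b line encoding.
# Protocol (packet header) overhead is ignored, so real throughput is lower.

PCIE3_GT_PER_S = 8.0            # giga-transfers per second, per lane
ENCODING_EFFICIENCY = 128 / 130  # 128b/130b encoding


def pcie3_gb_per_s(lanes: int) -> float:
    """Theoretical one-direction bandwidth in GB/s for a PCIe 3.0 link."""
    bits_per_s = PCIE3_GT_PER_S * 1e9 * ENCODING_EFFICIENCY * lanes
    return bits_per_s / 8 / 1e9  # bits -> bytes, then bytes -> GB


for name, lanes in [("Razer Blade 15 (x16)", 16), ("ASUS Zephyrus G14 (x8)", 8)]:
    print(f"{name}: ~{pcie3_gb_per_s(lanes):.2f} GB/s per direction")
```

This gives roughly 15.75 GB/s per direction for x16 and 7.88 GB/s for x8. How much halving the link matters in practice depends on how much data a given game streams across the bus; the sketch only shows the theoretical ceiling.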

For these tests, I’ve used an older title (Counter-Strike: Source), a couple of modern ones (Civilization 6, Final Fantasy XV), and a new one in Borderlands 3. We used the following settings:

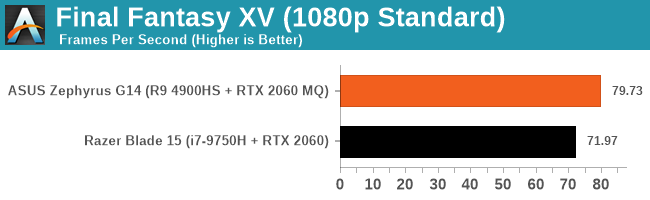

- Final Fantasy XV, 1080p Fullscreen, Standard Quality

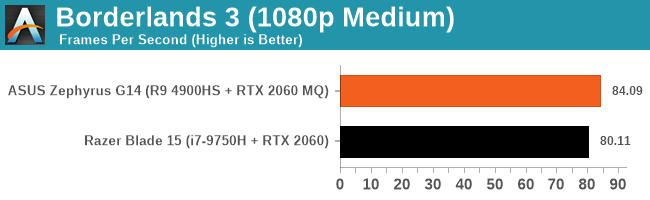

- Borderlands 3, 1080p, Medium Preset

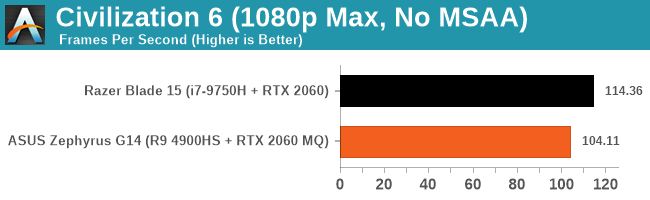

- Civilization 6, 1080p Maximum Preset No MSAA / 1K Occlusion Textures

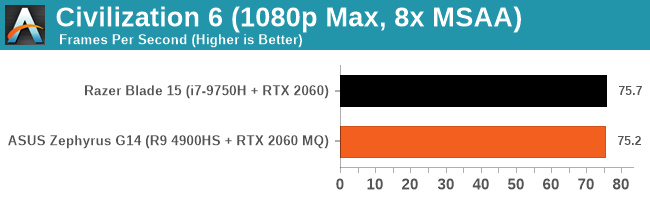

- Civilization 6, 1080p Maximum Preset 8x MSAA / 2K Occlusion Textures

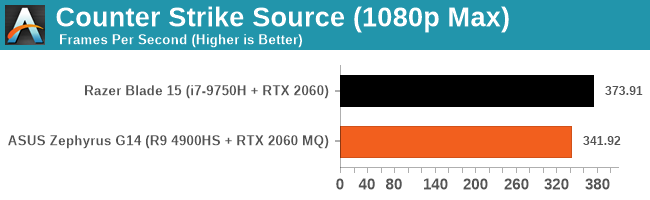

- Counter-Strike: Source, 1080p Maximum

In Final Fantasy XV, the results differed by around 10%, in favor of the AMD system.

Borderlands 3 was fairly close, with less than 5% between the two, though still in AMD’s favor. I did notice that at these settings we were close to the crossover point between being CPU-limited and GPU-limited.

Civilization 6 is well known for receiving constant updates and optimizations, and here it seems the more powerful GPU in the Razer wins out by a large 10 FPS margin.

However, once we add more compute and detail (8x MSAA and 2K occlusion textures), we move to a more GPU-limited scenario, and the results are essentially equal.

Counter-Strike: Source is an odd one, given how old the game is. Here it favors the Intel machine, with a ~10% advantage.

As with the CPU tests, I also ran some of these gaming tests with the power cord removed and battery saver mode enabled. The results were interesting, to say the least, and can be found on the next page.

267 Comments


Kishoreshack - Monday, April 13, 2020

@ian cuttres Why so harsh on AMD?

It's outdoing Intel processors which draw 2 or 3 times more power

It literally smokes any Intel processor in the same power envelope

You should be giving praises & awards to AMD

instead the tone of the article doesn't do justice to AMD

Deicidium369 - Monday, April 13, 2020

Facts hurt, huh? It's impressive but not life altering. What sort of awards? "Award for not going bankrupt" or "Award for FINALLY putting out something worth purchasing" or "Award because you hurt AMD's fee fees"

The tone is fair. Ian is a skilled writer and reviewer - I never felt he has leaned more to one side or the other.

destorofall - Tuesday, April 14, 2020

can I give intel an award for "masterfully delaying a node ramp-up for almost half a decade."

Korguz - Tuesday, April 14, 2020

they didn't delay it, they screwed up somehow. some say intel was too aggressive with what it was trying to do, and it didn't work

Wineohe - Monday, April 13, 2020

This does potentially offer OEMs some breathing room with features, if the CPU and Chipset can lower their costs by a few hundred dollars. They can offer a Notebook with similar or better performance and battery life, with more features. Say a 1TB SSD versus a 512GB, 32GB vs 16GB, better display, or better dedicated GPU. This would easily sway me as a consumer toward the AMD option.

Deicidium369 - Monday, April 13, 2020

Nope. Most people would still buy Intel - price isn't most people's primary metric - some buy Intel because why buy something that is "like Intel" when you can, you know, "Buy Intel". OEMs build what people want - and this new CPU will be a major rarity - and will not sell in large numbers, even by AMD's standards.

Qasar - Monday, April 13, 2020

" OEMs build what people want " or buy intel because intel bribes or threatens them, remember the athlon 64 days? guess what, intel got taken to court over their shady dealings, and they lost, but i know you won't remember that, because you're an intel fanboy, and intel can't do anything wrong.

watzupken - Monday, April 13, 2020

It's true that most consumers will still prefer Intel chips due to the reputation they have built for themselves over the years. However, in this age where people can find information readily, that advantage may not be firm. With more enthusiasts leaning towards AMD, this may also filter down to those who are not tech savvy through positive word of mouth. For example, it is not uncommon for someone who is not tech savvy to get a recommendation from a tech-savvy person when buying a computer or laptop. Moreover, AMD is very aggressive when it comes to the cost of its chips, adding another carrot for consumers to switch camps. One other key problem was poorer battery life on AMD mobile chips, i.e. Ryzen 2xxx and 3xxx for mobile devices, which is no longer the case here unless manufacturers deliberately gimp the battery capacity.

sleeperclass - Monday, April 13, 2020

No webcam is a big blow. In these times, where everyone is using online chat platforms such as Teams, Zoom, etc. to communicate, this is something that should have just been there.

All said and done, good progress by AMD in the mobile CPU space.

Qasar - Monday, April 13, 2020

both of the notebooks i have, have webcams, but both are unplugged, and i picked up a logitech C920 i think it is. i just didn't like the idea of having to tilt the screen so the person i was talking to could see me better; the separate webcam allows that no matter how the screen is tilted. but to each his own :-)