The Toshiba/Kioxia BG4 1TB SSD Review: A Look At Your Next Laptop's SSD

by Billy Tallis on October 18, 2019 11:30 AM EST

Whole-Drive Fill

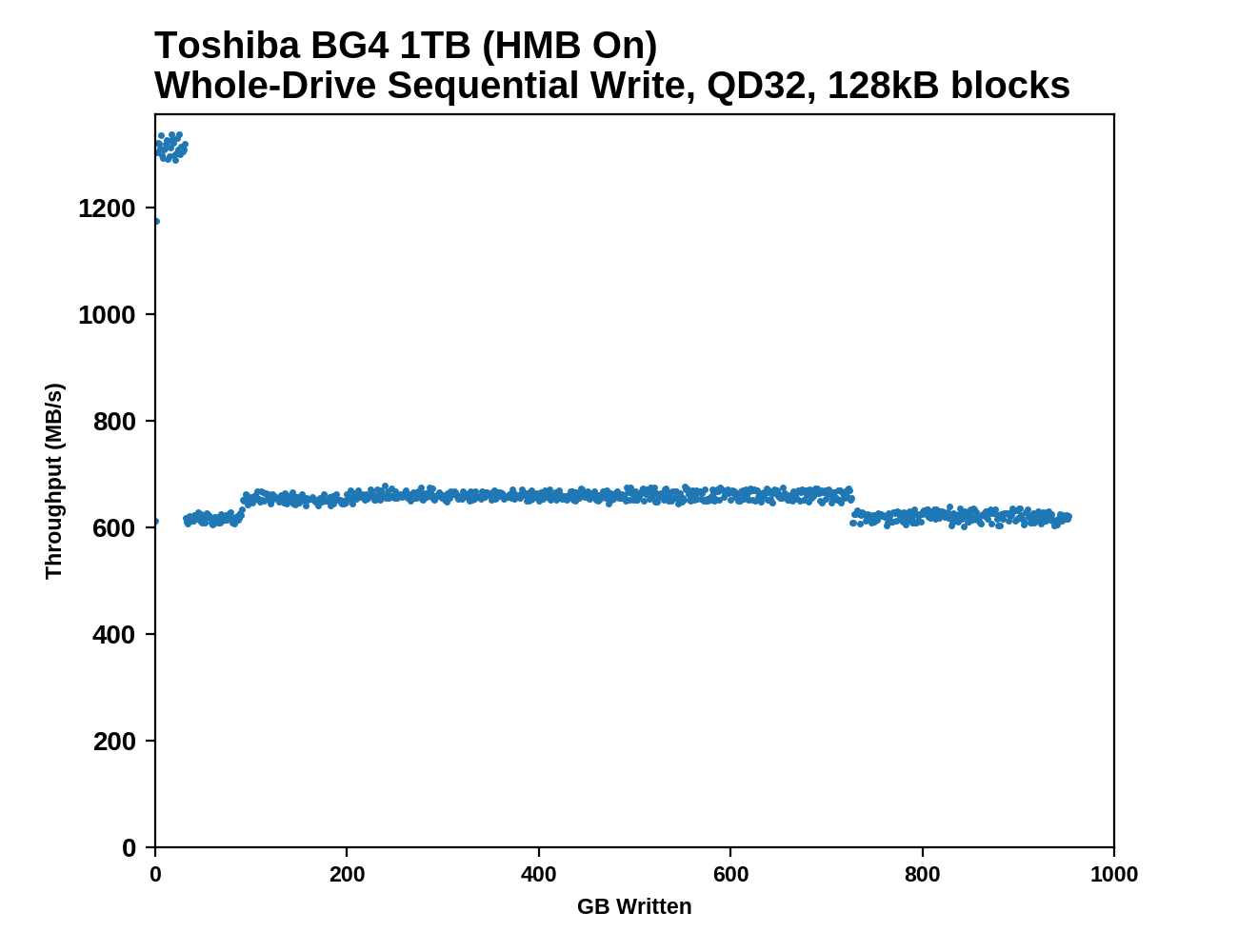

This test starts with a freshly-erased drive and fills it with 128kB sequential writes at queue depth 32, recording the write speed for each 1GB segment. This test is not representative of any ordinary client/consumer usage pattern, but it does allow us to observe transitions in the drive's behavior as it fills up. This can allow us to estimate the size of any SLC write cache, and get a sense for how much performance remains on the rare occasions where real-world usage keeps writing data after filling the cache.


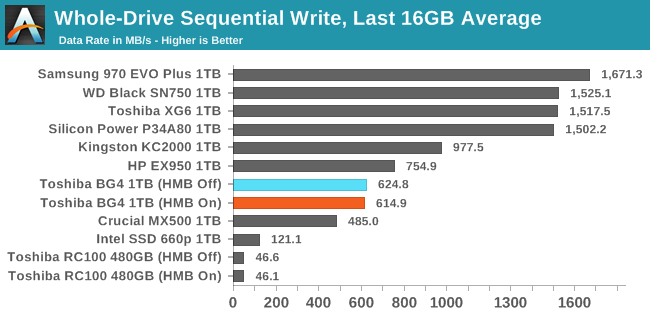

The 1TB Toshiba/Kioxia BG4 appears to have a 32GB SLC write cache that's far slower than what high-end NVMe SSDs provide, but still well above 1GB/s. After the cache is full, write speed is cut in half but remains steady. As expected, the Host Memory Buffer feature has minimal impact on this purely sequential workload.
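To illustrate how a cache size estimate can be read off a per-segment fill trace like this one, here is a minimal Python sketch. It is not the tooling used for this review. The function name, the `drop_ratio` threshold, and the trace values are all assumptions made up for the example: the synthetic trace simply mimics a drive that writes faster for 32 segments and then drops to half speed.

```python
from statistics import median

def estimate_slc_cache_gb(segment_speeds, baseline_segments=4, drop_ratio=0.75):
    """Estimate cache size as the number of 1GB segments written before the
    speed first falls below drop_ratio * the initial (baseline) speed.

    segment_speeds: write speed of each 1GB segment, in order (any unit).
    Returns None if no such drop is seen. The threshold is an assumption.
    """
    baseline = median(segment_speeds[:baseline_segments])
    for index, speed in enumerate(segment_speeds):
        if speed < drop_ratio * baseline:
            return index  # segments 0..index-1 were written at cache speed
    return None

# Synthetic trace in MB/s (hypothetical values, NOT measured data).
trace = [1400.0] * 32 + [700.0] * 68
print(estimate_slc_cache_gb(trace))
```

Using the median of the first few segments as the baseline keeps a single noisy segment from skewing the threshold. A real trace would likely need some smoothing as well.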

Charts: Average Throughput for last 16 GB; Overall Average Throughput

After the SLC cache is full, the BG4's sequential write speed remains comfortably out of reach of SATA SSDs, but it doesn't come close to what high-end NVMe SSDs (including Toshiba's own XG series) provide. The BG3-based Toshiba RC100's write speed seriously tanked when the drive got close to full, but the 1TB BG4 doesn't suffer from that second major performance drop.

Working Set Size

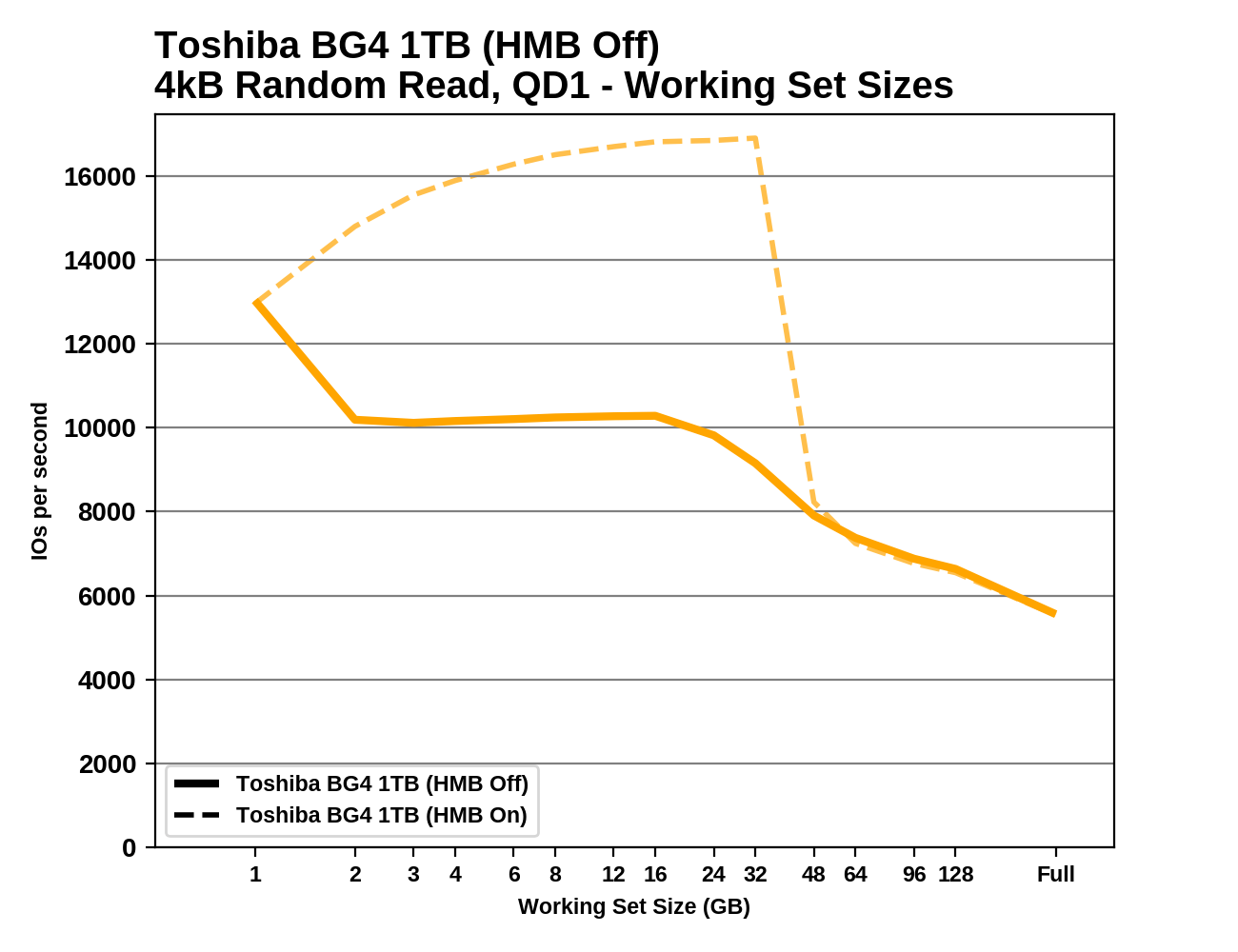

Most mainstream SSDs have enough DRAM to store the entire mapping table that translates logical block addresses into physical flash memory addresses. DRAMless drives only have small buffers to cache a portion of this mapping information. Some NVMe SSDs (the BG4 included) support the Host Memory Buffer feature and can borrow a piece of the host system's DRAM for this cache rather than needing lots of on-controller memory.

When accessing a logical block whose mapping is not cached, the drive needs to read the mapping from the full table stored on the flash memory before it can read the user data stored at that logical block. This adds extra latency to read operations and in the worst case may double random read latency.
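The cost of a mapping miss can be put into a simple expected-latency model. The Python sketch below assumes that a miss adds exactly one extra flash read before the user data read, which corresponds to the worst case described above. The function name and the 80µs figure are hypothetical and chosen only for illustration.

```python
def expected_read_latency_us(hit_rate, flash_read_us, lookup_penalty_us=None):
    """Average random read latency given a mapping cache hit rate.

    Assumption: every read pays one flash read for the user data, and a
    mapping miss adds lookup_penalty_us on top. By default the penalty
    equals one more flash read, i.e. the worst case of doubled latency.
    """
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    if lookup_penalty_us is None:
        lookup_penalty_us = flash_read_us
    return flash_read_us + (1.0 - hit_rate) * lookup_penalty_us

# Hypothetical 80 microsecond flash read, not a measured BG4 value.
for rate in (1.0, 0.5, 0.0):
    print(rate, expected_read_latency_us(rate, 80.0))
```

With every mapping cached the latency is a single flash read. With every lookup missing, the model gives twice that.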

We can see the effects of the size of any mapping buffer by performing random reads from different sized portions of the drive. When performing random reads from a small slice of the drive, we expect the mappings to all fit in the cache, and when performing random reads from the entire drive, we expect mostly cache misses.
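That expectation can be sketched with a toy model. The Python snippet below assumes uniformly random reads and a cache that holds the mappings for a fixed span of user data, so the hit rate is roughly the cached span divided by the working set size. The function name and the 8GB span are hypothetical, and real drives may manage their mapping cache quite differently.

```python
def mapping_hit_rate(working_set_gb, cached_span_gb):
    """Approximate mapping cache hit rate for uniform random reads.

    Assumption: the cache holds mappings covering cached_span_gb of user
    data, all of it inside the working set being read.
    """
    if working_set_gb <= 0:
        raise ValueError("working_set_gb must be positive")
    return min(1.0, cached_span_gb / working_set_gb)

# Hypothetical 8GB of mapping coverage, swept across working set sizes.
for working_set in (1, 4, 16, 64, 1024):
    print(working_set, mapping_hit_rate(working_set, 8.0))
```

In this model a small slice of the drive fits entirely in the cache, while reads across the full drive miss almost every time.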

When performing this test on mainstream drives with a full-sized DRAM cache, we expect performance to be generally constant regardless of the working set size, or for performance to drop only slightly as the working set size increases.


HMB has no effect on the BG4's random read performance for working set sizes larger than about 32GB. For smaller working sets, HMB provides a big performance boost. Oddly, the performance of the BG4 gets better through the early part of this test, until the working set size gets too big. With HMB off, the BG4 seems to have enough on-controller memory to handle a 1GB working set. As compared with the older BG3-based Toshiba RC100, the BG4 seems to use a slightly larger HMB, and it gets a bigger performance boost from that extra buffer.

31 Comments


MrCommunistGen - Friday, October 18, 2019 - link

I recently picked up a Dell Optiplex 3070 Micro for a family member, and it shipped with a 128GB BG4. Performance of the 128GB model is going to obviously be much lower than the 1TB model tested here. From my anecdotal experience, performance is acceptable, but could easily be better. I replaced it with a 1TB XG6 (~$120 from eBay) - mostly for capacity, but the performance uplift was (understandably) noticeable.

abufrejoval - Friday, October 18, 2019 - link

Nice review for a solid product: Thanks!

While I guess it reduces the worries about a soldered down SSD somewhat, I just hope they'll continue to sell even ultrabooks with M.2 or XFMExpress: Just feels safer and helps reducing iSurcharges on capacity.

Targon - Friday, October 18, 2019 - link

Agreed. If the motherboard fails, being able to remove the SSD for data recovery SHOULD be seen as essential by most people.

Wheaties88 - Friday, October 18, 2019 - link

I don’t see why most manufacturers wouldn’t see it as useful as well. Surely it would allow for less replacement motherboards needed if they could simply change the drive size. But what do I know.

kingpotnoodle - Monday, October 21, 2019 - link

Nobody considers it essential because relying on removing your SSD for data recovery if the motherboard fails is a deeply flawed strategy and not applicable to the vast majority of people who wouldn't even consider opening their laptop nevermind knowing how to remove the drive and access it outside the laptop.

The same thing that saves you if your SSD fails will also save you if anything else makes the machine unbootable - a proper backup.

Targon - Monday, October 21, 2019 - link

You haven't had people come to you because their laptop has died but they need their data? Consumers may not be ready or able to get data from a dead laptop that has a drive you can remove, but the places they turn to SHOULD be able to.

Tell me, can you recover data from a dead Macbook (dead motherboard) these days with the storage on the motherboard yourself? If the motherboard in your own personal laptop failed, wouldn't YOU want to be able to pull the drive if you needed data from it?

abufrejoval - Friday, October 25, 2019 - link

Those who know me well enough to entrust me with their computer, know me well enough not to come close with a Macbook.

And I am not even all *that* prejudiced. I loved my Apple ][ (clone), went for the PC because even my 80286 already ran Unix and I was a computer scientist after all.

I keep doing Hackintoshs every now and then, just to get an understanding of how a Mac feels and because it's a bit of a challenge.

But it's seriously behind in just about every aspect important to me: The combination of Linux and Windows gives me much more in any direction, for work and for fun. And mixing both is much less of a technical issue than life-balance.

And then the notion of having your most personal handheld computer managed by an external party is just so wrong, I am flabbergasted that Apple managers still walk free, when computer sabotage is a felony.

The Apple ][ didn't even screw down the top lid. Swapping out components and parts, adding all sorts of functionality and upgrades made it great.

This solid brick of aluminum, glue, soldered on chips and hapless keyboard mechanics they call an Apple computer these days is just so wrong, I'd throw it into recycling the minute I got one for free. I don't know if I could give an Apple notebook or phone even to a foe, let alone a friend.

domboy - Friday, October 18, 2019 - link

Since Microsoft used this in the Surface Laptop 3, I wonder if they also used it in the Surface Pro X since that also has a removable SSD. I'll be interested to find out...

taz-nz - Friday, October 18, 2019 - link

Now we just need them to apply this tech to a standard 2280 form factor and give us a 4TB m.2 SSD, doesn't have to have best in class performance just a consumer class 4TB m.2 SSD.

Death666Angel - Saturday, October 19, 2019 - link

There already are Samsung and Toshiba 4TB M.2 drives.